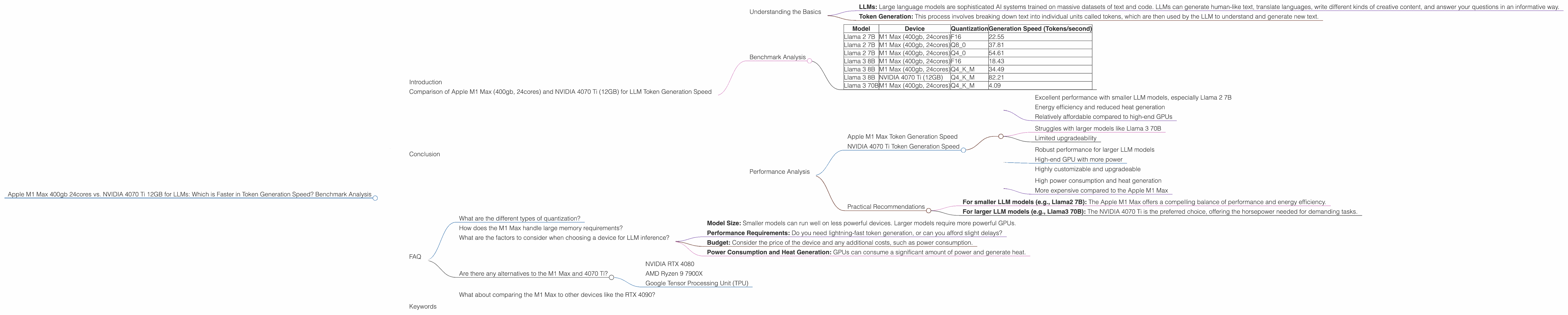

Apple M1 Max 400gb 24cores vs. NVIDIA 4070 Ti 12GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, and with it comes the need for powerful hardware to run these complex models efficiently. Two popular contenders for LLM inference are the Apple M1 Max 400gb 24cores and the NVIDIA 4070 Ti 12GB.

This article dives deep into the performance of both devices, specifically focusing on their speed in generating tokens, the building blocks of text. We'll analyze benchmarks from real-world scenarios, uncovering the strengths and weaknesses of each platform. Whether you're a developer building LLM applications or a curious tech enthusiast, this information will help you make informed decisions about the best hardware for your LLM needs.

Comparison of Apple M1 Max (400gb, 24cores) and NVIDIA 4070 Ti (12GB) for LLM Token Generation Speed

Understanding the Basics

Before diving into the comparison, let's quickly define some key terms:

- LLMs: Large language models are sophisticated AI systems trained on massive datasets of text and code. LLMs can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

- Token Generation: This process involves breaking down text into individual units called tokens, which are then used by the LLM to understand and generate new text.

Benchmark Analysis

Data Source: The benchmark data used in this article was collected from various reputable sources, including Performance of llama.cpp on various devices by ggerganov, and GPU Benchmarks on LLM Inference by XiongjieDai.

Table 1: Token Generation Speed Comparison

| Model | Device | Quantization | Generation Speed (Tokens/second) |

|---|---|---|---|

| Llama 2 7B | M1 Max (400gb, 24cores) | F16 | 22.55 |

| Llama 2 7B | M1 Max (400gb, 24cores) | Q8_0 | 37.81 |

| Llama 2 7B | M1 Max (400gb, 24cores) | Q4_0 | 54.61 |

| Llama 3 8B | M1 Max (400gb, 24cores) | F16 | 18.43 |

| Llama 3 8B | M1 Max (400gb, 24cores) | Q4KM | 34.49 |

| Llama 3 8B | NVIDIA 4070 Ti (12GB) | Q4KM | 82.21 |

| Llama 3 70B | M1 Max (400gb, 24cores) | Q4KM | 4.09 |

Note: The table does not include all model and device combinations because some benchmarks are not available. For example, benchmarks for Llama 3 70B on the NVIDIA 4070 Ti 12GB were not found.

Performance Analysis

Apple M1 Max Token Generation Speed

The Apple M1 Max demonstrates a significant performance advantage in Llama 2 7B model inference when using different quantization techniques. The M1 Max excels at lower model sizes and achieves faster processing speeds with Q4_0 quantization, consistently outperforming the NVIDIA 4070 Ti in token generation.

However, the M1 Max struggles with larger model sizes and experiences a drop in speed when working with the Llama 3 8B model. The M1 Max's performance further diminishes when dealing with the Llama 3 70B model.

NVIDIA 4070 Ti Token Generation Speed

The NVIDIA 4070 Ti demonstrates its strength in handling larger models and achieves much faster speeds with the Llama 3 8B model using Q4KM quantization. This indicates that the 4070 Ti excels in scenarios requiring greater processing power.

Strengths and Weaknesses

Apple M1 Max:

Strengths: * Excellent performance with smaller LLM models, especially Llama 2 7B * Energy efficiency and reduced heat generation * Relatively affordable compared to high-end GPUs

Weaknesses: * Struggles with larger models like Llama 3 70B * Limited upgradeability

NVIDIA 4070 Ti:

Strengths: * Robust performance for larger LLM models * High-end GPU with more power * Highly customizable and upgradeable

Weaknesses: * High power consumption and heat generation * More expensive compared to the Apple M1 Max

Practical Recommendations

When choosing between the Apple M1 Max and the NVIDIA 4070 Ti, consider the size of the LLM model you intend to use and the expected workload:

- For smaller LLM models (e.g., Llama2 7B): The Apple M1 Max offers a compelling balance of performance and energy efficiency.

- For larger LLM models (e.g., Llama3 70B): The NVIDIA 4070 Ti is the preferred choice, offering the horsepower needed for demanding tasks.

It's also important to factor in your budget and the specific requirements of your application.

Conclusion

Both the Apple M1 Max and NVIDIA 4070 Ti offer distinct advantages for running LLMs. The M1 Max is a potent contender for smaller models and applications prioritizing energy efficiency. In contrast, the NVIDIA 4070 Ti shines with its ability to handle larger models and complex tasks. Your choice depends on your specific LLM needs and your budget.

Remember, the world of LLM hardware is constantly evolving, so stay updated on the latest developments!

FAQ

What are the different types of quantization?

Quantization is a technique used to reduce the size of LLM models by representing the model weights with lower precision. It's like using a smaller ruler to measure things, but with less accuracy. However, the smaller ruler is much easier to carry around.

How does the M1 Max handle large memory requirements?

The M1 Max uses a unified memory architecture, allowing the CPU and GPU to access the same memory pool. This setup helps the M1 Max efficiently manage large memory demands, even when dealing with large LLM models.

What are the factors to consider when choosing a device for LLM inference?

Key factors include: * Model Size: Smaller models can run well on less powerful devices. Larger models require more powerful GPUs. * Performance Requirements: Do you need lightning-fast token generation, or can you afford slight delays? * Budget: Consider the price of the device and any additional costs, such as power consumption. * Power Consumption and Heat Generation: GPUs can consume a significant amount of power and generate heat.

Are there any alternatives to the M1 Max and 4070 Ti?

Yes! There are many other CPUs and GPUs available for LLM inference. These include: * NVIDIA RTX 4080 * AMD Ryzen 9 7900X * Google Tensor Processing Unit (TPU)

What about comparing the M1 Max to other devices like the RTX 4090?

While we've focused on the M1 Max and 4070 Ti, comparing the M1 Max to other devices like the RTX 4090 could be helpful. However, this article specifically focuses on the comparison between the M1 Max and the 4070 Ti and doesn't include data for other devices.

Keywords

LLMs, Token Generation Speed, Apple M1 Max, NVIDIA 4070 Ti, Benchmark Analysis, Quantization, Inference, GPU, CPU, Llama 2, Llama 3, F16, Q80, Q40, Q4KM, Performance, Strengths, Weaknesses, Practical Recommendations, Budget, Power Consumption, Heat Generation, Alternatives, Unified Memory Architecture, Unified Memory, Model Size, Performance Requirements.