Apple M1 Max (400 GB/s, 24-Core GPU) vs. NVIDIA RTX 3090 24GB for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, and with it, the need for powerful hardware to handle the immense computational demands. Two popular options for running LLMs locally are the Apple M1 Max and the NVIDIA 3090. This article dives into a head-to-head comparison of these two heavyweights, focusing on their performance in token generation speed for various LLM models.

We'll explore the results of real-world benchmarks, analyze the strengths and weaknesses of each device, and provide practical recommendations for choosing the right hardware for your LLM projects. Whether you're a seasoned developer or just starting your journey with LLMs, understanding the performance nuances of different devices can help you optimize your setup and maximize your output.

Apple M1 Max Token Generation Speed

Before we get into the meat of the comparison, let's define "token generation speed". In the context of LLMs, it refers to the speed at which a model can generate new tokens, which are essentially the building blocks of text. Think of a token like a word or a part of a word, and the speed of generation is how quickly the model can output a sentence, paragraph, or even a whole story.
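Tokens per second is simply a count divided by elapsed time. The sketch below assumes a hypothetical token stream (any iterable your inference library yields one token at a time; no specific API is implied) and takes the clock as a parameter so it can be tested. If the stream also does prompt processing before yielding its first token, that time is included here, whereas the benchmarks below report the two phases separately.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(
    stream: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Consume a token stream and return its average rate in tokens/second.

    `stream` is a stand-in for whatever your (hypothetical) inference
    library yields one token at a time.
    """
    count = 0
    start = clock()
    for _ in stream:  # each item is one generated token
        count += 1
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return count / elapsed


if __name__ == "__main__":
    # Fake clock for illustration: the stream "takes" 2 seconds.
    ticks = iter([0.0, 2.0])
    print(tokens_per_second(["The", " cat", " sat", " down"], clock=lambda: next(ticks)))
```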

The Apple M1 Max is a powerful chip known for its energy efficiency and performance per watt. It pairs a 10-core CPU with a 24- or 32-core GPU (the configuration tested here has 24 GPU cores) and up to 64 GB of unified memory with 400 GB/s of bandwidth, making it a versatile option for a wide range of tasks. Now, let's see how it performs in the world of LLMs:

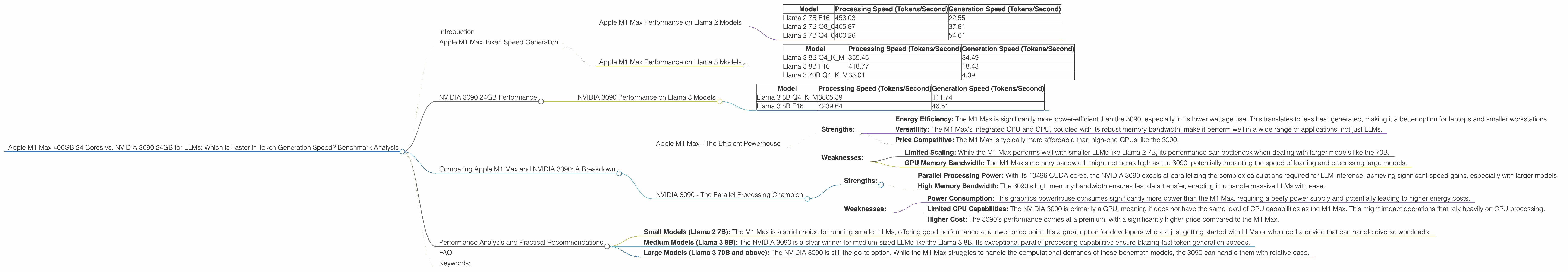

Apple M1 Max Performance on Llama 2 Models

| Model | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|
| Llama 2 7B F16 | 453.03 | 22.55 |
| Llama 2 7B Q8_0 | 405.87 | 37.81 |
| Llama 2 7B Q4_0 | 400.26 | 54.61 |

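- F16: 16-bit ("half-precision") floating point, which takes half the memory of standard 32-bit floats; here it is the unquantized baseline.

- Q8_0: a quantization format that stores each weight in 8 bits (plus a shared scale per block of weights), roughly halving memory versus F16 and speeding up generation.

- Q4_0: the same approach with 4 bits per weight, shrinking memory further and giving the fastest generation in this table, at some cost in accuracy. Note that prompt processing gets slightly slower with both quantized formats here; the gains show up in generation.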
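To make the memory savings concrete, here is a rough size estimate. It assumes the block layouts llama.cpp uses for Q8_0 and Q4_0 (32 weights per block sharing one 16-bit scale), which puts them at 8.5 and 4.5 bits per weight. The ~6.74 billion parameter count for Llama 2 7B is approximate, and real files differ slightly because of metadata and any tensors stored in other formats.

```python
# Assumed block layouts (32 weights per block, one fp16 scale per block):
#   Q8_0: 2-byte scale + 32 one-byte values  = 34 bytes per block
#   Q4_0: 2-byte scale + 32 half-byte values = 18 bytes per block
BLOCK_WEIGHTS = 32
BYTES_PER_BLOCK = {
    "Q8_0": 2 + 32,
    "Q4_0": 2 + 16,
}


def bits_per_weight(fmt: str) -> float:
    """Average storage cost per weight, including the per-block scale."""
    if fmt == "F16":
        return 16.0
    return BYTES_PER_BLOCK[fmt] * 8 / BLOCK_WEIGHTS


def estimated_size_gb(n_params: float, fmt: str) -> float:
    """Weights only (1 GB = 1e9 bytes); ignores file metadata."""
    return n_params * bits_per_weight(fmt) / 8 / 1e9


if __name__ == "__main__":
    n_params = 6.74e9  # Llama 2 7B, approximate parameter count
    for fmt in ("F16", "Q8_0", "Q4_0"):
        print(f"{fmt}: {bits_per_weight(fmt):.1f} bits/weight, "
              f"~{estimated_size_gb(n_params, fmt):.1f} GB")
```

Under these assumptions the 7B model shrinks from about 13.5 GB in F16 to about 7.2 GB in Q8_0 and 3.8 GB in Q4_0.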

Apple M1 Max Performance on Llama 3 Models

| Model | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|
| Llama 3 8B Q4_K_M | 355.45 | 34.49 |
| Llama 3 8B F16 | 418.77 | 18.43 |
| Llama 3 70B Q4_K_M | 33.01 | 4.09 |

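Whoa! Token generation speed drops sharply, from 34.49 to 4.09 tokens/second, when scaling up to Llama 3 70B. That is expected: generating each token requires reading essentially all of the model's weights from memory, so generation speed is limited mainly by memory bandwidth, and the 70B model has almost nine times as many parameters as the 8B model. The measured slowdown (about 8.4x) closely tracks that ratio.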
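One way to sanity-check this drop is a bandwidth-bound estimate: if every generated token has to stream all of the weights once, tokens/second can be at most bandwidth divided by model size. This is a simplified model that ignores compute, the KV cache, and imperfect bandwidth utilization. The 4.8 bits per weight for Q4_K_M is an assumed rough average, and the parameter counts (8.03B and 70.6B) are approximate.

```python
def bandwidth_bound_tps(bandwidth_gb_s: float, n_params: float,
                        bits_per_weight: float) -> float:
    """Upper bound on generation speed if each token reads all weights once."""
    model_bytes = n_params * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / model_bytes


M1_MAX_BANDWIDTH = 400.0  # GB/s, Apple's published figure
Q4_K_M_BPW = 4.8          # assumption: rough average bits/weight for Q4_K_M

small = bandwidth_bound_tps(M1_MAX_BANDWIDTH, 8.03e9, Q4_K_M_BPW)
large = bandwidth_bound_tps(M1_MAX_BANDWIDTH, 70.6e9, Q4_K_M_BPW)

print(f"Llama 3 8B bound:  {small:.1f} tok/s (measured 34.49)")
print(f"Llama 3 70B bound: {large:.1f} tok/s (measured 4.09)")
print(f"parameter ratio {70.6 / 8.03:.2f} vs measured slowdown {34.49 / 4.09:.2f}")
```

Both measured speeds sit well below their bounds (about 83 and 9.4 tokens/second), as expected for a best-case estimate, but the ratio between them (about 8.8x predicted versus 8.4x measured) is what the bandwidth argument predicts.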

NVIDIA 3090 24GB Performance

The NVIDIA GeForce RTX 3090, a powerhouse GPU known for its graphics capabilities, is also a popular choice for running LLMs. It has 10,496 CUDA cores and 24 GB of GDDR6X memory with 936 GB/s of bandwidth, making it a beast for parallel workloads.

NVIDIA 3090 Performance on Llama 3 Models

| Model | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|
| Llama 3 8B Q4_K_M | 3865.39 | 111.74 |
| Llama 3 8B F16 | 4239.64 | 46.51 |

- Q4_K_M: a llama.cpp "k-quant" format with 4-bit weights (medium variant). Weights are quantized in blocks with their own scales, and some tensors are kept at higher precision, so the average lands somewhat above 4 bits per weight.

- F16: as before, represents the model's use of half-precision floating-point numbers for computation.

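The 3090 shines with Llama 3 8B, generating tokens roughly 2.5 to 3.2 times faster than the M1 Max and processing prompts about 10 times faster. The two gaps have different causes. Prompt processing handles many tokens at once and is limited by raw compute, where the 3090's thousands of CUDA cores dominate. Generation produces one token at a time and is limited mainly by memory bandwidth, where the 3090 (936 GB/s) has a smaller edge over the M1 Max (400 GB/s).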
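The arithmetic behind these ratios can be reproduced directly from the Llama 3 8B rows of the two tables, alongside the ratio of the two devices' published memory bandwidths. This is only a comparison of the reported numbers, not a performance model.

```python
# Llama 3 8B results from the tables: (processing, generation) in tokens/s
RESULTS = {
    "M1 Max": {"Q4_K_M": (355.45, 34.49), "F16": (418.77, 18.43)},
    "RTX 3090": {"Q4_K_M": (3865.39, 111.74), "F16": (4239.64, 46.51)},
}
BANDWIDTH_GB_S = {"M1 Max": 400.0, "RTX 3090": 936.0}


def speedups(fmt: str) -> tuple[float, float]:
    """Return the 3090's (processing, generation) speedup over the M1 Max."""
    m1_pp, m1_tg = RESULTS["M1 Max"][fmt]
    nv_pp, nv_tg = RESULTS["RTX 3090"][fmt]
    return nv_pp / m1_pp, nv_tg / m1_tg


for fmt in ("Q4_K_M", "F16"):
    pp, tg = speedups(fmt)
    print(f"{fmt}: processing {pp:.1f}x, generation {tg:.1f}x")

ratio = BANDWIDTH_GB_S["RTX 3090"] / BANDWIDTH_GB_S["M1 Max"]
print(f"memory bandwidth ratio: {ratio:.2f}x")
```

The processing gap (about 10x) is far larger than the bandwidth ratio (2.34x), while the generation gap (2.5x to 3.2x) stays much closer to it.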

Comparing Apple M1 Max and NVIDIA 3090: A Breakdown

Apple M1 Max - The Efficient Powerhouse

Strengths:

- Energy Efficiency: The M1 Max draws far less power than the 3090, whose board power rating alone is 350 W. Less power means less heat, which is why it fits in laptops and compact workstations.

- Versatility and Memory Capacity: The M1 Max's CPU and GPU share up to 64 GB of unified memory at 400 GB/s, so it performs well across a wide range of applications, not just LLMs, and can hold models larger than a 24 GB graphics card's VRAM.

- Price Competitive: The M1 Max comes as a complete computer, while a 3090 still needs a host PC, so depending on configuration, an M1 Max machine can be the more affordable route.

Weaknesses:

- Limited Scaling: The M1 Max performs well with smaller LLMs like Llama 2 7B, but generation slows to about 4 tokens/second on Llama 3 70B.

- Lower Memory Bandwidth: The M1 Max's 400 GB/s is less than half of the 3090's 936 GB/s, which directly limits token generation speed.

NVIDIA 3090 - The Parallel Processing Champion

Strengths:

- Parallel Processing Power: With 10,496 CUDA cores, the 3090 excels at the highly parallel matrix math of LLM inference; on Llama 3 8B it processes prompts about 10 times faster than the M1 Max.

- High Memory Bandwidth: At 936 GB/s, the 3090 moves model weights more than twice as fast as the M1 Max, which is the main reason it generates tokens faster on models that fit in its 24 GB of VRAM.

Weaknesses:

- Power Consumption: This graphics powerhouse consumes significantly more power than the M1 Max, requiring a beefy power supply and potentially leading to higher energy costs.

- Memory Ceiling and Host Dependence: The 3090's 24 GB of VRAM cannot hold a 4-bit 70B model (roughly 40 GB) on its own. And as a discrete GPU, it has no CPU of its own: everything outside the GPU kernels runs on the host processor.

- Higher Cost: The 3090 is only a graphics card; the host PC it needs comes on top, so a complete 3090 setup can cost significantly more than an M1 Max machine.

Performance Analysis and Practical Recommendations

- Small Models (Llama 2 7B): The M1 Max is a solid choice for running smaller LLMs, offering good performance at a lower price point. It's a great option for developers who are just getting started with LLMs or who need a device that can handle diverse workloads.

- Medium Models (Llama 3 8B): The NVIDIA 3090 is a clear winner for models like Llama 3 8B, generating 111.74 tokens/second with Q4_K_M versus 34.49 on the M1 Max, and processing prompts about 10 times faster.

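- Large Models (Llama 3 70B and above): Here the M1 Max's advantage is capacity rather than speed. A 4-bit 70B model needs roughly 40 GB, more than the 3090's 24 GB of VRAM, and the benchmarks above include no 3090 result for it; running it on a 3090 means offloading layers to the CPU or adding more GPUs. A 64 GB M1 Max can hold the whole model, though at about 4 tokens/second.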
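Whether a model runs on a device at all comes down first to memory capacity. The rough check below adds the weight size to a fixed allowance for the KV cache and runtime buffers. The 2 GB allowance, the 4.8 bits per weight for Q4_K_M, and the 48 GB usable budget on a 64 GB M1 Max (the system keeps part of unified memory for itself) are all assumptions for illustration.

```python
def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Size of the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(n_params: float, bits_per_weight: float, budget_gb: float,
         overhead_gb: float = 2.0) -> bool:
    """Rough check: weights plus an assumed fixed allowance for the
    KV cache and runtime buffers must fit in the memory budget."""
    return weights_gb(n_params, bits_per_weight) + overhead_gb <= budget_gb


Q4_K_M_BPW = 4.8          # assumption: approximate average for Q4_K_M
RTX_3090_VRAM_GB = 24.0
M1_MAX_BUDGET_GB = 48.0   # assumption: usable share of 64 GB unified memory

for name, params in [("Llama 3 8B", 8.03e9), ("Llama 3 70B", 70.6e9)]:
    size = weights_gb(params, Q4_K_M_BPW)
    print(f"{name} Q4_K_M ~{size:.1f} GB | "
          f"3090: {fits(params, Q4_K_M_BPW, RTX_3090_VRAM_GB)} | "
          f"M1 Max: {fits(params, Q4_K_M_BPW, M1_MAX_BUDGET_GB)}")
```

With these assumptions the 70B model (about 42 GB of weights) fits the M1 Max budget but not the 3090's VRAM, while the 8B model fits both, even in F16.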

Remember: The choice between the M1 Max and the 3090 ultimately depends on your specific needs and priorities. If you're looking for a more affordable and energy-efficient option that handles smaller LLMs well and, with enough unified memory, can even run 70B models at modest speeds, the M1 Max is a great choice. If your models fit in 24 GB of VRAM and you want the fastest token generation, the NVIDIA 3090 is the king of the hill.

Analogy: Imagine you're building a car. The M1 Max is like a sleek hybrid car that gets excellent mileage and can handle most roads. The NVIDIA 3090 is like a powerful racing car that can go from zero to sixty in a flash, but guzzles gas and needs a special track to perform at its best.

FAQ

Q: What are LLMs?

A: Large Language Models (LLMs) are advanced AI models that are trained on massive datasets of text and code. Think of them as super-powered AI assistants that can understand and generate human-like text, translate languages, write different kinds of creative content, and answer your questions in a comprehensive and informative way.

Q: What is tokenization?

A: Tokenization is the process of breaking down text into smaller units called tokens. Each token represents a word, a part of a word, or a symbol. Think of it like segmenting a sentence into individual building blocks.
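As a toy illustration only, the splitter below greedily takes the longest piece of a small, made-up vocabulary at each position. Real LLM tokenizers (for example byte-pair encoding) learn their vocabularies from data and do not work exactly this way; the vocabulary and the greedy rule here are assumptions chosen to show the idea.

```python
def greedy_tokenize(text: str, vocab: set[str]) -> list[str]:
    """Split text by repeatedly taking the longest matching vocabulary entry.

    Characters not covered by any entry become single-character tokens.
    """
    tokens: list[str] = []
    longest = max(map(len, vocab), default=1)
    i = 0
    while i < len(text):
        # Try the longest candidate first, shrinking down to one character.
        for size in range(min(longest, len(text) - i), 0, -1):
            piece = text[i : i + size]
            if piece in vocab or size == 1:
                tokens.append(piece)
                i += size
                break
    return tokens


# Hypothetical vocabulary for illustration
VOCAB = {"un", "believ", "able", " token", "s", " are", " building", " blocks"}

if __name__ == "__main__":
    print(greedy_tokenize("unbelievable tokens", VOCAB))
```

Here "unbelievable tokens" becomes five tokens: "un", "believ", "able", " token", and "s".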

Q: What is quantization?

A: Quantization is a technique used to reduce the size of a model and make it more efficient. It basically replaces the precise numbers used to represent information in a model with simpler, less precise, approximations. This makes the model smaller and faster to load and process.
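A minimal sketch of the idea: split weights into blocks, store one scale per block, and round each weight to a small integer. This is a simplified symmetric 4-bit scheme in the spirit of formats like Q4_0, not llama.cpp's actual code; the block contents and rounding rule are assumptions for illustration.

```python
def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Symmetric 4-bit quantization of one block: a float scale plus ints in [-8, 7]."""
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # map the largest magnitude to 7
    q = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Approximate reconstruction of the original values."""
    return [scale * x for x in q]


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.07, -0.21, 0.49, -0.02, 0.0]  # made-up block
    scale, q = quantize_block(weights)
    restored = dequantize_block(scale, q)
    print(q)
    print([round(r, 3) for r in restored])
```

Each weight now costs 4 bits plus a share of the block's scale, and the reconstruction error per weight is at most half the scale, which is the precision traded away for a smaller, faster model.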

Q: What is the difference between processing speed and generation speed?

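A: In these benchmarks, processing speed (often called prompt processing) measures how fast the model reads the input prompt; since all prompt tokens are known up front, they can be processed in parallel. Generation speed measures how fast the model produces new tokens, which happens one token at a time because each token depends on the ones before it. Processing speed determines how long you wait before the answer starts; generation speed determines how fast it streams out.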
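Both numbers matter for how long a response takes. A simple two-phase estimate divides the prompt length by the processing speed and the output length by the generation speed. The 1,000-token prompt and 200-token answer are hypothetical, and real speeds drift as the context grows, so treat this as a rough sketch using the Llama 3 8B Q4_K_M figures from the tables.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    pp_tps: float, tg_tps: float) -> float:
    """Two-phase estimate: read the prompt in bulk, then generate token by token."""
    return prompt_tokens / pp_tps + output_tokens / tg_tps


# Llama 3 8B Q4_K_M figures from the tables; prompt/answer lengths are hypothetical
m1 = response_time_s(1000, 200, pp_tps=355.45, tg_tps=34.49)
rtx = response_time_s(1000, 200, pp_tps=3865.39, tg_tps=111.74)
print(f"M1 Max: {m1:.1f} s, RTX 3090: {rtx:.1f} s")
```

In this example the M1 Max takes roughly 8.6 seconds (about 2.8 s reading the prompt, 5.8 s generating), while the 3090 takes roughly 2 seconds.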

Q: Can I run LLMs on a regular computer?

A: It's possible, but not always practical. Smaller LLMs like Llama 2 7B can sometimes be run on CPUs, but larger models often require high-performance GPUs for optimal performance.

Q: Which is better, the M1 Max or the 3090?

A: It depends on your use case. The M1 Max is more affordable and efficient, while the 3090 is more powerful but comes at a higher cost.

Q: What are some other devices that can run LLMs?

A: Other popular devices for running LLMs include:

- NVIDIA GeForce RTX 4090: Often considered the top consumer GPU for LLMs, offering even faster performance than the 3090.

- AMD Radeon RX 7900 XTX: A powerful alternative to NVIDIA GPUs, offering competitive performance in LLM inference.

- Google TPU v4: Specialized hardware designed for AI workloads, including LLMs, available through Google Cloud rather than as a local device.
