Apple M1 Max (400 GB/s, 24 GPU Cores) vs. Apple M3 (100 GB/s, 10 GPU Cores) for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and running these powerful models locally is becoming increasingly popular. But choosing the right hardware can be a daunting task. This article delves into the performance of two popular Apple chips – the M1 Max and the M3 – when it comes to running LLMs. We will compare their token generation speeds and analyze their strengths and weaknesses to help you make an informed decision.

Think of LLMs as very large neural networks trained on massive text datasets to understand and generate human-like text. They can write stories, translate languages, and summarize information. To harness this power locally, you need a device with enough memory to hold the model and enough memory bandwidth and compute to run it at a usable speed.

Apple M1 Max: A Powerful Beast for LLMs

The Apple M1 Max is one of the higher-end chips in the first Apple Silicon generation. The configuration tested here has 400 GB/s of memory bandwidth and 24 GPU cores, which makes it a strong candidate for running LLMs locally.

Apple M1 Max Token Generation Speed

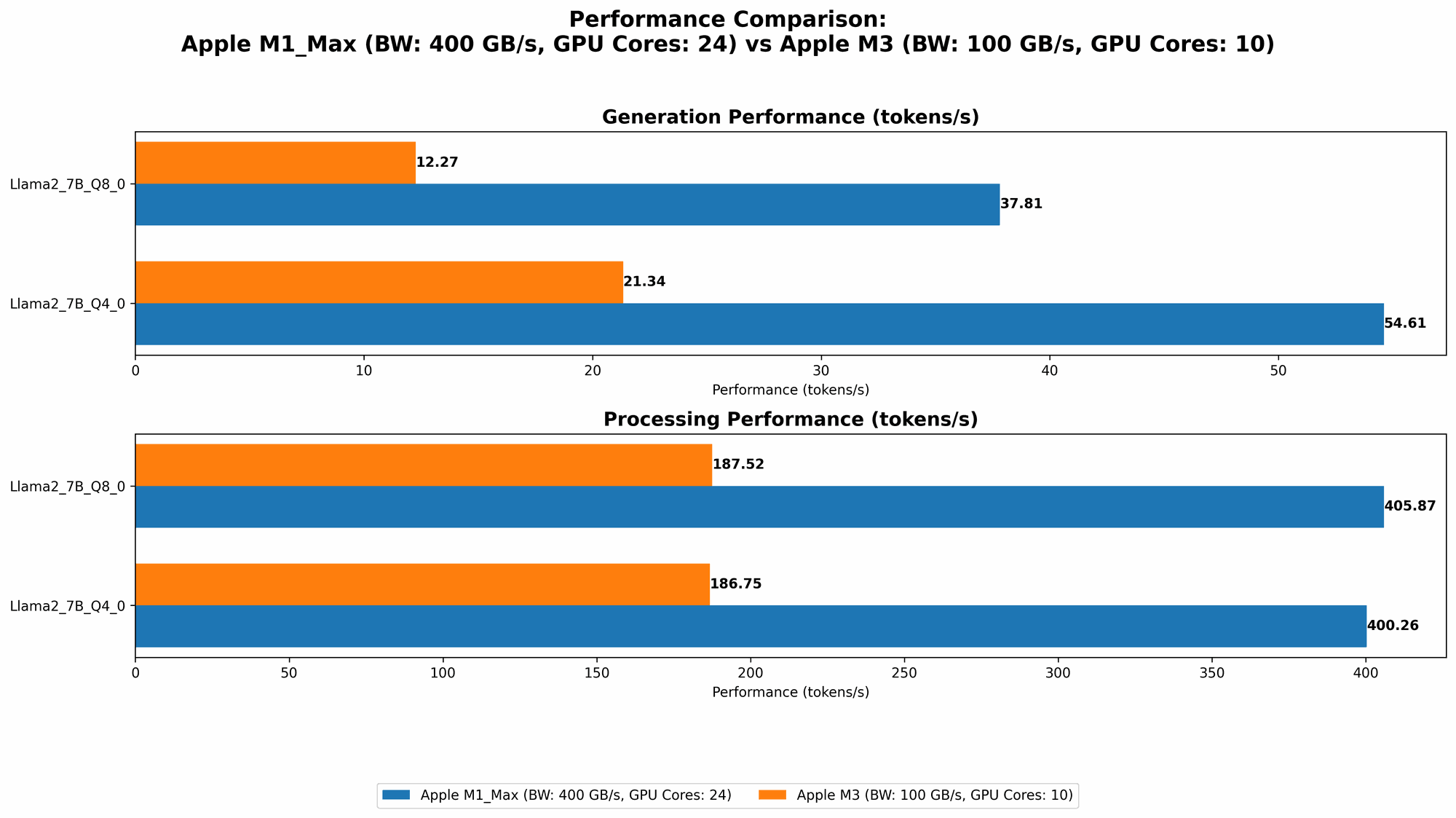

Let's dive into the data:

| Model | Quantization | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|
| Llama 2 7B | F16 | 453.03 | 22.55 |
| Llama 2 7B | Q8_0 | 405.87 | 37.81 |
| Llama 2 7B | Q4_0 | 400.26 | 54.61 |
| Llama 3 8B | Q4_K_M | 355.45 | 34.49 |
| Llama 3 8B | F16 | 418.77 | 18.43 |
| Llama 3 70B | Q4_K_M | 33.01 | 4.09 |

Important Note: The M1 Max 24-core configuration cannot run the Llama 3 70B model at F16 precision. At 2 bytes per weight, the weights alone need roughly 140 GB, far more than the unified memory available on any M1 Max.
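The memory limit can be checked with a back-of-the-envelope estimate: weight size is roughly the parameter count times the bits per weight. The sketch below is illustrative only. The rounded parameter counts and the bits-per-weight figures are assumptions, not values from the benchmark, and the estimate ignores the KV cache and runtime overhead.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# All constants here are approximations (assumptions), not benchmark data.

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,  # 8-bit values plus one 16-bit scale per block of 32 weights
    "Q4_0": 4.5,  # 4-bit values plus one 16-bit scale per block of 32 weights
}

# Rounded parameter counts implied by the model names (assumed).
MODELS = {"Llama 2 7B": 7e9, "Llama 3 8B": 8e9, "Llama 3 70B": 70e9}


def weight_footprint_gb(n_params: float, fmt: str) -> float:
    """Return the approximate size of the weights in GB (10^9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


if __name__ == "__main__":
    for name, n_params in MODELS.items():
        for fmt in BITS_PER_WEIGHT:
            size = weight_footprint_gb(n_params, fmt)
            print(f"{name:12s} {fmt:5s} ~{size:6.1f} GB")
```

Comparing the printed sizes against your machine's unified memory gives a quick first check on whether a model and format combination can load at all.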

M1 Max Performance Analysis

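The M1 Max processes prompts quickly, at roughly 400 to 450 tokens/second for Llama 2 7B at every quantization level. Generation is much slower, between 22.55 and 54.61 tokens/second for the same model. The gap is expected: prompt tokens are processed in parallel, which is mostly compute-bound, whereas generation produces one token at a time and has to read the full set of weights for each token, so it is limited mainly by memory bandwidth. That is also why generation speed rises as quantization shrinks the model, while processing speed changes little.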
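One way to sanity-check the generation numbers is a memory-bandwidth ceiling: if every generated token requires reading all weights once, tokens/second cannot exceed bandwidth divided by weight size. The sketch below applies that rule of thumb to the Llama 2 7B rows of the table. The parameter count and bits-per-weight values are assumptions; only the bandwidth and measured speeds come from this article.

```python
# Assumptions (not from the benchmark): ~7e9 parameters for Llama 2 7B and
# approximate bits per weight for each format.
N_PARAMS = 7e9
BANDWIDTH_GB_S = 400.0  # M1 Max memory bandwidth quoted in this article

# format -> (assumed bits per weight, measured generation tokens/second)
LLAMA2_7B_M1_MAX = {
    "F16": (16.0, 22.55),
    "Q8_0": (8.5, 37.81),
    "Q4_0": (4.5, 54.61),
}


def bandwidth_ceiling_tps(bandwidth_gb_s, n_params, bits_per_weight):
    """Upper bound on tokens/second if each token reads all weights once."""
    weight_gb = n_params * bits_per_weight / 8 / 1e9
    return bandwidth_gb_s / weight_gb


def ceiling_report():
    """Map each format to (ceiling tokens/second, measured / ceiling)."""
    rows = {}
    for fmt, (bits, measured) in LLAMA2_7B_M1_MAX.items():
        ceiling = bandwidth_ceiling_tps(BANDWIDTH_GB_S, N_PARAMS, bits)
        rows[fmt] = (ceiling, measured / ceiling)
    return rows


if __name__ == "__main__":
    for fmt, (ceiling, fraction) in ceiling_report().items():
        print(f"{fmt:5s} ceiling ~{ceiling:6.1f} tok/s, measured reaches {fraction:.0%}")
```

Under these assumptions the measured speeds land at roughly 54% to 79% of the ceiling, which is consistent with generation being bound by memory bandwidth rather than by raw compute.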

Strengths:

- High processing speed: The M1 Max processes prompts at 400 tokens/second or more for Llama 2 7B, regardless of quantization level.

- High memory bandwidth: At 400 GB/s, weights stream to the GPU quickly, which matters most for token generation and for models with large memory footprints.

Weaknesses:

- Generation speed drops sharply with model size: Llama 3 70B at Q4_K_M generates only 4.09 tokens/second, which may be a bottleneck for users who need interactive response times.

Apple M3: A Newcomer with Potential

The Apple M3 is the base chip of a newer Apple Silicon generation. The configuration tested here has 10 GPU cores and 100 GB/s of memory bandwidth, a quarter of the M1 Max's. How does it stack up against the older M1 Max in the LLM arena?

Apple M3 Token Generation Speed

| Model | Quantization | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 187.52 | 12.27 |
| Llama 2 7B | Q4_0 | 186.75 | 21.34 |

Important Note: For the M3 configuration tested, results are available only for Llama 2 7B with Q8_0 and Q4_0 quantization. No results are available for F16 or for other models.

M3 Performance Analysis

The M3 trails the M1 Max in both phases. On Llama 2 7B it processes prompts at about 187 tokens/second, less than half the M1 Max's rate, and it generates 12.27 (Q8_0) and 21.34 (Q4_0) tokens/second against the M1 Max's 37.81 and 54.61.

Strengths:

- Generation holds up better than the bandwidth gap suggests: With a quarter of the M1 Max's memory bandwidth, the M3 still reaches roughly 32% (Q8_0) to 39% (Q4_0) of its generation speed on Llama 2 7B.

Weaknesses:

- Lower speed in both phases: The M3 processes prompts at less than half the M1 Max's rate and generates tokens about 2.6 to 3.1 times more slowly on Llama 2 7B.

- Limited test coverage: The current data only includes results for the Llama 2 7B model at Q8_0 and Q4_0, making it difficult to assess performance with other models.

Comparison of M1 Max and M3 for LLMs

Here's a breakdown of the key differences between the M1 Max and M3 in the context of LLM performance:

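| Feature | M1 Max | M3 |
|---|---|---|
| Memory Bandwidth | 400 GB/s | 100 GB/s |
| GPU Cores | 24 | 10 |
| Processing Speed (Llama 2 7B, Q4_0) | 400.26 tokens/second | 186.75 tokens/second |
| Generation Speed (Llama 2 7B, Q4_0) | 54.61 tokens/second | 21.34 tokens/second |
| Models with Results | Llama 2 7B, Llama 3 8B, Llama 3 70B | Llama 2 7B only |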
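The ratios behind this comparison can be computed directly from the two benchmark tables. The short sketch below does so for the Llama 2 7B rows that both chips share; the numbers are copied from the tables, and the dictionary layout and function name are just one illustrative way to organize them.

```python
# Tokens/second for Llama 2 7B, copied from the two benchmark tables.
M1_MAX = {
    "Q8_0": {"processing": 405.87, "generation": 37.81},
    "Q4_0": {"processing": 400.26, "generation": 54.61},
}
M3 = {
    "Q8_0": {"processing": 187.52, "generation": 12.27},
    "Q4_0": {"processing": 186.75, "generation": 21.34},
}


def speedups(fast, slow):
    """Return fast/slow ratios for every quantization level and phase."""
    return {
        quant: {phase: fast[quant][phase] / slow[quant][phase] for phase in phases}
        for quant, phases in fast.items()
    }


if __name__ == "__main__":
    for quant, phases in speedups(M1_MAX, M3).items():
        for phase, ratio in phases.items():
            print(f"{quant} {phase:10s}: M1 Max is {ratio:.2f}x the M3")
```

The M1 Max comes out about 2.1 times faster at prompt processing and about 2.6 to 3.1 times faster at generation, which is less than its 4x advantage in memory bandwidth.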

Practical Example: Imagine you're building a chatbot using the Llama 2 7B model at Q4_0. The M1 Max generates 54.61 tokens/second against the M3's 21.34, so replies stream about 2.6 times faster. The M3's rate may still be adequate for a single-user chat, but the M1 Max leaves far more headroom.

Understanding Quantization

Quantization is like a diet for LLMs. It stores each of the model's weights with fewer bits, compressing a large model into a smaller one. Less data has to be read from memory per token, so generation gets faster, but with potential tradeoffs in accuracy.

- F16 (Float16): 16-bit floating point, or 2 bytes per weight. This is the unquantized baseline in these benchmarks and preserves the most accuracy of the three formats.

- Q8_0 (Quantized 8-bit): Roughly 8 bits per weight. About half the size of F16, usually with only a small impact on accuracy.

- Q4_0 (Quantized 4-bit): Roughly 4 bits per weight. The most compressed format in these benchmarks, offering the smallest size but potentially sacrificing more accuracy.
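To make the size-versus-accuracy tradeoff concrete, here is a minimal sketch of block-wise symmetric quantization: each block of weights is stored as small integers plus one scale factor. It is a simplified illustration, not the exact storage layout used by any particular inference library, and the function names and the synthetic Gaussian weights are assumptions made for the example.

```python
import random


def quantize_block(values, bits):
    """Symmetric quantization of one block: one float scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs else 1.0
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quants]


def mean_abs_error(values, bits, block_size=32):
    """Average round-trip error when quantizing in blocks of block_size."""
    total = 0.0
    for start in range(0, len(values), block_size):
        block = values[start:start + block_size]
        scale, quants = quantize_block(block, bits)
        restored = dequantize_block(scale, quants)
        total += sum(abs(a - b) for a, b in zip(block, restored))
    return total / len(values)


if __name__ == "__main__":
    rng = random.Random(0)
    weights = [rng.gauss(0.0, 0.02) for _ in range(4096)]  # synthetic weights
    for bits in (8, 4):
        print(f"{bits}-bit mean absolute error: {mean_abs_error(weights, bits):.6f}")
```

Running it shows the 4-bit round trip losing noticeably more precision than the 8-bit one, which is the accuracy cost paid for the smaller footprint.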

Choosing the Right Device for Your LLM Needs

The best device for your LLM workflow depends on your priorities:

- M1 Max: The faster chip in every test here, and the only one of the two with results for Llama 3 8B and Llama 3 70B. If you need larger models or the quickest responses, it is the clear winner. Keep in mind that 70B generation still runs at only 4.09 tokens/second.

- M3: A reasonable choice if you work with small quantized models such as Llama 2 7B and can accept roughly a third of the M1 Max's generation speed. The lack of results for other models is a drawback when judging it for larger workloads.

FAQ

What's the difference between processing and generation speed?

- Processing Speed: This refers to how quickly the LLM can ingest the input prompt. Imagine it like reading a complex book: the faster you read, the sooner you understand its contents.

- Generation Speed: This indicates how fast the LLM can produce output text, one token at a time. Think of it like writing an essay: the faster you write, the sooner you can share your thoughts.
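The two speeds combine into a simple estimate of how long a full response takes: time to read the prompt plus time to write the answer. The sketch below applies it to the Llama 2 7B Q4_0 results for both chips. The 500-token prompt and 200-token reply are hypothetical workload sizes chosen for illustration, and the estimate ignores model load time and other overhead.

```python
def response_time_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimated seconds to process a prompt and then generate a reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama 2 7B Q4_0 speeds (tokens/second) from the benchmark tables.
CHIPS = {
    "M1 Max": {"processing": 400.26, "generation": 54.61},
    "M3": {"processing": 186.75, "generation": 21.34},
}

# Hypothetical workload: 500-token prompt, 200-token reply.
PROMPT_TOKENS = 500
OUTPUT_TOKENS = 200

if __name__ == "__main__":
    for chip, speeds in CHIPS.items():
        seconds = response_time_s(
            PROMPT_TOKENS, OUTPUT_TOKENS, speeds["processing"], speeds["generation"]
        )
        print(f"{chip}: ~{seconds:.1f} s")
```

For this workload the estimate is about 4.9 seconds on the M1 Max and about 12 seconds on the M3, and in both cases generation accounts for most of the time.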

How does quantization affect LLM performance?

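Quantization can reduce accuracy, particularly for more compressed formats like Q4_0. The tradeoff is significant memory savings and faster generation. In the M1 Max results for Llama 2 7B, generation speed rises from 22.55 tokens/second at F16 to 54.61 at Q4_0, while processing speed dips slightly from 453.03 to 400.26.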

Will Apple's future chips be better for LLMs?

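Most likely. Token generation is limited mainly by memory bandwidth, so future chips with more bandwidth and more unified memory should generate tokens faster and fit larger models. Actual gains will need to be confirmed by benchmarks, so keep an eye out for new releases.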

Keywords

LLMs, Large Language Models, Apple M1 Max, Apple M3, Token Generation Speed, Benchmark Analysis, Quantization, F16, Q8_0, Q4_0, Processing Speed, Generation Speed, AI, Machine Learning, Apple Silicon, Developer, Geek, Local Model, Llama 2, Llama 3, Model Inference