Apple M1 (68 GB/s, 7-Core GPU) vs. NVIDIA RTX A6000 48GB for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

In the world of Large Language Models (LLMs), performance is paramount. Whether you're a developer building an AI application or an enthusiast exploring what these models can do, token generation speed plays a crucial role. This article offers a head-to-head comparison of two popular devices for running LLMs: the Apple M1 (68 GB/s memory bandwidth, 7-core GPU) and the NVIDIA RTX A6000 48GB. We'll analyze benchmark data to determine which device is faster at token generation across several LLMs.

Apple M1 Token Speed Generation: A Closer Look

The Apple M1 chip, known for its energy efficiency and impressive performance, has made significant strides in the realm of AI. Let's examine its performance in token generation across different LLM models.

Apple M1 Performance with Llama 2 7B

- Q8_0 Quantization: With the Llama 2 7B model quantized to Q8_0, the M1 generates 7.92 tokens/second, or roughly 8 tokens per second, which is usable for a small model.

- Q4_0 Quantization: The M1 is noticeably faster with Q4_0 quantization. Token generation rises to 14.19 tokens/second, about 1.8 times the Q8_0 speed, which shows how a lower-precision (more aggressive) quantization level improves performance.
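The gain from the smaller format can be checked against how much less data it stores per weight. The sketch below uses the two measured speeds from this section; the bits-per-weight values are an assumption based on the nominal llama.cpp block layout (32 weights plus one 16-bit scale), not something reported by the benchmark.

```python
# Measured M1 speeds from this section (tokens/second, Llama 2 7B).
M1_SPEED = {"Q8_0": 7.92, "Q4_0": 14.19}

# Assumption: nominal bits per weight for each block format
# (32 weights per block plus one 16-bit scale).
BITS_PER_WEIGHT = {"Q8_0": 8.5, "Q4_0": 4.5}


def ratio(table, numerator, denominator):
    """Return table[numerator] / table[denominator]."""
    return table[numerator] / table[denominator]


speedup = ratio(M1_SPEED, "Q4_0", "Q8_0")
size_reduction = ratio(BITS_PER_WEIGHT, "Q8_0", "Q4_0")

print(f"Q4_0 vs Q8_0 speedup:        {speedup:.2f}x")         # 1.79x
print(f"Q8_0 vs Q4_0 size reduction: {size_reduction:.2f}x")  # 1.89x
```

Under these assumptions the speedup is close to the reduction in bytes per weight, which is consistent with token generation being limited mainly by how much weight data has to be read for each token.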

Apple M1 Performance with Llama 3 8B

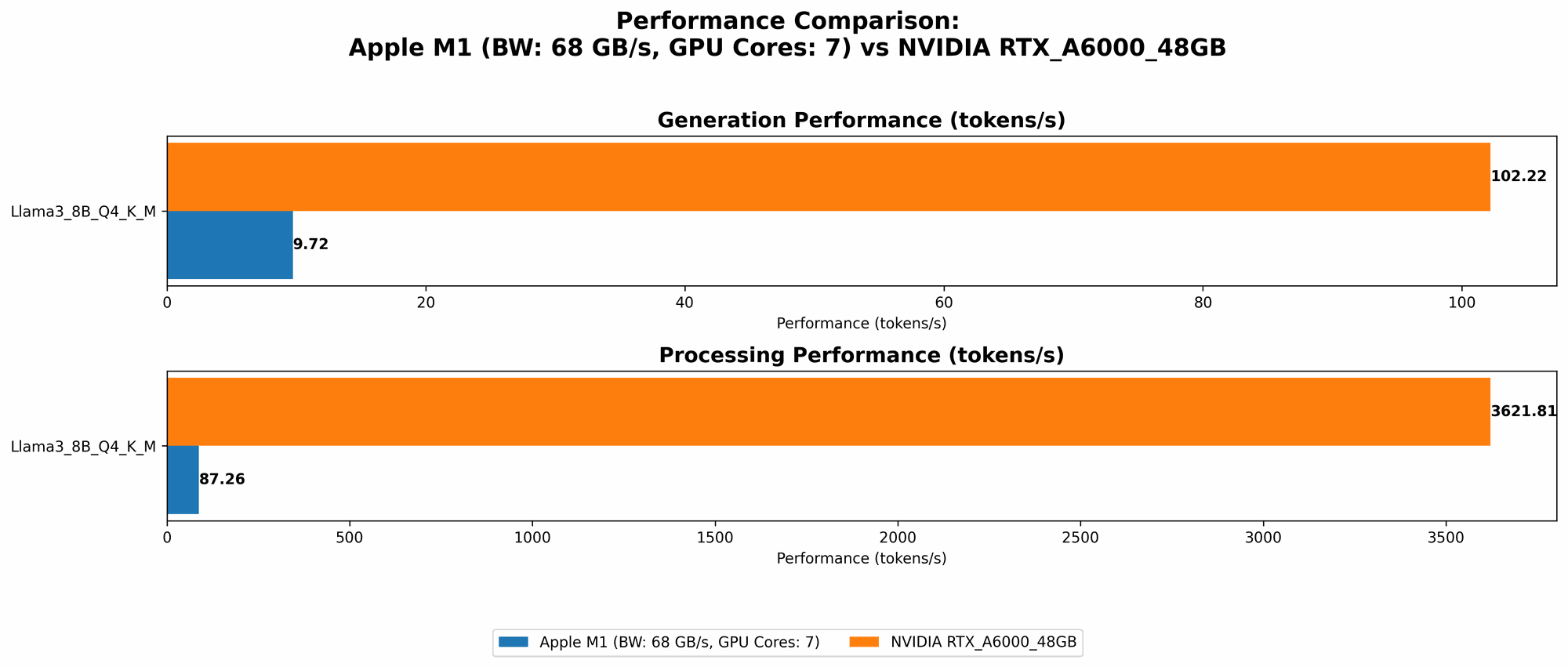

- Q4_K_M Quantization: With the Llama 3 8B model, the M1 reaches 9.72 tokens/second, or roughly 10 tokens per second. That is not groundbreaking, but it is respectable given the model's larger size.

NVIDIA RTX A6000 48GB: A Powerhouse for LLMs

The NVIDIA RTX A6000, renowned for its powerful graphics processing capabilities, is a popular choice for running AI workloads. Let's dive into its performance in generating tokens for different LLM models.

NVIDIA RTX A6000 Performance with Llama 3 8B

- Q4_K_M Quantization: On Llama 3 8B, the RTX A6000 truly shines. It delivers 102.22 tokens/second, more than ten times the M1's result for the same model and quantization.

- F16 (half precision): With unquantized 16-bit weights, the A6000 reaches 40.25 tokens/second. That is slower than Q4_K_M because each weight takes 16 bits instead of roughly 4 to 5, yet it is still about four times the M1's Q4_K_M speed, which shows the A6000 can handle both quantized and full half-precision weights.

NVIDIA RTX A6000 Performance with Llama 3 70B

- Q4_K_M Quantization: The A6000 continues to impress with the Llama 3 70B model, generating 14.58 tokens/second, or around 15 tokens per second. That is a respectable speed for a model almost nine times larger than Llama 3 8B.

Performance Analysis: M1 vs. RTX A6000

Comparison of Apple M1 and NVIDIA RTX A6000

Token Generation Speed for Llama 3

| Model | Quantization | Apple M1 (tokens/second) | NVIDIA RTX A6000 (tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 9.72 | 102.22 |
| Llama 3 8B | F16 | N/A | 40.25 |
| Llama 3 70B | Q4_K_M | N/A | 14.58 |
| Llama 3 70B | F16 | N/A | N/A |

Observations:

- On Llama 3 8B at Q4_K_M, the only configuration benchmarked on both devices, the NVIDIA RTX A6000 is about 10.5 times faster than the Apple M1 (102.22 vs. 9.72 tokens/second).

- The A6000 runs Llama 3 8B at both Q4_K_M and F16 precision. Q4_K_M is about 2.5 times faster than F16 (102.22 vs. 40.25 tokens/second).

- The A6000 delivers a respectable 14.58 tokens/second on Llama 3 70B. The M1 has no result for this model. N/A means no benchmark data is available, most likely because a 70B model does not fit in the M1's unified memory (16 GB at most).
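The ratios behind these observations can be reproduced directly from the table. This is a small sketch using only the table's values; `None` stands in for N/A, and the `speedup` helper is an illustrative name.

```python
# Token generation speeds from the table (tokens/second).
# None marks a configuration with no benchmark result (N/A).
RESULTS = [
    ("Llama 3 8B", "Q4_K_M", 9.72, 102.22),
    ("Llama 3 8B", "F16", None, 40.25),
    ("Llama 3 70B", "Q4_K_M", None, 14.58),
    ("Llama 3 70B", "F16", None, None),
]


def speedup(m1, a6000):
    """Return the A6000/M1 speed ratio, or None if either value is missing."""
    if m1 is None or a6000 is None:
        return None
    return a6000 / m1


for model, quant, m1, a6000 in RESULTS:
    s = speedup(m1, a6000)
    label = f"{s:.1f}x" if s is not None else "not comparable"
    print(f"{model:<12} {quant:<7} {label}")
```

Only the first row yields a ratio (about 10.5x). The remaining rows cannot be compared because at least one device has no data.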

Strengths and Weaknesses of Each Device

Apple M1:

- Strengths:

  - Energy efficiency: The M1 excels in power consumption, making it an excellent choice for portable or energy-constrained environments.

  - Affordable: Compared to the RTX A6000, the M1 is significantly more affordable.

- Weaknesses:

  - Limited capacity for large LLMs: The M1 has no benchmark results for Llama 3 70B or for F16 weights, and larger models or higher-precision formats are likely to exceed its memory.
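A rough weight-size estimate helps explain where the N/A entries come from. The sketch below is an approximation under stated assumptions: the parameter counts and the average bits per weight for each format are approximate values that do not come from the benchmark data, the 16 GB figure is the largest memory option of the base M1, and the estimate ignores the KV cache and operating system overhead.

```python
# Assumptions (not from the benchmark data): approximate parameter counts
# in billions and approximate average bits per weight for each format.
PARAMS_BILLION = {"Llama 3 8B": 8.03, "Llama 3 70B": 70.6}
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}
MEMORY_GB = {"Apple M1 (16 GB)": 16.0, "RTX A6000 (48 GB)": 48.0}


def weight_size_gb(params_billion, bits_per_weight):
    """Estimate weight storage in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion, bits_per_weight, memory_gb):
    """True if the weights alone fit; ignores KV cache and OS overhead."""
    return weight_size_gb(params_billion, bits_per_weight) <= memory_gb


for model, params in PARAMS_BILLION.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gb(params, bits)
        verdict = ", ".join(
            f"{device}: {'fits' if fits(params, bits, mem) else 'too large'}"
            for device, mem in MEMORY_GB.items()
        )
        print(f"{model} {fmt}: ~{size:.1f} GB ({verdict})")
```

With these numbers, the combinations flagged as too large line up with the N/A cells in the comparison table, although the benchmark source does not state why those results are missing.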

NVIDIA RTX A6000:

- Strengths:

  - Powerful GPU: The A6000 boasts a powerful GPU that delivers exceptional performance for token generation, particularly for large LLMs.

  - Versatility: The A6000 supports a wide range of quantization levels, allowing for flexibility in model deployment.

- Weaknesses:

  - High cost: The A6000 comes with a hefty price tag, making it less accessible for individuals or organizations with limited budgets.

  - Power consumption: The A6000 consumes more power compared to the M1, which can be a concern in energy-sensitive settings.

Practical Recommendations for Use Cases

Choosing the Right Device for Your LLM Needs

- For smaller LLMs and budget-conscious users: If you're working with smaller LLMs like Llama 2 7B or Llama 3 8B and prioritize affordability and energy efficiency, the Apple M1 is a solid choice.

- For large LLMs and high-performance needs: If you need the fastest possible token generation speed for large LLMs like Llama 3 70B, the NVIDIA RTX A6000 is the clear winner.
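To make the trade-off concrete, tokens per second can be converted into waiting time for a single response. The sketch below uses the measured Llama 3 8B Q4_K_M speeds; the 500-token response length is a hypothetical value chosen for illustration, and prompt processing time is not included.

```python
# Measured Llama 3 8B Q4_K_M generation speeds (tokens/second).
SPEEDS = {"Apple M1": 9.72, "RTX A6000": 102.22}

# Hypothetical response length, chosen only for illustration.
RESPONSE_TOKENS = 500


def seconds_for(tokens, tokens_per_second):
    """Time to generate `tokens` at a constant generation speed."""
    return tokens / tokens_per_second


for device, tps in SPEEDS.items():
    print(f"{device}: {seconds_for(RESPONSE_TOKENS, tps):.1f} s")
# Apple M1: 51.4 s
# RTX A6000: 4.9 s
```

Whether a wait of roughly 50 seconds is acceptable depends on the use case: it may be fine for offline experiments but is likely too slow for an interactive application.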

Alternative Approaches to Enhance LLM Performance

If you're looking to push the boundaries of LLM performance, here are some alternative approaches worth considering:

- Model Quantization: Experimenting with different quantization levels can significantly change performance. Q4_K_M typically generates tokens faster than Q8_0 or F16 because the weights are smaller, at the cost of some loss in output quality.

- Model Optimization: Techniques like model pruning, weight quantization, or knowledge distillation can help reduce the model's size and improve performance.

- Distributed Inference: If you need even greater performance, explore distributed inference frameworks that allow you to run LLMs across multiple GPUs or CPUs.

FAQ: Frequently Asked Questions

What are the benefits of using a GPU for running LLMs?

GPUs like the NVIDIA RTX A6000 are designed to perform massively parallel computations, which makes them exceptionally well-suited for the demanding operations involved in running LLMs. They accelerate tasks like matrix multiplication and other tensor operations, leading to significant improvements in token generation speed. GPUs also pair that compute with high-bandwidth memory (48 GB on the A6000), which matters for holding and streaming the vast number of parameters in large LLMs.
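A common rule of thumb is that single-stream token generation is limited by memory bandwidth, since each new token requires reading roughly all of the model's weights once. The sketch below applies that rule as a rough upper bound. The bandwidth figures (about 68 GB/s for the M1 and 768 GB/s for the RTX A6000) and the model size estimate are assumptions, not part of the benchmark data.

```python
# Assumed figures, not taken from the benchmark data:
M1_BANDWIDTH_GB_S = 68.0          # approximate Apple M1 memory bandwidth
A6000_BANDWIDTH_GB_S = 768.0      # RTX A6000 memory bandwidth per NVIDIA's specs
MODEL_SIZE_GB = 8.03 * 4.85 / 8   # Llama 3 8B at ~4.85 bits per weight

# (bandwidth in GB/s, measured tokens/second for Llama 3 8B Q4_K_M)
MEASURED = {
    "Apple M1": (M1_BANDWIDTH_GB_S, 9.72),
    "RTX A6000": (A6000_BANDWIDTH_GB_S, 102.22),
}


def bandwidth_bound_tps(bandwidth_gb_s, model_size_gb):
    """Upper bound on tokens/s if every weight is read once per token."""
    return bandwidth_gb_s / model_size_gb


for device, (bandwidth, measured) in MEASURED.items():
    bound = bandwidth_bound_tps(bandwidth, MODEL_SIZE_GB)
    print(f"{device}: bound ~{bound:.0f} tok/s, measured {measured} tok/s "
          f"({measured / bound:.0%} of bound)")
```

Under these assumptions both devices land at a similar fraction of their bandwidth bound, which suggests that most of the gap between them comes from memory bandwidth rather than raw compute.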

How does quantization impact LLM performance?

Quantization is a technique that reduces the size of an LLM by representing its weights (and sometimes its activations) with lower precision. This effectively compresses the model, making it smaller and faster to load and run, because fewer bytes have to be read for each generated token. While quantization can reduce the model's accuracy, it often provides a significant performance boost.
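The idea can be illustrated with a minimal block quantizer. This is a simplified sketch of symmetric quantization with one scale per block, not the exact layout of any llama.cpp format; the function names and sample weights are made up for the example.

```python
def quantize_block(values, bits=8):
    """Symmetric quantization of one block to signed integers plus a scale."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8 bits, 7 for 4 bits
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floating-point values from a quantized block."""
    return [q * scale for q in quants]


def max_error(values, bits):
    """Largest absolute round-trip error for a block at the given bit width."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


# Made-up sample weights for illustration.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.05]

for bits in (8, 4):
    print(f"{bits}-bit max round-trip error: {max_error(weights, bits):.4f}")
```

Fewer bits mean a coarser grid and a larger round-trip error, which is the accuracy side of the trade-off. The speed side comes from storing and reading fewer bytes per weight.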

Are there any other devices suitable for running LLMs?

While the M1 and RTX A6000 are popular choices, other devices can also handle LLMs. For example, you might consider high-end CPUs with dedicated AI accelerators or specialized AI inference chips like those from Google or Intel. The best option will depend on your specific needs and budget.

What are the best practices for optimizing LLM inference?

- Choose the right hardware: Select a device with sufficient memory and processing power for your chosen LLM.

- Optimize quantization: Experiment with different quantization levels to find the best balance between performance and accuracy.

- Enable GPU acceleration: If your device supports GPU acceleration, make sure to enable it for optimal performance.

- Utilize batching: Processing multiple requests together (batching) can improve efficiency, especially for large LLMs.
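Comparing hardware or quantization choices on your own setup requires a consistent way to measure generation speed. The following is a minimal sketch: `generate` is a hypothetical callable standing in for whatever inference library you use, and `fake_generate` only simulates work so the example runs on its own.

```python
import time


def measure_tokens_per_second(generate, prompt, max_tokens,
                              clock=time.perf_counter):
    """Time one generation call and return (token_count, tokens_per_second).

    `generate` is a hypothetical callable that takes (prompt, max_tokens)
    and returns a list of generated tokens.
    """
    start = clock()
    tokens = generate(prompt, max_tokens)
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return len(tokens), len(tokens) / elapsed


def fake_generate(prompt, max_tokens):
    """Stand-in for a real model: sleeps briefly for each token."""
    tokens = []
    for i in range(max_tokens):
        time.sleep(0.001)
        tokens.append(f"tok{i}")
    return tokens


count, tps = measure_tokens_per_second(fake_generate, "Hello", 50)
print(f"generated {count} tokens at {tps:.1f} tokens/second")
```

For a fair comparison, keep the prompt, the token count, and the model file identical across runs, and average several runs, since a single timing can be noisy.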

Keywords

Apple M1, NVIDIA RTX A6000, LLM, Token Generation, Speed, Performance, Benchmark, Llama 2, Llama 3, Quantization, GPU, CPU, Inference, AI, Deep Learning, Machine Learning, Model Optimization, Distributed Inference, Development, Engineering, Data Science, Artificial Intelligence