Apple M1 68GB 7 Cores vs. NVIDIA RTX 6000 Ada 48GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is rapidly evolving, with new models and applications emerging every day. For developers and researchers interested in running LLMs locally, choosing the right hardware is crucial for achieving optimal performance.

This article compares two popular devices – the Apple M1 68GB 7 Cores and the NVIDIA RTX6000Ada_48GB – on how fast they generate tokens for several Llama models. We focus specifically on token generation speed, since it is a key indicator of how quickly an LLM can produce text. By analyzing the benchmark data, we'll explore each device's strengths and weaknesses to help you decide which one best suits your needs.

Apple M1 Token Speed Generation

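The Apple M1 chip, known for its energy efficiency, has gained popularity in the LLM community. The label "68GB 7 Cores" follows the naming of the benchmark data; it most likely denotes roughly 68 GB/s of memory bandwidth and a 7-core GPU, since the M1 ships with at most 16GB of unified memory. Let's dive into the benchmark data for this configuration: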

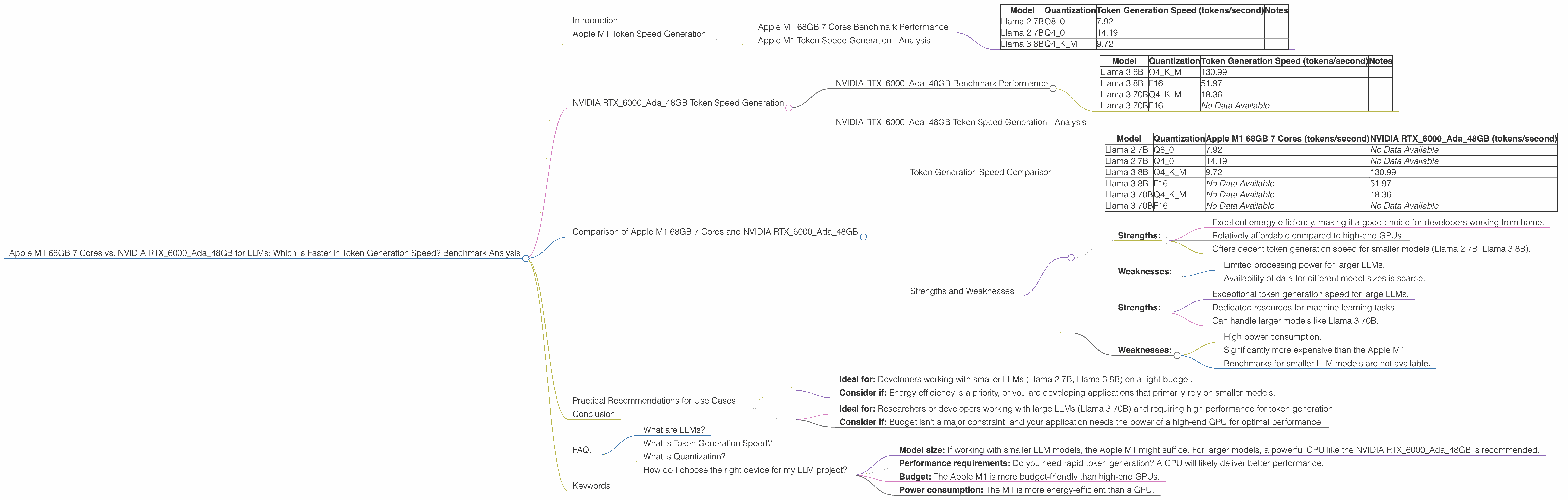

Apple M1 68GB 7 Cores Benchmark Performance

| Model | Quantization | Token Generation Speed (tokens/second) | Notes |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 7.92 | |
| Llama 2 7B | Q4_0 | 14.19 | |
| Llama 3 8B | Q4_K_M | 9.72 | |

Note: This benchmark data covers token generation speed (the decoding phase of inference, in which output tokens are produced one at a time), not prompt processing or end-to-end throughput. Performance can vary with the specific model, its quantization level, and the inference software used.
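To make the metric concrete, here is a minimal sketch of how a tokens/second figure can be measured: count the tokens produced and divide by wall-clock time. The `fake_decode_step` function is a hypothetical stand-in for a real model's decode step, not part of any inference library, and this is not necessarily how the benchmark above was run.

```python
import time


def tokens_per_second(n_tokens: int, elapsed_seconds: float) -> float:
    """Throughput = tokens produced / wall-clock seconds."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return n_tokens / elapsed_seconds


def measure(generate_next_token, n_tokens: int) -> float:
    """Time n_tokens calls to a token-producing callable."""
    start = time.perf_counter()
    for _ in range(n_tokens):
        generate_next_token()
    elapsed = time.perf_counter() - start
    return tokens_per_second(n_tokens, elapsed)


def fake_decode_step() -> None:
    """Hypothetical stand-in: pretend each token takes about 1 ms."""
    time.sleep(0.001)


print(f"{measure(fake_decode_step, 50):.1f} tokens/second")
```

With a real model, the callable would wrap whatever single-token decode call your inference software exposes.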

Apple M1 Token Speed Generation - Analysis

The Apple M1 68GB 7 Cores delivers usable performance for smaller models: 14.19 tokens/second on Llama 2 7B with Q4_0 quantization and 9.72 tokens/second on Llama 3 8B with Q4_K_M. That makes it a workable option for developers experimenting with models in the 7B to 8B range.

However, the benchmarks for the M1 include no data for larger models such as Llama 3 70B. Missing data is not a measurement, but a 70B model needs roughly 40GB for its weights alone even at 4-bit quantization, so memory capacity is a likely obstacle on this device.

NVIDIA RTX6000Ada_48GB Token Speed Generation

Let's shift our attention to the NVIDIA RTX6000Ada_48GB, a workstation GPU built for demanding workloads such as machine learning.

NVIDIA RTX6000Ada_48GB Benchmark Performance

| Model | Quantization | Token Generation Speed (tokens/second) | Notes |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 130.99 | |
| Llama 3 8B | F16 | 51.97 | |
| Llama 3 70B | Q4_K_M | 18.36 | |
| Llama 3 70B | F16 | No Data Available | |

NVIDIA RTX6000Ada_48GB Token Speed Generation - Analysis

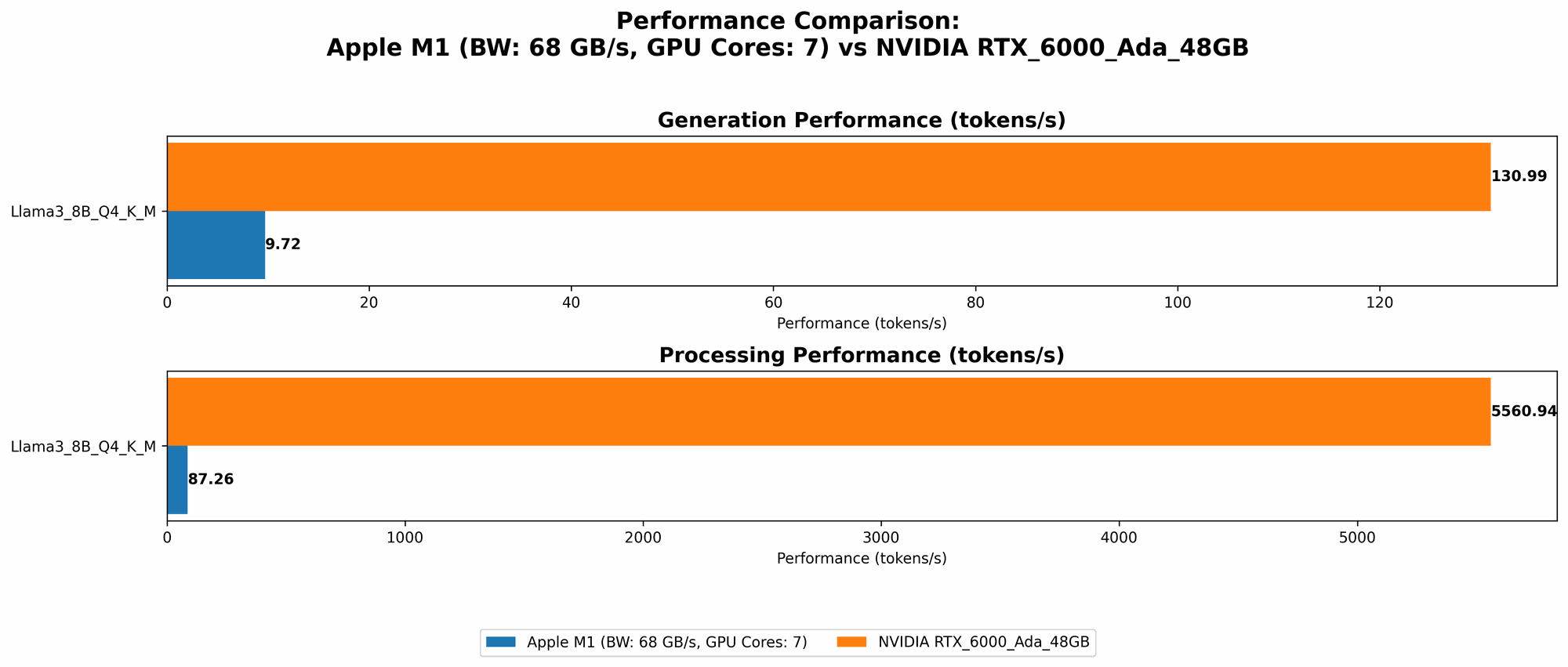

The NVIDIA RTX6000Ada_48GB is far faster at token generation. On Llama 3 8B with Q4_K_M it reaches 130.99 tokens/second, about 13.5 times the M1's 9.72. Even at unquantized F16 precision, its 51.97 tokens/second exceeds every M1 result in this benchmark. The RTX6000Ada_48GB also runs Llama 3 70B with Q4_K_M at 18.36 tokens/second, considerably slower than the 8B model; no F16 result is available for the 70B model.

Comparison of Apple M1 68GB 7 Cores and NVIDIA RTX6000Ada_48GB

Token Generation Speed Comparison

| Model | Quantization | Apple M1 68GB 7 Cores (tokens/second) | NVIDIA RTX6000Ada_48GB (tokens/second) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 7.92 | No Data Available |
| Llama 2 7B | Q4_0 | 14.19 | No Data Available |
| Llama 3 8B | Q4_K_M | 9.72 | 130.99 |
| Llama 3 8B | F16 | No Data Available | 51.97 |
| Llama 3 70B | Q4_K_M | No Data Available | 18.36 |
| Llama 3 70B | F16 | No Data Available | No Data Available |
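Only one row of this table has results for both devices, which is worth making explicit. The short sketch below copies the table values into a dictionary (with `None` for "No Data Available") and computes the speedup wherever a direct comparison is possible. The names `RESULTS` and `speedup` are illustrative choices, not part of any benchmark tooling.

```python
# Token generation speeds (tokens/second) copied from the comparison table.
# Each value is (Apple M1, NVIDIA RTX6000Ada_48GB); None = "No Data Available".
RESULTS = {
    ("Llama 2 7B", "Q8_0"): (7.92, None),
    ("Llama 2 7B", "Q4_0"): (14.19, None),
    ("Llama 3 8B", "Q4_K_M"): (9.72, 130.99),
    ("Llama 3 8B", "F16"): (None, 51.97),
    ("Llama 3 70B", "Q4_K_M"): (None, 18.36),
    ("Llama 3 70B", "F16"): (None, None),
}


def speedup(m1, rtx):
    """Return how many times faster the RTX is, or None if data is missing."""
    if m1 is None or rtx is None:
        return None
    return rtx / m1


for (model, quant), (m1, rtx) in RESULTS.items():
    ratio = speedup(m1, rtx)
    label = f"{ratio:.1f}x" if ratio is not None else "not comparable"
    print(f"{model} {quant}: {label}")
```

The only like-for-like comparison is Llama 3 8B with Q4_K_M, where the RTX is roughly 13.5 times faster; every other row is missing a result for at least one device.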

Strengths and Weaknesses

Apple M1 68GB 7 Cores:

- Strengths:
  - Excellent energy efficiency, which suits laptops and low-power setups.
  - Relatively affordable compared to high-end GPUs.
  - Offers decent token generation speed for smaller models (roughly 8 to 14 tokens/second on Llama 2 7B and Llama 3 8B).
- Weaknesses:
  - Limited processing power and memory for larger LLMs.
  - Benchmark data covers only 7B and 8B models.

NVIDIA RTX6000Ada_48GB:

- Strengths:
  - Much higher token generation speed: 130.99 tokens/second on Llama 3 8B with Q4_K_M.
  - 48GB of dedicated GPU memory for machine learning workloads.
  - Can handle larger models like Llama 3 70B (18.36 tokens/second with Q4_K_M).
- Weaknesses:
  - High power consumption.
  - Significantly more expensive than the Apple M1.
  - No Llama 2 7B benchmarks are available, so only Llama 3 8B can be compared directly.

Practical Recommendations for Use Cases

Apple M1 68GB 7 Cores:

- Ideal for: Developers working with smaller LLMs (Llama 2 7B, Llama 3 8B) on a tight budget.

- Consider if: Energy efficiency is a priority, or you are developing applications that primarily rely on smaller models.

NVIDIA RTX6000Ada_48GB:

- Ideal for: Researchers or developers working with large LLMs (Llama 3 70B) and requiring high performance for token generation.

- Consider if: Budget isn't a major constraint, and your application needs the power of a high-end GPU for optimal performance.

Conclusion

The choice between the Apple M1 68GB 7 Cores and the NVIDIA RTX6000Ada_48GB for running LLMs hinges on the specific needs of your project. The M1 is a more affordable option suitable for smaller models, while the RTX6000Ada_48GB excels at handling larger LLMs and delivering exceptional token generation speed, but at a premium price. Understanding your requirements and weighing the benefits and drawbacks of each device will help you make an informed decision.

FAQ:

What are LLMs?

Large Language Models (LLMs) are a type of artificial intelligence (AI) model trained on vast amounts of text data. They can understand and generate human-like text, enabling applications like chatbots, translation, and writing assistance.

What is Token Generation Speed?

Token generation speed is how quickly an LLM produces tokens (units of text such as words or word pieces), usually measured in tokens per second. It largely determines how fast a response appears once generation starts.
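As a rough illustration of what these numbers mean in practice, the sketch below converts tokens/second into the time needed to generate a reply. The 500-token reply length is an assumption chosen for illustration, and the speeds are the Llama 3 8B Q4_K_M results from the benchmark. Prompt processing time is ignored.

```python
def generation_time_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady tokens/second rate."""
    return n_tokens / tokens_per_second


REPLY_TOKENS = 500  # assumed reply length, for illustration only

# Llama 3 8B, Q4_K_M speeds from the benchmark tables.
for device, tps in [("Apple M1", 9.72), ("NVIDIA RTX6000Ada_48GB", 130.99)]:
    seconds = generation_time_seconds(REPLY_TOKENS, tps)
    print(f"{device}: {seconds:.1f} s for {REPLY_TOKENS} tokens")
```

Under these assumptions the same reply takes about 51 seconds on the M1 and under 4 seconds on the RTX.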

What is Quantization?

Quantization is a technique used for reducing the size of LLM models while maintaining reasonable accuracy. It involves representing the model's weights (parameters) with lower-precision numbers, which can significantly reduce memory requirements.
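The memory effect can be estimated with simple arithmetic: weight memory is roughly the parameter count times the bits stored per weight. The sketch below assumes nominal parameter counts (8 billion and 70 billion) and the per-weight sizes of llama.cpp-style block formats, where Q8_0 and Q4_0 store one 16-bit scale per block of 32 weights (8.5 and 4.5 bits per weight). It counts weights only and ignores the KV cache and runtime overhead, so real requirements are higher.

```python
# Bits stored per weight. Q8_0 and Q4_0 include a 16-bit scale shared
# by each block of 32 weights: (32 * 8 + 16) / 32 and (32 * 4 + 16) / 32.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Nominal parameter counts (assumption; actual counts differ slightly).
for name, n_params in [("8B", 8e9), ("70B", 70e9)]:
    for fmt, bits in BITS_PER_WEIGHT.items():
        print(f"{name} {fmt}: ~{weight_memory_gb(n_params, bits):.1f} GB")
```

By this estimate a 70B model at F16 needs about 140 GB for weights alone, well beyond 48GB of GPU memory, which is consistent with the missing F16 result for Llama 3 70B. At around 4.5 bits per weight the same model shrinks to roughly 39 GB.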

How do I choose the right device for my LLM project?

Consider these factors:

- Model size: If working with smaller LLM models, the Apple M1 might suffice. For larger models, a powerful GPU like the NVIDIA RTX6000Ada_48GB is recommended.

- Performance requirements: Do you need rapid token generation? A discrete GPU such as the RTX6000Ada_48GB will likely deliver better performance.

- Budget: The Apple M1 is more budget-friendly than high-end GPUs.

- Power consumption: The M1 draws far less power than a workstation GPU.

Keywords

LLMs, token generation speed, benchmark analysis, NVIDIA RTX6000Ada_48GB, Apple M1, Llama 2, Llama 3, quantization, GPU, CPU, performance, inference, model size, budget, power consumption, AI, machine learning.