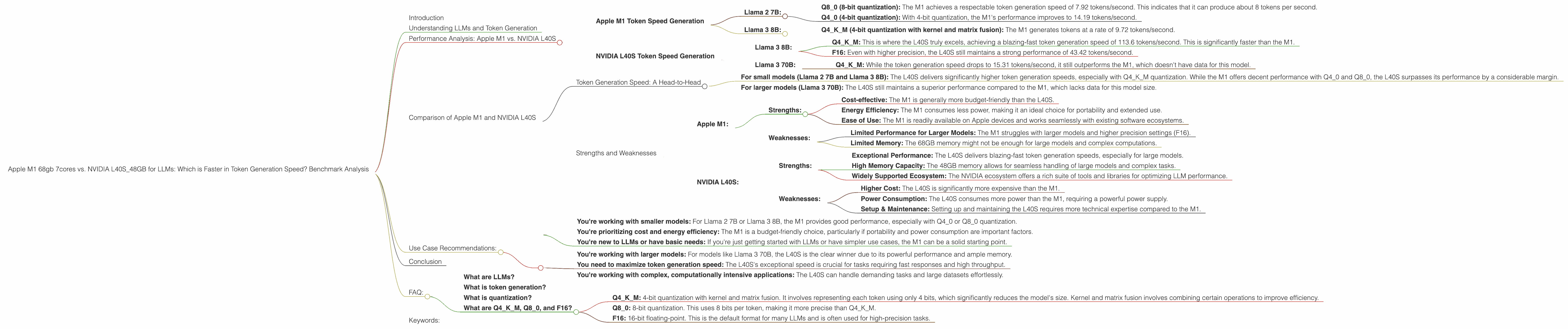

Apple M1 (68 GB/s, 7 GPU Cores) vs. NVIDIA L40S 48GB for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

In the world of large language models (LLMs), speed is king. Whether you're a developer fine-tuning models or a researcher pushing the boundaries of AI, the ability to generate tokens (the building blocks of text) quickly is crucial. This comparison looks at two popular devices running LLMs: the Apple M1 (the variant with 68 GB/s of memory bandwidth and 7 GPU cores) and the NVIDIA L40S 48GB. We'll analyze their strengths and weaknesses, focusing on token generation speed, and guide you towards the right device for your needs.

Understanding LLMs and Token Generation

LLMs are complex AI systems trained on massive datasets of text. They can generate human-like text, translate languages, answer your questions, and perform many more tasks. At the core of their operation is tokenization, where text is broken down into individual units called tokens. These tokens are then processed by the LLM to generate output.

Think of tokens as the "lego bricks" of language, allowing LLMs to understand and manipulate text. The faster a device can process these tokens, the faster your LLM can generate text, respond to prompts, and complete tasks.

Performance Analysis: Apple M1 vs. NVIDIA L40S

Apple M1 Token Generation Speed

The Apple M1 tested here, the variant with 7 GPU cores and 68 GB/s of memory bandwidth, is a capable entry-level contender in the LLM arena. However, its performance varies significantly depending on the model and the chosen quantization level (quantization stores the model's weights at lower precision, which shrinks the model and speeds up processing).

Llama 2 7B:

- Q8_0 (8-bit quantization): The M1 achieves a token generation speed of 7.92 tokens/second.

- Q4_0 (4-bit quantization): With 4-bit quantization, the M1's performance improves to 14.19 tokens/second, about 1.8 times its Q8_0 speed.

Llama 3 8B:

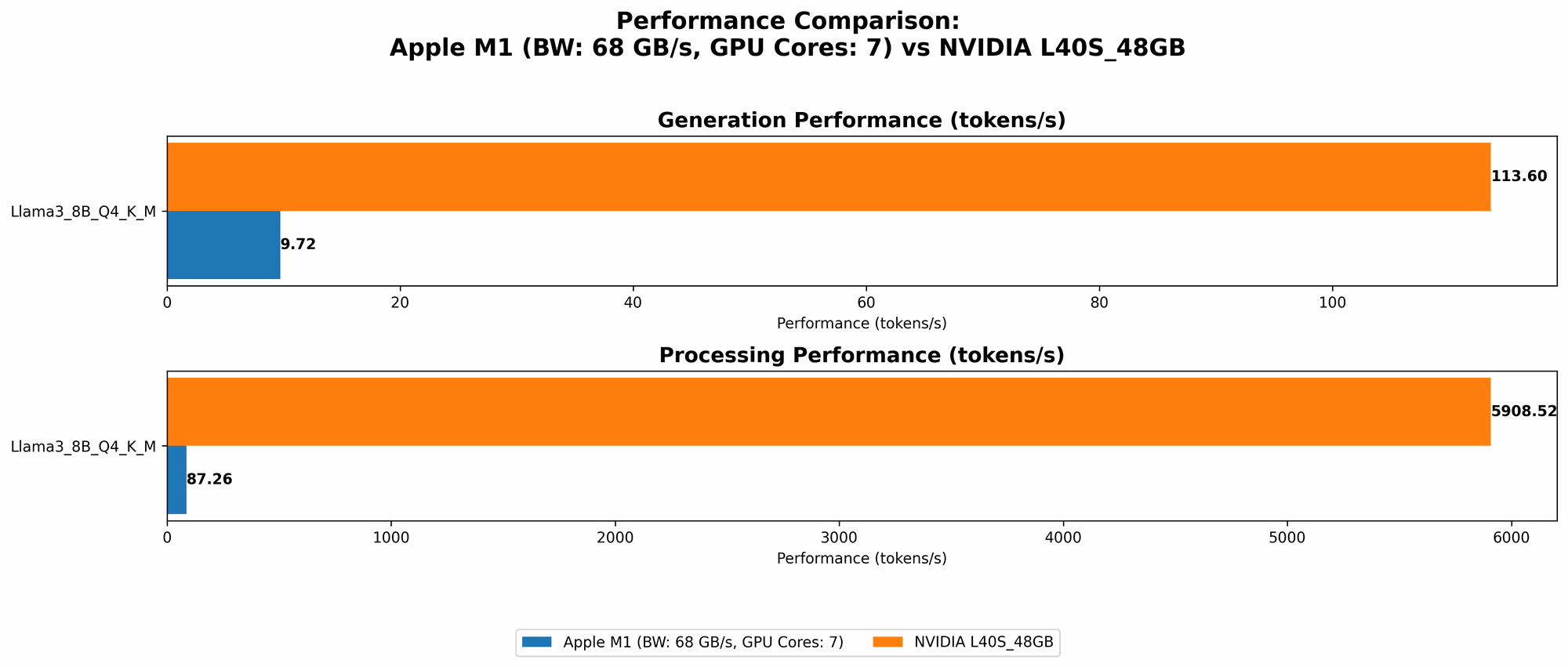

- Q4_K_M (4-bit k-quant, medium variant): The M1 generates tokens at a rate of 9.72 tokens/second.

Important: We do not have M1 data for Llama 2 7B or Llama 3 8B using F16 (16-bit floating-point), or for Llama 3 70B at any quantization level. Missing data is not proof that these configurations cannot run, but it is a sign that the M1 might not be the best choice for larger models or higher precision settings.
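To get a feel for why larger models and higher precision are harder to run, it helps to estimate how much memory the weights alone occupy. The sketch below is a back-of-the-envelope estimate, not a measurement: it uses nominal parameter counts (7, 8, and 70 billion), the `weight_size_gb` helper is a hypothetical name, and the bits-per-weight figure for Q4_K_M is an approximation assumed for illustration. It also ignores the KV cache and runtime overhead, so real memory use is higher.

```python
# Approximate bits per weight for each format. The Q4_0 and Q8_0 values follow
# from their block layout (32 weights plus one 16-bit scale per block); the
# Q4_K_M value is a rough average and is an assumption of this sketch.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,
    "Q4_0": 4.5,
    "Q4_K_M": 4.85,
}


def weight_size_gb(params_billions: float, fmt: str) -> float:
    """Estimated size of the weights alone, in gigabytes (10^9 bytes)."""
    total_bits = params_billions * 1e9 * BITS_PER_WEIGHT[fmt]
    return total_bits / 8 / 1e9


# Nominal parameter counts; the real models differ slightly.
MODELS = [("Llama 2 7B", 7.0), ("Llama 3 8B", 8.0), ("Llama 3 70B", 70.0)]

for name, params in MODELS:
    for fmt in BITS_PER_WEIGHT:
        print(f"{name:12s} {fmt:7s} ~{weight_size_gb(params, fmt):6.1f} GB")
```

Comparing these estimates with the memory available on a device gives a quick first check of whether a given model and quantization level can fit at all.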

NVIDIA L40S Token Generation Speed

The NVIDIA L40S, a powerful GPU with 48GB of memory, shines in handling larger LLM models. Let's delve into its performance for the Llama 3 models:

Llama 3 8B:

- Q4_K_M: This is where the L40S truly excels, achieving a blazing-fast token generation speed of 113.6 tokens/second. That is roughly 11.7 times the M1's 9.72 tokens/second on the same model and quantization.

- F16: Even with higher precision, the L40S still maintains a strong performance of 43.42 tokens/second.

Llama 3 70B:

- Q4_K_M: The token generation speed drops to 15.31 tokens/second, which is still usable for a model of this size. The M1 has no data for this model, so a direct comparison is not possible.

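Important: We lack data for Llama 3 70B at F16 on the L40S, which is a limitation of our current dataset. This gap is expected: at 16 bits per weight, a 70-billion-parameter model needs about 140GB for its weights alone, far more than the card's 48GB. It's worth noting that the L40S is designed for high-performance computing, making it a strong candidate for larger quantized models.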

Comparison of Apple M1 and NVIDIA L40S

Token Generation Speed: A Head-to-Head

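The L40S takes the crown for token generation speed. In the one configuration where both devices have data (Llama 3 8B with Q4_K_M), it is roughly 11.7 times faster than the M1, and every L40S result for an 8B model beats every M1 result we have.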

- For small models (Llama 2 7B and Llama 3 8B): On Llama 3 8B with Q4_K_M, the L40S reaches 113.6 tokens/second against the M1's 9.72. The M1's Llama 2 7B results with Q4_0 (14.19 tokens/second) and Q8_0 (7.92 tokens/second) are decent, but they remain well below the L40S figures for Llama 3 8B, including F16 (43.42 tokens/second). We have no L40S data for Llama 2 7B.

- For larger models (Llama 3 70B): The L40S runs the model with Q4_K_M at 15.31 tokens/second, while the M1 has no data for this model size.
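The head-to-head numbers are easier to interpret as speedup ratios and waiting times. The following sketch uses only the token generation speeds quoted in this article; the 500-token response length and the helper names `speedup` and `seconds_for` are assumptions chosen for illustration.

```python
# Token generation speeds (tokens/second) quoted in this article.
SPEEDS = {
    ("Apple M1", "Llama 2 7B", "Q8_0"): 7.92,
    ("Apple M1", "Llama 2 7B", "Q4_0"): 14.19,
    ("Apple M1", "Llama 3 8B", "Q4_K_M"): 9.72,
    ("NVIDIA L40S", "Llama 3 8B", "Q4_K_M"): 113.6,
    ("NVIDIA L40S", "Llama 3 8B", "F16"): 43.42,
    ("NVIDIA L40S", "Llama 3 70B", "Q4_K_M"): 15.31,
}


def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster the first speed is than the second."""
    return fast_tps / slow_tps


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate a response of the given length."""
    return tokens / tokens_per_second


# The only configuration where both devices have data.
m1 = SPEEDS[("Apple M1", "Llama 3 8B", "Q4_K_M")]
l40s = SPEEDS[("NVIDIA L40S", "Llama 3 8B", "Q4_K_M")]
print(f"L40S vs. M1 on Llama 3 8B Q4_K_M: {speedup(l40s, m1):.1f}x")

RESPONSE_TOKENS = 500  # hypothetical response length
for (device, model, fmt), tps in SPEEDS.items():
    wait = seconds_for(RESPONSE_TOKENS, tps)
    print(f"{device:12s} {model:12s} {fmt:7s} {wait:6.1f} s")
```

For a 500-token answer on Llama 3 8B with Q4_K_M, this works out to under 5 seconds on the L40S versus more than 50 seconds on the M1.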

Analogy: Think of it like comparing a sports car to a family sedan. While both can get you from A to B, the sports car (L40S) is built for speed and agility on the highway (handling large LLMs), while the sedan (M1) excels in city driving (small LLMs) but struggles on longer journeys.

Strengths and Weaknesses

Apple M1:

- Strengths:

- Cost-effective: The M1 is generally more budget-friendly than the L40S.

- Energy Efficiency: The M1 consumes less power, making it an ideal choice for portability and extended use.

- Ease of Use: The M1 is readily available on Apple devices and works seamlessly with existing software ecosystems.

- Weaknesses:

- Limited Performance for Larger Models: The M1 struggles with larger models and higher precision settings (F16).

- Limited Memory: The base M1 is available with at most 16GB of unified memory, shared between the CPU and GPU, which is not enough for large models and complex computations.

NVIDIA L40S:

- Strengths:

- Exceptional Performance: The L40S delivers blazing-fast token generation speeds, especially for large models.

- High Memory Capacity: The 48GB memory allows for seamless handling of large models and complex tasks.

- Widely Supported Ecosystem: The NVIDIA ecosystem offers a rich suite of tools and libraries for optimizing LLM performance.

- Weaknesses:

- Higher Cost: The L40S is significantly more expensive than the M1.

- Power Consumption: The L40S consumes more power than the M1, requiring a powerful power supply.

- Setup & Maintenance: Setting up and maintaining the L40S requires more technical expertise compared to the M1.

Use Case Recommendations:

Choose the Apple M1 if:

- You're working with smaller models: For Llama 2 7B or Llama 3 8B, the M1 provides good performance, especially with Q4_0 or Q8_0 quantization.

- You're prioritizing cost and energy efficiency: The M1 is a budget-friendly choice, particularly if portability and power consumption are important factors.

- You're new to LLMs or have basic needs: If you're just getting started with LLMs or have simpler use cases, the M1 can be a solid starting point.

Choose the NVIDIA L40S if:

- You're working with larger models: For models like Llama 3 70B, the L40S is the clear winner due to its powerful performance and ample memory.

- You need to maximize token generation speed: The L40S's exceptional speed is crucial for tasks requiring fast responses and high throughput.

- You're working with complex, computationally intensive applications: The L40S can handle demanding tasks and large datasets effortlessly.

Conclusion

The choice between the Apple M1 and the NVIDIA L40S ultimately depends on your specific needs. The M1 is a good option for smaller models and budget-conscious developers. However, if you need to work with larger models and prioritize speed, the NVIDIA L40S is the way to go.

FAQ:

What are LLMs?

LLMs are advanced AI systems that can understand and generate human-like text. Imagine a super-intelligent chatbot that can answer your questions, write stories, translate languages, and more. These capabilities are powered by their ability to process vast amounts of text data.

What is token generation?

Token generation is the process by which an LLM produces its output one token at a time, with each new token predicted from the prompt and the tokens generated so far. It builds on tokenization, the step that breaks text into individual units called tokens. Tokens are the building blocks of language for an LLM; a token is often a word or a piece of a word. The faster a device can generate tokens, the faster your LLM can respond.
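A throughput figure such as tokens/second can be obtained by counting tokens over a time interval. The sketch below shows the idea with a stand-in generator; `fake_model` and `tokens_per_second` are hypothetical names, and the fixed delay merely simulates a model. It is not how the benchmark figures in this article were collected.

```python
import time
from typing import Callable, Iterable, Iterator


def tokens_per_second(
    token_stream: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Consume a stream of generated tokens and return the average rate."""
    start = clock()
    count = 0
    for _ in token_stream:
        count += 1
    elapsed = clock() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_model(n_tokens: int, delay_s: float) -> Iterator[str]:
    """Hypothetical stand-in for an LLM: yields one token after each delay."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


rate = tokens_per_second(fake_model(n_tokens=20, delay_s=0.01))
print(f"{rate:.1f} tokens/second")
```

Passing the clock as a parameter keeps the measurement logic separate from the timer, which makes it easy to check with fixed timestamps.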

What is quantization?

Quantization is a technique used to reduce the size of an LLM model without significantly impacting its accuracy. It's like compressing a file to make it smaller, but it still contains essentially the same information. This reduction in size allows for faster processing and the ability to run models on devices with limited memory.
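The idea can be illustrated with a deliberately simplified scheme: store one shared scale per block of weights plus a small integer code per weight. The sketch below is an assumption-laden toy, not the exact layout of any real format; the function names and the sample weights are made up for illustration.

```python
from typing import List, Tuple


def quantize_block(weights: List[float], bits: int) -> Tuple[float, List[int]]:
    """Symmetric quantization: one shared scale plus one small integer per weight."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit, 127 for 8-bit
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Recover approximate weights from the scale and integer codes."""
    return [scale * c for c in codes]


def max_error(original: List[float], restored: List[float]) -> float:
    """Largest absolute difference between original and restored weights."""
    return max(abs(a - b) for a, b in zip(original, restored))


block = [0.12, -0.53, 0.97, -0.08, 0.31, -0.76, 0.44, 0.05]  # made-up weights

for bits in (8, 4):
    scale, codes = quantize_block(block, bits)
    restored = dequantize_block(scale, codes)
    print(f"{bits}-bit codes: {codes}  max error: {max_error(block, restored):.4f}")
```

Fewer bits mean a coarser grid of representable values, so the 4-bit version is smaller but reproduces the original weights less precisely than the 8-bit version.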

What are Q4_K_M, Q8_0, and F16?

These are specific quantization formats used for LLMs:

- Q4_K_M: A 4-bit "k-quant" format. It stores most model weights using roughly 4 bits each, which significantly reduces the model's size. The M suffix denotes the medium variant, which keeps some of the more sensitive tensors at higher precision.

- Q8_0: 8-bit quantization. This uses about 8 bits per weight, making it more precise than Q4_K_M at the cost of a larger model.

- F16: 16-bit floating-point. This is the default format for many LLMs and is often used for high-precision tasks.

Keywords:

LLM, Apple M1, NVIDIA L40S, token generation speed, Llama 2, Llama 3, Q4_K_M, Q8_0, F16, quantization, performance comparison, benchmark analysis, LLM inference, AI, machine learning, natural language processing, developer, researcher, developer tools, GPU, CPU, memory, cost, energy efficiency, use case recommendations.