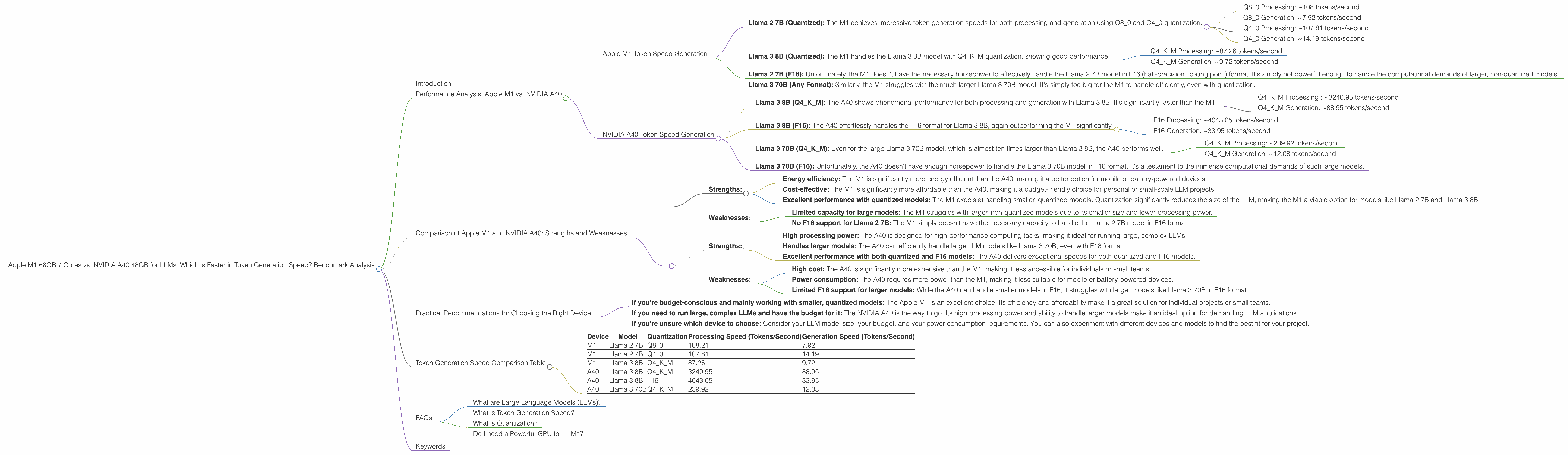

Apple M1 (68 GB/s, 7 GPU Cores) vs. NVIDIA A40 48GB for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models and applications emerging every day. These powerful AI models are capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. But to harness the power of these LLMs, you need the right hardware.

This article looks at local LLM deployment on two very different devices: the Apple M1 (the 7-core GPU variant, with roughly 68 GB/s of memory bandwidth) and the NVIDIA A40 GPU with 48GB of memory. We'll compare their prompt processing and token generation speeds across several Llama models and quantization formats, using published benchmark numbers. The goal is to help developers and enthusiasts choose the device that fits their needs.

Performance Analysis: Apple M1 vs. NVIDIA A40

Apple M1 Token Speed Generation

The Apple M1 is a capable chip that delivers strong performance for its size and power draw. In this comparison, we're looking at the M1 variant with a 7-core GPU and roughly 68 GB/s of memory bandwidth. While the M1 is known for its efficiency, it isn't specifically designed for high-performance computing tasks like running large language models.

The Apple M1's strength lies in its ability to handle quantized models efficiently. Quantization is a technique that reduces the size of an LLM by using smaller data types, resulting in faster inference speeds and lower memory consumption. This makes the M1 a great choice for running smaller LLM models like Llama 2 7B or Llama 3 8B.
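To make the idea concrete, here is a minimal sketch of block-wise symmetric quantization in plain Python. It is a simplified illustration, not the exact layout of the Q8_0 or Q4_0 formats benchmarked here; the function names and the sample weights are made up for this example.

```python
def quantize_block(values, bits=8):
    """Store a block of floats as one float scale plus small signed integers."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(v) for v in values)      # largest magnitude in the block
    scale = amax / qmax if amax > 0 else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the scale and the integers."""
    return [q * scale for q in quants]


def max_error(values, bits):
    """Worst-case absolute error after a quantize/dequantize round trip."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(v - r) for v, r in zip(values, restored))


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

err8 = max_error(weights, bits=8)
err4 = max_error(weights, bits=4)
print(f"8-bit max error: {err8:.4f}")
print(f"4-bit max error: {err4:.4f}")
```

The 4-bit version needs half the storage per weight but shows a larger round-trip error, which is the trade-off behind choosing between formats such as Q8_0 and Q4_0.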

Here's a breakdown of the M1's performance for different LLM models:

Llama 2 7B (Quantized): The M1 runs this model with both Q8_0 and Q4_0 quantization. Prompt processing speed is nearly identical for the two formats, while Q4_0 generates tokens about 1.8 times faster than Q8_0.

- Q8_0 Processing: ~108.21 tokens/second

- Q8_0 Generation: ~7.92 tokens/second

- Q4_0 Processing: ~107.81 tokens/second

- Q4_0 Generation: ~14.19 tokens/second

Llama 3 8B (Quantized): The M1 also runs Llama 3 8B with Q4_K_M quantization, at somewhat lower speeds than Llama 2 7B with Q4_0.

- Q4_K_M Processing: ~87.26 tokens/second

- Q4_K_M Generation: ~9.72 tokens/second

Llama 2 7B (F16): The benchmark data contains no F16 (half-precision floating point) result for this M1 configuration. The likely reason is memory rather than raw compute: at 16 bits per weight, a 7B model needs about 14 GB for its weights alone, which is at or beyond what a base M1's 8 GB or 16 GB of unified memory can hold alongside the operating system.

Llama 3 70B (Any Format): Similarly, there are no M1 results for the much larger Llama 3 70B model. Even quantized to around 4 bits per weight, its weights run to roughly 40 GB, far more than the M1's unified memory.

NVIDIA A40 Token Speed Generation

The NVIDIA A40 is a high-performance GPU specifically designed for demanding workloads like deep learning and AI inference. It excels in handling large LLM models but comes at a higher cost compared to the Apple M1.

The A40 with 48GB of memory can handle both larger and smaller LLMs with varying degrees of efficiency. Let's look at its performance:

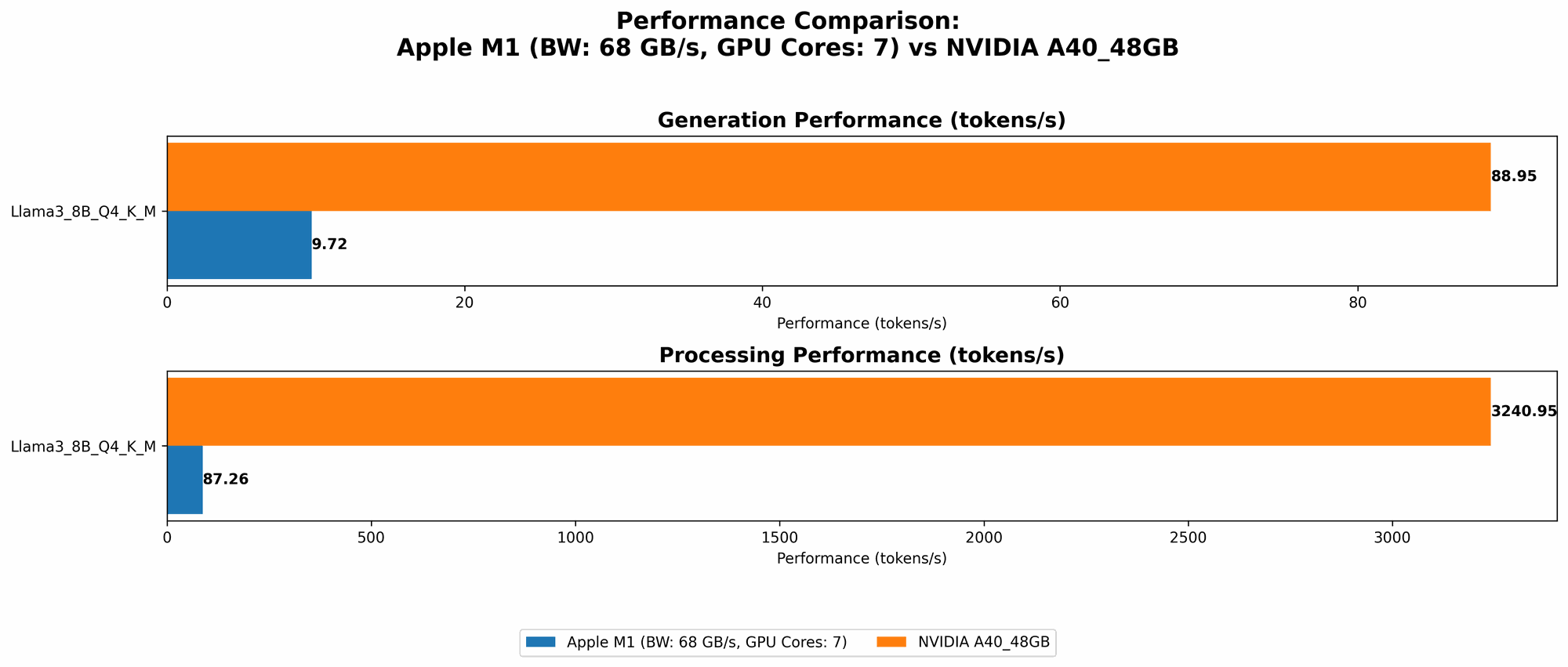

Llama 3 8B (Q4_K_M): On the same model and quantization, the A40 is about 37 times faster than the M1 at prompt processing and about 9 times faster at generation.

- Q4_K_M Processing: ~3240.95 tokens/second

- Q4_K_M Generation: ~88.95 tokens/second

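Llama 3 8B (F16): The A40 also runs Llama 3 8B in F16, a format for which there is no M1 result to compare against. Prompt processing is faster than with Q4_K_M, but generation drops to about 34 tokens/second, likely because each generated token requires reading roughly three times as many bytes of weights.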

- F16 Processing: ~4043.05 tokens/second

- F16 Generation: ~33.95 tokens/second

Llama 3 70B (Q4_K_M): Even for the large Llama 3 70B model, which has almost nine times as many parameters as Llama 3 8B, the A40 still generates about 12 tokens/second.

- Q4_K_M Processing: ~239.92 tokens/second

- Q4_K_M Generation: ~12.08 tokens/second

Llama 3 70B (F16): There is no A40 result for Llama 3 70B in F16. The limit here is memory, not compute: at 2 bytes per weight, 70 billion parameters need about 140 GB for the weights alone, nearly three times the A40's 48 GB.
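The memory arithmetic behind these results can be sketched in a few lines. This is a rough estimate under stated assumptions: it uses the nominal parameter counts (8B and 70B), counts weights only (no KV cache or activations), and treats Q4_K_M as roughly 4.8 bits per weight, which is an approximation rather than an exact figure.

```python
A40_MEMORY_GB = 48  # from the device name; all other figures are assumptions


def weight_memory_gb(n_params, bits_per_weight):
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(n_params, bits_per_weight, memory_gb=A40_MEMORY_GB):
    """True if the weights alone fit in the given amount of memory."""
    return weight_memory_gb(n_params, bits_per_weight) <= memory_gb


# (label, nominal parameter count, assumed bits per weight)
configs = [
    ("Llama 3 8B  F16", 8e9, 16),
    ("Llama 3 8B  Q4_K_M", 8e9, 4.8),   # ~4.8 bpw is an approximation
    ("Llama 3 70B Q4_K_M", 70e9, 4.8),
    ("Llama 3 70B F16", 70e9, 16),
]

for label, n_params, bits in configs:
    size = weight_memory_gb(n_params, bits)
    verdict = "fits" if fits(n_params, bits) else "does not fit"
    print(f"{label:<20} ~{size:6.1f} GB  {verdict} in {A40_MEMORY_GB} GB")
```

Under these assumptions, only the 70B F16 configuration exceeds 48 GB, which matches the one combination missing from the A40 results.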

Comparison of Apple M1 and NVIDIA A40: Strengths and Weaknesses

Here's a breakdown of the strengths and weaknesses of each device:

Apple M1:

Strengths:

- Energy efficiency: The M1 is significantly more energy efficient than the A40, making it a better option for mobile or battery-powered devices.

- Cost-effective: The M1 is significantly more affordable than the A40, making it a budget-friendly choice for personal or small-scale LLM projects.

- Excellent performance with quantized models: The M1 excels at handling smaller, quantized models. Quantization significantly reduces the size of the LLM, making the M1 a viable option for models like Llama 2 7B and Llama 3 8B.

Weaknesses:

- Limited capacity for large models: The M1 struggles with larger, non-quantized models because of its limited unified memory and lower throughput.

- No F16 result for Llama 2 7B: The benchmark data has no F16 number for Llama 2 7B on the M1, most likely because the roughly 14 GB of weights do not fit comfortably in its memory.

NVIDIA A40:

Strengths:

- High processing power: The A40 is designed for high-performance computing tasks, making it ideal for running large, complex LLMs.

- Handles larger models: The A40 can run large models like Llama 3 70B when they are quantized (for example with Q4_K_M) so that the weights fit in its 48 GB of memory.

- Excellent performance with both quantized and F16 models: The A40 delivers exceptional speeds for both quantized and F16 models.

Weaknesses:

- High cost: The A40 is significantly more expensive than the M1, making it less accessible for individuals or small teams.

- Power consumption: The A40 requires more power than the M1, making it less suitable for mobile or battery-powered devices.

- Limited F16 support for larger models: While the A40 handles smaller models in F16, Llama 3 70B in F16 needs about 140 GB for its weights and does not fit in 48 GB.

Practical Recommendations for Choosing the Right Device

Choosing the right device for your LLM project depends on your specific needs and budget:

If you're budget-conscious and mainly working with smaller, quantized models: The Apple M1 is an excellent choice. Its efficiency and affordability make it a great solution for individual projects or small teams.

If you need to run large, complex LLMs and have the budget for it: The NVIDIA A40 is the way to go. Its high processing power and ability to handle larger models make it an ideal option for demanding LLM applications.

If you're unsure which device to choose: Consider your LLM model size, your budget, and your power consumption requirements. You can also experiment with different devices and models to find the best fit for your project.

Token Generation Speed Comparison Table

| Device | Model | Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|---|---|
| M1 | Llama 2 7B | Q8_0 | 108.21 | 7.92 |
| M1 | Llama 2 7B | Q4_0 | 107.81 | 14.19 |
| M1 | Llama 3 8B | Q4_K_M | 87.26 | 9.72 |
| A40 | Llama 3 8B | Q4_K_M | 3240.95 | 88.95 |
| A40 | Llama 3 8B | F16 | 4043.05 | 33.95 |
| A40 | Llama 3 70B | Q4_K_M | 239.92 | 12.08 |

Note: The table covers only the models, quantization formats, and devices that were tested. Actual performance can vary with factors such as batch size, prompt length, model architecture, and the software stack used for inference.
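Only one configuration, Llama 3 8B with 4-bit K-quant (Q4_K_M) quantization, was measured on both devices, so that is the only row pair that supports a direct comparison. A small sketch of that calculation, using the numbers from the table (the dictionary layout and names are just one way to organize them):

```python
# (processing tokens/s, generation tokens/s) for Llama 3 8B, Q4_K_M,
# copied from the comparison table.
results = {
    "M1": (87.26, 9.72),
    "A40": (3240.95, 88.95),
}


def speedup(fast, slow):
    """Ratio of two throughput figures measured in the same unit."""
    return fast / slow


m1_pp, m1_tg = results["M1"]
a40_pp, a40_tg = results["A40"]

pp_ratio = speedup(a40_pp, m1_pp)
tg_ratio = speedup(a40_tg, m1_tg)

print(f"Prompt processing: A40 is {pp_ratio:.1f}x the M1")
print(f"Token generation:  A40 is {tg_ratio:.1f}x the M1")
```

The gap is much wider for prompt processing than for generation, so how much the A40 helps in practice depends on whether a workload is dominated by long prompts or by long outputs.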

FAQs

What are Large Language Models (LLMs)?

LLMs are a type of artificial intelligence trained on massive amounts of text data. They are capable of understanding and generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. Examples of well-known LLMs include ChatGPT, Bard, and GPT-3.

What is Token Generation Speed?

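Token generation speed is the rate at which an LLM produces output text, measured in tokens per second. A token is a basic unit of text in an LLM, often representing a word or part of a word. The benchmarks in this article report two figures: processing speed, which is how fast the model reads the input prompt, and generation speed, which is how fast it produces new tokens one at a time.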
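To see what these two figures mean for a real request, here is a rough latency estimate. It assumes a hypothetical workload of 512 prompt tokens and 128 output tokens, and it ignores model load time and any other overhead; the speeds are the Llama 3 8B results from this article.

```python
def estimate_latency_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Approximate response time: read the prompt, then generate the output."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# Hypothetical workload, chosen only for illustration.
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 128

# Llama 3 8B with 4-bit K-quant quantization, speeds in tokens/second.
m1_latency = estimate_latency_s(PROMPT_TOKENS, OUTPUT_TOKENS, 87.26, 9.72)
a40_latency = estimate_latency_s(PROMPT_TOKENS, OUTPUT_TOKENS, 3240.95, 88.95)

print(f"M1:  ~{m1_latency:.1f} s")
print(f"A40: ~{a40_latency:.1f} s")
```

For this workload, most of the time on both devices goes to generation rather than prompt processing, which is why generation speed is usually the number to watch for chat-style use.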

What is Quantization?

Quantization is a technique used to reduce the size of an LLM by storing its weights in smaller data types, for example 8 or 4 bits instead of 16. This shrinks the memory footprint of the model and usually speeds up token generation, mainly because less data has to be read from memory for every token. It's like measuring with a coarser ruler: you get slightly less precise results, but you can work much faster.
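One way to see why smaller weights speed up generation is a common rule of thumb: generating a token requires reading essentially all the weights once, so memory bandwidth divided by model size gives a rough upper bound on tokens per second. The sketch below applies it to the M1 results, assuming a memory bandwidth of about 68 GB/s, a nominal 7 billion parameters, and 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 (each block of 32 weights carries a 16-bit scale). These are assumptions for illustration, and the bound ignores compute and other overheads.

```python
M1_BANDWIDTH_GB_S = 68.0   # assumed memory bandwidth of the benchmarked M1
N_PARAMS = 7e9             # nominal parameter count for Llama 2 7B


def bandwidth_bound_tps(bandwidth_gb_s, n_params, bits_per_weight):
    """Upper bound on tokens/s if every token reads all weights once."""
    model_gb = n_params * bits_per_weight / 8 / 1e9
    return bandwidth_gb_s / model_gb


# format -> (assumed bits per weight, measured generation speed from this article)
formats = {
    "Q8_0": (8.5, 7.92),
    "Q4_0": (4.5, 14.19),
}

bounds = {}
for name, (bits, measured) in formats.items():
    bounds[name] = bandwidth_bound_tps(M1_BANDWIDTH_GB_S, N_PARAMS, bits)
    print(f"{name}: bound ~{bounds[name]:.1f} tokens/s, measured {measured} tokens/s")
```

Under these assumptions, the measured speeds sit a little below the bound for both formats, and halving the bits per weight roughly doubles both the bound and the measured result.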

Do I need a Powerful GPU for LLMs?

While a powerful GPU like the A40 is beneficial for running large LLMs, it's not always necessary. If you're working with smaller, quantized models, a device like the M1 can be sufficient. The key is to consider your specific LLM model size and your budget.

Keywords

Apple M1, NVIDIA A40, LLM, Large Language Model, Token Generation Speed, Performance, Benchmark, Quantization, F16, Llama 2, Llama 3, GPU, CPU, Deep Learning