Apple M1 (68 GB/s, 7 GPU Cores) vs. NVIDIA A100 SXM 80GB for LLMs: Which Is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is booming. These powerful AI systems are revolutionizing how we interact with computers, from generating creative text to translating languages and writing code. But running these models requires capable hardware. This article dives into the performance differences between two popular devices for running LLMs: the Apple M1 (7-core GPU, 68 GB/s memory bandwidth) and the NVIDIA A100 SXM 80GB. We'll analyze their token generation speed across several Llama models and shed light on which device reigns supreme.

Apple M1 Token Generation Speed

The Apple M1 tested here is the variant with a 7-core GPU and 68 GB/s of memory bandwidth. It's known for its efficiency and performance, making it a popular choice for developers who want to run LLMs locally.

Llama 2 7B Performance

Let's start with the Llama 2 7B model. With Q8_0 quantization, the M1 processes prompts at 108.21 tokens per second and generates 7.92 tokens per second. Switching to Q4_0 leaves processing speed essentially unchanged (107.81 tokens per second) but nearly doubles generation speed, to 14.19 tokens per second.
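A common rule of thumb explains why fewer bits per weight nearly double generation speed but barely change processing speed. Generating each token requires reading every weight from memory once, so generation is largely limited by memory bandwidth. Prompt processing, by contrast, handles many tokens per weight read and is limited more by compute. The sketch below estimates that bandwidth ceiling for the M1's 68 GB/s. It assumes a Llama 2 7B parameter count of about 6.74 billion and treats every weight as stored in the named format. This ignores any tensors kept at other precisions, so the result is a rough upper bound, not a prediction.

```python
# Rough bandwidth ceiling for token generation (a rule-of-thumb sketch, not a benchmark).

# llama.cpp-style block layouts: each block of 32 weights stores the quantized values
# plus one 16-bit scale. Q8_0 = 32 bytes + 2 bytes, Q4_0 = 16 bytes + 2 bytes.
BITS_PER_WEIGHT = {
    "Q8_0": (32 + 2) * 8 / 32,  # 8.5 bits per weight
    "Q4_0": (16 + 2) * 8 / 32,  # 4.5 bits per weight
}

LLAMA2_7B_PARAMS = 6.74e9  # assumption: approximate parameter count
M1_BANDWIDTH_GBPS = 68.0   # memory bandwidth of the M1 tested here, GB/s

# Measured M1 generation speeds from this article (tokens/s).
MEASURED_TG = {"Q8_0": 7.92, "Q4_0": 14.19}


def weight_bytes(params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in bytes."""
    return params * bits_per_weight / 8


def generation_ceiling(bandwidth_gbps: float, model_bytes: float) -> float:
    """Tokens/s if every generated token streams all weights from memory exactly once."""
    return bandwidth_gbps * 1e9 / model_bytes


if __name__ == "__main__":
    for quant, bpw in BITS_PER_WEIGHT.items():
        size = weight_bytes(LLAMA2_7B_PARAMS, bpw)
        ceiling = generation_ceiling(M1_BANDWIDTH_GBPS, size)
        print(f"{quant}: ~{size / 1e9:.2f} GB of weights, "
              f"ceiling ~{ceiling:.1f} tok/s, measured {MEASURED_TG[quant]} tok/s")
```

The script prints ceilings of about 9.5 tokens/s (Q8_0) and 17.9 tokens/s (Q4_0). Both measured speeds sit below their ceilings. The ratio of the ceilings (8.5 / 4.5 ≈ 1.89) is also close to the measured ratio (14.19 / 7.92 ≈ 1.79). Both observations are consistent with generation on the M1 being largely bandwidth-bound.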

Llama 3 8B Performance

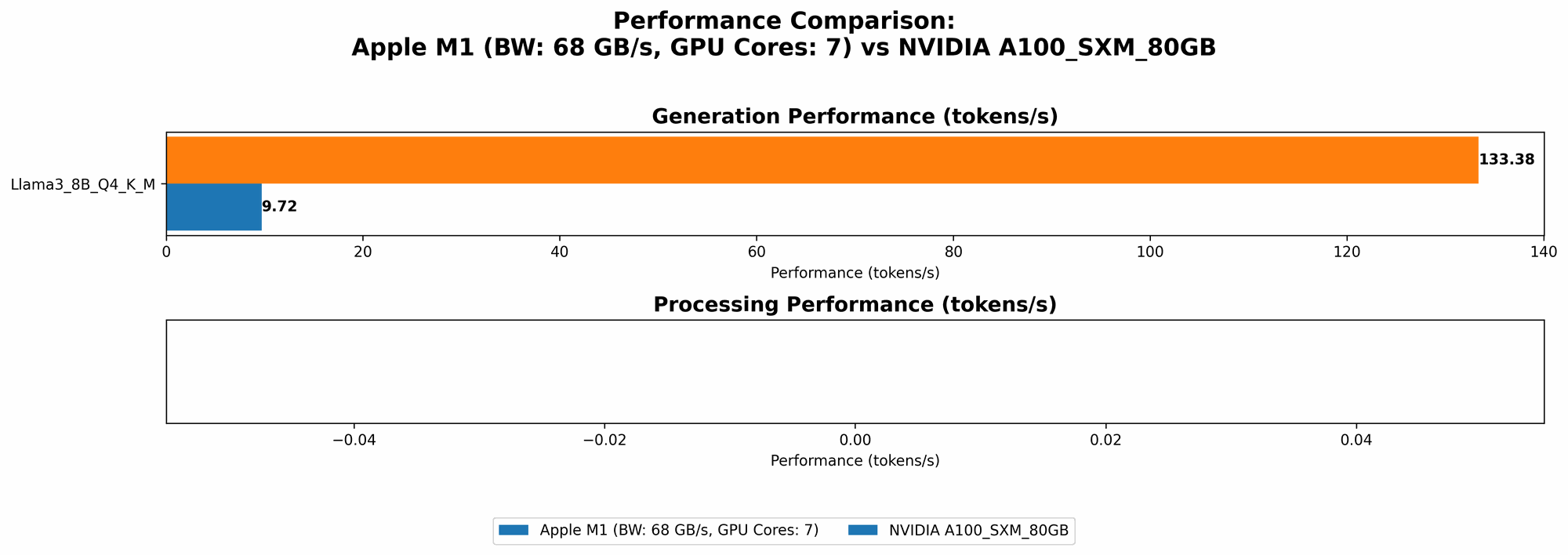

Llama 3 8B runs a little slower on the M1. With Q4_K_M quantization, it processes 87.26 tokens per second and generates 9.72 tokens per second.

Llama 3 70B Performance

The M1 is not a match for larger models like Llama 3 70B. Even quantized to about 4 bits per weight, the model needs roughly 40 GB for its weights alone. That is far more than the M1's maximum of 16 GB of unified memory, so there are no results for this configuration.
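A quick memory estimate shows why. The sketch below compares approximate weight sizes with device memory, under several assumptions:
- The parameter counts are approximate.
- The ~4.9 bits per weight for Q4_K_M is an assumed average, because the format mixes block types.
- 16 GB is the largest unified-memory option for the M1.
- `overhead_gb` is a hypothetical allowance for the KV cache and runtime buffers.

```python
# Back-of-the-envelope check: do a model's weights fit in device memory?

PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}  # approximate parameter counts
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.9}         # Q4_K_M: assumed average
MEMORY_GB = {"M1 (16 GB max)": 16.0, "A100 SXM 80GB": 80.0}


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def fits(params: float, bits_per_weight: float, memory_gb: float,
         overhead_gb: float = 2.0) -> bool:
    """True if the weights plus a hypothetical fixed overhead fit in memory."""
    return weights_gb(params, bits_per_weight) + overhead_gb <= memory_gb


if __name__ == "__main__":
    for model, params in PARAMS.items():
        for quant, bpw in BITS_PER_WEIGHT.items():
            size = weights_gb(params, bpw)
            verdict = ", ".join(
                f"{device}: {'fits' if fits(params, bpw, mem) else 'too large'}"
                for device, mem in MEMORY_GB.items()
            )
            print(f"{model} {quant}: ~{size:.1f} GB -> {verdict}")
```

Under these assumptions, Llama 3 70B at Q4_K_M needs about 43 GB for its weights. That is far beyond 16 GB but within 80 GB. At F16 (about 141 GB), it would exceed even a single 80 GB A100.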

NVIDIA A100 SXM 80GB Token Generation Speed

The NVIDIA A100 SXM 80GB is a beast! A top-tier GPU designed for high-performance computing, it's a favorite in the AI world. Let's see how it stacks up against the M1.

Llama 3 8B Performance

The A100 shines here, delivering outstanding performance on Llama 3 8B. With Q4_K_M quantization, it generates a remarkable 133.38 tokens per second. Even at unquantized F16 precision, it generates 53.18 tokens per second, still well ahead of the M1's quantized results.

Llama 3 70B Performance

The A100's 80 GB of memory and its processing power suit larger models well. It runs Llama 3 70B at 24.33 tokens per second with Q4_K_M quantization.

Comparison of Apple M1 and NVIDIA A100 SXM 80GB

Performance Comparison

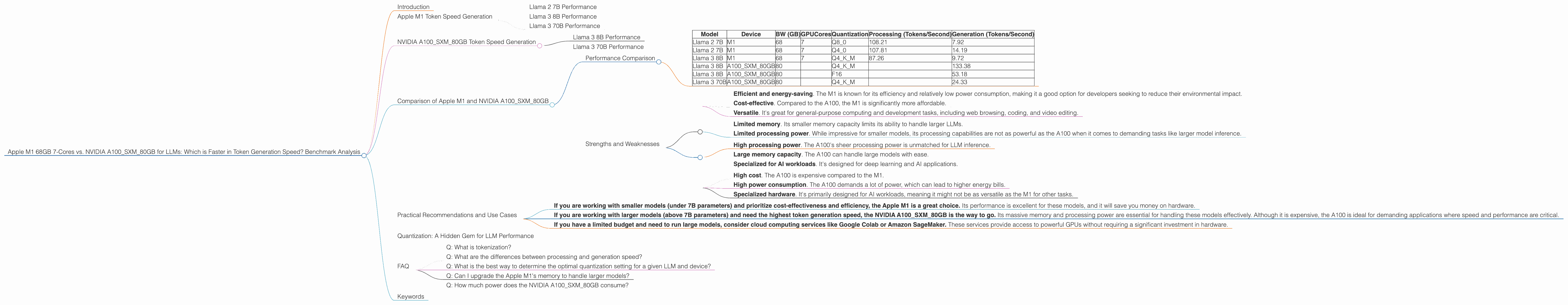

Let's summarize the key performance insights in a table:

| Model | Device | Memory Bandwidth (GB/s) | GPU Cores | Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|---|---|---|---|
| Llama 2 7B | M1 | 68 | 7 | Q8_0 | 108.21 | 7.92 |
| Llama 2 7B | M1 | 68 | 7 | Q4_0 | 107.81 | 14.19 |
| Llama 3 8B | M1 | 68 | 7 | Q4_K_M | 87.26 | 9.72 |
| Llama 3 8B | A100 SXM 80GB | 2039 | n/a | Q4_K_M | n/a | 133.38 |
| Llama 3 8B | A100 SXM 80GB | 2039 | n/a | F16 | n/a | 53.18 |
| Llama 3 70B | A100 SXM 80GB | 2039 | n/a | Q4_K_M | n/a | 24.33 |
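The headline ratios can be computed directly from the table. The sketch below stores the rows as plain Python data and divides generation speeds. `None` marks processing values the article does not report for the A100.

```python
# Generation-speed ratios computed from the comparison table in this article.

RESULTS = [
    # (model, device, quantization, processing tok/s, generation tok/s)
    ("Llama 2 7B", "M1", "Q8_0", 108.21, 7.92),
    ("Llama 2 7B", "M1", "Q4_0", 107.81, 14.19),
    ("Llama 3 8B", "M1", "Q4_K_M", 87.26, 9.72),
    ("Llama 3 8B", "A100 SXM 80GB", "Q4_K_M", None, 133.38),
    ("Llama 3 8B", "A100 SXM 80GB", "F16", None, 53.18),
    ("Llama 3 70B", "A100 SXM 80GB", "Q4_K_M", None, 24.33),
]


def generation_speed(model: str, device: str, quant: str) -> float:
    """Look up the generation speed (tokens/s) for one table row."""
    for m, d, q, _pp, tg in RESULTS:
        if (m, d, q) == (model, device, quant):
            return tg
    raise KeyError(f"no result for {model} / {device} / {quant}")


def speedup(a: tuple, b: tuple) -> float:
    """How many times faster configuration a generates tokens than configuration b."""
    return generation_speed(*a) / generation_speed(*b)


if __name__ == "__main__":
    a100_vs_m1 = speedup(("Llama 3 8B", "A100 SXM 80GB", "Q4_K_M"),
                         ("Llama 3 8B", "M1", "Q4_K_M"))
    q4_vs_f16 = speedup(("Llama 3 8B", "A100 SXM 80GB", "Q4_K_M"),
                        ("Llama 3 8B", "A100 SXM 80GB", "F16"))
    q4_vs_q8 = speedup(("Llama 2 7B", "M1", "Q4_0"),
                       ("Llama 2 7B", "M1", "Q8_0"))
    print(f"A100 vs M1, Llama 3 8B Q4_K_M: {a100_vs_m1:.1f}x")
    print(f"A100 Q4_K_M vs F16, Llama 3 8B: {q4_vs_f16:.1f}x")
    print(f"M1 Q4_0 vs Q8_0, Llama 2 7B: {q4_vs_q8:.1f}x")
```

This prints roughly 13.7x, 2.5x and 1.8x.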

Key Takeaways:

- The A100 SXM 80GB is the clear winner for token generation speed. On Llama 3 8B with Q4_K_M, it generates 133.38 tokens per second versus the M1's 9.72, roughly 14 times faster. It is also the only one of the two that can run Llama 3 70B.

- Smaller models like Llama 2 7B are well suited to the M1. With quantization, it reaches usable speeds: up to 14.19 tokens per second for generation with Q4_0.

- The A100 SXM 80GB is the better choice for larger models. Its 80 GB of memory and high processing throughput let it handle these models efficiently.

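- Quantization plays a vital role in boosting performance. On the M1, moving Llama 2 7B from Q8_0 to Q4_0 nearly doubles generation speed (7.92 to 14.19 tokens per second). On the A100, Llama 3 8B at Q4_K_M generates about 2.5 times faster than at F16 (133.38 vs. 53.18 tokens per second).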

Strengths and Weaknesses

Apple M1:

Strengths:

- Efficient and energy-saving. The M1 is known for its efficiency and relatively low power consumption, making it a good option for developers seeking to reduce their environmental impact.

- Cost-effective. Compared to the A100, the M1 is significantly more affordable.

- Versatile. It's great for general-purpose computing and development tasks, including web browsing, coding, and video editing.

Weaknesses:

- Limited memory. The M1 supports at most 16 GB of unified memory, and its 68 GB/s memory bandwidth caps generation speed. Together, these limit its ability to handle larger LLMs.

- Limited processing power. While impressive for smaller models, its processing capabilities fall well short of the A100's for demanding tasks such as inference on larger models.

NVIDIA A100 SXM 80GB:

Strengths:

- High processing power. The A100's processing power far exceeds the M1's for LLM inference.

- Large memory capacity. With 80 GB of memory, the A100 can hold large models such as Llama 3 70B at Q4_K_M.

- Specialized for AI workloads. It's designed for deep learning and AI applications.

Weaknesses:

- High cost. The A100 is expensive compared to the M1.

- High power consumption. The A100 demands a lot of power, which can lead to higher energy bills.

- Specialized hardware. It's primarily designed for AI workloads, meaning it might not be as versatile as the M1 for other tasks.

Practical Recommendations and Use Cases

Here's a guide to choosing the right device for your specific needs:

- If you are working with smaller models (around 7B to 8B parameters or fewer) and prioritize cost-effectiveness and efficiency, the Apple M1 is a great choice. It runs these models at usable speeds, and it will save you money on hardware.

- If you are working with larger models (such as Llama 3 70B) or need the highest token generation speed, the NVIDIA A100 SXM 80GB is the way to go. Its large memory and processing power are essential for handling these models effectively. Although it is expensive, the A100 is ideal for demanding applications where speed and performance are critical.

- If you have a limited budget and need to run large models, consider cloud computing services like Google Colab or Amazon SageMaker. These services provide access to powerful GPUs without requiring a significant investment in hardware.

Quantization: A Hidden Gem for LLM Performance

Quantization is a cool technique that helps improve LLM performance. Think of it as a way to make models more compact and efficient with only a small loss in accuracy. It's like a diet for your LLM, helping it run faster and use less energy. Instead of storing each weight as a 16- or 32-bit floating-point number, quantization represents it with fewer bits, typically 8 or 4, plus a shared scale factor for each small block of weights. This significantly reduces the memory footprint and speeds up generation. The benchmarks here use Q8_0 and Q4_0 for Llama 2 7B on the M1, and Q4_K_M for the Llama 3 models on both devices.
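To make the idea concrete, here is a minimal pure-Python sketch of 8-bit block quantization in the spirit of llama.cpp's Q8_0 format. Weights are split into blocks of 32. Each block gets one scale (its largest absolute value divided by 127), and each weight is stored as a rounded integer in [-127, 127]. This is a simplified illustration, not llama.cpp's implementation. The scale is kept as a Python float rather than a 16-bit float, and Python's `round()` rounds halves to even.

```python
# Simplified 8-bit block quantization in the style of Q8_0 (illustrative only).
import random

BLOCK_SIZE = 32


def quantize_blocks(weights: list[float]) -> list[tuple[float, list[int]]]:
    """Return one (scale, int8 values) pair per block of up to 32 weights."""
    blocks = []
    for start in range(0, len(weights), BLOCK_SIZE):
        block = weights[start:start + BLOCK_SIZE]
        amax = max(abs(w) for w in block)
        scale = amax / 127 if amax > 0 else 1.0  # avoid dividing by zero for all-zero blocks
        blocks.append((scale, [round(w / scale) for w in block]))
    return blocks


def dequantize_blocks(blocks: list[tuple[float, list[int]]]) -> list[float]:
    """Reconstruct approximate weights by multiplying each integer by its block scale."""
    return [scale * q for scale, values in blocks for q in values]


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(256)]
    blocks = quantize_blocks(weights)
    restored = dequantize_blocks(blocks)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    # Storage: 16-bit floats need 2 bytes per weight; this layout needs 1 byte per
    # weight plus a 2-byte scale per block (assuming the scale is stored as fp16).
    f16_bytes = 2 * len(weights)
    q8_bytes = len(weights) + 2 * len(blocks)
    print(f"max abs error: {max_err:.6f}, bytes: {f16_bytes} (F16) vs {q8_bytes} (8-bit blocks)")
```

For 256 weights, the 8-bit layout needs 272 bytes versus 512 at F16. The rounding error per weight is at most half of its block's scale.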

FAQ

Q: What is tokenization?

A: Tokenization is the process of breaking text into smaller units called tokens. Tokens are often whole words, but they can also be pieces of words, punctuation, or spaces, and a long or rare word may be split into several tokens. LLMs rely on tokenization to process text and generate meaningful output.
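The toy sketch below shows how one word can become several tokens. It uses greedy longest-match over a small, hypothetical vocabulary. It is an illustration only: Llama models use a learned byte-pair-encoding (BPE) tokenizer with a vocabulary of tens of thousands of entries, not this algorithm.

```python
# Toy subword tokenizer: greedy longest match against a small, hypothetical vocabulary.

TOY_VOCAB = {"token", "ization", "un", "break", "able", " ", "s"}


def tokenize(text: str, vocab: set[str]) -> list[str]:
    """Split text into the longest vocabulary pieces, falling back to single characters."""
    tokens = []
    i = 0
    while i < len(text):
        for j in range(len(text), i, -1):  # try the longest candidate first
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:  # no vocabulary piece matched at position i
            tokens.append(text[i])
            i += 1
    return tokens


if __name__ == "__main__":
    print(tokenize("tokenization unbreakable", TOY_VOCAB))
```

Here, two words become six tokens: `['token', 'ization', ' ', 'un', 'break', 'able']`.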

Q: What are the differences between processing and generation speed?

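A: Processing speed (often called prompt processing) measures how fast the model reads the input prompt, evaluating its tokens in parallel batches. Generation speed measures how quickly the model produces new output tokens, which it does one at a time. Processing speed determines how long you wait before the first output token appears, and generation speed determines how fast the response streams after that. Both are important for a smooth and efficient LLM experience.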
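The two speeds combine into end-to-end response time: the prompt is processed first, then output tokens are generated one at a time. The sketch below applies that arithmetic to the M1's Llama 2 7B Q4_0 results. The 512-token prompt and 256-token reply are hypothetical. The estimate also ignores model loading time and any slowdown as the context grows.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimated seconds to process the prompt and then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


if __name__ == "__main__":
    # M1, Llama 2 7B, Q4_0: 107.81 tok/s processing, 14.19 tok/s generation
    total = response_time_s(512, 256, 107.81, 14.19)
    print(f"~{total:.1f} s")  # about 4.7 s of processing plus 18.0 s of generation
```

Generation accounts for most of the roughly 22.8 seconds, even though the prompt is twice as long as the reply.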

Q: What is the best way to determine the optimal quantization setting for a given LLM and device?

A: The best setting depends on the specific LLM and device. Experiment with different quantization levels and measure both speed and output quality: lower-bit formats run faster and use less memory but can reduce accuracy.
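A minimal way to run that experiment is to time a streaming generation call for each quantized model. In the sketch below, `fake_generate` is a hypothetical stand-in that sleeps instead of running a model. In practice, you would replace it with your inference library's streaming call. You would also check output quality separately, since speed alone does not capture accuracy loss.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[int], Iterable[str]], n_tokens: int) -> float:
    """Time a token stream and return the observed generation rate."""
    start = time.perf_counter()
    produced = sum(1 for _ in generate(n_tokens))
    elapsed = time.perf_counter() - start
    return produced / elapsed if elapsed > 0 else float("inf")


def fake_generate(n_tokens: int, delay_s: float = 0.01):
    """Hypothetical stand-in for a model: yields one token every delay_s seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    rate = tokens_per_second(fake_generate, 50)
    print(f"{rate:.1f} tokens/s")  # at most ~100 tokens/s, given the 10 ms delay
```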

Q: Can I upgrade the Apple M1's memory to handle larger models?

A: Unfortunately, no. The M1's unified memory is integrated into the chip package and cannot be upgraded, so the amount (up to 16 GB on the M1) must be chosen at purchase.

Q: How much power does the NVIDIA A100 SXM 80GB consume?

A: The A100 SXM 80GB has a maximum thermal design power (TDP) of 400 watts (the PCIe version is rated at 300 watts), making it far more energy-intensive than the M1.
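Power figures become easier to compare when divided by throughput. The sketch below turns watts and tokens per second into joules per token. Pairing the 400 W board limit with the A100's Llama 3 8B Q4_K_M generation speed is an assumption. Actual draw during inference varies and is often below the limit, so treat the result as a rough upper estimate.

```python
def joules_per_token(power_watts: float, tokens_per_second: float) -> float:
    """Energy per generated token: watts are joules per second."""
    return power_watts / tokens_per_second


def tokens_per_kwh(power_watts: float, tokens_per_second: float) -> float:
    """Tokens generated per kilowatt-hour (1 kWh = 3.6e6 J)."""
    return 3.6e6 / joules_per_token(power_watts, tokens_per_second)


if __name__ == "__main__":
    # Assumption: the A100 SXM 80GB draws its full 400 W while generating 133.38 tok/s.
    print(f"{joules_per_token(400, 133.38):.2f} J/token")
    print(f"{tokens_per_kwh(400, 133.38):,.0f} tokens/kWh")
```

Under this assumption, each generated token costs about 3 joules.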

Keywords

LLMs, Apple M1, NVIDIA A100 SXM 80GB, Token Generation Speed, Llama 2 7B, Llama 3 8B, Llama 3 70B, Quantization, F16, Q4_K_M, Q8_0, Q4_0, Tokenization, Processing Speed, Generation Speed, Performance, Benchmarks, AI, Machine Learning, Deep Learning, Hardware, GPU, CPU.