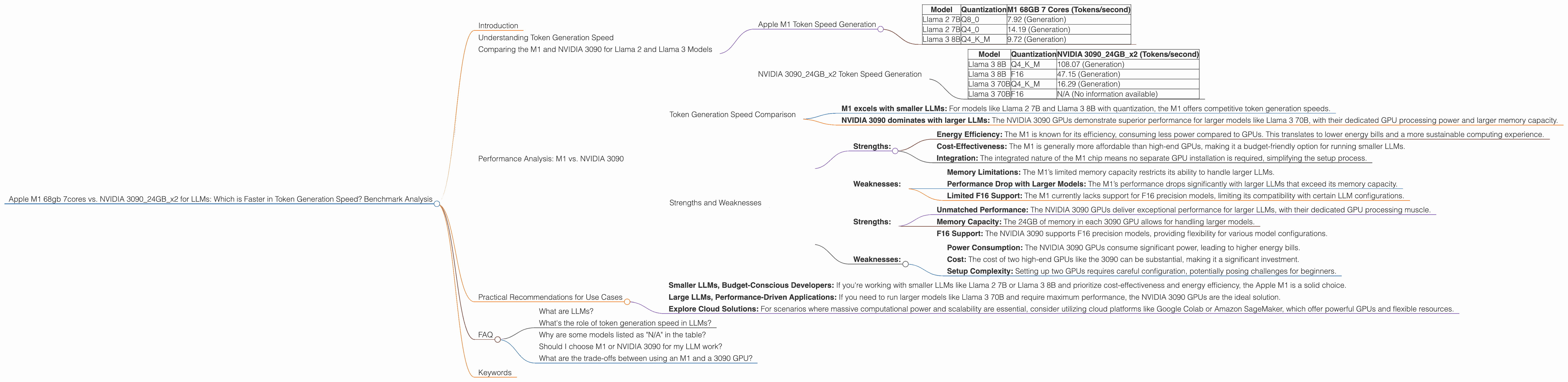

Apple M1 68GB 7 Cores vs. NVIDIA 3090 24GB x2 for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is rapidly evolving, pushing the boundaries of what's possible with artificial intelligence. These powerful models require significant computing resources for both training and inference, making the choice of hardware crucial for optimal performance.

In this article, we'll dive deep into a benchmark analysis comparing two popular setups for running LLMs: the Apple M1 with 68GB of RAM and 7 cores against two NVIDIA 3090 GPUs with 24GB of memory each. We'll focus on token generation speed, a key performance metric for LLMs, and explore the strengths and weaknesses of each setup across several Llama 2 and Llama 3 models.

Imagine LLMs like super-intelligent assistants capable of generating creative content, translating languages, writing code, and much more. These models need powerful tools to fuel their potential, just like a high-performance engine for a race car. That's where the choice of hardware comes in.

Understanding Token Generation Speed

Token generation speed refers to how quickly a model produces output tokens, measured in tokens per second. Reading the input prompt is a separate step with its own speed; the tables in this article report generation only. A higher token generation speed means faster response times, a crucial factor for real-time applications such as chatbots, translation services, and code completion tools.
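As a rough illustration of how this metric is obtained, the sketch below times a token stream and divides the token count by the elapsed time. The `fake_stream` generator is a hypothetical stand-in for a model's streaming output, not part of any real benchmark tool.

```python
import time
from typing import Callable, Iterable, Iterator


def throughput(n_tokens: int, elapsed_s: float) -> float:
    """Tokens per second for n_tokens produced in elapsed_s seconds."""
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    return n_tokens / elapsed_s


def measure(stream_factory: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return its generation speed."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in stream_factory())
    return throughput(n_tokens, time.perf_counter() - start)


def fake_stream(n_tokens: int = 20, delay_s: float = 0.002) -> Iterator[str]:
    """Hypothetical stand-in for a model that streams tokens."""
    for i in range(n_tokens):
        time.sleep(delay_s)  # simulated per-token latency
        yield f"tok{i}"


rate = measure(fake_stream)
print(f"{rate:.1f} tokens/second")
```

In a real measurement, the timer would start only after the prompt has been processed, so that prompt handling does not dilute the generation figure.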

Comparing the M1 and NVIDIA 3090 for Llama 2 and Llama 3 Models

Apple M1 Token Generation Speed

The Apple M1 chip, with its integrated GPU and high memory bandwidth, offers compelling performance for smaller LLM models, especially when using quantized weights.

Let's analyze the data:

| Model | Quantization | M1 68GB 7 Cores, generation (tokens/second) |
|---|---|---|
| Llama 2 7B | Q8_0 | 7.92 |
| Llama 2 7B | Q4_0 | 14.19 |
| Llama 3 8B | Q4_K_M | 9.72 |

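- Observations: The M1 generates roughly 8 to 14 tokens/second on smaller LLMs like Llama 2 7B and Llama 3 8B with Q8 and Q4 quantization. On Llama 2 7B, moving from Q8_0 to Q4_0 raises the speed from 7.92 to 14.19 tokens/second, about 1.8x.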

Quantization is like compressing the model's brain. It stores each weight with fewer bits, which shrinks the model so it uses less memory and runs faster. Imagine it like storing the same information in a smaller package: most of the important detail survives, at the cost of a small loss in precision.
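To make the "smaller package" concrete, here is a minimal sketch that estimates the size of a model's weights at different precisions. It assumes a nominal 7 billion parameters (real checkpoints differ slightly) and uses the block layouts of the Q8_0 and Q4_0 formats, where each block of 32 weights carries one 16-bit scale. It ignores everything other than the weights themselves.

```python
# Effective bits stored per weight for each format.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,  # 32 x 8-bit weights + one 16-bit scale per block
    "Q4_0": 4.5,  # 32 x 4-bit weights + one 16-bit scale per block
}


def weight_size_gb(n_params: float, quant: str) -> float:
    """Approximate size of the weights in gigabytes (10^9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


NOMINAL_7B = 7e9  # assumption: round parameter count for illustration

for quant in BITS_PER_WEIGHT:
    print(f"7B @ {quant}: ~{weight_size_gb(NOMINAL_7B, quant):.1f} GB")
```

Fewer bytes per weight also means fewer bytes to read from memory for every generated token, which is one reason quantized models tend to generate faster.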

Limitations: The M1 struggles with larger models like Llama 3 70B: no performance numbers are available for it, most likely because of memory limitations. The benchmark data also includes no F16 (half-precision floating point) results for the M1, so that configuration cannot be compared here.

NVIDIA 3090 24GB x2 Token Generation Speed

The NVIDIA 3090 GPUs, with their massive parallel processing power, excel at handling larger LLMs and models with F16 precision.

Let's look at the numbers:

| Model | Quantization | NVIDIA 309024GBx2 (Tokens/second) |

|---|---|---|

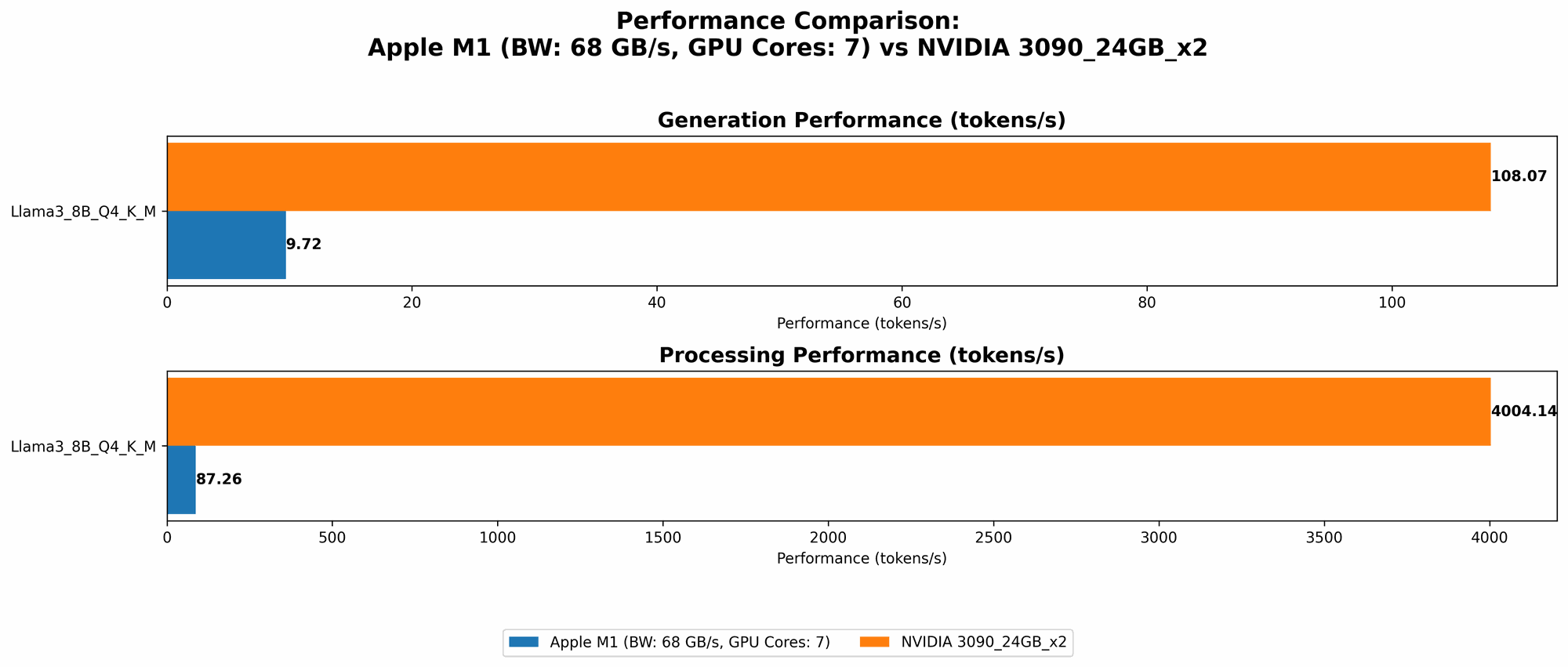

| Llama 3 8B | Q4KM | 108.07 (Generation) |

| Llama 3 8B | F16 | 47.15 (Generation) |

| Llama 3 70B | Q4KM | 16.29 (Generation) |

| Llama 3 70B | F16 | N/A (No information available) |

Observations:

- Exceptional Performance: On Llama 3 8B with Q4_K_M quantization, the two 3090 GPUs generate 108.07 tokens/second against the M1's 9.72, roughly 11x faster. Even at F16 precision they reach 47.15 tokens/second, and they run Llama 3 70B with Q4_K_M at 16.29 tokens/second, a model for which the M1 has no results.

- Memory Advantage: The 3090 GPUs with their 24GB of memory each (48GB combined) can handle larger models, unlike the M1, which faces limitations with models exceeding its memory capacity.

- Quantization Impact: The 3090 GPUs still benefit from quantization. Llama 3 8B runs at 108.07 tokens/second with Q4_K_M quantization compared to 47.15 at F16 precision, about 2.3x faster.
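The quantization gains can be read straight off the benchmark numbers. The short sketch below computes the speedup of the more aggressive quantization over the less aggressive one, using only figures from the tables in this article; the dictionary layout and function name are illustrative choices.

```python
# Generation speeds (tokens/second) copied from the tables in this article.
M1 = {
    ("Llama 2 7B", "Q8_0"): 7.92,
    ("Llama 2 7B", "Q4_0"): 14.19,
}
RTX_3090_X2 = {
    ("Llama 3 8B", "F16"): 47.15,
    ("Llama 3 8B", "Q4_K_M"): 108.07,
}


def speedup(results: dict, model: str, fast: str, slow: str) -> float:
    """How many times faster `fast` generates than `slow` for one model."""
    return results[(model, fast)] / results[(model, slow)]


m1_gain = speedup(M1, "Llama 2 7B", "Q4_0", "Q8_0")
gpu_gain = speedup(RTX_3090_X2, "Llama 3 8B", "Q4_K_M", "F16")
print(f"M1, Q4_0 vs Q8_0:       {m1_gain:.2f}x")
print(f"3090 x2, Q4_K_M vs F16: {gpu_gain:.2f}x")
```

The two ratios are not directly comparable with each other, since they involve different models and different pairs of formats.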

Performance Analysis: M1 vs. NVIDIA 3090

Token Generation Speed Comparison

The benchmark results show a clear trend:

- M1 is usable with smaller LLMs: For quantized models like Llama 2 7B and Llama 3 8B, the M1 generates roughly 8 to 14 tokens/second. That is enough for interactive use, although the two 3090 GPUs are about 11x faster on Llama 3 8B.

- NVIDIA 3090 dominates with larger LLMs: The NVIDIA 3090 GPUs are the only setup in this comparison with results for Llama 3 70B (16.29 tokens/second at Q4_K_M), thanks to their dedicated GPU processing power and larger memory capacity.

Think of it like this: The M1 is like a nimble sprinter, excelling in shorter races (smaller LLMs). The NVIDIA 3090 is more like a powerful marathon runner, dominating longer distances (larger LLMs).
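To put the gap in practical terms, the sketch below converts the measured generation speeds for Llama 3 8B with Q4_K_M quantization into the time needed to produce a reply. The 500-token reply length is an arbitrary assumption, and prompt processing time is ignored.

```python
# Generation speeds (tokens/second) for Llama 3 8B, Q4_K_M, from this article.
LLAMA3_8B_Q4_K_M = {
    "Apple M1": 9.72,
    "NVIDIA 3090 24GB x2": 108.07,
}


def seconds_for(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a constant generation speed."""
    return n_tokens / tokens_per_second


REPLY_TOKENS = 500  # assumption: length of a fairly long chat reply

for device, speed in LLAMA3_8B_Q4_K_M.items():
    print(f"{device}: {seconds_for(REPLY_TOKENS, speed):.1f} s")
```

Under these assumptions the reply takes under five seconds on the two 3090 GPUs and closer to a minute on the M1.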

Strengths and Weaknesses

Here's a breakdown of the strengths and weaknesses of each device:

Apple M1:

- Strengths:

  - Energy Efficiency: The M1 is known for its efficiency, consuming less power compared to GPUs. This translates to lower energy bills and a more sustainable computing experience.

  - Cost-Effectiveness: The M1 is generally more affordable than high-end GPUs, making it a budget-friendly option for running smaller LLMs.

  - Integration: The integrated nature of the M1 chip means no separate GPU installation is required, simplifying the setup process.

- Weaknesses:

  - Memory Limitations: The M1’s limited memory capacity restricts its ability to handle larger LLMs.

  - Performance Drop with Larger Models: The M1’s performance drops significantly with larger LLMs that exceed its memory capacity.

  - No F16 Results: The benchmark data contains no F16 results for the M1, so its performance with half-precision models cannot be compared here.

NVIDIA 3090 24GB x2:

- Strengths:

  - Unmatched Performance: The NVIDIA 3090 GPUs deliver exceptional performance for larger LLMs, with their dedicated GPU processing muscle.

  - Memory Capacity: The 24GB of memory in each 3090 GPU allows for handling larger models.

  - F16 Support: The NVIDIA 3090 supports F16 precision models, providing flexibility for various model configurations.

- Weaknesses:

  - Power Consumption: The NVIDIA 3090 GPUs consume significant power, leading to higher energy bills.

  - Cost: The cost of two high-end GPUs like the 3090 can be substantial, making it a significant investment.

  - Setup Complexity: Setting up two GPUs requires careful configuration, potentially posing challenges for beginners.
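The energy trade-off can be framed as energy per generated token: power draw divided by generation speed. The sketch below shows the calculation only. The speeds come from this article's Llama 3 8B Q4_K_M results, but both power figures are placeholders rather than measurements: 350 W per card is the 3090's rated board power, and the M1 value is a purely illustrative guess. Replace them with readings from your own hardware.

```python
def joules_per_token(power_watts: float, tokens_per_second: float) -> float:
    """Energy used per generated token (watts are joules per second)."""
    return power_watts / tokens_per_second


# Placeholder power figures, NOT measured values.
GPU_POWER_W = 2 * 350.0  # assumption: two cards at rated board power
M1_POWER_W = 20.0        # assumption: illustrative guess only

# Generation speeds (tokens/second) for Llama 3 8B, Q4_K_M, from this article.
GPU_SPEED = 108.07
M1_SPEED = 9.72

print(f"3090 x2: {joules_per_token(GPU_POWER_W, GPU_SPEED):.2f} J/token")
print(f"M1:      {joules_per_token(M1_POWER_W, M1_SPEED):.2f} J/token")
```

The point of the metric is that a faster device can draw far more power and still be competitive per token, so raw wattage alone does not settle the efficiency question.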

Practical Recommendations for Use Cases

Based on the analysis, here are some recommendations for selecting the right device based on your LLM needs:

- Smaller LLMs, Budget-Conscious Developers: If you're working with smaller LLMs like Llama 2 7B or Llama 3 8B and prioritize cost-effectiveness and energy efficiency, the Apple M1 is a solid choice.

- Large LLMs, Performance-Driven Applications: If you need to run larger models like Llama 3 70B and require maximum performance, the NVIDIA 3090 GPUs are the ideal solution.

- Explore Cloud Solutions: For scenarios where massive computational power and scalability are essential, consider utilizing cloud platforms like Google Colab or Amazon SageMaker, which offer powerful GPUs and flexible resources.
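Before choosing hardware, it helps to check whether a model's weights fit in memory at all. The sketch below is a rough estimate under stated assumptions: nominal parameter counts, an approximate 4.85 bits per weight for Q4_K_M, a hypothetical fixed 2 GB allowance for the context cache and runtime buffers, and the two GPUs treated as a single 48 GB pool.

```python
def fits_in_memory(
    n_params: float,
    bits_per_weight: float,
    memory_gb: float,
    overhead_gb: float = 2.0,  # assumption: cache and runtime buffers
) -> bool:
    """Rough check that the weights plus overhead fit in memory_gb."""
    weights_gb = n_params * bits_per_weight / 8 / 1e9
    return weights_gb + overhead_gb <= memory_gb


Q4_K_M_BITS = 4.85  # assumption: approximate average bits per weight
F16_BITS = 16.0
DUAL_3090_GB = 2 * 24  # assumption: both cards used as one pool

print("70B Q4_K_M on 2x3090:", fits_in_memory(70e9, Q4_K_M_BITS, DUAL_3090_GB))
print("70B F16 on 2x3090:   ", fits_in_memory(70e9, F16_BITS, DUAL_3090_GB))
print("8B F16 on 2x3090:    ", fits_in_memory(8e9, F16_BITS, DUAL_3090_GB))
```

A model that fails this check is a candidate for stronger quantization or for the cloud options mentioned above.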

FAQ

What are LLMs?

LLMs are powerful AI models trained on massive amounts of text data, allowing them to generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What's the role of token generation speed in LLMs?

Token generation speed measures how quickly an LLM can produce text. A higher speed means faster responses, which is important for real-time applications like chatbots, translation services, and code completion tools.

Why are some models listed as "N/A" in the table?

The data we used for this analysis may not have complete information for all model combinations. For example, some models may not have been tested on specific devices, or the performance data might not be readily available.

Should I choose M1 or NVIDIA 3090 for my LLM work?

The best choice depends on your specific needs. If you're working with smaller LLMs and prioritize cost and efficiency, the M1 is a good option. For larger models and performance optimization, NVIDIA 3090 GPUs are the way to go.

What are the trade-offs between using an M1 and a 3090 GPU?

The M1 excels in energy efficiency and cost-effectiveness for smaller LLMs, but it's limited in memory and performance with larger models. The 3090 GPUs offer exceptional performance for large LLMs but cost more and draw significantly more power.

Keywords

LLM, Large Language Model, Apple M1, NVIDIA 3090, token generation speed, GPU, benchmark analysis, Llama 2, Llama 3, quantized weights, F16 precision, energy efficiency, cost-effectiveness, memory limitations, performance, cloud computing, Google Colab, Amazon SageMaker