Apple M1 (68 GB/s, 7 GPU Cores) vs. Apple M2 Max (400 GB/s, 30 GPU Cores) for LLMs: Which Is Faster at Token Generation? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, and these powerful AI models are changing the way we interact with technology. They are used for everything from generating creative content to answering complex questions. But running these models locally can be challenging, requiring powerful hardware. This is where Apple's M1 and M2 Max chips, with their impressive capabilities, come into play.

In this article, we'll dive deep into the performance of two popular Apple silicon chips when running LLMs: the M1 (7-core GPU, 68 GB/s of memory bandwidth) and the M2 Max (30-core GPU, 400 GB/s of memory bandwidth). We'll focus on token generation speed, a crucial metric for real-time applications like chatbots and text generation. Our analysis is based on published benchmark data, and we'll provide clear comparisons and practical recommendations.

Get ready for a geeky deep dive into the silicon jungle, where we'll analyze the token-generation performance of these powerful chips.

Apple M1 Token Generation Speed

The Apple M1, in the configuration benchmarked here (7-core GPU, 68 GB/s of memory bandwidth), provides a solid foundation for running smaller LLMs locally. Let's take a look at its performance with Llama 2 and Llama 3 models.

Llama 2 7B on Apple M1

- Llama 2 7B, Q8_0, processing: 108.21 tokens/second (7-core GPU)

- Llama 2 7B, Q8_0, generation: 7.92 tokens/second (7-core GPU)

- Llama 2 7B, Q4_0, processing: 107.81 tokens/second (7-core GPU)

- Llama 2 7B, Q4_0, generation: 14.19 tokens/second (7-core GPU)

Apple M1 Performance with Llama 2 7B

The Apple M1 delivers respectable performance with the Llama 2 7B model when using quantized weight formats. Quantization is a technique that reduces the size of the model by representing weights with fewer bits. In these results, the smaller format mainly speeds up generation (14.19 vs. 7.92 tokens/second), while prompt processing stays at about 108 tokens/second for both formats.

- Q8_0: This quantization format uses about 8 bits per weight, trading a small amount of accuracy for a smaller, faster model.

- Q4_0: This format uses about 4 bits per weight, roughly halving the model size again. Generation is faster, but the potential loss in accuracy is greater.
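To make the accuracy trade-off concrete, here is a minimal sketch of block-wise symmetric quantization in plain Python. It is a simplified illustration, not the exact Q8_0 or Q4_0 layout used by any particular runtime: the block size of 32, the function names, and the random test weights are all assumptions made for this example.

```python
import random

def quantize_block(weights, bits):
    """Symmetric quantization of one block: a float scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest else 1.0
    quants = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quants

def dequantize_block(scale, quants):
    """Recover approximate float weights from the scale and the integers."""
    return [scale * q for q in quants]

def mean_abs_error(weights, bits, block_size=32):
    """Average round-trip error when quantizing block by block."""
    total = 0.0
    for start in range(0, len(weights), block_size):
        block = weights[start:start + block_size]
        scale, quants = quantize_block(block, bits)
        restored = dequantize_block(scale, quants)
        total += sum(abs(a - b) for a, b in zip(block, restored))
    return total / len(weights)

random.seed(0)
# Hypothetical weights drawn from a narrow Gaussian, for illustration only.
weights = [random.gauss(0.0, 0.02) for _ in range(4096)]
error_8bit = mean_abs_error(weights, bits=8)
error_4bit = mean_abs_error(weights, bits=4)
print(f"8-bit mean abs error: {error_8bit:.6f}")
print(f"4-bit mean abs error: {error_4bit:.6f}")
```

The 4-bit round trip loses more information than the 8-bit one because each block has only 15 integer levels to work with instead of 255.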

With Llama 2 7B, the M1 handles prompt processing comfortably at roughly 108 tokens/second, while generation at about 8 to 14 tokens/second is usable but noticeably slower.

Llama 3 8B on Apple M1

- Llama 3 8B, Q4_K_M, processing: 87.26 tokens/second (7-core GPU)

- Llama 3 8B, Q4_K_M, generation: 9.72 tokens/second (7-core GPU)

Apple M1 Performance with Llama 3 8B

The Apple M1 shows similar results with the Llama 3 8B model in the Q4_K_M format. Prompt processing reaches 87.26 tokens/second and generation reaches 9.72 tokens/second, which is below Llama 2 7B at Q4_0 (14.19 tokens/second) but above Llama 2 7B at Q8_0 (7.92 tokens/second).

Analyzing Apple M1 Token Generation Speed

The Apple M1, with its 7-core GPU and 68 GB/s of memory bandwidth, performs well with smaller LLMs like Llama 2 7B and Llama 3 8B. In quantized formats, prompt processing runs at roughly 87 to 108 tokens/second and generation at roughly 8 to 14 tokens/second, which makes it a viable option for lightweight local applications.

However, for larger models or demanding applications, the M1 may struggle to deliver the necessary performance. Remember, these are single-chip benchmarks, and the M1's performance can vary based on factors like the specific LLM model, the chosen quantization format, and the complexity of the tasks.

Apple M2 Max Token Generation Speed

Now, let's shift our focus to the powerhouse, the Apple M2 Max chip. With its 30-core GPU and 400 GB/s of memory bandwidth, it's a beast in the world of local LLM inference. Let's explore its performance with Llama 2 and Llama 3 models.

Llama 2 7B on Apple M2 Max

- Llama 2 7B, F16, processing: 600.46 tokens/second (30-core GPU)

- Llama 2 7B, F16, generation: 24.16 tokens/second (30-core GPU)

- Llama 2 7B, Q8_0, processing: 540.15 tokens/second (30-core GPU)

- Llama 2 7B, Q8_0, generation: 39.97 tokens/second (30-core GPU)

- Llama 2 7B, Q4_0, processing: 537.6 tokens/second (30-core GPU)

- Llama 2 7B, Q4_0, generation: 60.99 tokens/second (30-core GPU)

Apple M2 Max Performance with Llama 2 7B

The Apple M2 Max delivers remarkable performance with the Llama 2 7B model. Prompt processing runs at about 540 to 600 tokens/second, and generation ranges from 24.16 tokens/second at F16 to 60.99 tokens/second at Q4_0.

- F16: This format stores weights as 16-bit floating-point values without quantization. It preserves the most accuracy of the three formats but is the slowest in generation here, because the weights are the largest.

Comparing the M2 Max to the M1, we see a significant jump in both processing and generation. At Q8_0, the M2 Max is roughly 5 times faster on both metrics (540.15 vs. 108.21 and 39.97 vs. 7.92 tokens/second). The M2 Max's extra GPU cores and higher memory bandwidth enable it to tackle significantly more demanding tasks.

Llama 3 8B on Apple M2 Max

- Llama 3 8B, Q4_K_M, processing: no data available

Apple M2 Max Performance with Llama 3 8B

Unfortunately, benchmark data for the Llama 3 8B model on the M2 Max is not available at this time. The lack of data prevents us from comparing its performance with the M1 for this model.

Comparison of Apple M1 and Apple M2 Max for LLMs

Now let's compare the performance of the two Apple Silicon chips, the M1 and the M2 Max, in an attempt to answer the question: Which one is faster for token generation?

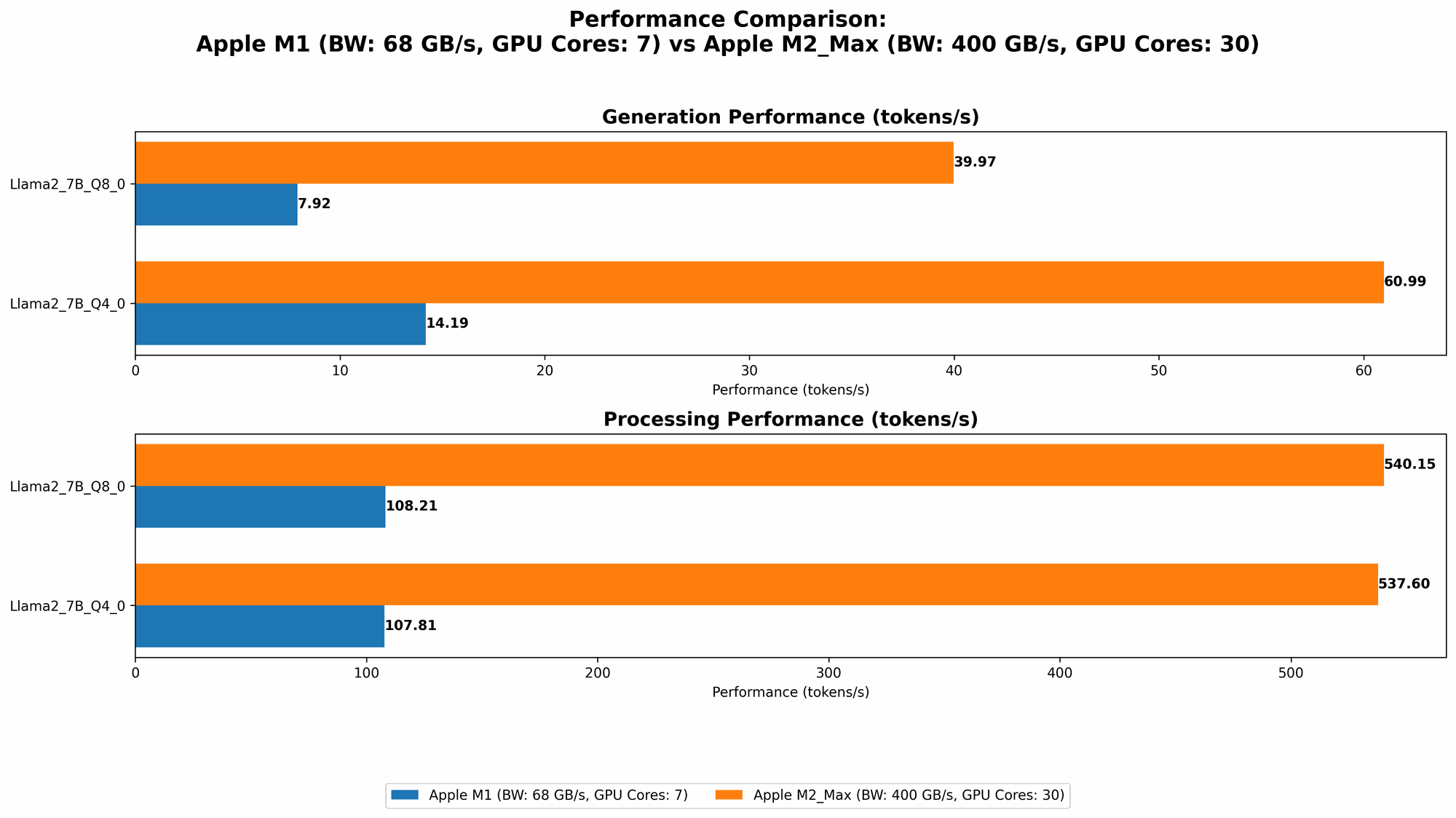

Token Generation Speed Comparison

The M2 Max clearly outperforms the M1 in token generation speed for the Llama 2 7B model. Its tokens-per-second rates are about 5 times higher at Q8_0 and about 4.3 times higher at Q4_0. No F16 result is available for the M1, so that format cannot be compared.

| Model | Format | M1 generation (tokens/sec) | M2 Max generation (tokens/sec) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 7.92 | 39.97 |
| Llama 2 7B | Q4_0 | 14.19 | 60.99 |
| Llama 2 7B | F16 | N/A | 24.16 |
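The speedup implied by the table can be checked with a few lines of Python. The figures are copied from the table; the dictionary layout and the `speedup` helper are just one illustrative way to organize them.

```python
# Generation speeds in tokens/second, copied from the comparison table.
generation_speed = {
    "Q8_0": {"M1": 7.92, "M2 Max": 39.97},
    "Q4_0": {"M1": 14.19, "M2 Max": 60.99},
}

def speedup(speeds):
    """How many times faster the M2 Max generates tokens than the M1."""
    return speeds["M2 Max"] / speeds["M1"]

speedups = {fmt: speedup(s) for fmt, s in generation_speed.items()}
for fmt, ratio in speedups.items():
    print(f"{fmt}: M2 Max is {ratio:.2f}x faster than M1")
```

F16 is left out because there is no M1 result to divide by.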

The M2 Max's superior performance can be attributed to its larger GPU (30 cores vs. 7) and its much higher memory bandwidth (400 GB/s vs. 68 GB/s). More cores allow more work to run in parallel, and higher bandwidth lets the chip read model weights faster for each generated token. In practice, the M2 Max produces more text in the same amount of time, which makes it better suited to applications that require real-time responses.
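A common rule of thumb is that token generation is limited by memory bandwidth, because producing each token requires reading roughly all of the model's weights once. The sketch below turns that into a rough ceiling and compares it with the measured numbers. It rests on several assumptions: that the 68 and 400 figures are memory bandwidth in GB/s, that Llama 2 7B has about 7 billion parameters, and that each format uses exactly its nominal bit width (real formats carry some extra overhead, and the KV cache is ignored).

```python
PARAMS = 7e9  # approximate parameter count of Llama 2 7B (assumption)

BANDWIDTH_GB_S = {"M1": 68.0, "M2 Max": 400.0}    # assumed memory bandwidth
NOMINAL_BITS = {"F16": 16, "Q8_0": 8, "Q4_0": 4}  # ignores per-block overhead

# Measured generation speeds in tokens/second, from the benchmark lists.
MEASURED = {
    ("M1", "Q8_0"): 7.92,
    ("M1", "Q4_0"): 14.19,
    ("M2 Max", "F16"): 24.16,
    ("M2 Max", "Q8_0"): 39.97,
    ("M2 Max", "Q4_0"): 60.99,
}

def bandwidth_ceiling(chip, fmt):
    """Tokens/second if each token needed exactly one full read of the weights."""
    weight_bytes = PARAMS * NOMINAL_BITS[fmt] / 8
    return BANDWIDTH_GB_S[chip] * 1e9 / weight_bytes

for (chip, fmt), measured in MEASURED.items():
    ceiling = bandwidth_ceiling(chip, fmt)
    print(f"{chip:7s} {fmt:5s} ceiling {ceiling:6.1f} tok/s, "
          f"measured {measured:6.2f} tok/s")
```

Under these assumptions every measured value sits below its estimated ceiling, which is consistent with bandwidth being an important limit on generation speed, though it does not prove it is the only one.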

Apple M1 vs. Apple M2 Max for LLMs: Strengths and Weaknesses

Apple M1

- Strengths:

  - Cost-effective option for running smaller LLMs like Llama 2 7B.

  - Efficient processing capabilities with quantized formats.

- Weaknesses:

  - Limited performance with larger LLMs.

  - Slower token generation speeds compared to the M2 Max.


Apple M2 Max

- Strengths:

  - Roughly 4 to 5 times the M1's token generation speed on Llama 2 7B.

  - High-performance processing with F16 and quantized formats.

- Weaknesses:

  - Higher cost compared to the M1.

  - May be overkill for smaller LLMs.


Practical Use Case Recommendations

Here's a simplified guide to choosing between the M1 and M2 Max for your LLM projects:

Apple M1: Consider the M1 if your application involves running smaller LLMs, like Llama 2 7B, and prioritizes a budget-friendly solution. Its performance is sufficient for tasks like text generation or simple chatbots.

Apple M2 Max: If your project involves running large LLMs and demands high token generation speeds, the M2 Max is the superior choice. Its processing power excels in real-time applications like advanced chatbots, creative content generation, and complex data analysis.
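To see what these speeds mean for a user waiting on a response, the sketch below converts tokens/second into the time needed to generate one reply. The 256-token reply length is a hypothetical figure chosen for illustration; the speeds are the Llama 2 7B Q4_0 generation results from the benchmarks.

```python
def seconds_to_generate(num_tokens, tokens_per_second):
    """Time to generate a reply, ignoring prompt processing and other overhead."""
    return num_tokens / tokens_per_second

REPLY_TOKENS = 256  # hypothetical reply length, for illustration only

# Llama 2 7B, Q4_0 generation speeds in tokens/second.
q4_speed = {"M1": 14.19, "M2 Max": 60.99}

reply_time = {
    chip: seconds_to_generate(REPLY_TOKENS, speed)
    for chip, speed in q4_speed.items()
}
for chip, seconds in reply_time.items():
    print(f"{chip}: about {seconds:.1f} s for a {REPLY_TOKENS}-token reply")
```

At these rates the M1 needs about 18 seconds for such a reply and the M2 Max a little over 4 seconds, which is the kind of gap that matters for interactive chat.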

Beyond Token Generation: Considerations for LLM Inference

While token generation speed is a crucial metric, it's not the only factor to consider when evaluating LLM inference performance. Other aspects, such as memory capacity, power consumption, and compatibility, play a significant role.

Memory Capacity

Note that the 68 GB and 400 GB figures quoted for these chips describe memory bandwidth (GB/s), not capacity. Capacity matters separately: the whole model has to fit in unified memory, and the M2 Max can be configured with up to 96 GB, compared with a maximum of 16 GB on the M1. If you're working with large language models like Llama 3 70B and beyond, the M2 Max's memory capacity becomes essential.
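A rough way to judge whether a model will fit is to estimate the size of its weights from the parameter count and the bits per weight. The helper below is a simplified sketch: it uses nominal bit widths and ignores the KV cache, activations, and per-format overhead, so real memory use will be somewhat higher.

```python
def weight_gigabytes(params_billion, bits_per_weight):
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9

for name, params_billion in [("7B", 7), ("70B", 70)]:
    for bits in (16, 8, 4):
        size = weight_gigabytes(params_billion, bits)
        print(f"{name} model at {bits:2d} bits per weight: about {size:5.1f} GB")
```

By this estimate a 70B model needs about 35 GB for its weights even at 4 bits per weight, while a 7B model at 4 bits needs about 3.5 GB.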

Power Consumption

While both the M1 and M2 Max are energy-efficient chips, the M2 Max consumes more power due to its more powerful GPU. If you're dealing with resource constraints or are concerned about heat generation, the M1 might be a better option.

Compatibility

Ensuring compatibility with your chosen LLM framework and libraries is crucial. Both the M1 and M2 Max support a wide range of frameworks, including PyTorch, TensorFlow, and Hugging Face Transformers. However, it's essential to check that your framework version supports GPU acceleration on Apple silicon through Metal, and to keep macOS and your libraries up to date for optimal performance.

FAQ: Frequently Asked Questions

Here are some frequently asked questions about LLMs and Apple silicon chips:

What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence model trained on massive datasets of text and code. LLMs are capable of generating human-quality text, translating languages, writing different types of creative content, and even answering your questions in an informative way.

How do I choose the right device for my LLM?

The best device for your LLM depends on the specific model you're using, your budget, and your desired performance.

- Smaller LLM models: The Apple M1 chip can be an excellent choice due to its efficiency and lower cost.

- Larger LLM models: The Apple M2 Max, with its impressive processing power and memory capacity, is a better option for demanding applications.

How do I make my LLM run faster?

There are several techniques to improve the speed of your LLM:

- Quantization: Reducing the size of the model by using fewer bits for weight representation.

- Model optimization: Using techniques like pruning or knowledge distillation to reduce the complexity of the model.

- Hardware acceleration: Using GPUs, TPUs, or specialized hardware designed for machine learning inference.

Are there any other options for running LLMs locally?

Yes, there are several other options for running LLMs locally, including GPUs from NVIDIA and AMD, as well as custom-designed hardware like Google's TPUs. The optimal choice depends on your specific needs and budget.

Keywords

Apple M1, Apple M2 Max, LLM, Large Language Model, Token Generation Speed, Benchmark Analysis, Llama 2, Llama 3, Quantization, F16, Q8_0, Q4_0, GPU, GPU Cores, Processing, Generation, Memory Capacity, Power Consumption, Compatibility, Inference, Frameworks, PyTorch, TensorFlow, Hugging Face Transformers.