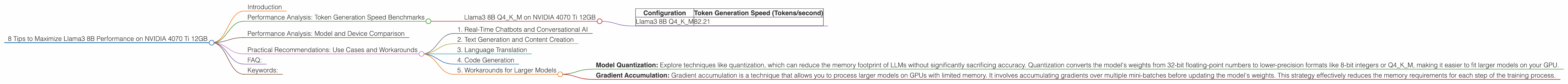

8 Tips to Maximize Llama3 8B Performance on NVIDIA 4070 Ti 12GB

Introduction

Large language models (LLMs) are evolving rapidly, with new models and architectures appearing constantly. One of the most notable recent releases is Llama3 8B, a language model that can generate fluent text, translate between languages, and write many kinds of creative content. Getting the most out of Llama3 8B depends on suitable hardware and configuration. This article is a guide to maximizing Llama3 8B performance on the NVIDIA 4070 Ti 12GB, a graphics card that balances cost and power. Whether you're a seasoned developer or just starting your LLM journey, we've got you covered.

Performance Analysis: Token Generation Speed Benchmarks

Llama3 8B Q4_K_M on NVIDIA 4070 Ti 12GB

With 12GB of memory, the NVIDIA 4070 Ti can hold a quantized Llama3 8B entirely on the GPU. This section looks at how fast Llama3 8B generates tokens on this card, focusing on the Q4_K_M configuration, a 4-bit quantization format from llama.cpp.

Token Generation Speed:

| Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 82.21 |

Analysis:

The NVIDIA 4070 Ti 12GB generates 82.21 tokens per second with Llama3 8B under Q4_K_M quantization. At that rate, a 100-token reply takes a little over a second to produce, so text generation, translation, and similar tasks feel responsive. A higher token generation speed means faster responses from your LLM, which is vital for real-time applications.
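To make the figure concrete, here is a minimal sketch that turns the measured rate into an estimated generation time. The reply lengths are hypothetical, the function name is illustrative, and the estimate assumes a steady decode rate and ignores prompt processing time.

```python
# Measured decode rate from the table above (tokens per second).
TOKENS_PER_SECOND = 82.21


def generation_time_seconds(num_tokens: int,
                            tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Estimate the time to generate num_tokens at a steady decode rate.

    Assumption: the rate stays constant and prompt processing is excluded.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    # Hypothetical reply lengths, chosen only for illustration.
    for n in (50, 250, 1000):
        print(f"{n:>5} tokens: {generation_time_seconds(n):.2f} s")
```

Real latency also depends on prompt length and context size, so treat these numbers as a rough lower bound.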

Performance Analysis: Model and Device Comparison

While the NVIDIA 4070 Ti 12GB performs well with Llama3 8B, a single result is easier to interpret alongside numbers for other models, precisions, and devices.

Important Note: The available data doesn't include performance metrics for F16 (unquantized half-precision) weights, Llama3 70B, or other devices. Therefore, we can't compare performance for these scenarios.

Practical Recommendations: Use Cases and Workarounds

The analysis above shows that Llama3 8B Q4_K_M generates text quickly on the NVIDIA 4070 Ti 12GB, which opens a range of possibilities for developers and enthusiasts. Here are a few use cases and workarounds to get the most out of Llama3 8B:

1. Real-Time Chatbots and Conversational AI

The high token generation speed of Llama3 8B Q4_K_M on the NVIDIA 4070 Ti 12GB makes it well suited to real-time chatbots and conversational AI applications. Imagine a chatbot that responds to user prompts with natural, coherent dialogue and no noticeable delay. The fast processing capabilities of the 4070 Ti 12GB ensure a smooth and engaging interaction.

2. Text Generation and Content Creation

Need to generate creative content like blog posts, articles, or even poems? Llama3 8B Q4_K_M on the NVIDIA 4070 Ti 12GB can handle that workload. The fast generation speed lets you experiment with different prompts and iterate on drafts quickly.

3. Language Translation

Translating text in real time is another application for Llama3 8B Q4_K_M on the NVIDIA 4070 Ti 12GB. The GPU's processing power combined with the language capabilities of Llama3 8B lets you translate large volumes of text quickly, opening up opportunities for global communication and collaboration.

4. Code Generation

While Llama3 8B is a general-purpose language model, it can also generate code. On the NVIDIA 4070 Ti 12GB it runs fast enough to produce basic code snippets interactively, aiding developers in their workflow.

5. Workarounds for Larger Models

While the available data doesn't include performance metrics for Llama3 70B, we can still provide some practical recommendations:

- Model Quantization: Quantization reduces the memory footprint of an LLM without significantly sacrificing accuracy. It converts the model's weights from 16-bit or 32-bit floating-point numbers to lower-precision formats such as 8-bit integers or Q4_K_M, making it easier to fit larger models on your GPU.

- Gradient Accumulation: Gradient accumulation applies to training and fine-tuning rather than inference. It lets you train with a large effective batch size on a GPU with limited memory by accumulating gradients over several small mini-batches before updating the model's weights. Because only one mini-batch is processed at a time, each training step needs less activation memory; the memory taken by the weights themselves is unchanged.
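To see why quantization matters for larger models, the following sketch estimates weight memory as parameters times bits per weight. The bits-per-weight values are approximations (roughly 4.85 for the llama.cpp format `Q4_K_M` is an assumption, not a measurement from this article), the parameter counts are round numbers taken from the model names, and the 1.5 GB overhead for the KV cache and runtime buffers is a hypothetical placeholder.

```python
# Approximate bits stored per weight for each format (assumed values).
BITS_PER_WEIGHT = {
    "F32": 32.0,
    "F16": 16.0,
    "Q8_0": 8.5,     # 8-bit values plus a per-block scale
    "Q4_K_M": 4.85,  # rough average; varies slightly by model
}


def weight_memory_gb(num_params: float, fmt: str) -> float:
    """Estimate the memory needed for the weights alone, in GB (10^9 bytes)."""
    return num_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def fits_in_vram(num_params: float, fmt: str,
                 vram_gb: float = 12.0, overhead_gb: float = 1.5) -> bool:
    """Check whether weights plus an assumed fixed overhead fit in VRAM."""
    return weight_memory_gb(num_params, fmt) + overhead_gb <= vram_gb


if __name__ == "__main__":
    for name, params in (("8B", 8e9), ("70B", 70e9)):
        for fmt in ("F16", "Q8_0", "Q4_K_M"):
            gb = weight_memory_gb(params, fmt)
            verdict = "fits" if fits_in_vram(params, fmt) else "does not fit"
            print(f"{name} {fmt:>7}: {gb:6.1f} GB, {verdict} in 12 GB")
```

Under these assumptions, an 8B model fits comfortably at 4 bits, while a 70B model exceeds 12 GB even at 4 bits, so it would need part of the model kept in system RAM or a smaller quantization.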

FAQ:

Q: What is quantization?

A: Quantization is a technique that reduces the memory footprint of LLMs without significantly affecting accuracy. It converts the model's weights from 16-bit or 32-bit floating-point numbers to lower-precision formats such as 8-bit integers or Q4_K_M. Think of it like measuring with a ruler that has coarser markings. You lose some precision, but the measurement is still good enough for most purposes.
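As an illustration of the idea, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is a simplified scheme with one scale for the whole list, not the block-wise method used by formats like `Q4_K_M`, and the function names and sample weights are hypothetical.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero input, where any scale works.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0]  # hypothetical sample weights
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    for w, r in zip(weights, restored):
        print(f"original {w:+.4f}  restored {r:+.4f}  error {abs(w - r):.5f}")
```

Each restored value differs from the original by at most half the scale, which is the precision given up in exchange for storing one byte per weight instead of two or four.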

Q: What is gradient accumulation?

A: Gradient accumulation is a training technique that lets you use a large effective batch size on GPUs with limited memory. It involves accumulating gradients over multiple small mini-batches before updating the model's weights. Because only one mini-batch is in memory at a time, each step of the training process needs less memory. It does not shrink the model itself and does not apply to inference. It's like grading an exam one page at a time and adding up the marks before recording the final score.
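The following sketch shows the core idea in plain Python, without a deep learning framework. It uses a hypothetical one-parameter model `y = w * x` with mean squared error, and the data points are made up for illustration. The accumulated gradient over equal-sized micro-batches matches the gradient of the full batch.

```python
def mse_grad(w, batch):
    """Gradient of mean squared error for the model y = w * x over one batch."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


def accumulated_grad(w, data, micro_batch_size):
    """Accumulate scaled micro-batch gradients before a single weight update."""
    if len(data) % micro_batch_size != 0:
        raise ValueError("data length must be a multiple of micro_batch_size")
    num_steps = len(data) // micro_batch_size
    total = 0.0
    for start in range(0, len(data), micro_batch_size):
        micro_batch = data[start:start + micro_batch_size]
        # Divide by the number of steps so the sum equals the full-batch mean.
        total += mse_grad(w, micro_batch) / num_steps
    return total


if __name__ == "__main__":
    data = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9), (4.0, 8.2)]  # made-up samples
    w = 0.5
    print("full batch gradient: ", mse_grad(w, data))
    print("accumulated gradient:", accumulated_grad(w, data, micro_batch_size=2))
```

Frameworks apply the same pattern by calling the backward pass on each micro-batch and stepping the optimizer only after the last one.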

Q: What are some other popular GPUs for LLM inference?

A: Other popular GPUs for LLM inference include the NVIDIA A100, H100, and GeForce RTX 4090. However, the performance and cost of these GPUs vary significantly.

Q: What are some popular LLM libraries?

A: Popular LLM libraries include Hugging Face Transformers, PyTorch, TensorFlow, and llama.cpp. Together, these libraries cover model training, inference, and evaluation.

Keywords:

Llama3 8B, NVIDIA 4070 Ti 12GB, LLM, Token Generation Speed, Quantization, Q4_K_M, Performance, GPU, Deep Learning, Generative AI, Chatbot, Conversational AI, Text Generation, Content Creation, Language Translation, Code Generation, Gradient Accumulation, Hugging Face Transformers, PyTorch, TensorFlow, llama.cpp