5 Tips to Maximize Llama3 8B Performance on Apple M2 Ultra

Introduction

Are you ready to unleash the power of the Apple M2 Ultra chip for your local LLM endeavors? If you're aiming for optimal performance with the Llama3 8B model, you've come to the right place. This guide will be your roadmap to getting the most out of Llama3 8B on the M2 Ultra.

Think of the M2 Ultra as a turbocharged engine for your LLM. We'll explore how this pairing performs, dissect benchmark data, and uncover practical tips to make your Llama3 8B run like a well-oiled machine.

Performance Analysis: Token Generation Speed Benchmarks

Let's dive into the numbers!

Our analysis focuses on two throughput figures for Llama3 8B on the M2 Ultra, both measured in tokens per second: prompt processing and token generation. We'll examine how different quantization levels affect each. Quantization stores the model's weights at lower precision, which shrinks the model's memory footprint and reduces the amount of data that must be read from memory for every generated token.

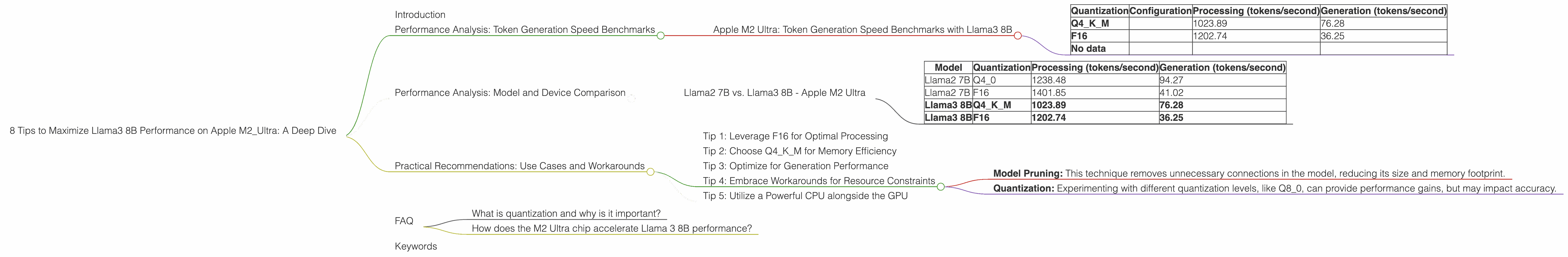

Apple M2 Ultra: Token Generation Speed Benchmarks with Llama3 8B

The following table shows prompt processing and token generation speed (tokens/second) for Llama3 8B on the Apple M2 Ultra at two precision levels:

| Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Q4_K_M | 1023.89 | 76.28 |
| F16 | 1202.74 | 36.25 |

Key Takeaways:

- Q4_K_M: The Q4_K_M quantization level processes prompts at more than 1000 tokens per second and generates 76.28 tokens per second, roughly twice the F16 generation speed. This makes it a good fit for developers who prioritize memory efficiency and responsive output.

- F16: The unquantized F16 configuration delivers the highest processing speed, exceeding 1200 tokens per second. However, this comes at the expense of higher memory requirements and a generation speed of only 36.25 tokens per second.

Performance Analysis: Model and Device Comparison

To put the performance of Llama3 8B on the Apple M2 Ultra in context, let's compare it with Llama2 7B on the same device.

Llama2 7B vs. Llama3 8B - Apple M2 Ultra

| Model | Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama2 7B | Q4_0 | 1238.48 | 94.27 |
| Llama2 7B | F16 | 1401.85 | 41.02 |
| Llama3 8B | Q4_K_M | 1023.89 | 76.28 |
| Llama3 8B | F16 | 1202.74 | 36.25 |

Key Takeaways:

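- Processing: Llama3 8B is roughly 17% slower than Llama2 7B in the 4-bit rows and 14% slower with F16, yet it still exceeds 1000 tokens per second in both configurations. Note that the 4-bit rows use different quantization schemes (Q4_0 versus Q4_K_M), so that pair is not a like-for-like comparison.

- Generation: Llama2 7B also outperforms Llama3 8B in generation speed at both precision levels. Llama3 8B generates about 19% fewer tokens per second in the 4-bit rows and about 12% fewer with F16, which is consistent with the larger model having more weights to read for every generated token.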
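The relative gaps between the two models can be checked with a few lines of arithmetic. The following is a minimal sketch that uses only the figures from the comparison table; the names `BENCHMARKS`, `PAIRS`, `percent_slower`, and `compare` are illustrative and not part of any benchmarking tool.

```python
# Throughput in tokens/second, copied from the comparison table above.
# Q4_K_M is the llama.cpp name of the 4-bit format used for Llama3 8B.
BENCHMARKS = {
    ("Llama2 7B", "Q4_0"): {"processing": 1238.48, "generation": 94.27},
    ("Llama2 7B", "F16"): {"processing": 1401.85, "generation": 41.02},
    ("Llama3 8B", "Q4_K_M"): {"processing": 1023.89, "generation": 76.28},
    ("Llama3 8B", "F16"): {"processing": 1202.74, "generation": 36.25},
}

# Pair each Llama3 8B row with the closest Llama2 7B row (an assumption:
# Q4_0 and Q4_K_M are both 4-bit formats but are not identical schemes).
PAIRS = [
    (("Llama2 7B", "Q4_0"), ("Llama3 8B", "Q4_K_M")),
    (("Llama2 7B", "F16"), ("Llama3 8B", "F16")),
]


def percent_slower(baseline: float, candidate: float) -> float:
    """Return how much slower `candidate` is than `baseline`, in percent."""
    return (1.0 - candidate / baseline) * 100.0


def compare(metric: str) -> dict[str, float]:
    """Map each Llama3 8B quantization to its slowdown versus Llama2 7B."""
    result = {}
    for llama2_key, llama3_key in PAIRS:
        baseline = BENCHMARKS[llama2_key][metric]
        candidate = BENCHMARKS[llama3_key][metric]
        result[llama3_key[1]] = percent_slower(baseline, candidate)
    return result


for metric in ("processing", "generation"):
    for quant, slowdown in compare(metric).items():
        print(f"{metric:>10} {quant:>7}: Llama3 8B is {slowdown:.1f}% slower")
```

Expressing the gap as a percentage of the Llama2 7B figure makes it easier to see that the two models are closer at F16 than in the 4-bit rows.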

Practical Recommendations: Use Cases and Workarounds

Now, let's translate these insights into actionable tips.

Tip 1: Leverage F16 for Optimal Processing

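If your priority is maximum prompt processing speed, F16 is your go-to choice. It suits workloads dominated by long inputs and short outputs, such as summarizing or classifying long documents. Keep in mind that F16 generates tokens at roughly half the speed of the 4-bit configuration (36.25 versus 76.28 tokens per second), so it is a weaker fit for interactive uses like real-time chatbots, where generation speed dominates.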

Tip 2: Choose Q4_K_M for Memory Efficiency

For applications where memory efficiency is crucial, Q4_K_M strikes a balance between performance and resource consumption. Its weights take a fraction of the memory that F16 needs, which leaves room for longer contexts or larger models on the same machine, and it roughly doubles generation speed in the benchmarks above.
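To get a feel for the memory savings, the weight footprint can be estimated as parameters times bits per weight. The sketch below is a back-of-the-envelope estimate, not a measurement: the parameter count of 8 billion is taken from the model name, and the figure of about 4.8 bits per weight for the 4-bit format is an assumed approximation (block scales add overhead beyond a pure 4 bits). The function name `weight_memory_gib` is illustrative.

```python
def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Estimate the memory needed for model weights alone, in GiB.

    This ignores the KV cache, activations, and runtime overhead.
    """
    total_bytes = n_params * bits_per_weight / 8
    return total_bytes / 2**30


N_PARAMS = 8e9            # assumption: nominal parameter count from the model name
BITS_F16 = 16.0           # half precision stores 16 bits per weight
BITS_4BIT_APPROX = 4.8    # assumption: rough effective bits per weight

f16_gib = weight_memory_gib(N_PARAMS, BITS_F16)
q4_gib = weight_memory_gib(N_PARAMS, BITS_4BIT_APPROX)

print(f"F16 weights:   ~{f16_gib:.1f} GiB")
print(f"4-bit weights: ~{q4_gib:.1f} GiB")
print(f"Reduction:     ~{f16_gib / q4_gib:.1f}x")
```

Under these assumptions the 4-bit weights need roughly a third of the memory of F16. Actual file sizes depend on the exact quantization scheme, so treat the output as an order-of-magnitude guide.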

Tip 3: Optimize for Generation Performance

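While F16 leads in processing, it trails in generation: for Llama3 8B, the 4-bit configuration generates 76.28 tokens per second against 36.25 for F16. Across models, Llama2 7B with Q4_0 posts the best generation speed in the comparison table at 94.27 tokens per second. Both model selection and quantization level can significantly affect generation performance.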
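Whether processing or generation speed matters more depends on the shape of the request. The following sketch estimates end-to-end time as prompt tokens divided by processing speed plus output tokens divided by generation speed, using the Llama3 8B figures from the benchmark table. It assumes throughput stays constant regardless of prompt length and ignores model load time, so it is a rough model rather than a prediction. The names `SPEEDS`, `estimated_seconds`, and `break_even_ratio` are illustrative.

```python
# Llama3 8B throughput on the M2 Ultra, from the benchmark table (tokens/second).
SPEEDS = {
    "Q4_K_M": {"processing": 1023.89, "generation": 76.28},
    "F16": {"processing": 1202.74, "generation": 36.25},
}


def estimated_seconds(config: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough request time: prompt processing time plus generation time."""
    speed = SPEEDS[config]
    return prompt_tokens / speed["processing"] + output_tokens / speed["generation"]


def break_even_ratio() -> float:
    """Prompt-to-output token ratio above which F16 finishes first."""
    q4, f16 = SPEEDS["Q4_K_M"], SPEEDS["F16"]
    # Extra seconds F16 spends per generated token.
    generation_penalty = 1 / f16["generation"] - 1 / q4["generation"]
    # Seconds F16 saves per prompt token.
    processing_gain = 1 / q4["processing"] - 1 / f16["processing"]
    return generation_penalty / processing_gain


# Hypothetical request shapes, chosen only for illustration.
workloads = {
    "chat (200 in, 300 out)": (200, 300),
    "long summary (8000 in, 50 out)": (8000, 50),
}

for name, (prompt_tokens, output_tokens) in workloads.items():
    for config in SPEEDS:
        seconds = estimated_seconds(config, prompt_tokens, output_tokens)
        print(f"{name:<32} {config:>7}: {seconds:6.2f} s")

print(f"F16 wins only when prompt/output exceeds ~{break_even_ratio():.0f}")
```

Under this model, F16 only comes out ahead when the prompt is about a hundred times longer than the output. For typical chat-style requests the 4-bit configuration finishes first.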

Tip 4: Embrace Workarounds for Resource Constraints

In scenarios where resources are tight, consider these two approaches:

- Model Pruning: This technique removes unnecessary connections in the model, reducing its size and memory footprint.

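- Quantization: Experimenting with other quantization levels, like Q8_0, lets you trade speed and memory against accuracy. Lower-bit formats are smaller and typically faster at generation, but may reduce output quality. Q8_0 was not benchmarked here, so measure it on your own workload.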

Tip 5: Utilize a Powerful CPU alongside the GPU

On the M2 Ultra, the CPU and GPU sit on the same chip and share one pool of unified memory, so model weights do not need to be copied between separate CPU and GPU memory. Letting the GPU handle the heavy matrix computations while the CPU takes care of data loading and tokenization can improve overall performance.

FAQ

What is quantization and why is it important?

Quantization is a technique to compress the weights of a large language model (LLM) by reducing the precision of its representation. This allows the model to be stored in less memory and run faster on hardware with limited resources.
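To make "reducing the precision" concrete, here is a toy example of symmetric 4-bit quantization on a small block of weights. It is a simplified illustration of the general idea and not the scheme used by real formats such as Q4_0 or Q4_K_M, which use more elaborate block layouts. The function names and sample weights are made up for this sketch.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Symmetric quantization of one block: one float scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the valid range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights from the scale and the integer codes."""
    return [scale * c for c in codes]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.5, 0.33, 0.07]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)

print("codes:   ", codes)
print("restored:", [round(w, 3) for w in restored])
print("max error:", max(abs(a - b) for a, b in zip(weights, restored)))
```

Each weight is now stored as a 4-bit integer plus a shared scale per block, instead of a 16-bit float. The rounding error is bounded by half a quantization step, which is the accuracy cost paid for the smaller size.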

How does the M2 Ultra chip accelerate Llama3 8B performance?

The M2 Ultra owes its performance to a powerful GPU, high-bandwidth unified memory, and a design in which the CPU and GPU share that memory. This allows it to handle the computationally demanding tasks of processing prompts and generating text with Llama3 8B at the speeds shown in the benchmarks above.

Keywords

Llama3 8B, Apple M2 Ultra, LLM, performance, token generation speed, benchmarks, quantization, F16, Q4_K_M, GPU, memory, model pruning, use cases, processing, generation, CPU, developer, geeks, local, speed, optimization, GPUCores, BW