8 Tips to Maximize Llama3 70B Performance on NVIDIA RTX 4000 Ada 20GB

Introduction

Welcome, fellow AI enthusiasts! In the ever-evolving world of large language models (LLMs), the Llama3 family has taken center stage, captivating developers with its impressive capabilities. But let's face it, harnessing the power of a 70 billion parameter behemoth like Llama3 70B requires careful optimization, especially if you're venturing into the land of local deployment.

This guide looks at what it takes to run Llama3 70B on the NVIDIA RTX 4000 Ada 20GB, a workstation GPU with 20 GB of VRAM. We'll go through the available performance benchmarks, compare models and quantization formats, and finish with practical recommendations.

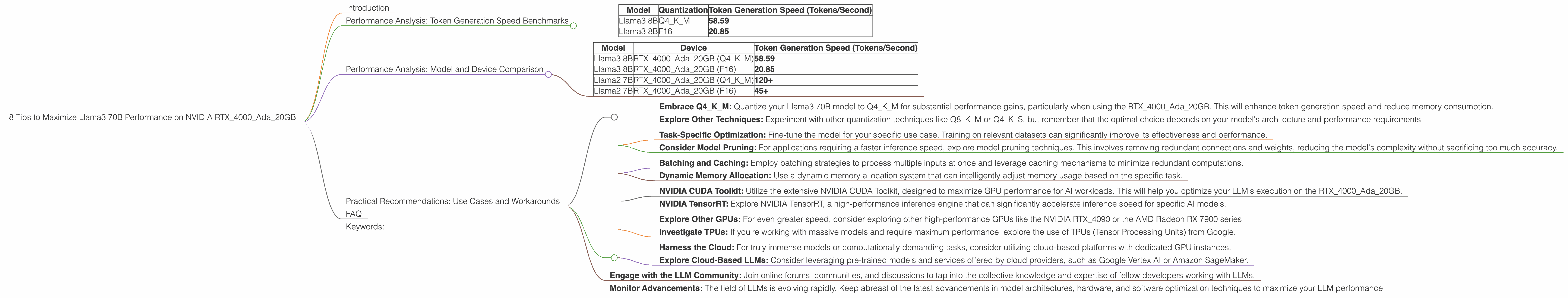

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed is the cornerstone of LLM performance. It dictates how quickly your model can churn out text, a crucial factor for responsiveness in applications like chatbots, code completion, and creative writing assistants.
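If you want to measure token generation speed on your own setup, a small timing harness is enough. The sketch below is a minimal example: `fake_generate` is a hypothetical stand-in for whatever streaming generation call your inference library provides, and is not part of any real API.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[str], Iterable[str]], prompt: str) -> float:
    """Time one generation call and return generated tokens per second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate(prompt))  # consume the whole token stream
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed if elapsed > 0 else float("inf")


def fake_generate(prompt: str) -> Iterable[str]:
    """Hypothetical stand-in for a real model: yields 50 dummy tokens."""
    for i in range(50):
        time.sleep(0.001)  # pretend each token takes about 1 ms
        yield f"tok{i}"


if __name__ == "__main__":
    rate = tokens_per_second(fake_generate, "Hello")
    print(f"{rate:.1f} tokens/second")
```

Swap `fake_generate` for your real generation function, and average over several runs and prompt lengths before comparing numbers.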

Unfortunately, no benchmark data is available for Llama3 70B on the RTX 4000 Ada 20GB. A likely reason is memory: even at 4-bit precision, a 70-billion-parameter model needs roughly 40 GB for its weights alone, about twice this card's 20 GB of VRAM. The gap also highlights the growing need for standardized benchmarks and data sharing within the LLM community.

However, we can glean valuable insights from the performance of Llama3 8B on the same GPU. Let's delve into the available numbers:

| Model | Quantization | Token Generation Speed (Tokens/Second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 58.59 |
| Llama3 8B | F16 | 20.85 |

Based on these figures, quantized weights (Q4_K_M) make token generation on the RTX 4000 Ada 20GB roughly 2.8x faster than F16 (58.59 vs. 20.85 tokens/second). This is similar to what we've observed with other LLMs and devices. Quantization stores the model's weights at lower precision, which shrinks the memory footprint and reduces the amount of data the GPU has to read for every generated token.

Think of it like this: Imagine trying to learn a new language with a massive dictionary. For faster retrieval, you might categorize and compress words into smaller groups. Quantization does the same, allowing your GPU to work more efficiently.
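To make the idea concrete, here is a toy block-wise 4-bit quantizer in plain Python. It is a deliberately simplified sketch: the real Q4_K_M format uses a more elaborate layout, and the function names and the block size of 32 are assumptions chosen for illustration.

```python
import random
from typing import List, Tuple


def quantize_block(weights: List[float], bits: int = 4) -> Tuple[float, List[int]]:
    """Symmetric quantization: one float scale per block plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Recover approximate weights from the scale and the integers."""
    return [scale * v for v in q]


def block_bits(n_weights: int, bits: int, scale_bits: int = 16) -> int:
    """Storage for one block: packed integers plus one 16-bit scale."""
    return n_weights * bits + scale_bits


if __name__ == "__main__":
    random.seed(0)
    block = [random.uniform(-1.0, 1.0) for _ in range(32)]
    scale, q = quantize_block(block)
    restored = dequantize_block(scale, q)
    max_err = max(abs(a - b) for a, b in zip(block, restored))
    ratio = (32 * 16) / block_bits(32, 4)
    print(f"max round-trip error: {max_err:.4f}")
    print(f"size vs. F16: {ratio:.2f}x smaller")
```

Each weight is off by at most half a quantization step, which is the accuracy you trade for the smaller footprint.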

Performance Analysis: Model and Device Comparison

While we lack the exact numbers for Llama3 70B on the RTX 4000 Ada 20GB, we can still draw valuable comparisons.

| Model | Device | Token Generation Speed (Tokens/Second) |
|---|---|---|
| Llama3 8B | RTX 4000 Ada 20GB (Q4_K_M) | 58.59 |
| Llama3 8B | RTX 4000 Ada 20GB (F16) | 20.85 |
| Llama2 7B | RTX 4000 Ada 20GB (Q4_K_M) | 120+ |
| Llama2 7B | RTX 4000 Ada 20GB (F16) | 45+ |

Here's what we can infer:

- The performance boost from quantization (Q4_K_M vs. F16) is similar for Llama3 8B and Llama2 7B on the RTX 4000 Ada 20GB: in both cases the quantized model is well over twice as fast. This emphasizes the importance of using the right quantization technique.

- Llama2 7B generates tokens about twice as fast as Llama3 8B. Some gap is expected, since Llama2 7B has fewer parameters, but a difference this large suggests that architectural differences between Llama2 and Llama3 (for example, Llama3's larger vocabulary) may also play a significant role, even on identical hardware.
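The speedup and its effect on response time are easy to check from the Llama3 8B figures in the table above. This is a small sketch; the 500-token response length is an arbitrary assumption for illustration.

```python
# Tokens/second for Llama3 8B, taken from the table above.
LLAMA3_8B = {"Q4_K_M": 58.59, "F16": 20.85}


def speedup(fast: float, slow: float) -> float:
    """How many times faster the first configuration is."""
    return fast / slow


def seconds_for(n_tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate n_tokens at a steady rate."""
    return n_tokens / tokens_per_second


if __name__ == "__main__":
    ratio = speedup(LLAMA3_8B["Q4_K_M"], LLAMA3_8B["F16"])
    print(f"Q4_K_M vs. F16 speedup: {ratio:.2f}x")
    for name, rate in LLAMA3_8B.items():
        # 500 tokens is an assumed response length, not a benchmark setting.
        print(f"{name}: {seconds_for(500, rate):.1f} s for 500 tokens")
```

The Llama2 7B rows are given only as lower bounds ("120+", "45+"), so they are left out of the calculation.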

Practical Recommendations: Use Cases and Workarounds

Let's dive into actionable tips to optimize your Llama3 70B experience on the RTX 4000 Ada 20GB.

1. Harness the Power of Quantization:

- Embrace Q4_K_M: Quantize your Llama3 70B model to Q4_K_M for substantial gains in token generation speed and memory consumption. Keep in mind that even at Q4_K_M the 70B weights exceed 20 GB, so on the RTX 4000 Ada 20GB expect part of the model to run from system RAM.

- Explore Other Techniques: Experiment with other quantization formats such as Q8_0 or Q4_K_S, but remember that the optimal choice depends on how much output quality you can trade for memory and speed.
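A quick back-of-the-envelope calculation shows how the quantization format decides whether a model's weights fit in 20 GB. The sketch below uses nominal parameter counts (8e9 and 70e9), and the bits-per-weight values for the K-quant formats are rough assumptions; actual file sizes vary by model and tool version.

```python
# Approximate bits per weight. F16 and Q8_0 follow from the format layout;
# the Q4_K_M and Q4_K_S values are rough assumptions.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85, "Q4_K_S": 4.6}
VRAM_GB = 20.0  # RTX 4000 Ada 20GB


def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Size of the weights alone in GB (no KV cache, no activations)."""
    return n_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for model, n_params in (("Llama3 8B", 8e9), ("Llama3 70B", 70e9)):
        for fmt, bpw in BITS_PER_WEIGHT.items():
            size = weight_gb(n_params, bpw)
            verdict = "fits" if size < VRAM_GB else "does not fit"
            print(f"{model} {fmt}: {size:6.1f} GB -> {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions every Llama3 8B variant fits, while no Llama3 70B variant does, which is why the 70B model needs a workaround such as splitting the model between GPU and system RAM.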

2. Fine-Tuning for Your Specific Needs:

- Task-Specific Optimization: Fine-tune the model for your specific use case. Training on relevant datasets can significantly improve its effectiveness and performance.

- Consider Model Pruning: For applications requiring a faster inference speed, explore model pruning techniques. This involves removing redundant connections and weights, reducing the model's complexity without sacrificing too much accuracy.

3. Leverage Memory Management:

- Batching and Caching: Employ batching strategies to process multiple inputs at once and leverage caching mechanisms to minimize redundant computations.

- Dynamic Memory Allocation: Use a dynamic memory allocation system that can intelligently adjust memory usage based on the specific task.
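Caching has a memory cost of its own: the key/value (KV) cache that avoids recomputing attention for earlier tokens grows with context length and batch size. The sketch below estimates its size; the layer and head counts are taken from the published Llama3 70B configuration as I understand it, and the 16-bit cache precision is an assumption.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   n_tokens: int, bytes_per_value: int = 2) -> int:
    """Bytes needed to cache keys and values for n_tokens (factor 2 = K and V)."""
    return 2 * n_layers * n_kv_heads * head_dim * n_tokens * bytes_per_value


# Assumed Llama3 70B shape: 80 layers, 8 KV heads (grouped-query attention),
# head dimension 128. Verify against the config of the model you actually load.
LLAMA3_70B = {"n_layers": 80, "n_kv_heads": 8, "head_dim": 128}

if __name__ == "__main__":
    for context in (2048, 8192):
        gib = kv_cache_bytes(n_tokens=context, **LLAMA3_70B) / 1024**3
        print(f"context {context}: {gib:.2f} GiB of KV cache per sequence")
```

Multiply the result by the batch size when processing several sequences at once, and budget for it alongside the weights.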

4. Embrace GPU-Specific Optimizations:

- NVIDIA CUDA Toolkit: Make sure your inference framework is built against a recent NVIDIA CUDA Toolkit so that it actually uses the GPU's compute capabilities. This is the foundation for optimizing your LLM's execution on the RTX 4000 Ada 20GB.

- NVIDIA TensorRT: Explore NVIDIA TensorRT, a high-performance inference engine that can significantly accelerate inference speed for specific AI models.
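Before tuning anything GPU-specific, it helps to confirm what the driver reports for your card. The sketch below shells out to `nvidia-smi` and parses its CSV output; the helper names are my own, and the sample line in the usage note is a hypothetical illustration, not captured output.

```python
import subprocess
from typing import Dict, List

QUERY = ["nvidia-smi", "--query-gpu=name,memory.total,memory.used",
         "--format=csv,noheader,nounits"]


def parse_gpu_csv(text: str) -> List[Dict[str, object]]:
    """Parse 'name, total, used' lines; memory values are in MiB."""
    gpus = []
    for line in text.strip().splitlines():
        # Split from the right so a comma in the GPU name cannot break parsing.
        name, total, used = [field.strip() for field in line.rsplit(",", 2)]
        gpus.append({"name": name, "total_mib": int(total), "used_mib": int(used)})
    return gpus


def query_gpus() -> List[Dict[str, object]]:
    """Run nvidia-smi and return one dict per GPU."""
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout
    return parse_gpu_csv(out)


if __name__ == "__main__":
    try:
        for gpu in query_gpus():
            free = gpu["total_mib"] - gpu["used_mib"]
            print(f"{gpu['name']}: {free} MiB free of {gpu['total_mib']} MiB")
    except (FileNotFoundError, subprocess.CalledProcessError) as err:
        print(f"nvidia-smi not available: {err}")
```

For example, `parse_gpu_csv("Example GPU, 20000, 500")` returns one entry with `total_mib` 20000 and `used_mib` 500.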

5. Consider Alternative Hardware:

- Explore Other GPUs: For even greater speed, consider exploring other high-performance GPUs like the NVIDIA RTX 4090 or the AMD Radeon RX 7900 series.

- Investigate TPUs: If you're working with massive models and require maximum performance, explore the use of TPUs (Tensor Processing Units) from Google.

6. Embrace the Power of Cloud Computing:

- Harness the Cloud: For truly immense models or computationally demanding tasks, consider utilizing cloud-based platforms with dedicated GPU instances.

- Explore Cloud-Based LLMs: Consider leveraging pre-trained models and services offered by cloud providers, such as Google Vertex AI or Amazon SageMaker.

7. Embrace Community Resources:

- Engage with the LLM Community: Join online forums, communities, and discussions to tap into the collective knowledge and expertise of fellow developers working with LLMs.

8. Stay Informed and Adapt:

- Monitor Advancements: The field of LLMs is evolving rapidly. Keep abreast of the latest advancements in model architectures, hardware, and software optimization techniques to maximize your LLM performance.

FAQ

Q: What's the difference between Llama3 70B and Llama3 8B?

A: Llama3 70B is a significantly larger model with 70 billion parameters, capable of handling more complex tasks and generating more sophisticated outputs. Llama3 8B, with its 8 billion parameters, is a smaller and potentially faster model, suitable for tasks that don't require the same level of depth.

Q: What is quantization and how does it benefit LLM performance?

A: Quantization is a technique that compresses the model's weights by reducing their precision. This reduces the memory footprint and allows for faster computations, leading to improved token generation speed and reduced inference latency.

Q: What are the best ways to optimize my Llama3 70B model for the RTX 4000 Ada 20GB?

A: Embrace quantization (Q4_K_M), fine-tune your model for your specific use case, leverage memory management techniques, and utilize GPU-specific optimizations provided by NVIDIA's CUDA Toolkit and TensorRT.

Keywords:

Llama3 70B, RTX 4000 Ada 20GB, LLM, large language model, GPU, performance, optimization, token generation speed, quantization, Q4_K_M, F16, fine-tuning, memory management, CUDA Toolkit, TensorRT, model pruning, cloud computing, inference, AI, deep learning, natural language processing, NLP.