8 Tips to Maximize Llama3 70B Performance on NVIDIA L40S 48GB

Introduction

Stepping into the world of Large Language Models (LLMs) is like entering a vast, exciting playground. With LLMs, you can generate text in many formats, translate between languages, and answer questions informatively. But like any playground, you need the right equipment to make the most of it. This article looks at how to get the best Llama3 70B performance specifically on the NVIDIA L40S_48GB, a data-center GPU designed for performance and efficiency.

Imagine you're running a heavy, high-speed train. It needs an engine powerful enough to pull the load. Here, Llama3 70B is the train, a massive language model, and the L40S_48GB is the engine that has to pull it. This guide will help you understand the performance characteristics of both and how to make them work well together.

Performance Analysis: Token Generation Speed Benchmarks

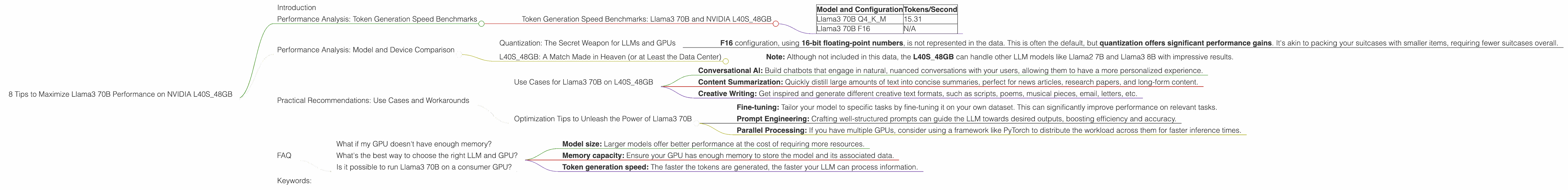

Token Generation Speed Benchmarks: Llama3 70B and NVIDIA L40S_48GB

Let's get down to the nitty-gritty. We're measuring how many tokens per second your LLM can generate. Think of tokens as the building blocks of text: whole words, punctuation marks, or even parts of words.

| Model and Configuration | Tokens/Second |
|---|---|
| Llama3 70B Q4_K_M | 15.31 |
| Llama3 70B F16 | N/A |

From the table above, the Llama3 70B Q4_K_M configuration achieves 15.31 tokens per second on the NVIDIA L40S_48GB. Q4_K_M is a llama.cpp 4-bit "k-quant" format in its medium (M) variant, which stores most weights at roughly 4 bits and keeps some tensors at higher precision. This is decent performance for a 70B model on a single GPU, but we'll explore ways to get more out of it!
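To make the number concrete, the short sketch below converts the measured throughput into wall-clock time for replies of different lengths. The function name and the reply lengths are illustrative assumptions, and the calculation ignores prompt processing time, which the benchmark figure does not cover.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady decoding rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Measured rate from the table above (Llama3 70B Q4_K_M on L40S_48GB).
Q4_K_M_TOKENS_PER_SECOND = 15.31

# Reply lengths are hypothetical examples, not benchmark data.
for reply_tokens in (100, 500, 2000):
    seconds = generation_time_seconds(reply_tokens, Q4_K_M_TOKENS_PER_SECOND)
    print(f"{reply_tokens:>5} tokens -> {seconds:6.1f} s")
```

At this rate a 500-token answer takes a little over half a minute, which is worth keeping in mind for interactive use.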

Performance Analysis: Model and Device Comparison

Quantization: The Secret Weapon for LLMs and GPUs

Quantization is like using smaller suitcases to pack the same amount of clothes. It stores the model's weights at lower precision, so the model uses less memory and becomes more manageable. Q4_K_M stores most weights at about 4 bits each instead of 16, which lets Llama3 70B fit in the GPU's 48 GB. Because token generation is largely limited by how fast the weights can be read from memory, smaller weights also tend to generate faster.

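- The F16 configuration, which uses 16-bit floating-point weights, has no result in the data (N/A). The most likely reason is memory: at 2 bytes per parameter, a 70B model needs roughly 140 GB for weights alone, far more than the card's 48 GB. F16 is often the default format, but on this GPU quantization is what makes the model runnable at all. It's akin to packing your suitcases with smaller items, requiring fewer suitcases overall.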
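The memory argument can be checked with back-of-the-envelope arithmetic. The sketch below estimates weight memory as parameters times bits per weight. The parameter count (about 70.6 billion) and the average of about 4.85 bits per weight for Q4_K_M are approximations assumed for illustration, and the estimate excludes the KV cache and activations.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


LLAMA3_70B_PARAMS = 70.6e9   # approximate parameter count (assumption)
GPU_MEMORY_GB = 48.0         # L40S_48GB

# Average bits per weight; the Q4_K_M value is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

for name, bits in BITS_PER_WEIGHT.items():
    size_gb = weight_memory_gb(LLAMA3_70B_PARAMS, bits)
    verdict = "fits" if size_gb < GPU_MEMORY_GB else "does not fit"
    print(f"{name:7s} ~{size_gb:6.1f} GB of weights -> {verdict} in {GPU_MEMORY_GB:.0f} GB")
```

Under these assumptions F16 needs roughly three times the card's memory, while Q4_K_M fits with a few gigabytes to spare.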

L40S_48GB: A Match Made in Heaven (or at Least the Data Center)

The NVIDIA L40S_48GB is a powerful data-center GPU. Its 48 GB of GDDR6 memory is enough to hold a 4-bit quantized Llama3 70B, with some headroom left for the KV cache and activations. Think of it as a spacious warehouse for storing your LLM luggage.

- Note: Although not included in this data, smaller models such as Llama2 7B and Llama3 8B need far less memory and also fit comfortably on the L40S_48GB.
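One way to put 15.31 tokens per second in context is a rough memory-bandwidth ceiling: during single-stream generation, each new token requires reading approximately all of the weights once. The sketch below assumes NVIDIA's published 864 GB/s bandwidth for the L40S and about 42.8 GB of Q4_K_M weights (70.6 billion parameters at an assumed 4.85 bits per weight). Neither figure comes from the benchmark data, and the bound ignores KV cache reads and compute time.

```python
def bandwidth_bound_tokens_per_second(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound on decode speed if every token reads all weights once."""
    return bandwidth_gb_s / weights_gb


L40S_BANDWIDTH_GB_S = 864.0   # published spec (assumption, not measured here)
Q4_K_M_WEIGHTS_GB = 42.8      # estimated weight size (assumption)
MEASURED_TOKENS_PER_SECOND = 15.31

ceiling = bandwidth_bound_tokens_per_second(L40S_BANDWIDTH_GB_S, Q4_K_M_WEIGHTS_GB)
efficiency = MEASURED_TOKENS_PER_SECOND / ceiling

print(f"Bandwidth ceiling: ~{ceiling:.1f} tokens/s")
print(f"Measured rate is ~{efficiency:.0%} of that ceiling")
```

If these assumptions hold, the measured rate is already a large fraction of what the memory system allows, so big gains are more likely to come from smaller weights or more hardware than from software tuning alone.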

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 70B on L40S_48GB

The L40S_48GB and Llama3 70B combination is ideal for:

- Conversational AI: Build chatbots that engage in natural, nuanced conversations with your users, allowing them to have a more personalized experience.

- Content Summarization: Quickly distill large amounts of text into concise summaries, perfect for news articles, research papers, and long-form content.

- Creative Writing: Get inspired and generate creative text in many formats, such as scripts, poems, song lyrics, emails, and letters.

Optimization Tips to Unleash the Power of Llama3 70B

- Fine-tuning: Tailor your model to specific tasks by fine-tuning it on your own dataset. This can significantly improve output quality on those tasks, though it does not by itself change token generation speed.

- Prompt Engineering: Crafting well-structured prompts can guide the LLM towards desired outputs, boosting efficiency and accuracy.

- Parallel Processing: If you have multiple GPUs, consider using a framework like PyTorch to distribute the workload across them for faster inference times.
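For the multi-GPU case, one simple way to distribute a model is to assign whole transformer layers to each GPU in proportion to its memory. The sketch below is a hypothetical helper (not part of PyTorch or any other framework) that computes such a split for Llama3 70B's 80 layers; real frameworks provide their own placement mechanisms, and this only illustrates the arithmetic.

```python
def split_layers(num_layers: int, gpu_memory_gb: list[float]) -> list[int]:
    """Assign layers to GPUs proportionally to memory (largest-remainder rounding)."""
    total = sum(gpu_memory_gb)
    if total <= 0:
        raise ValueError("total GPU memory must be positive")
    exact = [num_layers * mem / total for mem in gpu_memory_gb]
    counts = [int(share) for share in exact]
    leftover = num_layers - sum(counts)
    # Hand remaining layers to the GPUs with the largest fractional share.
    by_remainder = sorted(
        range(len(exact)), key=lambda i: exact[i] - counts[i], reverse=True
    )
    for i in by_remainder[:leftover]:
        counts[i] += 1
    return counts


LLAMA3_70B_LAYERS = 80

print(split_layers(LLAMA3_70B_LAYERS, [48.0, 48.0]))  # two L40S_48GB cards
print(split_layers(LLAMA3_70B_LAYERS, [48.0, 24.0]))  # mixed 48 GB + 24 GB
```

A layer-wise split like this mainly adds memory capacity, for example to run a less aggressive quantization; whether it also improves speed depends on the framework and interconnect.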

FAQ

What if my GPU doesn't have enough memory?

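Gradient accumulation is sometimes suggested here, but it is a training technique and does not reduce the memory needed for inference. For inference, you can use a more aggressive quantization (fewer bits per weight), offload some layers to CPU memory at the cost of speed, or split the model across multiple GPUs. This is like breaking down a large suitcase into smaller ones to fit them in the car.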
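For inference, a common workaround when the weights exceed GPU memory is to keep only some layers on the GPU and run the rest from system RAM. The sketch below estimates how many layers fit. It assumes 80 equally sized layers, about 42.8 GB of Q4_K_M weights, and a 4 GB reserve for the KV cache and runtime overhead; all three are illustrative assumptions, and the function is hypothetical.

```python
def layers_on_gpu(vram_gb: float, weights_gb: float,
                  num_layers: int, reserve_gb: float) -> int:
    """Estimate how many equally sized layers fit in VRAM after a reserve."""
    per_layer_gb = weights_gb / num_layers
    usable_gb = max(0.0, vram_gb - reserve_gb)
    return min(num_layers, int(usable_gb / per_layer_gb))


WEIGHTS_GB = 42.8   # estimated Q4_K_M size for Llama3 70B (assumption)
NUM_LAYERS = 80     # Llama3 70B transformer layers
RESERVE_GB = 4.0    # KV cache and overhead (assumption)

for vram in (16.0, 24.0, 48.0):
    n = layers_on_gpu(vram, WEIGHTS_GB, NUM_LAYERS, RESERVE_GB)
    print(f"{vram:4.0f} GB GPU -> about {n} of {NUM_LAYERS} layers on the GPU")
```

Layers left on the CPU run much more slowly, so throughput drops as the offloaded share grows.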

What's the best way to choose the right LLM and GPU?

It depends on your use case! You'll want to consider:

- Model size: Larger models generally produce higher-quality output, at the cost of more memory and slower generation.

- Memory capacity: Ensure your GPU has enough memory to store the model and its associated data.

- Token generation speed: The more tokens per second the setup generates, the shorter the wait for each response.

Is it possible to run Llama3 70B on a consumer GPU?

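Potentially, but it won't be as efficient or fast. Most consumer cards have 24 GB of memory or less, so even a 4-bit Llama3 70B will not fit entirely on one card; you would need to offload some layers to system RAM or use a lower-bit quantization. Data-center GPUs like the NVIDIA A100 or H100 are better suited to large LLMs because they have more memory and higher memory bandwidth.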

Keywords:

Llama3 70B, NVIDIA L40S_48GB, LLM, token generation speed, quantization, performance, Q4_K_M, model size, GPU, memory, fine-tuning, prompt engineering, parallel processing, conversational AI, content summarization, creative writing, gradient accumulation, use cases, limitations, optimization