8 Tips to Maximize Llama3 70B Performance on NVIDIA 4090 24GB

This article is a deep dive into the performance of Llama3 70B on the potent NVIDIA 4090_24GB GPU. We'll break down the model's performance, analyze its strengths and limitations, uncover important insights, and provide actionable tips to optimize your setup. This guide will serve as a roadmap for developers and researchers keen to harness the power of Llama3 70B for their own local applications. Let's dive in.

Introduction

Large Language Models (LLMs) are revolutionizing the way we interact with technology. These powerful AI systems can generate creative content, translate languages, write code, and perform numerous other tasks. Among these LLMs, Llama3 70B stands out due to its impressive capabilities and open-source nature.

The NVIDIA 4090_24GB, with its 24 GB of VRAM and substantial computational power, is a capable platform for running LLMs locally. For a model as large as Llama3 70B, however, memory becomes the main constraint, and the optimization techniques you choose can make a significant difference to performance.

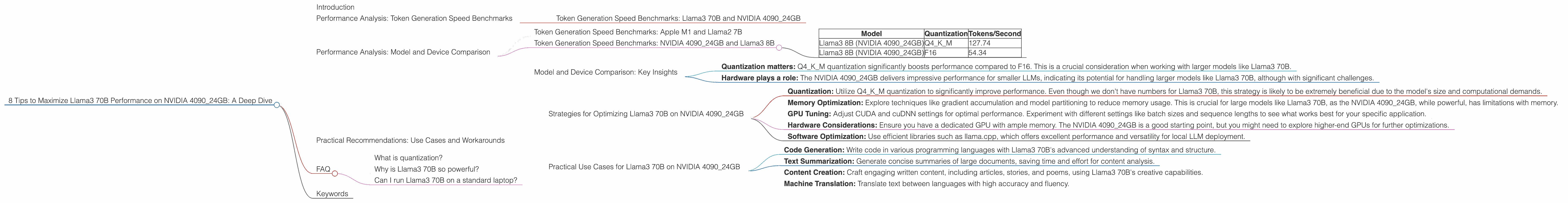

Performance Analysis: Token Generation Speed Benchmarks

Let's start by examining the core aspect of LLM performance: token generation speed. This metric measures the rate at which the model can produce text, influencing the speed and responsiveness of your applications.
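
As a rough illustration of how this metric can be measured, the sketch below times a single generation call and divides the number of generated tokens by the elapsed time. The `fake_generate` function is a hypothetical stand-in for a real model backend and is not part of any library; swap in your own streaming generation call.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(
    generate: Callable[[str, int], Iterable[str]],
    prompt: str,
    max_tokens: int,
) -> float:
    """Time one generation call and return generated tokens per second."""
    start = time.perf_counter()
    # Consume the stream so that every token is actually produced.
    n_tokens = sum(1 for _ in generate(prompt, max_tokens))
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed if elapsed > 0 else float("inf")


def fake_generate(prompt: str, max_tokens: int):
    """Hypothetical stand-in for a model: yields one dummy token per step."""
    for i in range(max_tokens):
        time.sleep(0.001)  # pretend each token takes about 1 ms to compute
        yield f"tok{i}"


if __name__ == "__main__":
    rate = tokens_per_second(fake_generate, "Hello", 50)
    print(f"{rate:.1f} tokens/second")
```

For real measurements it usually helps to run a warm-up generation first and to average over several runs, since the first call often includes model loading and cache setup.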

Token Generation Speed Benchmarks: Llama3 70B and NVIDIA 4090_24GB

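Unfortunately, we don't have any benchmark data for Llama3 70B on the NVIDIA 4090_24GB. The most likely reason is the model's size: even with 4-bit quantization, the weights of a 70-billion-parameter model take up well over 24 GB, so the model cannot run entirely in this GPU's memory.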
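
A back-of-the-envelope estimate makes the size problem concrete. The sketch below multiplies the parameter count by the bits stored per weight. The figures used are assumptions for illustration: a nominal 70 billion parameters, 16 bits per weight for F16, and roughly 4.8 bits per weight as an approximate average for Q4_K_M (the real average varies by model because the format mixes precisions).

```python
GIB = 1024 ** 3  # bytes per GiB


def weight_size_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / GIB


N_PARAMS_70B = 70e9   # nominal parameter count (assumption)
VRAM_GIB = 24         # memory on the NVIDIA 4090_24GB

# Approximate bits per weight; the Q4_K_M value is an assumed average.
for name, bits in [("F16", 16.0), ("Q4_K_M (approx.)", 4.8)]:
    size = weight_size_gib(N_PARAMS_70B, bits)
    verdict = "fits" if size <= VRAM_GIB else "does not fit"
    print(f"{name}: {size:.1f} GiB -> {verdict} in {VRAM_GIB} GiB")
```

Under these assumptions the weights come to roughly 130 GiB at F16 and roughly 39 GiB at 4-bit, before counting the KV cache and runtime buffers. Both are far above 24 GB.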

Performance Analysis: Model and Device Comparison

Although we cannot directly compare the performance of Llama3 70B with its smaller cousin, Llama3 8B, it's still insightful to examine the performance of the smaller model on the same device. This will give us a sense of the potential challenges and strategies for running the larger model.

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

The Apple M1, a low-power chip designed for laptops and tablets, achieves a respectable token generation speed of 150.87 tokens/second for Llama2 7B. This shows how much well-optimized hardware and software can deliver, and hints at the gains available from dedicated GPU architectures.

Token Generation Speed Benchmarks: NVIDIA 4090_24GB and Llama3 8B

The NVIDIA 4090_24GB boasts exceptional performance for Llama3 8B, particularly when using the Q4_K_M quantization with 127.74 tokens/second. This highlights the ability of the GPU to handle the model's complexity efficiently.

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B (NVIDIA 4090_24GB) | Q4_K_M | 127.74 |
| Llama3 8B (NVIDIA 4090_24GB) | F16 | 54.34 |

Model and Device Comparison: Key Insights

- Quantization matters: Q4_K_M quantization runs about 2.35 times faster than F16 on this GPU (127.74 vs. 54.34 tokens/second). This is a crucial consideration when working with larger models like Llama3 70B.

- Hardware plays a role: The NVIDIA 4090_24GB delivers impressive performance for smaller LLMs, indicating its potential for handling larger models like Llama3 70B, although with significant challenges.
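
The gap between the two quantization levels can be worked out directly from the Llama3 8B figures in the table. This short sketch computes the speedup and the corresponding time per token; the dictionary name is just an illustrative choice.

```python
# Llama3 8B on NVIDIA 4090_24GB, tokens/second from the table above.
benchmarks = {"Q4_K_M": 127.74, "F16": 54.34}

speedup = benchmarks["Q4_K_M"] / benchmarks["F16"]
print(f"Q4_K_M is {speedup:.2f}x faster than F16")

# Latency per generated token is the inverse of throughput.
for name, tps in benchmarks.items():
    print(f"{name}: {1000 / tps:.2f} ms per token")
```

Whether the same ratio would hold for Llama3 70B is unknown, since no 70B measurements are available for this GPU.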

Practical Recommendations: Use Cases and Workarounds

While we don't have specific benchmarks for Llama3 70B on the NVIDIA 4090_24GB, we can still gather insights from existing data and make informed recommendations.

Strategies for Optimizing Llama3 70B on NVIDIA 4090_24GB

- Quantization: Use Q4_K_M quantization to significantly improve performance and shrink the model's memory footprint. Even though we don't have numbers for Llama3 70B, this strategy is likely to be extremely beneficial due to the model's size and computational demands.

- Memory Optimization: Explore techniques like model partitioning (keeping some layers in GPU memory and the rest in system RAM) and a shorter context window to reduce memory usage. This is crucial for large models like Llama3 70B, as the NVIDIA 4090_24GB, while powerful, has only 24 GB of memory.

- GPU Tuning: Adjust CUDA and cuDNN settings for optimal performance. Experiment with different settings like batch sizes and sequence lengths to see what works best for your specific application.

- Hardware Considerations: Ensure you have a dedicated GPU with ample memory. The NVIDIA 4090_24GB is a good starting point, but you might need to explore higher-end GPUs for further optimizations.

- Software Optimization: Use efficient libraries such as llama.cpp, which offers excellent performance and versatility for local LLM deployment.
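
To make the memory-related strategies above more concrete, here is a minimal planning sketch for partial GPU offload. It estimates the KV cache size for a given context length and then how many transformer layers might fit in VRAM. Everything here is an assumption for illustration: the architecture values (80 layers, 8 KV heads, head dimension 128) are taken to match Llama 3 70B's published configuration, the 39.1 GiB model size is a rough 4-bit estimate, the 1.5 GiB overhead is a guess, and layers are treated as equal in size.

```python
import math

GIB = 1024 ** 3


def kv_cache_gib(n_ctx: int, n_layers: int = 80, n_kv_heads: int = 8,
                 head_dim: int = 128, bytes_per_elem: int = 2) -> float:
    """Approximate KV cache size: keys and values for every layer and token."""
    elems = 2 * n_layers * n_kv_heads * head_dim * n_ctx  # 2 = keys + values
    return elems * bytes_per_elem / GIB


def gpu_layers_that_fit(model_gib: float, n_layers: int,
                        vram_gib: float, reserve_gib: float) -> int:
    """Number of equally sized layers that fit after reserving some VRAM."""
    per_layer = model_gib / n_layers
    usable = max(vram_gib - reserve_gib, 0.0)
    return min(n_layers, math.floor(usable / per_layer))


if __name__ == "__main__":
    n_ctx = 8192
    # Reserve room for the KV cache plus an assumed 1.5 GiB of buffers.
    reserve = kv_cache_gib(n_ctx) + 1.5
    layers = gpu_layers_that_fit(model_gib=39.1, n_layers=80,
                                 vram_gib=24.0, reserve_gib=reserve)
    print(f"KV cache at {n_ctx} tokens: {kv_cache_gib(n_ctx):.2f} GiB")
    print(f"Layers to try on the GPU: {layers} of 80")
```

In llama.cpp, the resulting number would be a starting value for the `--n-gpu-layers` (`-ngl`) option, with the remaining layers running on the CPU. Treat it as a first guess and adjust based on actual memory usage, since real layers and buffers are not perfectly uniform.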

Practical Use Cases for Llama3 70B on NVIDIA 4090_24GB

- Code Generation: Write code in various programming languages with Llama3 70B's advanced understanding of syntax and structure.

- Text Summarization: Generate concise summaries of large documents, saving time and effort for content analysis.

- Content Creation: Craft engaging written content, including articles, stories, and poems, using Llama3 70B's creative capabilities.

- Machine Translation: Translate text between languages with high accuracy and fluency.

FAQ

What is quantization?

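Quantization is a technique for reducing the size of a large language model without sacrificing too much accuracy. It works by storing the model's weights at lower numerical precision, for example as 4-bit integers instead of 16-bit floating-point values. Think of it like compressing a photo: you reduce the file size but still retain the essential details.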
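
The idea can be shown with a toy example. The sketch below applies a simple symmetric 4-bit scheme to a handful of weights: each value is rounded to an integer between -7 and 7 and a single shared scale is kept to convert back. This is a deliberately simplified illustration, not the Q4_K_M format, which uses a more elaborate block-wise layout.

```python
def quantize_4bit(weights):
    """Map floats to integers in [-7, 7] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-7, min(7, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.70, -0.32, 0.11, 0.0, -0.58]
    q, scale = quantize_4bit(weights)
    restored = dequantize(q, scale)
    for w, r in zip(weights, restored):
        print(f"original {w:+.3f} -> restored {r:+.3f}")
```

Each restored weight differs from the original by at most half the scale, which is the accuracy traded for the smaller storage size.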

Why is Llama3 70B so powerful?

Llama3 70B is powerful because its 70 billion parameters, trained on a very large text corpus, let it capture far more knowledge and nuance than smaller models. This enables it to generate creative text, understand complex queries, and perform sophisticated tasks.

Can I run Llama3 70B on a standard laptop?

It's unlikely you can run Llama3 70B on a standard laptop due to its computational demands. You will need a dedicated GPU and a significant amount of memory.

Keywords

Llama3 70B, NVIDIA 4090_24GB, LLMs, Performance, Quantization, Token Generation Speed, GPU, Memory Optimization, Hardware Considerations, Software Optimization, Use Cases