8 Tips to Maximize Llama3 70B Performance on NVIDIA 4080 16GB

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and rightfully so. These powerful AI models can generate human-like text, translate languages, write different kinds of creative content, and answer questions informatively. Running them locally can be a challenge, however, especially with a model like Llama 3 70B and its 70 billion parameters. This article looks at how to get the most performance out of an NVIDIA RTX 4080 16GB GPU, with practical tips and insights for running Llama3 70B.

Think of it like a powerful racing car engine, capable of incredible speeds. Without tuning the engine settings, using the right fuel, and optimizing the car's aerodynamics, you'll only get a fraction of its true potential. This article is your guide to tuning your LLM engine so it runs as fast as your hardware allows.

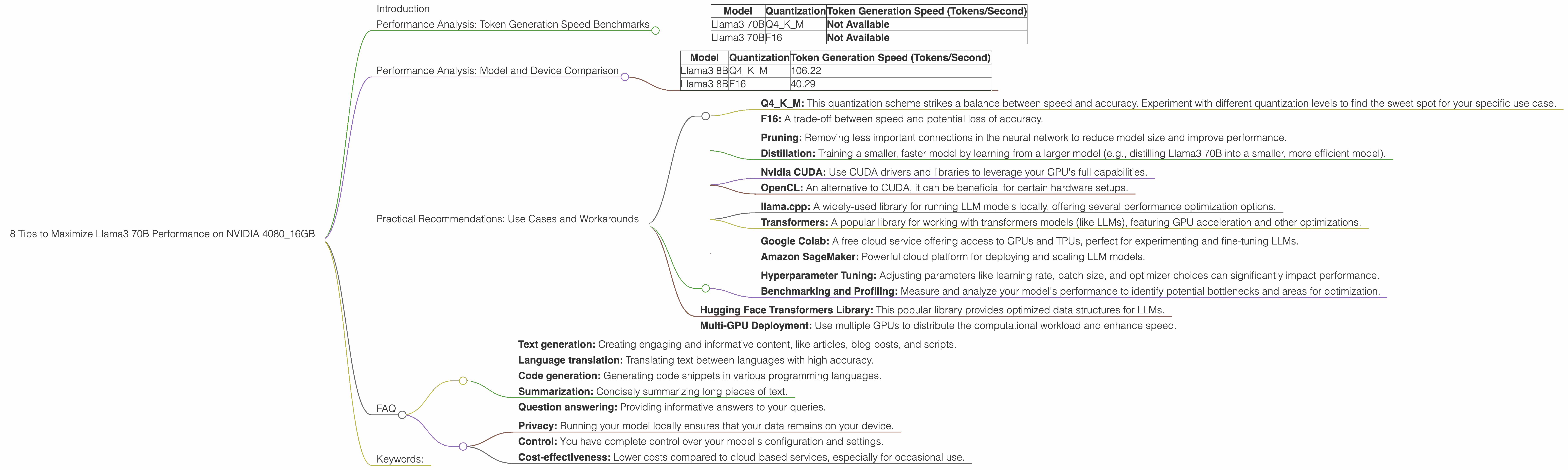

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed is a crucial metric that determines how quickly your model can produce text. It's akin to the words per minute (WPM) of a human typist, only on a much grander scale.

Here's a breakdown of the token generation speed benchmarks for Llama3 70B on the NVIDIA RTX 4080 16GB:

| Model | Quantization | Token Generation Speed (tokens/s) |
|---|---|---|
| Llama3 70B | Q4_K_M | Not available |
| Llama3 70B | F16 | Not available |

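Unfortunately, no benchmark data is available for the Llama3 70B model on the NVIDIA RTX 4080 16GB at this time.

The memory math explains why this combination is hard to benchmark. At 16 bits per weight, 70 billion parameters take about 140 GB, and even a 4-bit quantization such as Q4_K_M needs more than 40 GB, well beyond the card's 16 GB of VRAM. The model can only run with a large share of its layers offloaded to system RAM and the CPU, which slows generation considerably. Benchmarks for that partial-offload setup are needed before drawing firm conclusions.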
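A quick way to see why the 70B model is hard to run on this card is to estimate the size of its weights as parameters × bits per weight ÷ 8. The sketch below assumes Q4_K_M averages about 4.85 bits per weight (an approximation; the exact figure varies by model and llama.cpp version) and ignores the KV cache and runtime overhead, so real memory use is somewhat higher.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# Assumption: Q4_K_M averages about 4.85 bits per weight in llama.cpp; the exact
# figure varies a little by model and llama.cpp version. KV cache, activations,
# and CUDA context overhead are NOT included.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.85,  # approximate average, see note above
}

GPU_VRAM_GB = 16.0  # nominal VRAM of the RTX 4080 16GB


def weight_size_gb(n_params: float, quant: str) -> float:
    """Approximate size of the model weights in decimal gigabytes (1e9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


if __name__ == "__main__":
    for quant in BITS_PER_WEIGHT:
        size = weight_size_gb(70e9, quant)
        verdict = "fits" if size <= GPU_VRAM_GB else "does not fit"
        print(f"Llama3 70B {quant}: ~{size:.1f} GB of weights, {verdict} in {GPU_VRAM_GB:.0f} GB of VRAM")
```

Under these assumptions the F16 weights come to 140 GB and the Q4_K_M weights to roughly 42.4 GB, both far above the 16 GB available on the GPU.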

Performance Analysis: Model and Device Comparison

To better understand how model size affects performance, we can look at the smaller Llama3 8B model, for which benchmarks on this GPU are available, and compare it with the 70B model.

Here's a table showing the token generation speeds for Llama3 8B on the NVIDIA RTX 4080 16GB:

| Model | Quantization | Token Generation Speed (tokens/s) |
|---|---|---|
| Llama3 8B | Q4_K_M | 106.22 |
| Llama3 8B | F16 | 40.29 |

Key Observations:

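- Quantization Matters: With Q4_K_M quantization, Llama3 8B generates tokens about 2.6 times faster than with F16 (106.22 vs. 40.29 tokens/second). Token generation is largely limited by memory bandwidth, because producing each new token requires reading the model's weights from VRAM. Q4_K_M stores each weight in roughly 4 to 5 bits instead of 16, so far less data has to move per token.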

- Hardware Power: The NVIDIA RTX 4080 16GB handles Llama3 8B well, delivering over 100 tokens/second with Q4_K_M.
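The bandwidth argument can be checked against the Llama3 8B measurements with a back-of-the-envelope ceiling: if every token streams all weights from VRAM once, tokens/s cannot exceed bandwidth ÷ bytes of weights. The bandwidth (about 716.8 GB/s), parameter count (about 8.03 billion), and Q4_K_M bits per weight (about 4.85) are assumptions added for illustration, not part of the benchmark.

```python
# Measured token generation speeds for Llama3 8B on the RTX 4080 16GB (from the table).
MEASURED_TPS = {"Q4_K_M": 106.22, "F16": 40.29}

# Assumptions, not part of the benchmark: ~716.8 GB/s memory bandwidth for the
# RTX 4080, ~8.03e9 parameters for Llama3 8B, ~4.85 bits per weight for Q4_K_M.
BANDWIDTH_GB_S = 716.8
N_PARAMS = 8.03e9
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}


def bandwidth_ceiling_tps(n_params: float, bits_per_weight: float, bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/s if generating each token streams every weight from VRAM once."""
    bytes_per_token = n_params * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / bytes_per_token


# Ratio of the two measured speeds.
speedup = MEASURED_TPS["Q4_K_M"] / MEASURED_TPS["F16"]

if __name__ == "__main__":
    print(f"Q4_K_M vs F16 speedup: {speedup:.2f}x")
    for quant, measured in MEASURED_TPS.items():
        ceiling = bandwidth_ceiling_tps(N_PARAMS, BITS_PER_WEIGHT[quant], BANDWIDTH_GB_S)
        print(f"{quant}: measured {measured:.2f} tok/s, estimated ceiling ~{ceiling:.0f} tok/s")
```

Both measured speeds land below their estimated ceilings, which is consistent with decoding being bandwidth-bound; the ceiling ignores compute time, the KV cache, and kernel overheads.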

Comparing Llama3 8B and 70B:

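- Larger Model = More Resources: Llama3 70B has nearly nine times as many parameters as Llama3 8B, so each generated token requires reading far more weight data. On a 16 GB card the bigger problem is capacity: the 70B model does not fit in VRAM at either quantization level, so part of it must run from system RAM on the CPU, and generation can be expected to be much slower than with Llama3 8B.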
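With partial offloading, a useful first question is how many of the 70B model's layers can stay on the GPU. This is a minimal sketch under stated assumptions: 80 transformer layers (from the published Llama3 70B configuration), about 42.4 GB of Q4_K_M weights split evenly across them (an estimate, not a measured file size), and 2 GB of VRAM held back for the KV cache and CUDA context (an arbitrary illustrative reserve). The helper `layers_that_fit` is hypothetical.

```python
import math

# Assumptions (illustrative, not measured): Llama3 70B has 80 transformer layers,
# its Q4_K_M weights are ~42.4 GB and split evenly across those layers, and
# ~2 GB of VRAM is kept free for the KV cache and CUDA context.
N_LAYERS = 80
MODEL_GB = 42.4
VRAM_GB = 16.0
RESERVE_GB = 2.0


def layers_that_fit(model_gb: float, n_layers: int, vram_gb: float, reserve_gb: float) -> int:
    """Number of equal-sized layers that fit in VRAM after holding back a reserve."""
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


if __name__ == "__main__":
    on_gpu = layers_that_fit(MODEL_GB, N_LAYERS, VRAM_GB, RESERVE_GB)
    print(f"~{on_gpu} of {N_LAYERS} layers on the GPU, "
          f"the remaining {N_LAYERS - on_gpu} on the CPU")
```

Under these assumptions only about a third of the layers fit on the GPU; the rest are limited by system-RAM bandwidth, which is why the 70B model is much slower on this card than the 8B model.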

Practical Recommendations: Use Cases and Workarounds

1. Optimize Quantization:

- Q4_K_M: This quantization scheme strikes a balance between speed and accuracy. Experiment with different quantization levels to find the sweet spot for your specific use case.

- F16: Half-precision weights stay closest to the original model's accuracy but need the most memory and run slowest. For Llama3 70B, the F16 weights alone take about 140 GB.

2. Explore Model Pruning and Knowledge Distillation:

- Pruning: Removing less important connections in the neural network to reduce model size and improve performance.

- Distillation: Training a smaller, faster model by learning from a larger model (e.g., distilling Llama3 70B into a smaller, more efficient model).
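The two ideas above can be sketched in a few lines of plain Python. The pruning function is unstructured magnitude pruning (zeroing the smallest-magnitude weights), and the loss is the common temperature-softened KL divergence used to train a student on a teacher's outputs. Both are illustrative toy versions on short lists, not a recipe for pruning or distilling Llama3 70B; the function names are hypothetical.

```python
import math


def magnitude_prune(weights, sparsity):
    """Return a copy of `weights` with the smallest-magnitude fraction set to zero."""
    k = int(len(weights) * sparsity)  # how many weights to remove
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])  # indices of the k smallest |w|
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over logits divided by a temperature."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) between temperature-softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)  # soft targets from the large model
    q = softmax(student_logits, temperature)  # predictions of the small model
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


if __name__ == "__main__":
    print(magnitude_prune([0.1, -2.0, 0.05, 3.0], sparsity=0.5))
    print(distillation_loss([2.0, 1.0, 0.1], [3.0, 1.0, 0.2]))
```

Note that zeroing scattered weights shrinks the model only if it is stored in a sparse format, and it speeds up inference only with kernels that exploit that sparsity; a dense GPU matrix multiply still processes the zeros.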

3. Embrace GPU Acceleration:

- NVIDIA CUDA: Install NVIDIA's CUDA drivers and libraries and use a CUDA-enabled build of your inference library so it can use the GPU's full capabilities.

- OpenCL: An alternative to CUDA that can be useful on certain hardware setups.
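Before loading a model, it helps to confirm that the NVIDIA driver sees the card and to check how much VRAM is free. One way is to call `nvidia-smi`, which ships with the driver. This is a minimal sketch: the helper `parse_gpu_lines` is hypothetical, and it assumes the CSV output of `--format=csv,noheader,nounits`, where memory is reported in MiB.

```python
import shutil
import subprocess

# Ask nvidia-smi for one CSV line per GPU: name, total memory, used memory (MiB).
QUERY = [
    "nvidia-smi",
    "--query-gpu=name,memory.total,memory.used",
    "--format=csv,noheader,nounits",
]


def parse_gpu_lines(text):
    """Parse 'name, total_MiB, used_MiB' lines into a list of dicts."""
    gpus = []
    for line in text.strip().splitlines():
        name, total, used = [field.strip() for field in line.split(",")]
        gpus.append({"name": name, "total_mib": int(total), "used_mib": int(used)})
    return gpus


if __name__ == "__main__":
    if shutil.which("nvidia-smi") is None:
        print("nvidia-smi not found: the NVIDIA driver is missing or not on PATH")
    else:
        out = subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout
        for gpu in parse_gpu_lines(out):
            free = gpu["total_mib"] - gpu["used_mib"]
            print(f"{gpu['name']}: {free} MiB free of {gpu['total_mib']} MiB")
```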

4. Optimize Inference Libraries:

- llama.cpp: A widely used library for running LLMs locally, with several performance options, including offloading a chosen number of layers to the GPU (the `--n-gpu-layers` / `-ngl` option) while the rest run on the CPU.

- Transformers: Hugging Face's popular library for working with transformer models such as LLMs, with GPU acceleration and other optimizations.
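As a sketch of the llama.cpp route from Python, the snippet uses the llama-cpp-python bindings (an assumption; the article does not prescribe a particular binding). Its `Llama` class takes `n_gpu_layers` (layers offloaded to the GPU, like `-ngl` on the command line; `-1` offloads all), `n_ctx` (context window), and `n_threads` (CPU threads). The helper `llama_kwargs`, the GGUF file name, and the value of 26 GPU layers are hypothetical placeholders.

```python
import os


def llama_kwargs(model_path, n_gpu_layers, n_ctx=4096, n_threads=None):
    """Build keyword arguments for llama_cpp.Llama (hypothetical helper)."""
    return {
        "model_path": model_path,
        "n_gpu_layers": n_gpu_layers,  # layers offloaded to the GPU; the rest run on the CPU
        "n_ctx": n_ctx,  # context window; larger values need more KV-cache memory
        "n_threads": n_threads or os.cpu_count(),  # CPU threads for non-offloaded layers
    }


if __name__ == "__main__":
    model_path = "Meta-Llama-3-70B-Instruct.Q4_K_M.gguf"  # hypothetical file name
    try:
        from llama_cpp import Llama  # pip install llama-cpp-python (built with CUDA)
    except ImportError:
        Llama = None
    if Llama is None or not os.path.exists(model_path):
        print("llama-cpp-python or the model file is missing; nothing to run.")
    else:
        llm = Llama(**llama_kwargs(model_path, n_gpu_layers=26))
        out = llm("Q: What does quantization do to a model? A:", max_tokens=64)
        print(out["choices"][0]["text"])
```

A practical approach is to raise `n_gpu_layers` until the model no longer loads or VRAM runs out, then step back a little to leave room for the KV cache.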

5. Consider Cloud Services:

- Google Colab: A cloud notebook service with a free tier that offers access to GPUs and TPUs, useful for experimenting with and fine-tuning LLMs.

- Amazon SageMaker: A cloud platform for deploying and scaling LLMs.

6. Experiment and Fine-Tune:

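- Hyperparameter Tuning: When fine-tuning, settings like learning rate, batch size, and optimizer choice can significantly affect results. For inference speed, the settings that matter most are batch size, context length, and how many layers run on the GPU.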

- Benchmarking and Profiling: Measure and analyze your model's performance to identify potential bottlenecks and areas for optimization.
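A simple benchmark separates time to first token (dominated by prompt processing) from decode speed in tokens/second, the metric used in the tables above. This is a minimal sketch: `measure_generation` is a hypothetical helper that accepts any callable returning an iterable with one item per generated token.

```python
import time
from typing import Callable, Iterable, Optional, Tuple


def measure_generation(generate: Callable[[], Iterable[object]]) -> Tuple[Optional[float], float]:
    """Return (time to first token in seconds, decode speed in tokens/s).

    `generate` must return an iterable that yields one item per generated token.
    """
    start = time.perf_counter()
    first_token_s = None
    count = 0
    for _ in generate():
        count += 1
        if first_token_s is None:
            first_token_s = time.perf_counter() - start
    total_s = time.perf_counter() - start
    if count < 2:
        return first_token_s, 0.0
    # Exclude the first token: its latency is dominated by prompt processing.
    decode_s = total_s - first_token_s
    return first_token_s, ((count - 1) / decode_s if decode_s > 0 else 0.0)
```

With llama-cpp-python, for example, `lambda: llm(prompt, max_tokens=128, stream=True)` yields roughly one chunk per token, so it can be passed in directly (assuming an `llm` object created as in that library). Run several times and discard the first run, which includes warm-up costs.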

7. Use Efficient Data Structures:

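- Hugging Face Transformers Library: This popular library provides optimized data structures for LLM inference, such as the key-value (KV) cache, which stores the attention keys and values of previous tokens so they are not recomputed for every new token.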
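The KV cache grows with context length and competes with the weights for VRAM, so it is worth sizing. Its size is 2 (keys and values) × layers × KV heads × head dimension × tokens × bytes per value. The configuration numbers here are assumed from the published Llama3 model configs (70B: 80 layers, 8 KV heads via grouped-query attention, head dimension 128; 8B: 32 layers, same heads and dimension), with 16-bit cache entries; the helper name is hypothetical.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, n_tokens, bytes_per_value=2):
    """Bytes needed for keys + values across all layers for n_tokens of context."""
    return 2 * n_layers * n_kv_heads * head_dim * n_tokens * bytes_per_value


if __name__ == "__main__":
    GIB = 2**30
    # Assumed configs: (layers, KV heads, head dim) from the published model configs.
    for name, (layers, kv_heads, head_dim) in {
        "Llama3 70B": (80, 8, 128),
        "Llama3 8B": (32, 8, 128),
    }.items():
        size = kv_cache_bytes(layers, kv_heads, head_dim, n_tokens=8192)
        print(f"{name}: {size / GIB:.2f} GiB of FP16 KV cache for 8192 tokens")
```

Under these assumptions a full 8192-token context costs 2.5 GiB for the 70B model and 1 GiB for the 8B model, memory that is no longer available for GPU-offloaded layers.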

8. Leverage Parallel Processing:

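- Multi-GPU Deployment: Use multiple GPUs to split the model's layers and workload between them. For Llama3 70B, pooling the VRAM of several cards also lets more of the model, or all of it, stay on the GPUs instead of in system RAM.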
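A simple way to divide a model across GPUs is to give each card a share of the layers in proportion to its VRAM. This sketch assumes that proportional scheme; llama.cpp exposes a comparable proportional split through its `--tensor-split` option, but the helper `split_layers` here is hypothetical and does not reproduce llama.cpp's exact logic.

```python
def split_layers(n_layers, vram_gb):
    """Assign layer counts to GPUs in proportion to each GPU's VRAM."""
    total = sum(vram_gb)
    raw = [n_layers * v / total for v in vram_gb]  # ideal fractional shares
    counts = [int(r) for r in raw]  # round every share down first
    # Hand the leftover layers to the GPUs with the largest fractional remainders.
    leftover = n_layers - sum(counts)
    by_remainder = sorted(range(len(raw)), key=lambda i: raw[i] - counts[i], reverse=True)
    for i in by_remainder[:leftover]:
        counts[i] += 1
    return counts


if __name__ == "__main__":
    # Example: 80 layers (assumed Llama3 70B layer count) over two mismatched cards.
    print(split_layers(80, [16, 24]))
```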

FAQ

Q: What is quantization in the context of LLMs?

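A: Quantization stores each of the model's weights with fewer bits, for example around 4 bits instead of 16. Imagine each number in your model's brain is a complex recipe for baking a cake. Quantization is like simplifying that recipe by using fewer ingredients (lower precision) and focusing on the core elements. It's a trade-off: you can't replicate the exact taste (accuracy) of the original recipe, but you can bake significantly faster, and the simplified recipe book (the model file) takes up much less memory.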
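To make the idea concrete, here is a toy block-wise 4-bit quantizer: each block of 32 weights shares one scale, and every weight becomes an integer in [-7, 7]. With a 16-bit scale per block this works out to 4 + 16/32 = 4.5 bits per weight. It is similar in spirit to llama.cpp's simpler formats but is NOT the actual Q4_K_M layout, which uses a more elaborate super-block structure; all function names are hypothetical.

```python
def quantize_block_4bit(values):
    """Symmetric absmax quantization of one block to integers in [-7, 7] plus one scale."""
    absmax = max(abs(v) for v in values)
    scale = absmax / 7 if absmax > 0 else 1.0
    q = [max(-7, min(7, round(v / scale))) for v in values]
    return q, scale


def dequantize_block(q, scale):
    """Approximate reconstruction of the original values."""
    return [x * scale for x in q]


def quantize(values, block_size=32):
    """Quantize a flat list block by block; each block gets its own scale.

    The integers are kept as Python ints here; a real format packs two per byte.
    """
    return [quantize_block_4bit(values[i:i + block_size])
            for i in range(0, len(values), block_size)]


def dequantize(blocks):
    """Concatenate the reconstructed blocks back into a flat list."""
    out = []
    for q, scale in blocks:
        out.extend(dequantize_block(q, scale))
    return out


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.9, -0.07, 0.0]
    restored = dequantize(quantize(weights))
    print([round(r, 3) for r in restored])
```

Each restored value is within half a scale step of the original, which is the "lost taste" in the recipe analogy; keeping a separate scale per small block limits how far that error can spread.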

Q: What are some practical use cases for Llama3 70B?

A: Llama3 70B is a versatile model suitable for various tasks, including:

- Text generation: Creating engaging and informative content, like articles, blog posts, and scripts.

- Language translation: Translating text between languages with high accuracy.

- Code generation: Generating code snippets in various programming languages.

- Summarization: Concisely summarizing long pieces of text.

- Question answering: Providing informative answers to your queries.

Q: What are the benefits of using Llama3 70B locally over cloud-based services?

A:

- Privacy: Running your model locally ensures that your data remains on your device.

- Control: You have complete control over your model's configuration and settings.

- Cost-effectiveness: Once you own the hardware, there are no per-hour or per-token fees, which can make local inference cheaper than cloud services, especially with frequent use.

Keywords:

Llama3 70B, NVIDIA 4080 16GB, GPU, Token Generation Speed, Quantization, F16, Q4_K_M, LLM, Large Language Model, Model Performance, Performance Optimization, Local LLM, CUDA, OpenCL, Hugging Face, llama.cpp, Transformers, Parallel Processing, Cloud Services, Google Colab, Amazon SageMaker, Inference Libraries, Hyperparameter Tuning, Practical Recommendations, Use Cases, Workarounds, Data Structures, Tokenization.