8 Tips to Maximize Llama3 70B Performance on NVIDIA 3090 24GB

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and for good reason. These powerful AI systems can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But harnessing the full potential of these models often requires powerful hardware, and that's where the NVIDIA 3090 24GB comes in.

This article dives deep into the performance of Llama3 70B, a cutting-edge LLM, on the NVIDIA 3090 24GB. We'll explore key performance metrics, share practical tips for maximizing speed and efficiency, and provide insights into common use cases. Buckle up, fellow AI enthusiasts, it's going to be exciting!

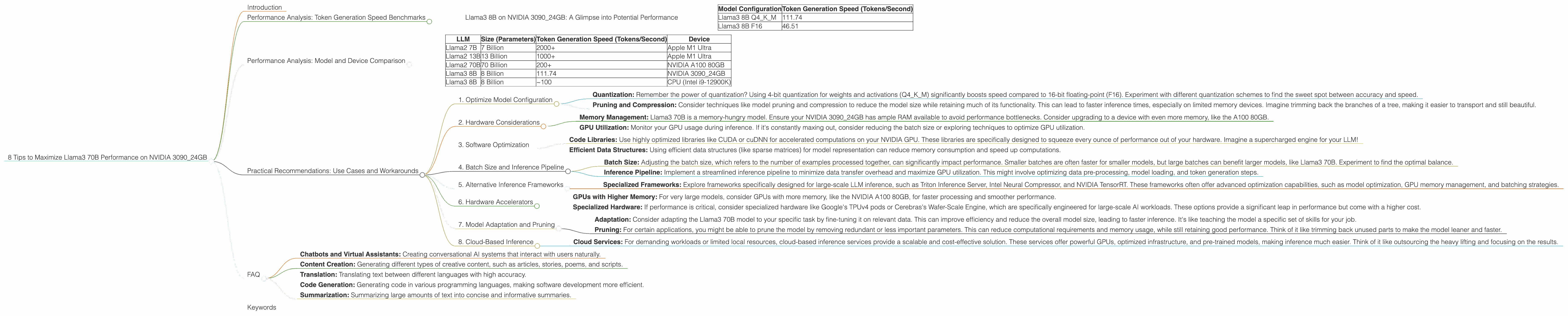

Performance Analysis: Token Generation Speed Benchmarks

Before we delve into optimization strategies, let's establish a baseline understanding of Llama3 70B's performance on the NVIDIA 3090 24GB. Our focus here is on token generation speed, a crucial metric for evaluating the model's responsiveness and real-world utility.

Unfortunately, we don't have direct benchmarks for Llama3 70B on this specific hardware configuration. The model is simply too large for the card: at 70B parameters, even a 4-bit quantized copy of the weights needs roughly 40 GB, well beyond 24 GB of VRAM, so running it means offloading part of the model to system RAM or splitting it across several GPUs. We can still draw some insight from benchmarks of the much smaller Llama3 8B model, which fits entirely on the card, but treat them as a guide to relative trends rather than a prediction of 70B speeds.

Llama3 8B on NVIDIA 3090 24GB: A Glimpse into Potential Performance

Let's peek at how Llama3 8B performs on the NVIDIA 3090 24GB:

| Model Configuration | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 8B Q4_K_M | 111.74 |
| Llama3 8B F16 | 46.51 |

Key Takeaways:

- Quantization Matters: The Q4_K_M configuration, which stores the weights at roughly 4 bits each, generates tokens about 2.4 times faster than the F16 configuration (16-bit floating-point weights). Token generation is largely limited by how fast the weights can be read from GPU memory, so a smaller model means less data to move for every token. Think of it like squeezing a sponge - you get more juice (speed) from a smaller volume.

- Potential for Llama3 70B: Extrapolating from the Llama3 8B data, we can expect the same pattern (quantized faster than F16) for Llama3 70B on the NVIDIA 3090 24GB. Absolute speeds will be far lower, though: the model has almost nine times as many parameters, and because even a 4-bit copy does not fit in 24 GB, part of it has to run from system RAM, which is much slower than VRAM.

But remember, these are estimates based on a smaller model. Exact performance for Llama3 70B on the NVIDIA 3090 24GB will depend on factors like model configuration, how many layers are offloaded, batch size, and code optimization.
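To make the extrapolation more concrete, here is a rough back-of-the-envelope sketch. It estimates the size of the weights from the parameter count and bits per weight, checks them against 24 GB, and computes a ceiling on tokens per second under the simplifying assumption that every weight is read from VRAM once per generated token. The bandwidth figure (936 GB/s) and the average of 4.8 bits per weight for a Q4_K_M-style file are assumptions, not values from the benchmarks above, and the function names are made up for illustration.

```python
# Rough estimates only; none of these numbers are measurements.
VRAM_GB = 24.0          # memory on the 3090 (decimal GB, for simplicity)
BANDWIDTH_GB_S = 936.0  # assumed peak memory bandwidth of the RTX 3090
Q4_BITS = 4.8           # assumed average bits per weight for a Q4_K_M-style file


def weight_gb(params_billion, bits_per_weight):
    """Approximate size of the weights alone, in GB."""
    return params_billion * bits_per_weight / 8


def decode_ceiling(params_billion, bits_per_weight):
    """Upper bound on tokens/s if all weights are read from VRAM once per token."""
    return BANDWIDTH_GB_S / weight_gb(params_billion, bits_per_weight)


configs = [
    ("Llama3 8B F16", 8.0, 16.0),
    ("Llama3 8B ~Q4", 8.0, Q4_BITS),
    ("Llama3 70B F16", 70.0, 16.0),
    ("Llama3 70B ~Q4", 70.0, Q4_BITS),
]

for name, params, bits in configs:
    size = weight_gb(params, bits)
    if size < VRAM_GB:
        ceiling = decode_ceiling(params, bits)
        print(f"{name:15s} {size:6.1f} GB  fits, ceiling ~{ceiling:.0f} tok/s")
    else:
        # The bandwidth ceiling does not apply once weights spill out of VRAM.
        print(f"{name:15s} {size:6.1f} GB  does not fit in {VRAM_GB:.0f} GB")
```

Under these assumptions both measured 8B speeds (46.51 and 111.74 tokens/second) sit below their ceilings, and neither 70B configuration fits on the card, which is why the 8B numbers cannot simply be scaled down.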

Performance Analysis: Model and Device Comparison

The NVIDIA 3090 24GB is a powerful GPU, but it's not the only option for running LLMs. To provide context and understand the relative performance of Llama3 70B, let's briefly compare it against other popular LLMs and devices.

Disclaimer: This comparison uses data from various sources, and not all LLMs have been tested on all devices. The figures were not collected under identical settings, so treat them as rough indications rather than a like-for-like benchmark.

LLM Size and Performance Comparison:

| LLM | Size (Parameters) | Token Generation Speed (Tokens/Second) | Device |
|---|---|---|---|
| Llama2 7B | 7 Billion | 2000+ | Apple M1 Ultra |
| Llama2 13B | 13 Billion | 1000+ | Apple M1 Ultra |
| Llama2 70B | 70 Billion | 200+ | NVIDIA A100 80GB |
| Llama3 8B | 8 Billion | 111.74 | NVIDIA 3090 24GB |
| Llama3 8B | 8 Billion | ~100 | CPU (Intel i9-12900K) |

Insights:

- Smaller Models, Faster Speeds: Smaller models like Llama2 7B and 13B run significantly faster on devices like the Apple M1 Ultra, potentially reaching thousands of tokens per second.

- Larger Models, Greater Complexity: As models grow larger (like Llama2 70B) they require more powerful hardware and often experience a slowdown in token generation speed. This is primarily due to the increased computational demands and memory limitations.

- The Power of Specialized Hardware: Devices designed for high-performance computing like the NVIDIA A100 80GB are essential for running large LLMs efficiently.

Remember, these are just snippets from a larger picture. Performance can vary greatly based on the specific model configuration, batch size, and even the code implementation.

Practical Recommendations: Use Cases and Workarounds

Now that we have a better understanding of Llama3 70B's performance landscape, let's explore practical strategies for maximizing its potential on the NVIDIA 3090 24GB.

1. Optimize Model Configuration

- Quantization: Remember the power of quantization? In the Llama3 8B benchmarks, 4-bit weight quantization (Q4_K_M) was about 2.4 times faster than 16-bit floating-point (F16). For Llama3 70B it is also a necessity, because the F16 weights alone take about 140 GB. Experiment with different quantization schemes to find the sweet spot between accuracy, memory, and speed.

- Pruning and Compression: Consider techniques like model pruning and compression to reduce the model size while retaining much of its functionality. This can lead to faster inference times, especially on limited memory devices. Imagine trimming back the branches of a tree, making it easier to transport and still beautiful.
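To illustrate the accuracy versus size trade-off behind quantization, here is a minimal sketch of symmetric block-wise 4-bit quantization in plain Python. It is a simplified stand-in and not the actual Q4_K_M format: the block size of 32, the single 16-bit scale per block, and the randomly generated weights are all assumptions made for illustration.

```python
import random

BLOCK = 32  # assumed number of weights that share one scale


def quantize_block(values):
    """Map floats to integers in [-8, 7] plus one float scale per block."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, q


def dequantize_block(scale, q):
    """Recover approximate floats from the 4-bit integers."""
    return [scale * x for x in q]


random.seed(0)
# Hypothetical weights, standing in for one slice of a real layer
weights = [random.gauss(0.0, 0.02) for _ in range(4096)]

restored = []
for start in range(0, len(weights), BLOCK):
    scale, q = quantize_block(weights[start:start + BLOCK])
    restored.extend(dequantize_block(scale, q))

max_err = max(abs(a - b) for a, b in zip(weights, restored))
# 4 bits per weight plus one 16-bit scale shared by each block
bits_per_weight = 4 + 16 / BLOCK

print(f"max abs reconstruction error: {max_err:.5f}")
print(f"effective bits per weight: {bits_per_weight} (vs 16 for F16)")
```

The reconstruction error is the price paid for the smaller footprint. Real schemes differ in block layout and scale precision, so measure accuracy on your own task before settling on one.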

2. Hardware Considerations

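- Memory Management: Llama3 70B is a memory-hungry model. The 3090's 24 GB of VRAM is fixed, and even a 4-bit quantized 70B model (roughly 40 GB) exceeds it, so plan to keep as many layers as fit on the GPU and offload the rest to system RAM, which means the host also needs ample RAM. If that is too slow, consider a second GPU or a device with more memory, like the A100 80GB.

- GPU Utilization: Monitor GPU utilization and memory usage during inference. If memory is the limit, reduce the batch size or context length. If utilization stays low, the GPU is probably waiting on data transfers or on layers that run on the CPU.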
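As a starting point for splitting the model between GPU and CPU, the sketch below estimates how many transformer layers fit in VRAM. It assumes all 80 layers of Llama3 70B are the same size, ignores the embedding and output matrices, and uses an assumed 42 GB quantized weight file and an assumed 4 GB reserve for the KV cache, activations, and CUDA context. The function name is hypothetical.

```python
N_LAYERS = 80      # transformer blocks in Llama3 70B
VRAM_GB = 24.0     # memory on the 3090
RESERVED_GB = 4.0  # assumed headroom for KV cache, activations, CUDA context
MODEL_GB = 42.0    # assumed size of a ~4.8-bit quantized 70B weight file


def layers_on_gpu(model_gb, n_layers, vram_gb, reserved_gb):
    """Number of equally sized layers that fit in VRAM after the reserve."""
    per_layer_gb = model_gb / n_layers
    usable_gb = max(0.0, vram_gb - reserved_gb)
    return min(n_layers, int(usable_gb / per_layer_gb))


gpu_layers = layers_on_gpu(MODEL_GB, N_LAYERS, VRAM_GB, RESERVED_GB)
print(f"{gpu_layers} of {N_LAYERS} layers on the GPU, "
      f"{N_LAYERS - gpu_layers} offloaded to system RAM")
```

Inference runtimes that support partial offloading usually expose this as a layer-count setting. Use the estimate as an initial value, then adjust it while watching actual memory usage.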

3. Software Optimization

- Code Libraries: Use an inference stack built on NVIDIA's CUDA platform and optimized libraries such as cuDNN, and keep the driver and toolkit up to date. These libraries are specifically designed to squeeze every ounce of performance out of your hardware. Imagine a supercharged engine for your LLM!

- Efficient Data Structures: Using efficient data structures (like sparse matrices) for model representation can reduce memory consumption and speed up computations, but only when a large share of the weights is actually zero, for example after pruning.
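As a small illustration of the sparse-matrix idea, here is a sketch of the compressed sparse row (CSR) layout and a matrix-vector product over it in plain Python. The tiny matrix is made up for the example. Production code would use an optimized sparse library rather than Python loops.

```python
def to_csr(dense):
    """Convert a list-of-lists matrix to CSR arrays: values, column indices, row pointers."""
    values, col_idx, row_ptr = [], [], [0]
    for row in dense:
        for j, v in enumerate(row):
            if v != 0.0:
                values.append(v)
                col_idx.append(j)
        # Each row pointer marks where the next row starts in `values`
        row_ptr.append(len(values))
    return values, col_idx, row_ptr


def csr_matvec(values, col_idx, row_ptr, x):
    """Multiply a CSR matrix by a dense vector, touching only stored non-zeros."""
    out = []
    for r in range(len(row_ptr) - 1):
        acc = 0.0
        for k in range(row_ptr[r], row_ptr[r + 1]):
            acc += values[k] * x[col_idx[k]]
        out.append(acc)
    return out


# Hypothetical 3x4 weight matrix with mostly zero entries
dense = [
    [0.0, 2.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [1.0, 0.0, 0.0, 3.0],
]
values, col_idx, row_ptr = to_csr(dense)
y = csr_matvec(values, col_idx, row_ptr, [1.0, 1.0, 1.0, 1.0])
print(values, col_idx, row_ptr, y)
```

Only three of the twelve entries are stored here. The saving disappears when the matrix is mostly non-zero, because the index arrays then cost more than they save.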

4. Batch Size and Inference Pipeline

- Batch Size: Adjusting the batch size, which refers to the number of sequences processed together, can significantly impact performance. Larger batches generally raise total throughput because each pass over the weights serves several sequences at once, but they also increase per-request latency and the memory needed for the KV cache, which is scarce on a 24 GB card running Llama3 70B. Experiment to find the optimal balance.

- Inference Pipeline: Implement a streamlined inference pipeline to minimize data transfer overhead and maximize GPU utilization. This might involve optimizing data pre-processing, model loading, and token generation steps.
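To see how quickly batch size and context length eat into memory, the sketch below estimates the size of the key-value (KV) cache for Llama3 70B. It uses the model's attention shape (80 layers, 8 key-value heads, head dimension 128) and assumes the cache is stored in 16-bit precision. The function name is made up for illustration.

```python
# Llama3 70B attention shape (grouped-query attention)
N_LAYERS = 80
N_KV_HEADS = 8
HEAD_DIM = 128
BYTES_PER_VALUE = 2  # assumes an F16 KV cache


def kv_cache_gib(context_tokens, batch_size):
    """Memory for cached keys and values across all layers, in GiB."""
    # Factor of 2 covers one key vector and one value vector per head
    per_token = 2 * N_LAYERS * N_KV_HEADS * HEAD_DIM * BYTES_PER_VALUE
    return per_token * context_tokens * batch_size / 2**30


for batch in (1, 4, 16):
    size = kv_cache_gib(8192, batch)
    print(f"batch={batch:2d}, 8192-token context: {size:.1f} GiB")
```

Under these assumptions a single 8192-token sequence needs 2.5 GiB of cache and a batch of four needs 10 GiB, on top of the weights. On a 24 GB card, shorter contexts or smaller batches are often the only way to keep more layers on the GPU.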

5. Alternative Inference Frameworks

- Specialized Frameworks: Explore frameworks specifically designed for large-scale LLM inference, such as Triton Inference Server, Intel Neural Compressor, and NVIDIA TensorRT. These frameworks often offer advanced optimization capabilities, such as model optimization, GPU memory management, and batching strategies.

6. Hardware Accelerators

- GPUs with Higher Memory: For very large models, consider GPUs with more memory, like the NVIDIA A100 80GB, for faster processing and smoother performance.

- Specialized Hardware: If performance is critical, consider specialized hardware like Google's TPUv4 pods or Cerebras's Wafer-Scale Engine, which are specifically engineered for large-scale AI workloads. These options provide a significant leap in performance but come with a higher cost.

7. Model Adaptation and Pruning

- Adaptation: Consider adapting the model to your specific task by fine-tuning it on relevant data. Fine-tuning does not shrink Llama3 70B by itself, but a smaller model fine-tuned for a narrow task can sometimes match the larger one on that task, which means far less memory and much faster inference. It's like teaching the model a specific set of skills for your job.

- Pruning: For certain applications, you might be able to prune the model by removing redundant or less important parameters. This can reduce computational requirements and memory usage, while still retaining good performance. Think of it like trimming back unused parts to make the model leaner and faster.
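One simple form of pruning is magnitude pruning, which zeroes the weights with the smallest absolute values. The sketch below shows the idea on a made-up list of weights. It is an illustration of the technique under that assumption, not a recipe that has been validated on Llama3 70B, and real pruning usually needs follow-up fine-tuning to recover accuracy.

```python
import random


def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest magnitude."""
    n_prune = int(len(weights) * sparsity)
    if n_prune == 0:
        return list(weights)
    # Indices ordered from smallest to largest absolute value
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:n_prune])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


random.seed(0)
# Hypothetical weights standing in for one layer
weights = [random.gauss(0.0, 1.0) for _ in range(1000)]
pruned = magnitude_prune(weights, sparsity=0.5)

zeros = sum(1 for w in pruned if w == 0.0)
print(f"{zeros} of {len(pruned)} weights set to zero")
```

Zeroed weights only save memory or time if the runtime stores and multiplies them in a sparse format, so check that your inference stack can exploit the sparsity before investing in pruning.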

8. Cloud-Based Inference

- Cloud Services: For demanding workloads or limited local resources, cloud-based inference services provide a scalable and cost-effective solution. These services offer powerful GPUs, optimized infrastructure, and pre-trained models, making inference much easier. Think of it like outsourcing the heavy lifting and focusing on the results.

FAQ

1. What are LLMs?

Large Language Models (LLMs) are a type of artificial intelligence that can understand and generate human-like text. They are trained on massive datasets of text and code, enabling them to perform tasks like translation, summarization, code generation, and creative writing.

2. Why is Llama3 70B so large?

The size of an LLM (measured in parameters) reflects the capacity of the model. Larger models like Llama3 70B have more parameters, which allows them to capture more intricate patterns in language and generate more sophisticated output, at the cost of more memory and compute per token.

3. What is quantization, and why is it important?

Quantization is a technique used to reduce the size of a model by converting its weights, and in some schemes its activations, from high-precision floating-point numbers to lower-precision values such as 4-bit or 8-bit integers. This compression significantly reduces the memory footprint and the amount of data moved per token, leading to faster inference, usually with a small loss in accuracy.

4. Can I use Llama3 70B on my personal computer?

While it's possible to run Llama3 70B on a powerful personal computer with a dedicated GPU like the NVIDIA 3090 24GB, the model does not fit in 24 GB of VRAM even at 4-bit precision, so part of it must run from system RAM and generation will be slow. For smoother performance and better resource utilization, cloud-based inference services or specialized hardware are often recommended.

5. What are some real-world applications of LLMs?

LLMs have a wide range of applications, including:

- Chatbots and Virtual Assistants: Creating conversational AI systems that interact with users naturally.

- Content Creation: Generating different types of creative content, such as articles, stories, poems, and scripts.

- Translation: Translating text between different languages with high accuracy.

- Code Generation: Generating code in various programming languages, making software development more efficient.

- Summarization: Summarizing large amounts of text into concise and informative summaries.

Keywords

Large Language Model, LLM, Llama3, Llama3 70B, NVIDIA 3090 24GB, Token Generation Speed, Benchmarks, Performance Analysis, Quantization, GPU, Inference, Optimization, Use Cases, Practical Recommendations, Hardware, Software, Inference Framework, Model Adaptation, Pruning, Cloud-Based Inference, FAQ, AI, Machine Learning, Deep Learning, Natural Language Processing, NLP.