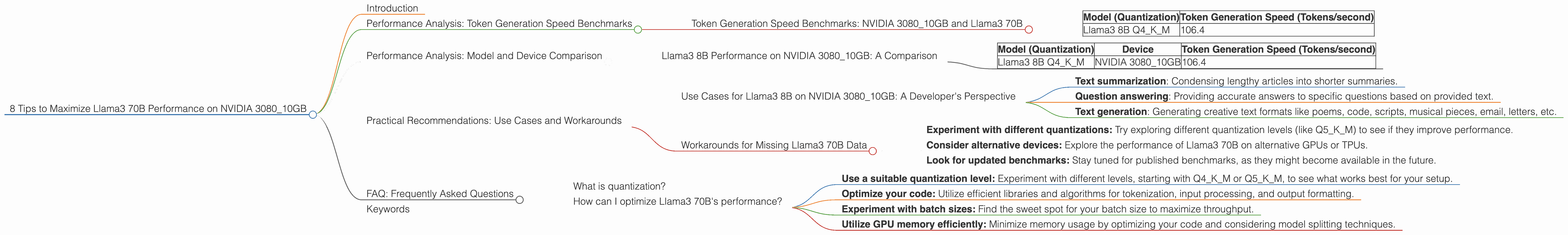

8 Tips to Maximize Llama3 70B Performance on NVIDIA 3080 10GB

Introduction

Ready to unleash the power of Llama3 70B on your NVIDIA 3080 10GB? This guide covers strategies and insights for squeezing every ounce of performance out of this pairing. We'll look at token generation speed benchmarks, put the available Llama3 results in context, and offer practical recommendations for various use cases. Let's jump in, geek out, and unlock the full potential of your local LLM setup!

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 3080 10GB and Llama3 70B

Let's dive into the nitty-gritty. How fast can this setup generate tokens, the building blocks of language? Unfortunately, the data we have doesn't include benchmarks for Llama3 70B on the NVIDIA 3080 10GB, so the key performance metric for this pairing is missing. The gap is not surprising: 70 billion weights at 4 to 5 bits each come to roughly 35 to 44 GB, several times the card's 10 GB of VRAM, so the model cannot run entirely on the GPU. We can still glean insights from the available data for Llama3 8B.

Here's what we know for Llama3 8B:

| Model (Quantization) | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 106.4 |

In other words, Llama3 8B quantized to Q4_K_M generates about 106.4 tokens per second on the NVIDIA 3080 10GB in this benchmark. Now, let's put that number in context.
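To get a feel for what 106.4 tokens per second means in practice, the short sketch below converts a throughput figure into wall-clock time for responses of different lengths. It assumes a steady generation rate and ignores prompt processing time, so treat the results as rough lower bounds rather than measurements. The function name is illustrative.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to emit num_tokens at a steady generation rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Benchmark figure for Llama3 8B Q4_K_M on the NVIDIA 3080 10GB (from the table).
LLAMA3_8B_Q4_K_M_TPS = 106.4

for n in (100, 500, 2000):
    seconds = generation_time_seconds(n, LLAMA3_8B_Q4_K_M_TPS)
    print(f"{n} tokens: {seconds:.1f} s")
```

At this rate a 500-token answer takes a little under five seconds, which is comfortably fast for interactive use.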

Performance Analysis: Model and Device Comparison

Llama3 8B Performance on NVIDIA 3080 10GB: A Comparison

Let's put Llama3 8B's performance on the NVIDIA 3080 10GB into perspective. The available data contains only one entry for this card, shown here for reference.

| Model (Quantization) | Device | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B Q4_K_M | NVIDIA 3080 10GB | 106.4 |

While we don't have information on Llama3 70B's performance on the NVIDIA 3080 10GB, the Llama3 8B benchmark shows what the card can do with a model that fits in its memory. Let's look at some practical implications and workarounds.

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on NVIDIA 3080 10GB: A Developer's Perspective

Given the available data, Llama3 8B Q4_K_M on the NVIDIA 3080 10GB is a solid choice for interactive tasks where an 8B model's quality is sufficient and roughly 100 tokens per second is fast enough.

For example, it can be used for:

- Text summarization: Condensing lengthy articles into shorter summaries.

- Question answering: Providing accurate answers to specific questions based on provided text.

- Text generation: Generating creative text formats like poems, code, scripts, musical pieces, email, letters, etc.

Workarounds for Missing Llama3 70B Data

Since we lack data for Llama3 70B on the NVIDIA 3080 10GB, we can explore workarounds:

- Experiment with different quantizations: Lower-bit quantizations shrink the model and reduce memory traffic, while higher-bit ones such as Q5_K_M trade size and speed for output quality. Test a few levels to see what your setup can handle.

- Consider alternative devices: Look at Llama3 70B results on GPUs with more memory, or on other accelerators such as TPUs.

- Look for updated benchmarks: Check for newly published benchmarks, as results for this model and card might become available in the future.
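Until published numbers appear, you can also measure throughput yourself. The sketch below is a minimal timing harness, not tied to any particular runtime: `generate` is a hypothetical callable that yields tokens one at a time, and `fake_generate` is a stand-in so the example runs without a model. Swap in your own runtime's streaming call to get a real figure.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    generate: Callable[[str, int], Iterable[str]],
    prompt: str,
    max_tokens: int,
) -> float:
    """Count the tokens yielded by generate() and divide by elapsed time."""
    start = time.perf_counter()
    count = sum(1 for _ in generate(prompt, max_tokens))
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate(prompt: str, max_tokens: int) -> Iterable[str]:
    """Hypothetical stand-in for a real model: one token about every millisecond."""
    for i in range(max_tokens):
        time.sleep(0.001)
        yield f"tok{i}"


tps = measure_tokens_per_second(fake_generate, "Hello", 50)
print(f"Measured throughput: {tps:.1f} tokens/second")
```

For a meaningful comparison, run each configuration several times with the same prompt and output length, and report the average.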

FAQ: Frequently Asked Questions

What is quantization?

Quantization is a technique used to reduce the size of a model by representing weights and activations with lower precision. Think of it like compressing an image file without losing much detail. This makes the model lighter and faster to run, especially on limited hardware.
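The size reduction is easy to estimate with back-of-the-envelope arithmetic: weight storage is roughly the parameter count times the bits per weight. The sketch below uses nominal parameter counts (8 and 70 billion) and round bit widths as assumptions. Real quantized files mix precisions and store extra scaling data, so actual sizes run somewhat higher.

```python
def weight_size_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Nominal parameter counts; treated as assumptions for this estimate.
MODELS = (("Llama3 8B", 8e9), ("Llama3 70B", 70e9))

for name, params in MODELS:
    for bits in (16, 8, 4):
        size = weight_size_gb(params, bits)
        print(f"{name} at {bits} bits per weight: about {size:.0f} GB")
```

Even at 4 bits per weight, the 70B estimate is several times larger than a 10 GB card, while the 8B model fits with room to spare.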

How can I optimize Llama3 70B's performance?

Here are some optimization tips:

- Use a suitable quantization level: Experiment with different levels, starting with Q4_K_M or Q5_K_M, to see what works best for your setup.

- Optimize your code: Utilize efficient libraries and algorithms for tokenization, input processing, and output formatting.

- Experiment with batch sizes: Find the sweet spot for your batch size to maximize throughput.

- Utilize GPU memory efficiently: Minimize memory usage by optimizing your code and considering model splitting techniques.
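One common form of model splitting is to keep only some transformer layers on the GPU and run the rest on the CPU. The sketch below estimates how many layers might fit, under loudly stated assumptions: all layers are the same size, the quantized 70B model is about 40 GB, and 2 GB of VRAM is reserved for the context cache and scratch buffers. The 80-layer count matches Llama3 70B; the other figures are illustrative, not measured.

```python
import math


def gpu_layers_that_fit(
    model_size_gb: float,
    num_layers: int,
    vram_gb: float,
    reserved_gb: float = 2.0,
) -> int:
    """Estimate how many equally sized layers fit in the usable VRAM."""
    if num_layers <= 0 or model_size_gb <= 0:
        raise ValueError("model_size_gb and num_layers must be positive")
    per_layer_gb = model_size_gb / num_layers
    usable_gb = max(vram_gb - reserved_gb, 0.0)
    return min(num_layers, math.floor(usable_gb / per_layer_gb))


# Assumed figures: ~40 GB quantized 70B model, 80 layers, 10 GB card.
layers = gpu_layers_that_fit(model_size_gb=40.0, num_layers=80, vram_gb=10.0)
print(f"Layers to place on the GPU: {layers} of 80")
```

Runtimes that support partial offload expose a setting for this (in llama.cpp it is the number of GPU layers). Start from an estimate like this one and lower it if you hit out-of-memory errors.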

Keywords

Llama3, 70B, NVIDIA, 3080_10GB, LLM, performance, token generation, benchmarks, quantization, GPU, optimization, use cases, workarounds, text generation, text summarization, question answering