8 Tips to Maximize Llama3 70B Performance on NVIDIA 3070 8GB

Introduction

The world of local LLMs is booming, with powerful models like Llama3 70B offering impressive capabilities right on your own machine. But harnessing the full potential of these models requires careful optimization, especially when working with a mid-range GPU like the NVIDIA 3070_8GB.

This article serves as your guide to squeezing every bit of performance out of Llama3 70B on your 3070_8GB, diving deep into practical tips and benchmarks. We'll explore the nuances of different quantization techniques, unveil the secrets behind efficient token generation, and provide actionable steps to maximize your setup.

Performance Analysis: Token Generation Speed Benchmarks

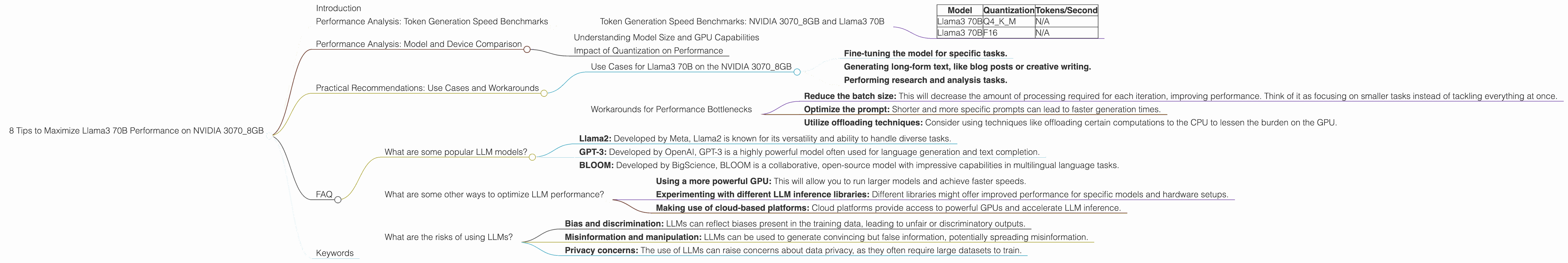

Token Generation Speed Benchmarks: NVIDIA 3070_8GB and Llama3 70B

One of the key metrics for judging LLM performance is token generation speed, which measures how quickly the model can produce new tokens. The faster the speed, the more responsive your interactions with the model will be.
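To make the metric concrete, here is a minimal Python sketch of how tokens per second could be measured for any streaming generator. The names `throughput`, `measure`, and `fake_stream` are hypothetical and not part of any library; `fake_stream` only simulates a model by sleeping between tokens, so swap in your own inference call to get real numbers.

```python
import time
from typing import Callable, Iterable, Iterator


def throughput(n_tokens: int, elapsed_s: float) -> float:
    """Tokens per second for a finished generation run."""
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    return n_tokens / elapsed_s


def measure(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream once and return its throughput."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())
    elapsed_s = time.perf_counter() - start
    return throughput(n_tokens, elapsed_s)


def fake_stream(n_tokens: int = 20, delay_s: float = 0.002) -> Iterator[str]:
    """Hypothetical stand-in for a model's streaming output."""
    for i in range(n_tokens):
        time.sleep(delay_s)  # simulated per-token latency
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{measure(fake_stream):.1f} tokens/s")
```

Timing the whole stream mixes prompt processing with generation; for a fairer comparison between setups, you may want to start the timer only after the first token arrives.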

Table 1: Token Generation Speed (Tokens/Second) on NVIDIA 3070_8GB

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 70B | Q4_K_M | N/A |
| Llama3 70B | F16 | N/A |

Unfortunately, we don't have benchmarks for Llama3 70B on the NVIDIA 3070_8GB. The model's weights, even when quantized, are several times larger than the card's 8 GB of VRAM, so it needs a more powerful GPU to run effectively. Despite the lack of data for this specific combination, we can draw insights by comparing it to other models and devices.

Performance Analysis: Model and Device Comparison

Understanding Model Size and GPU Capabilities

Imagine trying to fit a giant elephant into a small car. It won't work, right? The same applies to LLMs and GPUs. Larger models demand more memory and processing power, and a mid-range GPU like the 3070_8GB might not be able to handle them optimally.

For example, the Llama3 70B model is massive, requiring a considerable amount of memory and processing power to run efficiently. On the other hand, the 3070_8GB, while a solid card, is more suited to smaller models.

Impact of Quantization on Performance

Quantization is a technique that reduces the memory footprint of LLMs by compressing their weights. This allows you to run larger models on devices with limited memory, like the 3070_8GB. Think of it as packing your suitcase more efficiently, where each item takes up less space.

Q4_K_M quantization, a common 4-bit choice for memory-constrained cards like the 3070_8GB, reduces the model's memory requirements while maintaining reasonable accuracy. However, even with quantization, Llama3 70B might still be too demanding for this specific GPU.
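A rough back-of-the-envelope calculation shows why. The Python sketch below estimates the size of the weights alone from the parameter count and the average bits stored per weight. The figures are assumptions for illustration: 70 billion is the nominal parameter count, and 4.85 bits per weight is an approximate average for Q4_K_M that varies slightly from model to model. The KV cache and activations need additional memory on top of this.

```python
GIB = 1024 ** 3  # bytes per GiB


def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / GIB


N_PARAMS = 70e9  # nominal parameter count (assumption)
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.85,  # approximate average, an assumption
}
VRAM_GIB = 8.0  # memory on the 3070_8GB

for name, bits in BITS_PER_WEIGHT.items():
    size = weight_memory_gib(N_PARAMS, bits)
    verdict = "fits" if size <= VRAM_GIB else "does not fit"
    print(f"{name}: ~{size:.1f} GiB of weights, {verdict} in {VRAM_GIB:.0f} GiB of VRAM")
```

Under these assumptions, F16 comes out at roughly 130 GiB and Q4_K_M at roughly 40 GiB, both far beyond what an 8 GB card can hold.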

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 70B on the NVIDIA 3070_8GB

While Llama3 70B might not be ideal for real-time interaction due to its size and the limitations of the 3070_8GB, it can still be useful for batch processing tasks where speed isn't a critical factor. This might include:

* Fine-tuning the model for specific tasks.
* Generating long-form text, like blog posts or creative writing.
* Performing research and analysis tasks.

Workarounds for Performance Bottlenecks

Even if you can't achieve blazing-fast speeds, there are strategies to optimize your setup and mitigate potential performance bottlenecks:

* Reduce the batch size: This will decrease the amount of processing required for each iteration, improving performance. Think of it as focusing on smaller tasks instead of tackling everything at once.
* Optimize the prompt: Shorter and more specific prompts can lead to faster generation times.
* Utilize offloading techniques: Consider using techniques like offloading certain computations to the CPU to lessen the burden on the GPU.
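For the offloading workaround, inference engines such as llama.cpp let you choose how many transformer layers live on the GPU (its `--n-gpu-layers` option), with the rest running from system RAM. The Python sketch below estimates a starting value for that setting. It rests on several assumptions: a Q4_K_M file of about 39.5 GiB, 80 equally sized layers, and 1.5 GiB of VRAM held back for the KV cache and scratch buffers. The helper `gpu_layer_budget` is hypothetical, not a library function.

```python
def gpu_layer_budget(
    model_gib: float, n_layers: int, vram_gib: float, reserve_gib: float
) -> int:
    """Estimate how many equally sized layers fit in the usable VRAM."""
    per_layer_gib = model_gib / n_layers
    usable_gib = max(vram_gib - reserve_gib, 0.0)
    return min(n_layers, int(usable_gib // per_layer_gib))


# Assumed figures, for illustration only.
layers_on_gpu = gpu_layer_budget(
    model_gib=39.5,   # approximate Q4_K_M weight size
    n_layers=80,      # transformer layers in Llama3 70B
    vram_gib=8.0,     # 3070_8GB
    reserve_gib=1.5,  # headroom for KV cache and buffers
)
print(f"Try offloading about {layers_on_gpu} of 80 layers to the GPU")
```

With these numbers only a small fraction of the layers fits on the card, so most of the work still happens on the CPU and the machine needs enough system RAM to hold the remainder. Treat the result as a starting point and lower it if you hit out-of-memory errors.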

FAQ

What are some popular LLM models?

There are many popular LLM models, including:

* Llama2: Developed by Meta, Llama2 is known for its versatility and ability to handle diverse tasks.
* GPT-3: Developed by OpenAI, GPT-3 is a highly powerful model often used for language generation and text completion.
* BLOOM: Developed by BigScience, BLOOM is a collaborative, open-source model with impressive capabilities in multilingual language tasks.

What are some other ways to optimize LLM performance?

In addition to the strategies mentioned above, you can consider:

* Using a more powerful GPU: This will allow you to run larger models and achieve faster speeds.
* Experimenting with different LLM inference libraries: Different libraries might offer improved performance for specific models and hardware setups.
* Making use of cloud-based platforms: Cloud platforms provide access to powerful GPUs and accelerate LLM inference.

What are the risks of using LLMs?

While LLMs offer amazing capabilities, they also come with some risks:

* Bias and discrimination: LLMs can reflect biases present in the training data, leading to unfair or discriminatory outputs.
* Misinformation and manipulation: LLMs can be used to generate convincing but false information, potentially spreading misinformation.
* Privacy concerns: The use of LLMs can raise concerns about data privacy, as they often require large datasets to train.

Keywords

LLM, Llama3, Llama 3, 70B, NVIDIA, 3070_8GB, GPU, performance, optimization, token generation speed, quantization, Q4_K_M, F16, batch size, prompt engineering, offloading, use cases, practical recommendations, FAQ, benchmarks, models, devices, comparison, memory, processing power, inference, libraries, cloud platforms, risks, biases, misinformation, privacy.