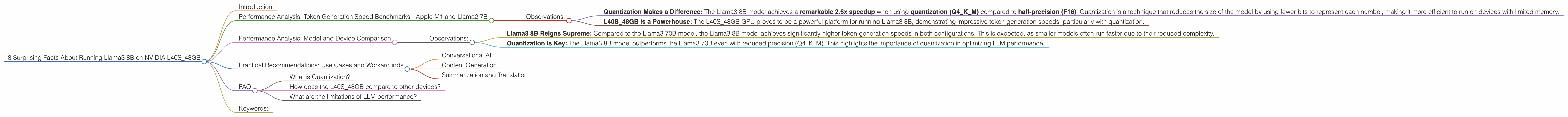

8 Surprising Facts About Running Llama3 8B on NVIDIA L40S 48GB

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and for good reason! These models can generate text, translate languages, summarize documents, and answer questions. But running them locally can be a challenge, requiring capable hardware and careful optimization. This article examines how the Llama3 8B model performs on the NVIDIA L40S 48GB, a data-center GPU aimed at AI workloads.

Performance Analysis: Token Generation Speed Benchmarks - Llama3 8B on NVIDIA L40S 48GB

Let's dive into the performance of Llama3 8B on the L40S 48GB, focusing on token generation speed: how many new tokens (words or parts of words) the model produces per second.

| Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B, Q4_K_M quantization | 113.6 |
| Llama3 8B, half precision (F16) | 43.42 |
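Throughput is easier to reason about as latency. The sketch below converts the two measured rates from the table into milliseconds per token and into the time needed for one reply; the 500-token reply length is a hypothetical value chosen for illustration, and prompt processing time is ignored.

```python
# Throughputs from the table above (tokens/second), measured on the NVIDIA L40S 48GB.
THROUGHPUT = {
    "Llama3 8B Q4_K_M": 113.6,
    "Llama3 8B F16": 43.42,
}


def ms_per_token(tokens_per_second: float) -> float:
    """Average time to produce one token, in milliseconds."""
    return 1000.0 / tokens_per_second


def seconds_for_reply(tokens_per_second: float, reply_tokens: int) -> float:
    """Time to generate `reply_tokens` new tokens (prompt processing not included)."""
    return reply_tokens / tokens_per_second


if __name__ == "__main__":
    REPLY_TOKENS = 500  # hypothetical reply length, for illustration only
    for name, tps in THROUGHPUT.items():
        print(
            f"{name}: {ms_per_token(tps):.1f} ms/token, "
            f"{seconds_for_reply(tps, REPLY_TOKENS):.1f} s for {REPLY_TOKENS} tokens"
        )
```

At these rates, a 500-token reply takes roughly 4.4 seconds with Q4_K_M and roughly 11.5 seconds with F16.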

Observations:

- Quantization Makes a Difference: With Q4_K_M quantization, Llama3 8B generates tokens about 2.6x faster than at half precision (F16): 113.6 versus 43.42 tokens/second. Quantization reduces the size of the model by storing each weight with fewer bits (roughly 4 to 5 bits per weight for Q4_K_M versus 16 for F16). Because every generated token requires reading the model's weights from GPU memory, smaller weights mean less data to move per token, which speeds up generation and also lowers memory use.

- L40S 48GB is a Powerhouse: The L40S 48GB runs Llama3 8B at more than 100 tokens/second with Q4_K_M, and at more than 40 tokens/second even unquantized at F16.
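Why does quantization speed things up so much? At batch size 1, token generation is usually limited by memory bandwidth: each new token requires streaming essentially all the weights from memory once, so bandwidth divided by weight size gives an upper bound on tokens/second. The sketch below applies that estimate. Its inputs are assumptions, not measurements: about 8.0 billion parameters, about 864 GB/s of memory bandwidth for the L40S (check NVIDIA's datasheet), and an approximate average of 4.85 bits per weight for Q4_K_M. It ignores KV-cache reads, compute, and software overhead.

```python
PARAMS = 8.0e9          # assumed parameter count for Llama3 8B (approximate)
BANDWIDTH_GB_S = 864.0  # assumed L40S memory bandwidth in GB/s; verify against the datasheet
BITS_PER_WEIGHT = {
    "F16": 16.0,        # 2 bytes per weight
    "Q4_K_M": 4.85,     # assumed average bits per weight including scales (approximation)
}
MEASURED = {"F16": 43.42, "Q4_K_M": 113.6}  # tokens/second from the benchmark table


def weight_bytes(params: float, bits_per_weight: float) -> float:
    """Bytes needed to store all weights at the given average bit width."""
    return params * bits_per_weight / 8.0


def max_tokens_per_second(params: float, bits_per_weight: float, bandwidth_gb_s: float) -> float:
    """Upper bound if every generated token streams all weights from memory exactly once."""
    return bandwidth_gb_s * 1e9 / weight_bytes(params, bits_per_weight)


if __name__ == "__main__":
    for fmt, bits in BITS_PER_WEIGHT.items():
        gb = weight_bytes(PARAMS, bits) / 1e9
        bound = max_tokens_per_second(PARAMS, bits, BANDWIDTH_GB_S)
        print(f"{fmt}: weights ~{gb:.1f} GB, bound ~{bound:.0f} tok/s, measured {MEASURED[fmt]} tok/s")
    print(f"measured Q4_K_M / F16 speedup: {MEASURED['Q4_K_M'] / MEASURED['F16']:.2f}x")
```

Under these assumptions the bounds come out at about 54 tokens/second for F16 and about 178 for Q4_K_M, both above the measured 43.42 and 113.6, as an upper bound should be. The gap reflects the costs this simple model leaves out.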

Performance Analysis: Model and Device Comparison

To put the L40S 48GB results in context, let's compare Llama3 8B with the much larger Llama3 70B model. All numbers below are specific to the L40S 48GB and will differ on other devices.

| Model | Configuration | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 113.6 |
| Llama3 8B | F16 | 43.42 |
| Llama3 70B | Q4_K_M | 15.31 |

Observations:

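- Llama3 8B Reigns Supreme: Llama3 8B is far faster than Llama3 70B. At the same Q4_K_M quantization it generates about 7.4x more tokens per second (113.6 vs. 15.31), and even the unquantized F16 version (43.42) is about 2.8x faster than the quantized 70B model. This is expected: a model with fewer parameters has less data to read and less computation to perform for every token.

- Quantization is Key: Quantization is what makes Llama3 70B runnable on this card at all. At F16, its roughly 70 billion parameters would need about 140 GB for the weights alone, far more than the L40S's 48 GB; with Q4_K_M the model fits and runs at 15.31 tokens/second.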
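A quick way to check which model and format combinations fit on a 48 GB card is to estimate the size of the weights. The sketch below does that under stated assumptions: approximate parameter counts of 8 and 70 billion, an approximate average of 4.85 bits per weight for Q4_K_M, and a hypothetical 2 GB of headroom for the KV cache and runtime buffers. Real memory use also grows with context length, so treat the result as a first-pass filter.

```python
GPU_MEMORY_GB = 48.0  # L40S memory size

# Assumed approximate parameter counts and average bits per weight.
MODELS = {"Llama3 8B": 8.0e9, "Llama3 70B": 70.0e9}
FORMATS = {"F16": 16.0, "Q4_K_M": 4.85}  # the Q4_K_M figure is an approximation


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights alone in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8.0 / 1e9


def fits(params: float, bits_per_weight: float,
         memory_gb: float = GPU_MEMORY_GB, headroom_gb: float = 2.0) -> bool:
    """True if the weights plus a hypothetical headroom for KV cache/buffers fit in memory."""
    return weights_gb(params, bits_per_weight) + headroom_gb <= memory_gb


if __name__ == "__main__":
    for model, params in MODELS.items():
        for fmt, bits in FORMATS.items():
            verdict = "fits" if fits(params, bits) else "does not fit"
            print(f"{model} {fmt}: ~{weights_gb(params, bits):.1f} GB of weights, "
                  f"{verdict} in {GPU_MEMORY_GB:.0f} GB")
```

Under these assumptions, Llama3 70B at F16 needs about 140 GB and does not fit, while at Q4_K_M it needs roughly 42 GB, which fits but leaves limited room for the KV cache on long contexts.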

Practical Recommendations: Use Cases and Workarounds

Conversational AI

The L40S 48GB's performance, especially with Llama3 8B at Q4_K_M quantization, makes it well suited to conversational AI applications, such as chatbots and intelligent assistants that respond to user input in a human-like way.
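Chat applications typically use the instruction-tuned variant of Llama3 8B, which expects the conversation in a specific format. The sketch below builds a prompt in the Llama 3 Instruct format using its special tokens `<|begin_of_text|>`, `<|start_header_id|>`, `<|end_header_id|>`, and `<|eot_id|>`; the function name `format_llama3_chat` is hypothetical. Many inference runtimes apply this template for you (for example Hugging Face's `tokenizer.apply_chat_template`), so treat this as an illustration of what the model receives rather than code you must write.

```python
from typing import Dict, List


def format_llama3_chat(messages: List[Dict[str, str]]) -> str:
    """Build a Llama 3 Instruct prompt from {'role': ..., 'content': ...} dicts.

    The prompt ends with an open assistant header so the model writes the next reply.
    """
    prompt = "<|begin_of_text|>"
    for msg in messages:
        prompt += (
            f"<|start_header_id|>{msg['role']}<|end_header_id|>\n\n"
            f"{msg['content'].strip()}<|eot_id|>"
        )
    prompt += "<|start_header_id|>assistant<|end_header_id|>\n\n"
    return prompt


if __name__ == "__main__":
    history = [
        {"role": "system", "content": "You are a concise assistant."},
        {"role": "user", "content": "What does Q4_K_M mean?"},
    ]
    print(format_llama3_chat(history))
```

Generation should stop when the model emits `<|eot_id|>`, which marks the end of its reply; the reply is then appended to the history as an `assistant` message for the next turn.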

Content Generation

With its high generation speed, the L40S 48GB can also handle content generation tasks: blog posts, social media updates, or creative stories, all powered by the Llama3 8B model.

Summarization and Translation

Llama3 8B can also be used to summarize lengthy documents and translate text. The L40S 48GB's generation speed helps these tasks finish quickly, although summarizing long inputs also depends on how fast the prompt itself is processed, which the benchmarks above do not measure.

FAQ

What is Quantization?

Imagine a notebook full of precise measurements written to many decimal places, with limited paper to copy them onto. Instead of copying every digit, you pick a shared scale and write each measurement as a small whole number on that scale: far less space, at the cost of a little precision. Quantization does the same to an LLM's weights, storing each number with fewer bits (for example about 4 instead of 16), which shrinks the model and reduces the data the GPU must read for every generated token.
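The idea can be shown with a simplified 4-bit scheme: for a block of weights, store one scale (the largest absolute value divided by 7) plus a small signed integer per weight. This is an illustrative symmetric "absmax" quantizer, not llama.cpp's actual Q4_K_M layout, which is more elaborate; the function names and the random 32-value block are hypothetical.

```python
import random
from typing import List, Tuple


def quantize_block(values: List[float], bits: int = 4) -> Tuple[float, List[int]]:
    """Symmetric absmax quantization: one float scale plus small signed integer codes."""
    qmax = 2 ** (bits - 1) - 1   # 7 for 4 bits
    qmin = -(2 ** (bits - 1))    # -8 for 4 bits
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    codes = [max(qmin, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Recover approximate values from the scale and the integer codes."""
    return [scale * q for q in codes]


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(32)]  # one hypothetical block
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"first codes: {codes[:8]}, scale {scale:.5f}, max abs error {max_err:.5f}")
    # Storage: 32 x 16-bit floats = 512 bits, versus 32 x 4-bit codes + one 16-bit scale = 144 bits.
```

Rounding to the nearest code keeps each weight's error within half a scale step, while the block shrinks from 512 bits to 144 bits (4.5 bits per weight) if the scale is stored as a 16-bit float.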

How does the L40S 48GB compare to other devices?

The L40S 48GB is a data-center GPU designed for AI workloads. Its 48 GB of memory holds Llama3 8B even at F16 and, with Q4_K_M quantization, Llama3 70B as well. The benchmarks in this article cover only the L40S, so they do not show how it compares with consumer-grade GPUs or other accelerators such as Google's TPUs. Its cost and power consumption may also be a factor for some applications.

What are the limitations of LLM performance?

Even with powerful hardware, LLM performance depends on several factors, including model size, input and context length, memory bandwidth, quantization format, and the inference software used. Tuning these factors is crucial for achieving the best performance.

Keywords:

Llama3 8B, NVIDIA L40S 48GB, Token Generation Speed, Quantization, LLM, AI, Conversational AI, Content Generation, Summarization, Translation, Performance, GPU, Half-Precision, F16, Q4_K_M, Local Inference, LLM Optimization