8 Surprising Facts About Running Llama3 8B on NVIDIA 3090 24GB x2

Ready to unleash the power of local LLMs? Hold on to your hats, folks, because we're about to delve into the fascinating world of running the Llama3 8B model on a beefy dual NVIDIA 3090 24GB setup (two cards, 48 GB of VRAM in total). This isn't your average run-of-the-mill LLM configuration; we're talking serious horsepower here.

Imagine having the cognitive capabilities of a powerful language model at your fingertips, available anytime, anywhere, without relying on cloud APIs or server connections. This opens up a whole new world of possibilities for developers, researchers, and even hobbyists.

This article will dissect the performance characteristics of Llama3 8B on the dual NVIDIA 3090 24GB setup, revealing surprising facts and insights into the world of local LLMs. We'll explore token generation speeds for the Q4_K_M and F16 formats, compare the results with the larger Llama3 70B model on the same hardware, and offer practical recommendations for using this setup. So, buckle up and prepare to be amazed!

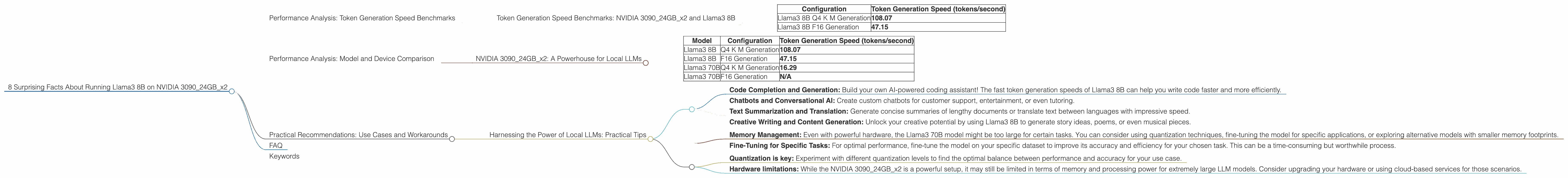

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 3090 24GB x2 and Llama3 8B

Let's start with the raw numbers. The dual NVIDIA 3090 24GB setup delivers impressive token generation speeds for the Llama3 8B model.

Here's what we observed:

| Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M Generation | 108.07 |
| Llama3 8B F16 Generation | 47.15 |

What do these numbers mean?

- Llama3 8B Q4_K_M Generation: This configuration uses 4-bit quantization (the Q4_K_M scheme) to shrink the model's weights and memory footprint, which is what drives the higher token generation speed. Think of quantization as lossy compression for the model's weights: there is less data to move for every token, at the cost of a small loss in accuracy.

- Llama3 8B F16 Generation: This configuration stores the weights in half-precision floating point (F16), at 16 bits per weight. In this comparison it is the unquantized baseline: it stays closer to the model's original accuracy than Q4_K_M, but the weights take up far more memory and generation is slower.
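
To make the size difference concrete, here is a rough back-of-the-envelope sketch. It assumes a nominal 8 billion parameters and an average of about 4.85 bits per weight for Q4_K_M; both figures are assumptions for illustration, not measurements from these benchmarks, and the estimate covers weights only (no KV cache or runtime overhead).

```python
def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS_8B = 8.0e9  # assumption: nominal parameter count for an "8B" model

# Assumed average bits per weight. F16 is exactly 16 bits;
# the Q4_K_M figure is an approximation used for illustration.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

sizes = {
    name: weight_size_gb(N_PARAMS_8B, bits)
    for name, bits in BITS_PER_WEIGHT.items()
}

for name, gb in sizes.items():
    print(f"{name}: ~{gb:.1f} GB of weights")
```

Under these assumptions the F16 weights come to about 16 GB and the Q4_K_M weights to just under 5 GB, so the quantized model is roughly a third of the size.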

Key Takeaways:

- Quantization is your best friend: Q4_K_M delivers more than double the token generation speed of F16 (108.07 vs. 47.15 tokens/second, about 2.3x). This underscores the importance of choosing the right quantization level for your needs.

- Speed versus accuracy: F16 is the slower option here; what it buys you is fidelity, because the weights are not quantized. Q4_K_M gives up a little accuracy in exchange for its speed and smaller memory footprint. This is a classic trade-off you'll encounter in the world of LLMs.
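
The takeaways above boil down to simple arithmetic on the two measured speeds. The snippet below is a small sketch of that calculation; the only inputs are the numbers from the table.

```python
# Generation speeds (tokens/second) taken from the table above.
SPEEDS = {"Q4_K_M": 108.07, "F16": 47.15}

# How many times faster Q4_K_M generates tokens than F16.
speedup = SPEEDS["Q4_K_M"] / SPEEDS["F16"]

# Average time spent on each generated token, in milliseconds.
ms_per_token = {name: 1000.0 / tps for name, tps in SPEEDS.items()}

print(f"Q4_K_M vs F16 speedup: {speedup:.2f}x")
for name, ms in ms_per_token.items():
    print(f"{name}: {ms:.1f} ms per token")
```

That works out to roughly 2.29x, or about 9.3 ms per token for Q4_K_M versus about 21.2 ms for F16.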

Performance Analysis: Model and Device Comparison

NVIDIA 3090 24GB x2: A Powerhouse for Local LLMs

While our focus is on the Llama3 8B model, it's enlightening to compare its performance with the larger Llama3 70B model on the same dual NVIDIA 3090 24GB setup.

Here's the comparison:

| Model | Configuration | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M Generation | 108.07 |
| Llama3 8B | F16 Generation | 47.15 |
| Llama3 70B | Q4_K_M Generation | 16.29 |
| Llama3 70B | F16 Generation | N/A |

Key Takeaways:

- Size Matters: The smaller Llama3 8B model generates tokens far faster than the larger Llama3 70B model: 108.07 vs. 16.29 tokens/second at Q4_K_M, roughly 6.6x. This is expected given the smaller model's reduced memory footprint and computational demands.

- Quantization Advantage: On the 8B model, Q4_K_M is about 2.3x faster than F16. For the 70B model, Q4_K_M is the only configuration with a result at all. The F16 entry is N/A, most likely because 70 billion weights at 16 bits each need roughly 140 GB, far more than the 48 GB of combined VRAM. Quantization matters for small models and can be a prerequisite for large ones.

Analogy: Imagine you have a small, efficient car and a large, powerful truck. The small car gets through city streets faster and with less effort. The truck, while powerful, is less nimble and might struggle with tight spaces. The same principle applies here: smaller models can be faster and require less computing power.
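
To get a feel for what these speeds mean in practice, the sketch below estimates how long each configuration would take to generate a reply. The 500-token reply length is a hypothetical example, and the estimate ignores prompt processing time, which these benchmarks do not cover.

```python
# Generation speeds (tokens/second) copied from the comparison table.
# The Llama3 70B F16 row is omitted because its result is N/A.
BENCHMARKS = {
    ("Llama3 8B", "Q4_K_M"): 108.07,
    ("Llama3 8B", "F16"): 47.15,
    ("Llama3 70B", "Q4_K_M"): 16.29,
}

REPLY_TOKENS = 500  # hypothetical reply length, chosen for illustration


def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady generation speed."""
    return n_tokens / tokens_per_second


reply_seconds = {
    config: generation_seconds(REPLY_TOKENS, tps)
    for config, tps in BENCHMARKS.items()
}

for (model, fmt), seconds in reply_seconds.items():
    print(f"{model} {fmt}: ~{seconds:.1f} s for {REPLY_TOKENS} tokens")
```

On these numbers, a 500-token reply takes roughly 4.6 s on Llama3 8B Q4_K_M, 10.6 s on Llama3 8B F16, and 30.7 s on Llama3 70B Q4_K_M.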

Practical Recommendations: Use Cases and Workarounds

Harnessing the Power of Local LLMs: Practical Tips

So, you've got this incredible dual NVIDIA 3090 24GB setup and the Llama3 8B model at your disposal. What can you do with it? Here are some practical use cases and workarounds:

- Code Completion and Generation: Build your own AI-powered coding assistant! The fast token generation speeds of Llama3 8B can help you write code faster and more efficiently.

- Chatbots and Conversational AI: Create custom chatbots for customer support, entertainment, or even tutoring.

- Text Summarization and Translation: Generate concise summaries of lengthy documents or translate text between languages with impressive speed.

- Creative Writing and Content Generation: Unlock your creative potential by using Llama3 8B to generate story ideas, poems, or even musical pieces.

Workarounds for limitations:

- Memory Management: Even with 48 GB of combined VRAM, the Llama3 70B model only produced a result at Q4_K_M; its F16 entry is N/A. If a model doesn't fit, use a more aggressive quantization or pick a model with a smaller memory footprint.

- Fine-Tuning for Specific Tasks: Fine-tuning the model on your own dataset can improve its accuracy on your chosen task, which may let a smaller, faster model do the job. This can be a time-consuming but worthwhile process.

Don't forget:

- Quantization is key: Experiment with different quantization levels to find the optimal balance between performance and accuracy for your use case.

- Hardware limitations: While the dual NVIDIA 3090 24GB setup is powerful, its 48 GB of combined VRAM and its processing power can still be a limit for extremely large LLMs. Consider upgrading your hardware or using cloud-based services for those scenarios.
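
As a rough way to reason about that limit, the sketch below turns a VRAM budget into the largest model size that could fit at a given precision. The 4 GB reserve for KV cache and runtime overhead and the 4.85 bits per weight for Q4_K_M are illustrative assumptions, and the helper name `max_params_billions` is hypothetical.

```python
def max_params_billions(
    vram_gb: float, bits_per_weight: float, reserve_gb: float = 4.0
) -> float:
    """Largest parameter count (in billions) whose weights fit in VRAM.

    reserve_gb is an assumed allowance for KV cache and runtime overhead.
    """
    usable_bytes = (vram_gb - reserve_gb) * 1e9
    return usable_bytes * 8 / bits_per_weight / 1e9


TOTAL_VRAM_GB = 2 * 24  # two 24 GB cards, treated as one 48 GB pool

limit_f16 = max_params_billions(TOTAL_VRAM_GB, 16.0)
limit_q4 = max_params_billions(TOTAL_VRAM_GB, 4.85)  # assumed Q4_K_M average

print(f"F16:    up to ~{limit_f16:.0f}B parameters")
print(f"Q4_K_M: up to ~{limit_q4:.0f}B parameters")
```

Under these assumptions the budget tops out at about 22B parameters for F16 and about 73B for Q4_K_M, which lines up with the table: Llama3 70B runs at Q4_K_M but has no F16 result.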

FAQ

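Q: Can I run a 70B model on this setup? A: Yes, with quantization. In the benchmarks above, Llama3 70B at Q4_K_M generated 16.29 tokens/second. The F16 result is N/A: at 16 bits per weight, a 70B model needs roughly 140 GB for its weights alone, well beyond the 48 GB available, so running it unquantized calls for more hardware or a cloud-based platform.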

Q: What are the trade-offs between different quantization levels? A: More aggressive quantization (e.g., Q4_K_M, around 4 bits per weight) reduces the model's size and memory footprint, leading to faster token generation. However, this might result in some loss of accuracy. Higher-precision formats (e.g., F16, which is unquantized 16-bit floating point) stay closer to the original model's accuracy but are larger and slower.

Q: How do I choose the right model and configuration? A: Consider the task you want to perform and your hardware limitations. For tasks requiring high speed and minimal memory usage, smaller models with more aggressive quantization are preferable. For more complex tasks where accuracy is paramount, larger models or higher-precision formats might be more appropriate, provided they fit in memory.
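
One way to turn that advice into a repeatable decision is sketched below: given a minimum acceptable generation speed, pick the largest model that meets it, breaking ties in favor of higher precision. The selection rule and the `pick_config` helper are hypothetical illustrations rather than an established method; the speeds come from the tables above, and the 4.85 bits per weight for Q4_K_M is an assumed average.

```python
from typing import NamedTuple, Optional


class Config(NamedTuple):
    model: str
    params_b: float           # nominal parameter count, in billions
    fmt: str                  # weight format
    bits: float               # bits per weight (Q4_K_M value is assumed)
    tokens_per_second: float  # measured generation speed from the tables


CONFIGS = [
    Config("Llama3 8B", 8.0, "Q4_K_M", 4.85, 108.07),
    Config("Llama3 8B", 8.0, "F16", 16.0, 47.15),
    Config("Llama3 70B", 70.0, "Q4_K_M", 4.85, 16.29),
]


def pick_config(min_tokens_per_second: float) -> Optional[Config]:
    """Return the largest, then highest-precision, config that is fast enough."""
    candidates = [
        c for c in CONFIGS if c.tokens_per_second >= min_tokens_per_second
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda c: (c.params_b, c.bits))


for target in (10.0, 30.0, 100.0):
    choice = pick_config(target)
    print(f">= {target:.0f} tok/s:", f"{choice.model} {choice.fmt}" if choice else "none")
```

With these inputs, a 10 tokens/second floor selects Llama3 70B Q4_K_M, 30 selects Llama3 8B F16, and 100 selects Llama3 8B Q4_K_M.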

Q: Is this setup suitable for deploying LLMs in production environments? A: The dual NVIDIA 3090 24GB setup can be a solid foundation for deploying LLMs in production environments, but it's crucial to consider other factors such as scalability, reliability, and security.

Keywords

Llama3 8B, NVIDIA 3090 24GB x2, token generation speed, quantization, F16, Q4_K_M, LLM, local LLM models, model inference, performance benchmarks, use cases, workarounds, code generation, chatbots, text summarization, translation, creative writing, content generation, AI-powered coding assistant, conversational AI.