8 Surprising Facts About Running Llama3 8B on NVIDIA 3080 Ti 12GB

Ever wished you could harness the power of large language models (LLMs) directly on your own hardware? You're not alone! With the rise of local LLMs, we can now explore AI text generation without relying on remote servers.

This article looks at the performance of Llama3 8B running on the popular NVIDIA 3080 Ti 12GB GPU. We'll go through the available benchmark numbers, note where data is missing, and share practical ways to get the most out of this combination.

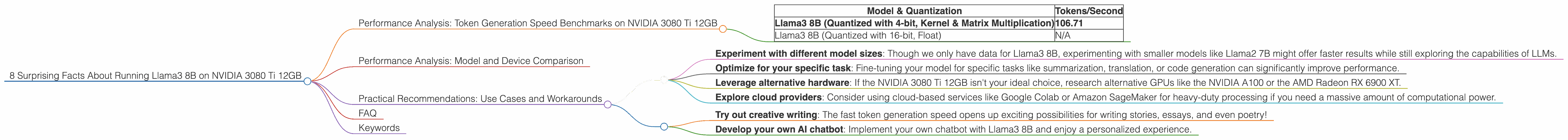

Performance Analysis: Token Generation Speed Benchmarks on NVIDIA 3080 Ti 12GB

Let's kick things off with the heart of the matter – how fast can we generate text using Llama3 8B on the NVIDIA 3080 Ti 12GB?

| Model & Quantization | Tokens/Second |
|---|---|
| Llama3 8B (Quantized with 4-bit, Kernel & Matrix Multiplication) | 106.71 |
| Llama3 8B (Quantized with 16-bit, Float) | N/A |

What are tokens? Think of tokens as the building blocks of text. A token can be a whole word, a fragment of a word, a punctuation mark, or a special character. Counting tokens per second gives a good measure of how much text the model can process in a given time.

In this case: Llama3 8B in the 4-bit configuration listed above generates 106.71 tokens/second. That is well above the pace at which people read, so interaction with the model feels fast and responsive, which suits generating text on the fly.
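To make the tokens/second figure concrete, here is a minimal sketch that converts it into waiting time for replies of different lengths. The reply lengths are illustrative assumptions, and the estimate covers generation only, not the time spent processing the prompt.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady generation rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


MEASURED_TPS = 106.71  # 4-bit result from the benchmark table

# Illustrative reply lengths (assumptions, not measurements).
for n in (50, 250, 1000):
    seconds = generation_time_seconds(n, MEASURED_TPS)
    print(f"{n:>5} tokens: {seconds:.1f} s")
```

Swap in your own measured rate to see how a slower or faster setup would feel in practice.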

What about 16-bit? Unfortunately, we don't have data for Llama3 8B with 16-bit weights on the NVIDIA 3080 Ti 12GB. The most likely reason is memory rather than compute: roughly 8 billion weights at 2 bytes each need about 16 GB, more than the card's 12 GB of VRAM. Running the 16-bit model on a GPU with more memory would be an interesting comparison.
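A rough way to check whether a given precision can fit on the card is to estimate the size of the weights alone. The sketch below assumes exactly 8 billion parameters and exactly 16 or 4 bits per weight; real 4-bit formats store some extra scaling data, and the runtime also needs memory for the context cache and activations, so treat the results as lower bounds.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in decimal gigabytes."""
    return num_params * bits_per_weight / 8 / 1e9


PARAMS = 8e9    # "8B" taken at face value (assumption)
VRAM_GB = 12.0  # NVIDIA 3080 Ti 12GB

for label, bits in (("16-bit", 16), ("4-bit", 4)):
    need = weight_memory_gb(PARAMS, bits)
    verdict = "fits" if need < VRAM_GB else "does not fit"
    print(f"{label}: ~{need:.0f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions the 16-bit weights alone exceed the card's memory, while the 4-bit weights leave room to spare.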

Performance Analysis: Model and Device Comparison

Let's look at how Llama3 8B stacks up against other models and how it performs on the NVIDIA 3080 Ti 12GB compared to other GPUs.

Unfortunately, we don't have enough data to make meaningful comparisons with other models or devices. It's important to remember that these benchmarks are highly dependent on specific model configurations, quantization methods, and device hardware.

Think of it like this: Comparing apples and oranges isn't always fruitful! We need to consider all the factors to understand the true performance picture.
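If you want numbers you can compare fairly, one option is to measure your own setups with the same prompt and the same settings. The sketch below is a generic timing harness; `generate` is a hypothetical callable standing in for whatever local runtime you use, and `fake_generate` exists only so the example runs without a model. Note that it times the whole call, so prompt processing is included in the rate.

```python
import time
from typing import Callable


def measure_tokens_per_second(generate: Callable[[str, int], int],
                              prompt: str, max_tokens: int,
                              runs: int = 3) -> float:
    """Average tokens/second over several runs.

    `generate` (hypothetical) runs the model and returns the number of
    tokens it actually produced.
    """
    rates = []
    for _ in range(runs):
        start = time.perf_counter()
        produced = generate(prompt, max_tokens)
        elapsed = time.perf_counter() - start
        rates.append(produced / elapsed)
    return sum(rates) / len(rates)


def fake_generate(prompt: str, max_tokens: int) -> int:
    """Stand-in for a real model: sleeps briefly, then reports token count."""
    time.sleep(0.05)
    return max_tokens


rate = measure_tokens_per_second(fake_generate, "Hello", max_tokens=50)
print(f"{rate:.1f} tokens/s")
```

Averaging several runs smooths out one-off delays such as the first call loading the model into memory.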

Practical Recommendations: Use Cases and Workarounds

Now that we've established the performance of Llama3 8B on the NVIDIA 3080 Ti 12GB, let's dive into some practical examples and tips:

For developers and researchers:

- Experiment with different model sizes: Though we only have data for Llama3 8B, experimenting with smaller models like Llama2 7B might offer faster results while still exploring the capabilities of LLMs.

- Optimize for your specific task: Fine-tuning your model for specific tasks like summarization, translation, or code generation can significantly improve the quality of its output on those tasks, although it generally does not change generation speed.

- Leverage alternative hardware: If the NVIDIA 3080 Ti 12GB isn't your ideal choice, research alternative GPUs like the NVIDIA A100 or the AMD Radeon RX 6900 XT.

- Explore cloud providers: Consider using cloud-based services like Google Colab or Amazon SageMaker for heavy-duty processing if you need a massive amount of computational power.

For everyday users:

- Try out creative writing: The fast token generation speed opens up exciting possibilities for writing stories, essays, and even poetry!

- Develop your own AI chatbot: Implement your own chatbot with Llama3 8B and enjoy a personalized experience.
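As a starting point for the chatbot idea, here is a minimal sketch of the conversation loop: keep a message history, pass it to the model, and trim old turns so the prompt does not grow without limit. `echo_model` is a hypothetical stand-in; in a real setup you would replace it with a call into your local Llama3 8B runtime. The message format and the `max_messages` limit are assumptions for illustration.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def chat_turn(history: List[Message], user_text: str,
              generate: Callable[[List[Message]], str],
              max_messages: int = 20) -> str:
    """Append the user message, get a reply, and keep the history bounded."""
    if max_messages < 3:
        raise ValueError("max_messages must be at least 3")
    history.append({"role": "user", "content": user_text})
    reply = generate(history)
    history.append({"role": "assistant", "content": reply})
    # Drop the oldest turns first, but keep a leading system message.
    while len(history) > max_messages:
        del history[1 if history[0]["role"] == "system" else 0]
    return reply


def echo_model(messages: List[Message]) -> str:
    """Hypothetical stand-in for a real model call."""
    return "You said: " + messages[-1]["content"]


history = [{"role": "system", "content": "You are a helpful assistant."}]
print(chat_turn(history, "Hello!", echo_model))
```

Passing the model in as a function keeps the loop independent of any particular runtime, so you can change backends without rewriting the chat logic.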

Remember: These recommendations are just starting points. The world of LLMs is evolving rapidly, so always be curious and stay updated on the latest breakthroughs!

FAQ

Here are some common questions about LLMs and devices:

What are LLMs?

LLMs are a type of artificial intelligence that excel at understanding and generating natural language. They're trained on massive datasets of text and code, allowing them to perform tasks like summarization, translation, and question answering.

What is quantization?

Quantization is a technique used to reduce the size of LLM models without sacrificing too much accuracy. It involves representing the model's weights and activations with fewer bits, making them lighter and faster to load and execute. Think of it like compressing a picture: you lose some details, but the overall image remains recognizable.
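The idea can be sketched in a few lines. The example below is a simplified illustration, not the scheme used by any particular runtime: it maps a handful of made-up weights to 4-bit integers using a single shared scale, then converts them back to show the small rounding error. Real formats typically use separate scales for small blocks of weights.

```python
from typing import List, Tuple


def quantize_4bit(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-8, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the 4-bit integers."""
    return [v * scale for v in q]


weights = [0.12, -0.53, 0.98, -0.05, 0.41]  # made-up example values
q, scale = quantize_4bit(weights)
restored = dequantize(q, scale)

for w, r in zip(weights, restored):
    print(f"{w:+.2f} -> {r:+.2f} (error {abs(w - r):.3f})")
```

Each restored value lands within half a quantization step of the original, which is the "lost detail" in the picture-compression analogy.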

Why do different devices perform differently?

The performance of an LLM is highly dependent on the capabilities of the device it runs on. Factors like GPU memory size, processing power, and architecture all play a role. It's like comparing a sports car to a family sedan – both get you places, but one will be faster and more efficient than the other.
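One commonly used rule of thumb ties generation speed to memory bandwidth: if producing each token requires reading every weight once, the rate cannot exceed bandwidth divided by model size. The sketch below applies that rule with two assumed inputs: a spec-sheet bandwidth of 912 GB/s for the 3080 Ti and a 4 GB model (8 billion weights at exactly 4 bits). Both are simplifications, so read the result as a loose ceiling rather than a prediction.

```python
def decode_rate_ceiling(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on tokens/second if every token reads all weights once."""
    if model_size_gb <= 0:
        raise ValueError("model_size_gb must be positive")
    return bandwidth_gb_s / model_size_gb


BANDWIDTH_GB_S = 912.0  # assumed spec-sheet figure for the 3080 Ti
MODEL_SIZE_GB = 4.0     # assumed: 8e9 weights at exactly 4 bits each
MEASURED_TPS = 106.71   # from the benchmark table

ceiling = decode_rate_ceiling(BANDWIDTH_GB_S, MODEL_SIZE_GB)
print(f"ceiling: {ceiling:.0f} tokens/s, measured: {MEASURED_TPS} tokens/s")
```

A measured rate below the ceiling is expected, since real workloads also spend time on computation, cache reads, and overhead.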

What if I don't have a high-end GPU?

You can still explore local LLMs on a modest device! Consider using smaller models and more aggressive quantization (fewer bits per weight), and look into distilled models, which are smaller models trained to imitate larger ones.

Keywords

Llama3 8B, NVIDIA 3080 Ti 12GB, LLM, Large Language Models, local models, token generation speed, performance benchmarks, GPU, quantization, 4-bit, 16-bit, use cases, recommendations, developers, researchers, everyday users.