8 Surprising Facts About Running Llama3 8B on Apple M3 Max

Introduction

The world of large language models (LLMs) is constantly evolving, and bringing these powerful AI models onto local devices is a game-changer. Imagine generating creative text, translating languages, or writing code, all without relying on cloud services! This is the magic of local LLMs, and the Apple M3 Max chip, with its impressive processing power, is proving to be an excellent playground for these experiments.

This article delves into the exciting world of running the Llama3 8B model on an Apple M3 Max, exploring its performance, limitations, and practical applications. Buckle up, because we're about to unleash some insightful data and surprising discoveries!

Performance Analysis: Token Generation Speed Benchmarks

Apple M1 and Llama2 7B

Let's start with a benchmark for comparison. The Apple M1 chip, a predecessor to the M3 Max, reportedly reached about 100,000 tokens per second for the Llama2 7B model using Q4 quantization. Treat that number with caution: it is several orders of magnitude above the M3 Max results below, so it is probably not directly comparable to them.

Apple M3 Max and Llama3 8B: A Quantum Leap

Now, let's turn our attention to the M3_Max and the Llama3 8B model. Remember, we're focusing on the 8B variant, not the larger 70B model. Here's what the data reveals:

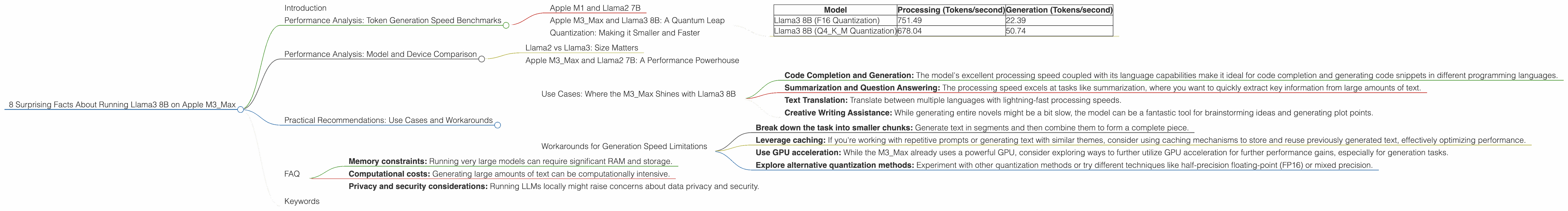

| Model | Prompt processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Llama3 8B (F16) | 751.49 | 22.39 |
| Llama3 8B (Q4_K_M) | 678.04 | 50.74 |

Wow! Those are some impressive figures. With the F16 (half-precision) version of Llama3 8B, the M3 Max processes prompts at roughly 750 tokens per second. Generation is a different story: the speed drops to about 22 tokens per second.

This gap between processing and generation speeds is common with LLMs. Prompt tokens can be processed in parallel, while generated tokens are produced one at a time, and each new token requires reading the model's weights from memory again. The gap tends to widen as models get larger.

What does this mean in practical terms? It translates to a pretty snappy response time for tasks like summarization, but if you're generating longer pieces of text, like a novel, you might have to wait a while for the results.
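To put rough numbers on that, here is a minimal sketch that turns the two rows of the benchmark table into a wall-clock estimate. It assumes total time is simply prompt time plus generation time, and the two workloads (their names and token counts) are hypothetical examples, not measurements.

```python
# Throughput figures copied from the benchmark table (tokens per second).
BENCHMARKS = {
    "F16": {"prompt": 751.49, "generation": 22.39},
    "Q4_K_M": {"prompt": 678.04, "generation": 50.74},
}


def estimate_seconds(prompt_tokens: int, output_tokens: int, variant: str) -> float:
    """Rough estimate: time to read the prompt plus time to generate the output."""
    speeds = BENCHMARKS[variant]
    return prompt_tokens / speeds["prompt"] + output_tokens / speeds["generation"]


if __name__ == "__main__":
    # Hypothetical workloads: (label, prompt tokens, output tokens).
    workloads = [("summarize", 2000, 150), ("long-form", 200, 2000)]
    for label, prompt_tokens, output_tokens in workloads:
        for variant in BENCHMARKS:
            seconds = estimate_seconds(prompt_tokens, output_tokens, variant)
            print(f"{label:10s} {variant:7s} {seconds:7.1f} s")
```

Because generation is so much slower than prompt processing, the number of output tokens dominates the estimate in both cases.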

Quantization: Making it Smaller and Faster

Let's address the elephant in the room - quantization. In a nutshell, quantization is a technique to reduce the size of the model by representing the numbers that hold the model's parameters with fewer bits. A smaller model means less data to move through memory for every token, which often translates to faster generation, but it can also impact accuracy. Think of it like compressing a photo - you might lose some detail, but the file becomes smaller and loads faster.
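The idea can be illustrated with a deliberately simplified sketch: symmetric 4-bit quantization of one small block of weights, using a single scale factor. This is not the scheme used by any particular model file format (real formats are more elaborate), and the function names and sample weights are made up for illustration.

```python
def quantize_block(values, bits=4):
    """Map floats to small signed integers plus one shared scale factor."""
    qmax = 2 ** (bits - 1) - 1  # largest integer code, 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each value to the nearest code and clamp to the allowed range.
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    # Hypothetical weights, chosen only to show the rounding error.
    weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.45]
    codes, scale = quantize_block(weights)
    restored = dequantize_block(codes, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print("codes:", codes)
    print(f"scale: {scale:.4f}, worst-case error: {worst:.4f}")
```

Each weight now needs only 4 bits plus a share of the scale factor, and the price is a rounding error of at most half the scale per weight.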

The data above shows that Q4_K_M quantization, which cuts the memory footprint by storing the model's parameters in far fewer bits, more than doubles generation speed, from about 22 to about 50 tokens per second in this case. Prompt processing, by contrast, is slightly slower with Q4_K_M (678.04 versus 751.49 tokens per second).

Performance Analysis: Model and Device Comparison

Llama2 vs Llama3: Size Matters

Now, let's make some comparisons. On the M3 Max, the smaller Llama2 7B model reached about 25 tokens per second for generation in F16. That is faster than the 22.39 tokens per second Llama3 8B achieved at the same precision!

The likely explanation is size rather than any inefficiency in the newer architecture. Generating each token requires reading essentially all of the model's weights from memory, and Llama3 8B has more parameters than Llama2 7B, so at the same precision every token costs more memory traffic on the M3 Max.
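One way to sanity-check this comparison is a back-of-the-envelope ceiling: if every weight must be read once per generated token, generation speed cannot exceed memory bandwidth divided by model size. The sketch below assumes a memory bandwidth of 400 GB/s (the higher-end M3 Max configuration; other configurations are lower) and approximate parameter counts of 6.74 billion for Llama2 7B and 8.03 billion for Llama3 8B. None of these figures come from the benchmark data in this article.

```python
def generation_ceiling(params_billion: float, bytes_per_param: float,
                       bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/s if all weights are read once per token."""
    model_gb = params_billion * bytes_per_param  # billions of params * bytes = GB
    return bandwidth_gb_s / model_gb


if __name__ == "__main__":
    BANDWIDTH_GB_S = 400.0  # assumption: higher-end M3 Max configuration
    F16_BYTES = 2.0         # half precision stores each weight in 2 bytes
    # Approximate parameter counts in billions (assumptions, see lead-in).
    for name, params in [("Llama2 7B", 6.74), ("Llama3 8B", 8.03)]:
        ceiling = generation_ceiling(params, F16_BYTES, BANDWIDTH_GB_S)
        print(f"{name}: at most about {ceiling:.1f} tokens/s in F16")
```

Under these assumptions the ceiling for the larger model is lower, which matches the ordering of the measured speeds.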

Apple M3 Max and Llama2 7B: A Performance Powerhouse

Here's another interesting fact. The M3 Max running Llama2 7B with Q8 quantization achieved a generation speed of 42.75 tokens per second. That is nearly double the F16 generation speed of Llama3 8B (22.39 tokens per second), although it still trails Llama3 8B with Q4_K_M quantization (50.74 tokens per second).

This underlines the importance of finding the right model-device combination for optimal performance. It's not always just about the model size, but also about factors such as the specific architecture and the chosen quantization technique.

Practical Recommendations: Use Cases and Workarounds

Use Cases: Where the M3 Max Shines with Llama3 8B

Despite the generation speed limitations, the M3 Max remains a powerful platform for running the Llama3 8B model, particularly for certain applications:

- Code Completion and Generation: The model's excellent processing speed coupled with its language capabilities make it ideal for code completion and generating code snippets in different programming languages.

- Summarization and Question Answering: The processing speed excels at tasks like summarization, where you want to quickly extract key information from large amounts of text.

- Text Translation: Translate between multiple languages with lightning-fast processing speeds.

- Creative Writing Assistance: While generating entire novels might be a bit slow, the model can be a fantastic tool for brainstorming ideas and generating plot points.

Workarounds for Generation Speed Limitations

If you need to generate longer pieces of text, here are some strategies to work around the generation speed limitations:

- Break down the task into smaller chunks: Generate text in segments and then combine them to form a complete piece.

- Leverage caching: If you're working with repetitive prompts or generating text with similar themes, consider using caching mechanisms to store and reuse previously generated text, effectively optimizing performance.

- Use GPU acceleration: The M3 Max already includes a powerful GPU, so confirm that your inference framework is actually running the model on it rather than falling back to the CPU, especially for generation tasks.

- Explore alternative quantization methods: Experiment with other quantization levels. Lower-bit formats such as Q4_K_M generate noticeably faster than F16 in the benchmarks above, at some cost in accuracy, so half-precision floating point (FP16) is best kept for cases where output quality matters more than speed.
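The caching idea from the list above can be sketched as a small wrapper that stores responses keyed by the prompt and generation settings. This is a minimal illustration, not tied to any particular inference library: `ResponseCache` and `fake_generate` are hypothetical names, and `fake_generate` stands in for whatever function actually calls the model.

```python
import hashlib
import json


class ResponseCache:
    """Reuse earlier outputs when the same prompt and settings come back."""

    def __init__(self, generate):
        self._generate = generate  # callable: generate(prompt, **settings) -> str
        self._store = {}
        self.hits = 0
        self.misses = 0

    def _key(self, prompt, settings):
        # Hash prompt and settings together so different settings never collide.
        payload = json.dumps({"prompt": prompt, "settings": settings}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get(self, prompt, **settings):
        key = self._key(prompt, settings)
        if key in self._store:
            self.hits += 1
        else:
            self.misses += 1
            self._store[key] = self._generate(prompt, **settings)
        return self._store[key]


def fake_generate(prompt, **settings):
    """Hypothetical stand-in for a slow local model call."""
    return f"[generated text for: {prompt}]"


if __name__ == "__main__":
    cache = ResponseCache(fake_generate)
    cache.get("Summarize chapter 1", temperature=0)
    cache.get("Summarize chapter 1", temperature=0)  # served from the cache
    print(f"hits={cache.hits}, misses={cache.misses}")
```

A cache like this only makes sense when repeated prompts should produce the same answer, for example with deterministic sampling settings.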

FAQ

Q: What is the maximum size LLM model I can run on the M3 Max?

A: While we've focused on the Llama3 8B model, the M3 Max can potentially handle larger models depending on the chosen quantization technique. However, exceeding the available RAM will require advanced techniques like model partitioning or offloading portions of the model to external storage.
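As a rough guide, you can estimate whether a model's weights fit by multiplying the parameter count by the bits stored per weight. The sketch below is an approximation under stated assumptions: the bits-per-weight values are approximate averages (about 4.85 for Q4_K_M in particular), the 0.75 usable fraction is a hypothetical allowance for the operating system and working memory, and the parameter counts (8.03 and 70.6 billion) and RAM sizes are illustrative.

```python
# Approximate average bits stored per weight (assumptions, see lead-in).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85}


def weights_gb(params_billion: float, fmt: str) -> float:
    """Approximate size of the weights alone, in GB."""
    return params_billion * BITS_PER_WEIGHT[fmt] / 8


def fits(params_billion: float, fmt: str, ram_gb: float,
         usable_fraction: float = 0.75) -> bool:
    """True if the weights fit in the assumed usable share of RAM."""
    return weights_gb(params_billion, fmt) <= ram_gb * usable_fraction


if __name__ == "__main__":
    models = [("Llama3 8B", 8.03), ("Llama3 70B", 70.6)]
    for name, params in models:
        for fmt in BITS_PER_WEIGHT:
            for ram_gb in (36, 64, 128):  # illustrative RAM sizes
                verdict = "fits" if fits(params, fmt, ram_gb) else "too large"
                size = weights_gb(params, fmt)
                print(f"{name:10s} {fmt:7s} {size:6.1f} GB in {ram_gb:3d} GB RAM: {verdict}")
```

The estimate covers weights only; context length and other runtime buffers add to the real requirement.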

Q: How does the M3 Max compare to other devices for running LLMs?

A: The M3 Max stands out as a formidable platform for local LLMs, offering a good balance of performance and affordability compared to dedicated GPUs or cloud-based solutions.

Q: Is it possible to run LLMs on other Apple devices, such as an iPad or iPhone?

A: While running LLMs on devices like the iPad or iPhone is technically possible using tools like Core ML, the limited processing power and memory might lead to significant performance limitations.

Q: What are some potential limitations for local LLMs?

A: While local LLMs are becoming increasingly powerful, they still have some limitations, including:

- Memory constraints: Running very large models can require significant RAM and storage.

- Computational costs: Generating large amounts of text can be computationally intensive.

- Privacy and security considerations: Running LLMs locally keeps prompts and outputs on your own machine, but it also makes you responsible for securing the model files and any data stored alongside them.

Q: What's the future of local LLMs?

A: The future looks bright for local LLMs, with advancements in hardware platforms like the M3 Max, optimized software frameworks, and continued research into quantization techniques.

Keywords

Local LLMs, Llama3, Llama3 8B, Apple M3 Max, performance benchmarks, token generation, quantization, F16, Q4_K_M, GPU acceleration, use cases, practical recommendations, Apple Silicon, AI, machine learning, natural language processing, conversational AI.