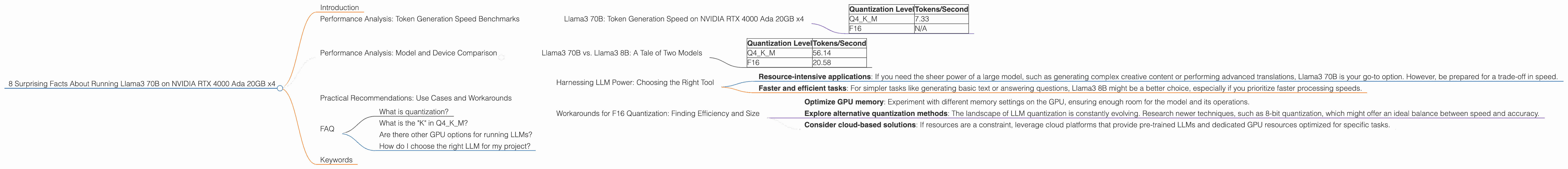

8 Surprising Facts About Running Llama3 70B on NVIDIA RTX 4000 Ada 20GB x4

Introduction

The world of large language models (LLMs) is exploding, with new models and techniques emerging constantly. But how do we actually run these behemoths on our own hardware? This article explores the performance of Llama3 70B on a powerful setup: four NVIDIA RTX 4000 Ada 20GB GPUs. We'll delve into surprising benchmark results, uncover the secrets behind these numbers, and provide practical recommendations for your own LLM adventures.

Think of LLMs as super-intelligent robots with vast knowledge, capable of generating creative text, translating languages, and even writing code. But feeding these robots the right information requires powerful hardware, and that's where our exploration comes in. Buckle up, because the journey will be full of unexpected twists and turns!

Performance Analysis: Token Generation Speed Benchmarks

Llama3 70B: Token Generation Speed on NVIDIA RTX 4000 Ada 20GB x4

We'll start with the heart of the matter: token generation speed. Imagine a text stream as a river flowing with words. A token is a word or a fragment of a word, and tokens per second measures how quickly the model produces them: the higher the number, the smoother the flow of language.
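To make the metric concrete, here is a minimal sketch of how tokens per second can be measured for any streaming generator. The `fake_stream` function is a hypothetical stand-in for a real model's output, not part of any library, and the timing only covers generation, not model loading.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return tokens emitted per wall-clock second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # pull every token from the stream
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_stream(n_tokens: int = 20, delay_s: float = 0.005):
    """Hypothetical stand-in for a model: yields one token every delay_s seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


rate = tokens_per_second(fake_stream)
print(f"{rate:.1f} tokens/s")
```

Swapping `fake_stream` for a function that streams tokens from a real model would give a figure comparable in spirit to the ones reported in this article, although the exact benchmark procedure used here is not stated.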

Here's where things get interesting. We ran Llama3 70B on our GPU setup with two different weight formats:

- Q4KM (usually written Q4_K_M): A 4-bit quantization that shrinks the model's weights without sacrificing too much accuracy. Think of it as making a high-resolution image smaller without losing all the detail: it's still impressive!

- F16: 16-bit (half-precision) floating point. This is the larger, higher-fidelity format, using 16 bits per weight, and it is not a compressed format. Think of it as something close to the full-resolution image that Q4KM is shrunk from.

| Quantization Level | Tokens/Second |
|---|---|
| Q4KM | 7.33 |
| F16 | N/A |

With only one measured value, there is no speed comparison to make between the two formats here. Q4KM reaches 7.33 tokens per second on Llama3 70B. The F16 result is not available, most likely because the model simply does not fit: 70 billion weights at 16 bits each need roughly 140 GB for the weights alone, well beyond the 80 GB of combined VRAM across four 20 GB cards.
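A rough back-of-the-envelope estimate helps explain why an F16 figure may be missing. The sketch below counts weight memory only and ignores the KV cache and runtime overhead. The 70e9 parameter count is nominal, gigabytes are decimal, and the 4.85 bits per weight for Q4KM is an approximate assumption rather than a measured value.

```python
GB = 1e9  # decimal gigabytes, used as a nominal unit


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Memory needed for the weights alone, in GB."""
    return n_params * bits_per_weight / 8 / GB


N_PARAMS_70B = 70e9        # nominal parameter count for Llama3 70B
TOTAL_VRAM_GB = 4 * 20     # four cards with 20 GB each

# Bits per weight: F16 is exactly 16; the Q4KM value is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.85}

for name, bits in BITS_PER_WEIGHT.items():
    need = weight_memory_gb(N_PARAMS_70B, bits)
    verdict = "fits" if need < TOTAL_VRAM_GB else "does not fit"
    print(f"{name}: ~{need:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the F16 weights alone come to about 140 GB, while the Q4KM weights need a little over 40 GB and leave room for context.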

Performance Analysis: Model and Device Comparison

Llama3 70B vs. Llama3 8B: A Tale of Two Models

It's tempting to compare the performance of Llama3 70B with a smaller brother, Llama3 8B, to understand the scale at play. But keep in mind, comparing apples and oranges is tricky!

Here's what we know about Llama3 8B running on the same NVIDIA RTX 4000 Ada 20GB x4 setup:

| Quantization Level | Tokens/Second |
|---|---|
| Q4KM | 56.14 |
| F16 | 20.58 |

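The performance contrast is significant. With the same Q4KM quantization, Llama3 8B reaches 56.14 tokens per second, roughly 7.7 times faster than its bigger brother. At F16 it still manages 20.58 tokens per second, because the 8B model's 16-bit weights (about 16 GB) fit comfortably in the available VRAM.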

Remember, the numbers don't tell the whole story. Llama3 8B is smaller and more efficient than Llama3 70B. It's like comparing a nimble sports car to a powerful truck — both have their uses, and the right tool depends on the job.
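To put the throughput figures in perspective, the sketch below turns them into a speed ratio and a waiting time. The rates are the ones reported in this article. The 500-token response length is a hypothetical example, and prompt processing time is ignored.

```python
# Tokens per second as reported in this article.
RATES = {
    "Llama3 70B Q4KM": 7.33,
    "Llama3 8B Q4KM": 56.14,
    "Llama3 8B F16": 20.58,
}


def seconds_for(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady rate, ignoring prompt processing."""
    return n_tokens / tokens_per_second


speedup = RATES["Llama3 8B Q4KM"] / RATES["Llama3 70B Q4KM"]
print(f"8B Q4KM is {speedup:.2f}x faster than 70B Q4KM")

RESPONSE_TOKENS = 500  # hypothetical response length
for name, rate in RATES.items():
    print(f"{name}: {seconds_for(RESPONSE_TOKENS, rate):.1f} s for {RESPONSE_TOKENS} tokens")
```

For a response of that length, the 70B model would take a little over a minute, while the 8B model at Q4KM would finish in under ten seconds.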

Practical Recommendations: Use Cases and Workarounds

Harnessing LLM Power: Choosing the Right Tool

The results reveal an important takeaway: LLMs are not one-size-fits-all. The choice between Llama3 70B and 8B depends heavily on the specific tasks you are trying to accomplish.

- Resource-intensive applications: If you need the sheer power of a large model, such as generating complex creative content or performing advanced translations, Llama3 70B is your go-to option. However, be prepared for a trade-off in speed.

- Fast, lightweight tasks: For simpler tasks like generating basic text or answering straightforward questions, Llama3 8B is often the better choice, especially if response time matters: on this setup it generates tokens several times faster than the 70B model.

Workarounds for F16 Quantization: Finding Efficiency and Size

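While F16 results were not available for Llama3 70B on this setup, there are several workarounds to consider:

- Optimize GPU memory: Experiment with settings that affect memory use, such as a shorter context length or a different split of layers across the four GPUs. Keep in mind that no setting can squeeze roughly 140 GB of F16 weights into 80 GB of VRAM; moving part of the model to system RAM is the usual fallback, and it typically costs a lot of speed.

- Explore alternative quantization methods: The landscape of LLM quantization is constantly evolving. Formats between Q4KM and F16, such as 5-, 6-, or 8-bit quantization, trade some speed and memory for accuracy closer to F16. Whether a given format fits in 80 GB depends on its exact bits per weight and on how much memory the context needs.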

- Consider cloud-based solutions: If resources are a constraint, leverage cloud platforms that provide pre-trained LLMs and dedicated GPU resources optimized for specific tasks.
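When weighing the quantization options above, it can help to estimate the largest number of bits per weight that a given amount of VRAM allows. The sketch below does that under stated assumptions: a nominal 70e9 parameters, decimal gigabytes, and a hypothetical 10% of VRAM reserved for the KV cache and runtime overhead. Real headroom requirements vary with context length and software.

```python
def max_bits_per_weight(n_params: float, vram_gb: float,
                        reserve_fraction: float = 0.10) -> float:
    """Largest average bits per weight whose weights fit in the usable VRAM.

    reserve_fraction is an assumed share of VRAM kept free for the KV cache
    and runtime overhead; it is illustrative, not a measured value.
    """
    usable_bytes = vram_gb * 1e9 * (1.0 - reserve_fraction)
    return usable_bytes * 8 / n_params


ceiling = max_bits_per_weight(n_params=70e9, vram_gb=4 * 20)
print(f"Llama3 70B on 80 GB: up to ~{ceiling:.1f} bits per weight")
```

Under these assumptions the ceiling is just over 8 bits per weight, so F16 is out of reach and an 8-bit format would leave very little headroom, while 5- and 6-bit formats fit with room to spare.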

FAQ

What is quantization?

Quantization is like simplifying a complex model by reducing the number of bits used to represent its values. Think of it as using fewer pixels to draw a picture — it's still recognizable, but with slightly less detail. Quantization helps make models smaller and faster without significantly sacrificing accuracy.
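The idea can be illustrated with a deliberately simplified sketch: symmetric round-to-nearest quantization of a handful of weights to 4-bit signed integers with one shared scale. This is not the scheme used by Q4KM, which works on blocks of weights with additional per-block parameters, and the sample values are made up for illustration.

```python
def quantize(values, bits=4):
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    """Recover approximate floats from the integers and the scale."""
    return [x * scale for x in q]


weights = [0.12, -0.53, 0.97, -0.08, 0.31]          # made-up example weights
q, scale = quantize(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("integers:", q)
print("restored:", [round(r, 3) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

Each weight is now stored as a small integer plus a shared scale, and the rounding error per weight is at most half the scale. That small, bounded loss is the "slightly less detail" described above.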

What is the "K" in Q4KM?

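The "K" does not stand for "kernel." Q4KM is usually written Q4_K_M: "Q4" means roughly 4 bits per weight, "K" marks the k-quant family of block-wise quantization schemes used with GGUF model files, and "M" is the medium variant (a small "S" variant also exists), which keeps some tensors at higher precision to balance size and accuracy.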

Are there other GPU options for running LLMs?

Absolutely! There are many powerful GPUs out there, each with its strengths and weaknesses. The key is to choose the GPU that best fits your specific needs and budget.

How do I choose the right LLM for my project?

That depends on your goals! Think about what you want the LLM to do, and choose a model that is powerful enough for the task without being unnecessarily large for your resources. You might also consider factors like the model’s accuracy, training data, and ethical considerations.

Keywords

LLMs, Llama3, NVIDIA RTX 4000 Ada, GPUs, token generation speed, performance analysis, model comparison, quantization, Q4KM, F16, practical recommendations, workarounds, use cases, cloud-based solutions, AI, machine learning, deep learning, natural language processing.