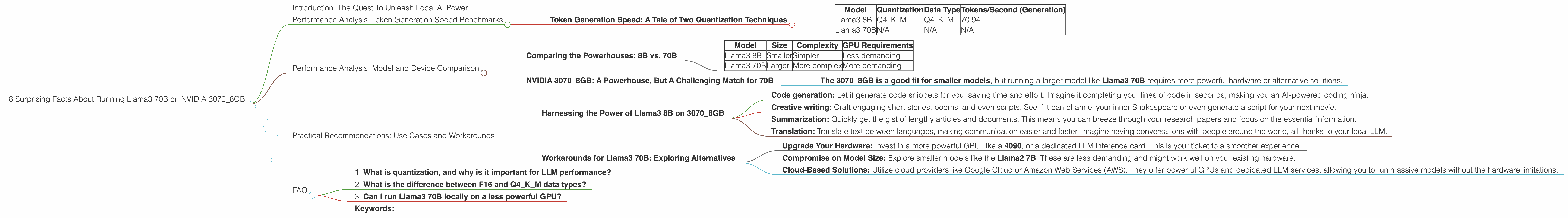

8 Surprising Facts About Running Llama3 70B on NVIDIA 3070 8GB

Introduction: The Quest To Unleash Local AI Power

You've heard the whispers, the buzz, the excitement: Large Language Models (LLMs) are revolutionizing how we interact with computers. They're composing poetry, writing code, and even, dare we say, producing text that reads like human reasoning. But these powerful models usually reside in the cloud, requiring an internet connection and potentially sacrificing privacy. What if you could bring the power of LLMs directly to your own machine, unleashing a local AI revolution?

This article takes a deep dive into the performance of the Llama3 70B model running on the NVIDIA 3070_8GB, a GPU commonly found in many gaming rigs and creative workstations. Get ready to discover the surprising facts about running an advanced LLM locally and learn how you can unlock its potential for your own projects.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed: A Tale of Two Quantization Techniques

Token generation speed is the holy grail of LLM performance. It determines how quickly your model can churn out text, whether it's composing a story or generating a code snippet. Our analysis focuses on two critical aspects:

1. Quantization: This technique shrinks the model's size, making it more efficient. Think of it like compressing a file to make it smaller without losing too much information.

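2. Data Type: The data type (e.g., F16 or Q4_K_M) determines how the model's weights are stored and processed. F16 keeps each weight as a 16-bit floating-point number, which preserves precision but needs the most memory. Q4_K_M is a 4-bit quantized format that needs roughly a third of that memory at a small cost in accuracy, and because token generation is usually limited by memory bandwidth, it often runs faster as well.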

Let's look at the numbers:

| Model | Quantization | Data Type | Tokens/Second (Generation) |
|---|---|---|---|
| Llama3 8B | Q4_K_M | Q4_K_M | 70.94 |
| Llama3 70B | N/A | N/A | N/A |

Note:

- Llama3 70B doesn't have any performance data available for the NVIDIA 3070_8GB on this specific setup.
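To put the throughput figure in wall-clock terms, here is a minimal sketch that converts tokens per second into an estimated generation time. It assumes a steady generation rate and ignores prompt processing; the response lengths in the loop are hypothetical examples, not measurements.

```python
# Generation throughput from the table (Llama3 8B, Q4_K_M, NVIDIA 3070_8GB).
TOKENS_PER_SECOND = 70.94


def generation_seconds(num_tokens, tokens_per_second=TOKENS_PER_SECOND):
    """Estimate seconds needed to generate num_tokens at a steady rate.

    Assumption: the rate stays constant and prompt processing is ignored.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    # Hypothetical response lengths, chosen only for illustration.
    for n in (100, 500, 2000):
        print(f"{n:>5} tokens: about {generation_seconds(n):.1f} s")
```

Real-world times will vary with context length, sampling settings, and whatever else is using the GPU.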

Key Takeaways:

- The Llama3 8B model with Q4_K_M quantization demonstrates a respectable token generation speed of 70.94 tokens per second.

- Llama3 70B is a far more demanding model, and its sheer size likely makes it challenging to handle on this particular GPU.
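A rough back-of-the-envelope calculation shows why size is the sticking point. The sketch below estimates the memory needed for the weights alone as parameters times bits per weight. The parameter counts are nominal round numbers, and the Q4_K_M figure of about 4.85 bits per weight is an assumption based on commonly reported values, not something measured for this article. The KV cache and runtime overhead are not included, so real requirements are higher.

```python
GIB = 1024 ** 3

# Bits per weight. F16 is exactly 16; the Q4_K_M value is an assumed average.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

# Nominal parameter counts (assumed round numbers).
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}

VRAM_GIB = 8.0  # NVIDIA 3070_8GB


def weight_gib(num_params, bits_per_weight):
    """Approximate size of the weights in GiB (no KV cache, no overhead)."""
    return num_params * bits_per_weight / 8 / GIB


if __name__ == "__main__":
    for model, params in PARAMS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weight_gib(params, bits)
            verdict = "fits" if size < VRAM_GIB else "does not fit"
            print(f"{model} {fmt}: ~{size:.1f} GiB, {verdict} in {VRAM_GIB:.0f} GiB")
```

Under these assumptions, only the quantized 8B model leaves headroom on an 8GB card, which is consistent with the benchmark table having data for that configuration alone.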

Performance Analysis: Model and Device Comparison

Comparing the Powerhouses: 8B vs. 70B

Imagine trying to fit a large, intricate puzzle on a small tabletop. That's what running a 70B model on a 3070_8GB is like. The 8B model, on the other hand, might be a more manageable puzzle, fitting comfortably on the tabletop.

Here's a simplified comparison:

| Model | Size | Complexity | GPU Requirements |
|---|---|---|---|
| Llama3 8B | Smaller | Simpler | Less demanding |
| Llama3 70B | Larger | More complex | More demanding |

NVIDIA 3070_8GB: A Powerhouse, But A Challenging Match for 70B

The NVIDIA 3070_8GB is a powerful GPU, but it has its limitations. Think of it as a high-performance sports car: excellent for speed, but not ideal for hauling a massive load. It handles the 8B model well, but the 70B model might be pushing its limits.

Key Takeaway:

- The 3070_8GB is a good fit for smaller models, but running a larger model like Llama3 70B requires more powerful hardware or alternative solutions.

Practical Recommendations: Use Cases and Workarounds

Harnessing the Power of Llama3 8B on 3070_8GB

The Llama3 8B model, with its respectable performance on the 3070_8GB, is a powerful tool for various tasks:

- Code generation: Let it generate code snippets for you, saving time and effort. Imagine it completing your lines of code in seconds, making you an AI-powered coding ninja.

- Creative writing: Craft engaging short stories, poems, and even scripts. See if it can channel your inner Shakespeare or even generate a script for your next movie.

- Summarization: Quickly get the gist of lengthy articles and documents. This means you can breeze through your research papers and focus on the essential information.

- Translation: Translate text between languages, making communication easier and faster. Imagine having conversations with people around the world, all thanks to your local LLM.

Workarounds for Llama3 70B: Exploring Alternatives

If you're determined to run the Llama3 70B model locally, consider these workarounds:

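- Upgrade Your Hardware: Invest in a GPU with more VRAM, like a 4090 (24GB), or a multi-GPU setup. VRAM capacity, more than raw compute, decides whether the model fits, so even a single high-end card may still need part of a 70B model offloaded to system memory.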

- Compromise on Model Size: Explore smaller models like the Llama3 8B benchmarked above or the Llama2 7B. These are less demanding and might work well on your existing hardware.

- Cloud-Based Solutions: Utilize cloud providers like Google Cloud or Amazon Web Services (AWS). They offer powerful GPUs and dedicated LLM services, allowing you to run massive models without the hardware limitations.

FAQ

1. What is quantization, and why is it important for LLM performance?

Quantization is like a diet for LLMs. It shrinks the model's size by representing its data with fewer bits. This makes the model more efficient and faster, as it requires less memory and processing power. Think of it like a compressed file: you get the essential information in a much smaller package.
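To make the idea concrete, here is a minimal sketch of symmetric 4-bit quantization of one block of weights. This is a deliberately simplified illustration, not the actual Q4_K_M scheme, which is more elaborate; the function names and sample weights are hypothetical.

```python
def quantize_block(values, bits=4):
    """Quantize one block of floats to signed integers sharing one scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0
    # Round each value to the nearest step and clamp to the allowed range.
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the scale and integer codes."""
    return [scale * c for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.0, 0.7, -0.7, 0.01, 0.25]  # made-up values
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print("codes:", codes)
    print(f"scale: {scale:.4f}, worst-case error: {worst:.4f}")
```

Each weight is stored as a small integer plus one shared scale per block, which is where the memory saving comes from; the rounding error is the accuracy cost.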

2. What is the difference between F16 and Q4KM data types?

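Think of data types as the format your model uses to store its weights. F16 stores each weight as a 16-bit floating-point number: high precision, but a large memory footprint. Q4_K_M compresses each weight to roughly 4 bits: much smaller, slightly less precise, and often faster at generating tokens because less data has to be read from memory. The choice depends on your priorities: precision or memory footprint.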

3. Can I run Llama3 70B locally on a less powerful GPU?

It's possible but challenging. You might need to use lower precision settings or run the model in a more memory-efficient mode. It's like trying to squeeze a big puzzle into a small box: you might need to rearrange pieces to make it fit, but it might not be ideal.
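One common memory-efficient mode is partial offloading: keep as many transformer layers as fit in VRAM and run the rest from system RAM (llama.cpp, for example, exposes this as a GPU layer count). The sketch below estimates how many layers might fit. It assumes all layers are the same size, uses a hypothetical 40 GiB quantized model size, and reserves an assumed 1.5 GiB for the KV cache and runtime overhead; Llama3 70B has 80 transformer layers.

```python
def layers_that_fit(model_gib, n_layers, vram_gib, reserve_gib=1.5):
    """Estimate how many equally sized layers fit in VRAM.

    Assumptions: every layer has the same size, and reserve_gib is set
    aside for the KV cache and runtime overhead.
    """
    if model_gib <= 0 or n_layers <= 0:
        raise ValueError("model_gib and n_layers must be positive")
    per_layer = model_gib / n_layers
    usable = max(0.0, vram_gib - reserve_gib)
    return min(n_layers, int(usable // per_layer))


if __name__ == "__main__":
    MODEL_GIB = 40.0  # hypothetical size of a 4-bit quantized 70B model
    N_LAYERS = 80     # transformer layers in Llama3 70B
    fit = layers_that_fit(MODEL_GIB, N_LAYERS, vram_gib=8.0)
    print(f"About {fit} of {N_LAYERS} layers fit on the GPU")
```

With only a small fraction of the layers on the GPU, most of the work falls to the CPU and system memory, so expect generation to be far slower than the 8B figure measured above.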

Keywords:

Llama3, LLM, GPU, NVIDIA, 3070_8GB, token generation, quantization, F16, Q4_K_M, model size, performance, local AI, cloud computing, use cases, workarounds, code generation, creative writing, summarization, translation, hardware upgrade, cloud-based solutions.