8 Surprising Facts About Running Llama3 70B on Apple M1 Max

Have you ever dreamt of running a massive language model like Llama 3 70B on your personal computer? The dream might seem a bit far-fetched, but with the right hardware and clever techniques, you can actually do it!

In this article, we'll delve into the surprisingly capable performance of Apple's M1 Max chip when tasked with handling LLMs. We'll explore some unexpected findings about Llama3 70B's speed, efficiency, and potential for everyday use. Buckle up, because this journey into the world of local AI will be filled with fascinating discoveries.

Performance Analysis: Token Generation Speed Benchmarks: Apple M1 Max and Llama3 70B

Let's start with the heart of the matter: how fast can the M1 Max chip generate tokens with Llama3 70B? To shed light on this, we'll use the available benchmark data for Llama3 70B, covering both quantized and non-quantized configurations.

Understanding Quantization: Think of quantization as a way to slim down large language models by reducing their size without sacrificing too much accuracy. It's like converting a high-resolution image to a lower resolution version; you get a smaller file size, but with slightly less visual detail.
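To make the idea concrete, here is a minimal sketch of block-wise symmetric 4-bit quantization in plain Python. It is an illustration only: the weight values are made up, the function names are hypothetical, and the scheme is deliberately simpler than the formats real runtimes use.

```python
def quantize_block(values, bits=4):
    """Map one block of floats to small signed integers plus a shared scale."""
    qmax = 2 ** (bits - 1) - 1            # 7 for 4-bit symmetric quantization
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0
    # Round each value to the nearest integer step and clamp to the valid range.
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


# Made-up example weights, not taken from any real model.
weights = [0.12, -0.53, 0.98, -0.05, 0.31, -0.88, 0.44, 0.02]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("integer codes:", codes)
print(f"largest round-trip error: {max_error:.4f}")
```

Each weight is now stored as a 4-bit integer plus one shared scale per block, which is where the size savings come from. The round-trip error is the "slightly less visual detail" from the image analogy.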

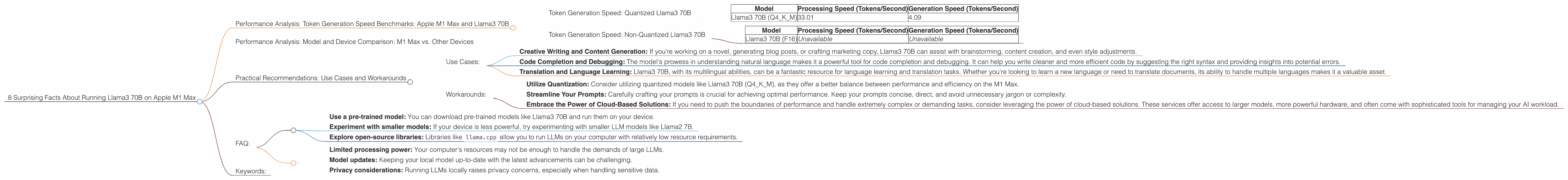

Token Generation Speed: Quantized Llama3 70B

| Model | Prompt Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|
| Llama3 70B (Q4_K_M) | 33.01 | 4.09 |

The Numbers Speak Volumes: As you can see, Llama3 70B (Q4_K_M) processes prompts at 33.01 tokens per second, which is impressive considering the model's size. Generation, however, runs at just 4.09 tokens per second, roughly eight times slower. The gap reflects how generation works: output tokens are produced one at a time, and each one requires a full pass over the model's weights, whereas prompt tokens can be processed in batches.
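To see what these two speeds mean in practice, the sketch below turns them into a rough response-time estimate. It assumes throughput stays constant regardless of prompt length, which is a simplification, and the example workloads are hypothetical.

```python
# Speeds taken from the benchmark table for Llama3 70B on the M1 Max.
PROMPT_TOKENS_PER_SEC = 33.01
GEN_TOKENS_PER_SEC = 4.09


def estimate_seconds(prompt_tokens, output_tokens):
    """Rough wall-clock estimate: prompt processing time plus generation time."""
    prompt_time = prompt_tokens / PROMPT_TOKENS_PER_SEC
    generation_time = output_tokens / GEN_TOKENS_PER_SEC
    return prompt_time + generation_time


# Hypothetical workloads: (prompt tokens, output tokens).
for prompt_tokens, output_tokens in [(50, 200), (500, 200), (2000, 200)]:
    total = estimate_seconds(prompt_tokens, output_tokens)
    print(f"{prompt_tokens:>5} prompt + {output_tokens} output tokens: ~{total:.0f} s")
```

Even with a short prompt, a 200-token answer takes the better part of a minute, so this setup suits patient, offline-style work more than rapid back-and-forth chat.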

Token Generation Speed: Non-Quantized Llama3 70B

| Model | Prompt Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|
| Llama3 70B (F16) | Unavailable | Unavailable |

Unfortunately, the benchmark data doesn't include figures for the non-quantized Llama3 70B (F16) model. The most likely reason is memory: at 16 bits per weight, 70 billion parameters need about 140 GB for the weights alone, while the M1 Max tops out at 64 GB of unified memory.
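A quick back-of-the-envelope calculation shows how much memory the weights need at different precisions. The 4.5 bits-per-weight figure below is an assumed round number for a 4-bit format with per-block metadata, not a measured value, and the estimate ignores the context cache and other runtime overhead.

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Memory needed for the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 70e9            # 70 billion parameters
M1_MAX_MEMORY_GB = 64      # largest unified memory option on the M1 Max

# The 4.5-bit entry is an assumption for illustration; real formats vary.
for label, bits in [("F16", 16), ("4-bit quantized (assumed 4.5 bits)", 4.5)]:
    size_gb = weight_memory_gb(N_PARAMS, bits)
    verdict = "fits" if size_gb < M1_MAX_MEMORY_GB else "does not fit"
    print(f"{label}: ~{size_gb:.0f} GB of weights, {verdict} in {M1_MAX_MEMORY_GB} GB")
```

Under these assumptions the F16 weights are more than twice the size of the largest M1 Max memory configuration, while the 4-bit version leaves some headroom on a 64 GB machine.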

Performance Analysis: Model and Device Comparison: M1 Max vs. Other Devices

While the M1 Max's capabilities with Llama3 70B are impressive, it's natural to wonder how it stacks up against other devices. That comparison is outside the scope of this article, which focuses solely on the M1 Max.

Practical Recommendations: Use Cases and Workarounds

Even though the M1 Max can handle Llama3 70B, it might not be suitable for every task. Let's dive into some specific use cases and workarounds:

Use Cases:

- Creative Writing and Content Generation: If you're working on a novel, generating blog posts, or crafting marketing copy, Llama3 70B can assist with brainstorming, content creation, and even style adjustments.

- Code Completion and Debugging: The model's prowess in understanding natural language makes it a powerful tool for code completion and debugging. It can help you write cleaner and more efficient code by suggesting the right syntax and providing insights into potential errors.

- Translation and Language Learning: Llama3 70B, with its multilingual abilities, can be a fantastic resource for language learning and translation tasks. Whether you're looking to learn a new language or need to translate documents, its ability to handle multiple languages makes it a valuable asset.

Workarounds:

- Use Quantization: Prefer quantized models like Llama3 70B (Q4_K_M), as they offer a better balance between performance and efficiency on the M1 Max.

- Streamline Your Prompts: Every prompt token has to be processed before generation begins, so carefully crafted prompts pay off directly in response time. Keep your prompts concise and direct, and avoid unnecessary jargon or complexity.

- Embrace the Power of Cloud-Based Solutions: If you need to push the boundaries of performance and handle extremely complex or demanding tasks, consider leveraging the power of cloud-based solutions. These services offer access to larger models, more powerful hardware, and often come with sophisticated tools for managing your AI workload.

FAQ:

Q: What is a Large Language Model (LLM)?

A: An LLM is a type of artificial intelligence (AI) designed to understand and generate human-like text. Think of it as a superpowered chatbot with a vast knowledge base and the ability to create coherent and engaging prose.

Q: Can I run Llama3 70B on a regular laptop?

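A: It's possible, but you'll need a very powerful laptop with enough fast memory to hold the quantized model: either a high-end gaming laptop with a dedicated GPU or an Apple Silicon machine with plenty of unified memory, like the M1 Max discussed here.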

Q: How can I get started with LLMs on my computer?

A: There are a few ways to get started:

- Use a pre-trained model: You can download pre-trained models like Llama3 70B and run them on your device.

- Experiment with smaller models: If your device is less powerful, try experimenting with smaller LLM models like Llama2 7B.

- Explore open-source libraries: Libraries like llama.cpp allow you to run LLMs on your computer with relatively low resource requirements.
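As a starting point, here is a minimal sketch using llama-cpp-python, the Python bindings for llama.cpp. It assumes you have installed that package and already downloaded a GGUF model file; the `generate` helper and the model path are placeholders for illustration.

```python
def generate(model_path, prompt, max_tokens=128, n_ctx=2048):
    """Load a GGUF model and return a completion for the prompt."""
    # Imported lazily so this file loads even without llama-cpp-python installed.
    from llama_cpp import Llama

    llm = Llama(
        model_path=model_path,  # path to a GGUF file you have downloaded
        n_ctx=n_ctx,            # context window size in tokens
        n_gpu_layers=-1,        # offload all layers to the GPU (Metal on Apple Silicon)
        verbose=False,
    )
    result = llm(prompt, max_tokens=max_tokens)
    return result["choices"][0]["text"]


# Example call (the path below is a placeholder, not a real file):
# print(generate("models/llama-3-70b.Q4_K_M.gguf", "Explain quantization in one sentence."))
```

Loading a 70B model takes a while and needs most of a 64 GB machine's memory, so it is worth trying the same code with a smaller model first.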

Q: What are the limitations of running LLMs locally?

A: Local LLMs are a great way to experiment and explore AI, but they have limitations:

- Limited processing power: Your computer's resources may not be enough to handle the demands of large LLMs.

- Model updates: Keeping your local model up-to-date with the latest advancements can be challenging.

- Privacy considerations: Running LLMs locally keeps your prompts on your own machine, but you remain responsible for securing sensitive data, including any logs and saved outputs.
