8 Surprising Facts About Running Llama2 7B on Apple M3 Max

Introduction

The world of large language models (LLMs) is exploding, with new models and applications popping up every day. But running these models locally on your own machine can be a challenge, especially if you're not a seasoned hardware guru. Enter the Apple M3 Max, a high-end chip in the Apple M-series lineup, and Llama2 7B, a 7-billion-parameter language model from Meta AI. In this deep dive, we'll explore how the two work together, look at measured token speeds, and see what those numbers mean for running LLMs on your own hardware.

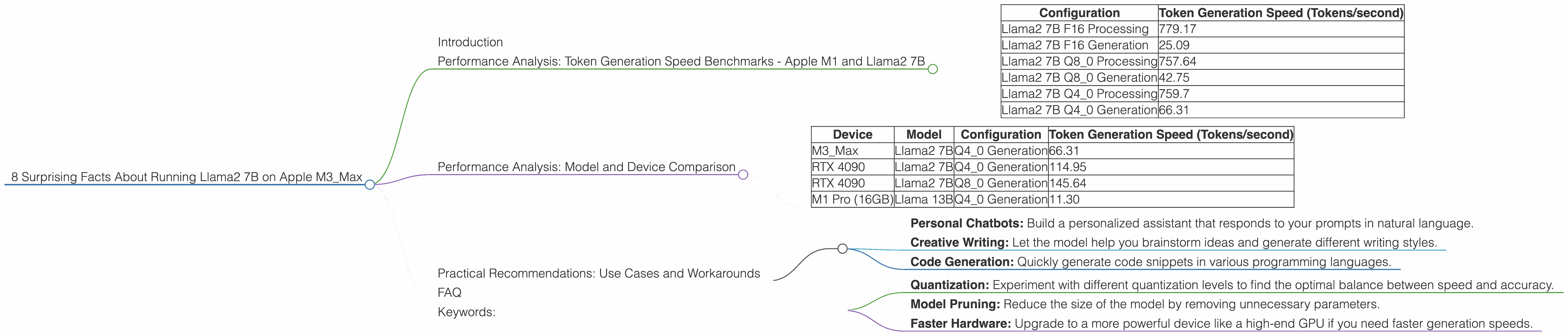

Performance Analysis: Token Generation Speed Benchmarks - Apple M3 Max and Llama2 7B

Let's get down to brass tacks: how fast can the M3 Max generate text with Llama2 7B? To answer this, we first need the concept of tokens. Think of tokens as the building blocks of language: words, word fragments, punctuation marks, and even spaces are broken down into tokens. LLMs read and generate text as sequences of these tokens.

The M3 Max's Performance:

| Configuration | Speed (tokens/second) |
|---|---|
| Llama2 7B F16 Processing | 779.17 |
| Llama2 7B F16 Generation | 25.09 |
| Llama2 7B Q8_0 Processing | 757.64 |
| Llama2 7B Q8_0 Generation | 42.75 |
| Llama2 7B Q4_0 Processing | 759.7 |
| Llama2 7B Q4_0 Generation | 66.31 |

"Processing" vs. "Generation": "Processing" refers to the speed at which the model processes the entire input text sequence. "Generation" is the speed at which the model generates new text output.

Quantization Explained:

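We see different "quantization" levels (F16, Q8_0, Q4_0) in the table. Think of quantization like compressing a photo: it reduces the model's size and memory footprint by storing each weight with fewer bits, at some cost in accuracy. F16 keeps 16-bit floating-point weights, Q8_0 uses roughly 8 bits per weight, and Q4_0 roughly 4. The smaller the number, the higher the compression level (Q4_0 is the most compressed of the three).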
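To make the photo-compression idea concrete, here is a minimal sketch of block-wise symmetric quantization in Python. It is an illustration only, not llama.cpp's actual Q8_0 or Q4_0 storage layout: the block size of 32, the function names, and the random "weights" are all assumptions made for the example.

```python
import random


def quantize_block(weights, bits=4):
    """Quantize a block of floats to signed integers sharing one scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit, 127 for 8-bit
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the range.
    quants = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the stored scale and integers."""
    return [scale * q for q in quants]


def max_error(weights, bits):
    """Largest absolute round-trip error for one block."""
    scale, quants = quantize_block(weights, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(w - r) for w, r in zip(weights, restored))


if __name__ == "__main__":
    random.seed(0)
    # Made-up weights standing in for one block of a real model.
    block = [random.uniform(-1.0, 1.0) for _ in range(32)]
    for bits in (8, 4):
        print(f"{bits}-bit max round-trip error: {max_error(block, bits):.4f}")
```

Each block stores one scale plus small integers instead of full-precision floats. Fewer bits mean coarser steps, so the worst-case rounding error (half a step) grows as the bit width shrinks, which is the accuracy side of the trade-off.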

The Surprising Results:

- Llama2 7B is a Speed Demon: The M3 Max processes text at a blazing speed, achieving over 750 tokens/second in all three configurations (F16, Q8_0, and Q4_0). At that rate, a prompt of about 750 tokens is processed in roughly one second.

- Generation is the Bottleneck: While the M3 Max shines in processing, generation is significantly slower. Even the fastest configuration, Q4_0, generates 66.31 tokens/second, more than ten times slower than its processing speed, and F16 manages only 25.09. Note that quantization speeds up generation considerably while leaving processing speed essentially unchanged.

Analogy: Imagine typing on your keyboard (processing) and having a robot hand (generation) copy your typed letters onto a sheet of paper. Even if you type incredibly fast, the robot hand might take a while to catch up!
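To put the gap between processing and generation in perspective, the following sketch estimates how long each phase of a request would take using the speeds from the M3 Max table. The function name and the example request size (a 512-token prompt and a 256-token reply) are hypothetical, and the estimate assumes both phases run at the constant average speed listed in the table.

```python
# Speeds in tokens/second, copied from the M3 Max table.
SPEEDS = {
    "F16": {"processing": 779.17, "generation": 25.09},
    "Q8_0": {"processing": 757.64, "generation": 42.75},
    "Q4_0": {"processing": 759.7, "generation": 66.31},
}


def estimate_latency(config, prompt_tokens, output_tokens):
    """Return (processing_seconds, generation_seconds) for one request.

    Simplifying assumption: both phases run at the constant average
    speed listed in the table.
    """
    speeds = SPEEDS[config]
    processing_s = prompt_tokens / speeds["processing"]
    generation_s = output_tokens / speeds["generation"]
    return processing_s, generation_s


if __name__ == "__main__":
    # Hypothetical request: a 512-token prompt and a 256-token reply.
    for config in SPEEDS:
        processing_s, generation_s = estimate_latency(config, 512, 256)
        print(f"{config}: prompt {processing_s:.2f} s, reply {generation_s:.2f} s")
```

For any request where the reply is not much shorter than the prompt, the generation phase dominates the total time in every configuration.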

Performance Analysis: Model and Device Comparison

Let's see how the M3 Max stacks up against other devices:

Note: We don't have data for other LLMs on the M3 Max. However, comparing Llama2 7B on the M3 Max with other devices and models still provides useful context.

Token Generation Speed Comparison:

| Device | Model | Configuration | Token Generation Speed (Tokens/second) |
|---|---|---|---|
| M3 Max | Llama2 7B | Q4_0 Generation | 66.31 |
| RTX 4090 | Llama2 7B | Q4_0 Generation | 114.95 |
| RTX 4090 | Llama2 7B | Q8_0 Generation | 145.64 |
| M1 Pro (16GB) | Llama 13B | Q4_0 Generation | 11.30 |

Observations:

- The M3 Max Holds Its Own: While the M3 Max doesn't match the generation speed of a high-end GPU like the RTX 4090 (66.31 vs. 114.95 tokens/second at Q4_0, about 58% of the GPU's speed), it's still impressive considering its lower power consumption and smaller form factor.

- Larger Models, Slower Speeds: Larger models, like Llama 13B, tend to generate more slowly than smaller models like Llama2 7B. Keep in mind, though, that the 13B figure here was measured on an M1 Pro, so the gap reflects both the larger model and the less powerful chip.
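The ratios behind these observations are easy to recompute. This short sketch uses only the generation speeds from the comparison table; the dictionary layout and function name are illustrative choices, not part of any benchmark tool.

```python
# Generation speeds in tokens/second, copied from the comparison table.
GENERATION_SPEED = {
    ("M3 Max", "Llama2 7B", "Q4_0"): 66.31,
    ("RTX 4090", "Llama2 7B", "Q4_0"): 114.95,
    ("RTX 4090", "Llama2 7B", "Q8_0"): 145.64,
    ("M1 Pro (16GB)", "Llama 13B", "Q4_0"): 11.30,
}


def relative_speed(entry, baseline):
    """How many times faster `entry` generates than `baseline`."""
    return GENERATION_SPEED[entry] / GENERATION_SPEED[baseline]


if __name__ == "__main__":
    baseline = ("M3 Max", "Llama2 7B", "Q4_0")
    for entry in GENERATION_SPEED:
        device, model, quant = entry
        print(f"{device} {model} {quant}: {relative_speed(entry, baseline):.2f}x")
```

Ratios across rows with different devices and different models mix two effects at once, so they are best read as rough context rather than a controlled comparison.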

So, what does this all mean?

- The M3 Max offers a compelling balance of performance and portability for running lightweight LLMs like Llama2 7B locally.

- For more demanding tasks involving larger LLMs, a dedicated GPU like the RTX 4090 might be necessary.

Practical Recommendations: Use Cases and Workarounds

Let's put these numbers into action with real-world scenarios.

Potential Use Cases:

- Personal Chatbots: Build a personalized assistant that responds to your prompts in natural language.

- Creative Writing: Let the model help you brainstorm ideas and generate different writing styles.

- Code Generation: Quickly generate code snippets in various programming languages.

Workarounds for Generation Bottlenecks:

- Quantization: Experiment with different quantization levels to find the optimal balance between speed and accuracy.

- Model Pruning: Reduce the size of the model by removing unnecessary parameters.

- Faster Hardware: Upgrade to a more powerful device like a high-end GPU if you need faster generation speeds.
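For the quantization workaround, one simple way to frame the choice is to start from the generation speed you need and pick the least compressed configuration that reaches it. The sketch below does this with the M3 Max numbers from the earlier table. The helper name is hypothetical, and it assumes that less compression means better accuracy, which you should verify on your own prompts.

```python
# Generation speeds (tokens/second) from the M3 Max table, ordered from
# least to most compressed.
M3_MAX_GENERATION_SPEED = [
    ("F16", 25.09),
    ("Q8_0", 42.75),
    ("Q4_0", 66.31),
]


def least_compressed_config(min_tokens_per_second):
    """Return the least compressed config that meets the speed target,
    or None if none of the measured configs is fast enough."""
    for name, speed in M3_MAX_GENERATION_SPEED:
        if speed >= min_tokens_per_second:
            return name
    return None


if __name__ == "__main__":
    # Hypothetical speed targets in tokens/second.
    for target in (20, 40, 60, 100):
        print(target, "->", least_compressed_config(target))
```

A result of `None` corresponds to the third workaround: none of the measured configurations is fast enough, so faster hardware would be needed.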

How to Get Started:

You can use tools like llama.cpp to run Llama2 7B on your Apple M3 Max. Check out the llama.cpp GitHub repository for detailed build instructions and support.
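Once llama.cpp is built, its `llama-bench` tool can measure processing and generation speed on your own machine. Below is a minimal Python wrapper around it, offered as a sketch: the binary location (`./llama-bench`), the model file name, and the helper names are assumptions that depend on how you built llama.cpp and where you stored the model.

```python
import subprocess


def build_bench_command(model_path, prompt_tokens=512, gen_tokens=128,
                        binary="./llama-bench"):
    """Build an argument list for llama.cpp's llama-bench tool.

    -m selects the model file, -p sets the prompt length for the
    processing test, and -n sets the token count for the generation test.
    The default binary path is an assumption; adjust it to your build.
    """
    return [binary, "-m", str(model_path),
            "-p", str(prompt_tokens), "-n", str(gen_tokens)]


def run_bench(model_path):
    """Run the benchmark and return its text output.

    Requires a built llama.cpp and a model file on disk.
    """
    result = subprocess.run(build_bench_command(model_path),
                            capture_output=True, text=True, check=True)
    return result.stdout


# Example with a hypothetical model path (not executed here):
# print(run_bench("models/llama-2-7b.Q4_0.gguf"))
```

Running the same benchmark on F16, Q8_0, and Q4_0 files lets you compare your own results with the figures in this article.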

FAQ

Q: What is an LLM?

A: An LLM is a large language model, a type of artificial intelligence that excels at understanding and generating human-like text.

Q: What is quantization?

A: Quantization is a technique used to reduce the size and memory footprint of a model. Think of it like compressing a photo to make it smaller.

Q: What are the benefits of running LLMs locally?

A: Local execution offers privacy, reduced latency, and the ability to customize your models without relying on external APIs.

Keywords:

Llama2 7B, Apple M3 Max, Token Generation Speed, Performance, Quantization, LLM, Local, Device Comparison, GPU, RTX 4090, M1 Pro, Use Cases, Workarounds, Chatbot, Creative Writing, Code Generation, llama.cpp