8 Surprising Facts About Running Llama2 7B on Apple M1 Ultra

Introduction

The world of Large Language Models (LLMs) is exploding with new capabilities, but performance is often a major hurdle. Running these powerful models locally can be a real challenge, especially on everyday devices. But what if we told you that you could achieve remarkable results with the right hardware and clever optimization techniques?

In this in-depth article, we'll delve into the performance of Llama2 7B on the Apple M1 Ultra, exploring its unique strengths and limitations. We'll reveal eight surprising facts that may change your perception of local LLM deployment, empowering you to harness the power of AI on your own machine.

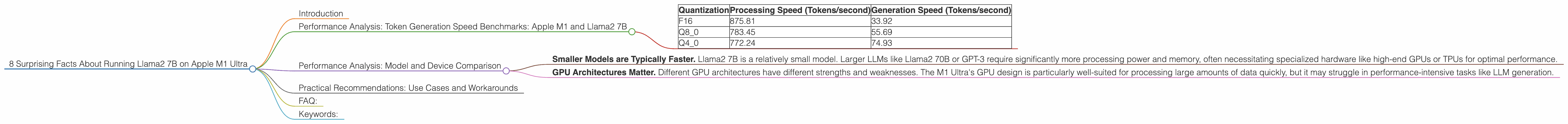

Performance Analysis: Token Generation Speed Benchmarks: Apple M1 Ultra and Llama2 7B

One of the key performance metrics for LLMs is "tokens per second" (TPS). This measures how quickly the model can process and generate text. Let's dive into the specific numbers we uncovered for Llama2 7B on the M1 Ultra:

| Quantization | Processing Speed (Tokens/second) | Generation Speed (Tokens/second) |
|---|---|---|
| F16 | 875.81 | 33.92 |
| Q8_0 | 783.45 | 55.69 |
| Q4_0 | 772.24 | 74.93 |

Fact #1: The M1 Ultra Can Handle Llama2 7B! The Apple M1 Ultra is a powerful chip with 48 GPU cores and high-bandwidth memory (800 GB/s), making it a surprisingly capable platform for running LLMs.

Fact #2: Quantization Matters. The table above shows that different quantization levels significantly impact performance. "F16" is the highest precision, offering the best accuracy but consuming the most memory. "Q8_0" and "Q4_0" reduce precision by storing weights in 8-bit and 4-bit representations, respectively. In our numbers, this raises generation speed from 33.92 to 55.69 and 74.93 tokens per second, while processing speed drops slightly, from 875.81 to 783.45 and 772.24. This trade-off between accuracy and speed is something you'll encounter when running local LLMs.
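To make the memory side of this trade-off concrete, here is a rough sketch of how much space the weights take in each format. It rests on two assumptions that are not part of our benchmark: a parameter count of about 6.74 billion for Llama2 7B, and that the quantized formats follow the GGML/llama.cpp block layout, where every block of 32 weights also stores one 16-bit scale. Treat the results as estimates, not measured file sizes.

```python
# Rough weight-memory estimate per quantization format.
# Assumptions (not from the benchmark): ~6.74e9 parameters for Llama2 7B,
# and block layouts where each block of 32 weights carries one 16-bit scale.
N_PARAMS = 6.74e9

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5 bits per weight including the scale
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5 bits per weight including the scale
}


def weight_gigabytes(fmt: str, n_params: float = N_PARAMS) -> float:
    """Approximate size of the weights in GB (10^9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


if __name__ == "__main__":
    for fmt in BITS_PER_WEIGHT:
        print(f"{fmt}: ~{weight_gigabytes(fmt):.1f} GB")
```

Under these assumptions the weights shrink from roughly 13.5 GB at F16 to about 7.2 GB at Q8_0 and 3.8 GB at Q4_0.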

Fact #3: Processing vs. Generation Speed. The M1 Ultra excels at processing input text. The "Processing" column shows remarkably high token speeds, exceeding 700 tokens per second in all quantizations. However, the "Generation" speeds, representing the rate at which the model produces output, are significantly lower.
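The size of that gap is easy to quantify from the table. The short sketch below uses only the benchmark figures above; the function names are our own, chosen for illustration.

```python
# Benchmark figures copied from the table above (tokens/second).
BENCH = {
    "F16": {"processing": 875.81, "generation": 33.92},
    "Q8_0": {"processing": 783.45, "generation": 55.69},
    "Q4_0": {"processing": 772.24, "generation": 74.93},
}


def processing_to_generation_ratio(fmt: str) -> float:
    """How many times faster prompt processing is than generation."""
    row = BENCH[fmt]
    return row["processing"] / row["generation"]


def ms_per_generated_token(fmt: str) -> float:
    """Average time to produce one output token, in milliseconds."""
    return 1000.0 / BENCH[fmt]["generation"]


if __name__ == "__main__":
    for fmt in BENCH:
        ratio = processing_to_generation_ratio(fmt)
        latency = ms_per_generated_token(fmt)
        print(f"{fmt}: processing is {ratio:.1f}x generation, "
              f"{latency:.1f} ms per generated token")
```

The ratio falls from about 26x at F16 to about 10x at Q4_0, so quantization narrows the gap but does not close it.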

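Fact #4: The Bottleneck is in Generation. The gap between processing and generation speed highlights a key limitation: generation is the bottleneck. Prompt tokens can be processed in parallel, but output tokens are produced one at a time, and each step has to read the model's weights from memory. That makes generation likely limited by memory bandwidth rather than raw compute, which is consistent with the smaller Q8_0 and Q4_0 formats generating faster in the table above.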
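One way to sanity-check a memory-bandwidth explanation for this bottleneck is a back-of-envelope ceiling: if every generated token requires streaming all the weights from memory once, generation cannot exceed bandwidth divided by model size. The sketch below assumes exactly that simplified model. The 800 GB/s figure is the peak bandwidth quoted above, and the weight sizes are our own rough estimates, not measured values.

```python
# Back-of-envelope ceiling on generation speed, assuming each generated token
# streams all model weights from memory exactly once (a simplification).
BANDWIDTH_GB_S = 800.0  # peak memory bandwidth quoted in the article

# Assumed approximate weight sizes in GB; estimates, not measurements.
WEIGHT_GB = {"F16": 13.5, "Q8_0": 7.2, "Q4_0": 3.8}

# Measured generation speeds from the benchmark table (tokens/second).
MEASURED_TPS = {"F16": 33.92, "Q8_0": 55.69, "Q4_0": 74.93}


def bandwidth_ceiling(fmt: str) -> float:
    """Upper bound on tokens/second under the streaming assumption."""
    return BANDWIDTH_GB_S / WEIGHT_GB[fmt]


def fraction_of_ceiling(fmt: str) -> float:
    """Measured generation speed as a fraction of the estimated ceiling."""
    return MEASURED_TPS[fmt] / bandwidth_ceiling(fmt)


if __name__ == "__main__":
    for fmt in WEIGHT_GB:
        print(f"{fmt}: ceiling ~{bandwidth_ceiling(fmt):.0f} tok/s, "
              f"measured {MEASURED_TPS[fmt]} tok/s "
              f"({fraction_of_ceiling(fmt):.0%} of ceiling)")
```

With these estimates, every measured speed sits below its ceiling, and the ceilings rise as the model shrinks, which matches the direction of the measured results. The model ignores activations, caches, and kernel overhead, so it is a plausibility check and not proof of the cause.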

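Fact #5: Q4_0 Offers a Sweet Spot. Q4_0 provides the fastest generation speed at 74.93 tokens per second, roughly 2.2 times that of F16, while its processing speed is only about 12% lower. The price is lower numerical precision than Q8_0 or F16, which can cost some accuracy. For most local use, Q4_0 strikes a nice balance between speed, memory use, and model quality.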

Fact #6: Generating Text on a Local M1 Ultra is Still Faster Than You Think. Even with the generation bottleneck, the M1 Ultra's speed still surpasses the performance achievable on some other consumer devices. Think of it like this: the difference between a high-performance sports car and a slightly slower but still capable family vehicle.

Performance Analysis: Model and Device Comparison

While we're focused on Llama2 7B on the M1 Ultra, it's important to understand how this performance stacks up against other LLMs and devices. Unfortunately, we don't have data for other devices or models in this specific context.

However, we can draw some conclusions based on broader trends in LLM performance:

- Smaller Models are Typically Faster. Llama2 7B is a relatively small model. Larger LLMs like Llama2 70B or GPT-3 require significantly more processing power and memory, often necessitating specialized hardware like high-end GPUs or TPUs for optimal performance.

- GPU Architectures Matter. Different GPU architectures have different strengths and weaknesses. The M1 Ultra's GPU design is well-suited to processing large prompts quickly, but token-by-token generation is considerably slower, as the benchmarks above show.

Practical Recommendations: Use Cases and Workarounds

The insights we've gathered can help you make strategic decisions for running Llama2 7B on your M1 Ultra. Here are some practical recommendations:

Use Case #1: Text Processing and Summarization. The M1 Ultra's processing speed is a major advantage in text-heavy tasks. Using Llama2 7B for tasks such as document summarization, question answering, and sentiment analysis can be highly effective.
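To get a feel for what this means in practice, you can estimate end-to-end time for a task from the two benchmark columns. The sketch below assumes both speeds stay constant regardless of prompt length, which is a simplification, and the 2,000-token document and 200-token summary are hypothetical sizes chosen for illustration.

```python
def estimate_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Estimated total time: read the prompt, then generate the output.

    Assumes constant throughput for both phases (a simplification).
    """
    return prompt_tokens / processing_tps + output_tokens / generation_tps


if __name__ == "__main__":
    # Q4_0 speeds from the benchmark table; task sizes are hypothetical.
    total = estimate_seconds(
        prompt_tokens=2000, output_tokens=200,
        processing_tps=772.24, generation_tps=74.93,
    )
    print(f"Estimated time for a 2000-token document "
          f"and 200-token summary: {total:.1f} s")
```

In this example, reading the 2,000-token document and writing the 200-token summary each take about the same time, which is why tasks with long inputs and short outputs suit this hardware well.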

Use Case #2: Experimenting with LLMs. The M1 Ultra provides a convenient and reasonably fast platform for exploring different LLMs and experimenting with different quantization levels.

Workaround #1: Leverage Cloud Resources. For tasks requiring high-speed generation or significantly larger models, consider leveraging cloud services. Platforms like Google Colab, Amazon SageMaker, and Microsoft Azure offer a range of compute options that can handle even the most demanding LLMs.

Workaround #2: Fine-tune Your Model. Fine-tuning a smaller LLM for a specific task can often improve its quality on that task, letting you use a smaller and faster model than you would otherwise need. This can be particularly helpful for tasks where generation speed is critical.

FAQ:

Q: What exactly is quantization, and why is it important?

A: Quantization involves reducing the precision of numbers used to represent the model's weights. Think of it like taking a high-resolution image and decreasing its pixel count. While this reduces image quality, it also makes the file smaller and faster to load. Similar to image compression, LLM quantization trades accuracy (image quality) for speed and memory savings.
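To illustrate the idea, here is a simplified sketch of block-wise quantization in plain Python. It is a toy version written for this explanation, not the exact storage layout of Q8_0 or Q4_0: each block of weights is reduced to small integers plus one shared scale, and the original values are only approximately recovered.

```python
def quantize_block(values, bits=4):
    """Symmetric quantization: map floats to small signed ints plus one scale."""
    qmax = 2 ** (bits - 1) - 1              # 7 for 4-bit, 127 for 8-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the stored ints and scale."""
    return [q * scale for q in quants]


def max_error(values, bits):
    """Largest absolute difference after a quantize/dequantize round trip."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


if __name__ == "__main__":
    # Hypothetical weights, chosen only for illustration.
    weights = [0.12, -0.53, 0.9, -0.04, 0.33, -0.77, 0.61, 0.05]
    for bits in (8, 4):
        print(f"{bits}-bit max round-trip error: {max_error(weights, bits):.4f}")
```

Fewer bits mean a coarser grid of representable values, so the 4-bit round trip loses more detail than the 8-bit one, in exchange for a smaller model.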

Q: How does the Apple M1 Ultra's architecture impact LLM performance?

A: Some aspects of Apple's GPU design, including memory bandwidth and compute capabilities, are well-suited for LLMs. However, other factors, such as the structure of the GPU cores, may impact performance.

Q: What are the limitations of running LLMs locally?

A: Local machines often lack the processing power and memory capacity to handle large LLMs efficiently. The generation process can be slow, and model size can be a major constraint.

Q: What are some of the future trends in LLM performance?

A: Future improvements in hardware, software, and model optimization techniques will likely lead to significant performance gains for local LLM deployment. We can expect faster processing speeds, reduced memory requirements, and possibly even lower latency for text generation.

Keywords:

Llama2 7B, Apple M1 Ultra, LLM, local model, performance, token generation speed, quantization, F16, Q8_0, Q4_0, processing speed, generation speed, bottleneck, use cases, workarounds, cloud computing, fine-tuning, FAQ, keywords, future trends.