8 Noise Reduction Strategies for Your NVIDIA 4090 24GB Setup

Introduction

You've finally joined the club: you own a shiny new NVIDIA 4090 24GB graphics card, a beast of a card practically screaming "Feed me LLMs!" You're ready to run your favorite large language models locally, unleashing the power of AI directly on your desktop. But wait, there's a catch! Just as a high-performance engine needs tuning, your 4090 24GB needs some optimization to deliver its best. LLMs, with their massive weight files and heavy computation, can be noisy neighbors, causing performance bottlenecks and spoiling your experience. This article covers practical strategies to reduce that noise (bottlenecks) and maximize LLM performance on your NVIDIA 4090 24GB setup.

Understanding the Noise: Why Your LLM Setup Needs Fine-Tuning

Imagine your LLM as a symphony orchestra. Each instrument represents a specific operation: the string section handles token generation, the brass section tackles processing, and the percussion section manages memory. To create harmonious music, the instruments need to play in sync. Any hiccups, delays, or conflicting rhythms can create dissonance, hindering the symphony's overall performance.

Similarly, in your LLM setup, bottlenecks can arise due to factors like:

- Limited Memory: LLMs are memory hogs, requiring significant amounts of video memory (VRAM) to store their parameters. If a model does not fit in the 24 GB on your 4090, part of it has to run from slower system memory and your LLM will be sluggish, like a musician trying to play with a broken string.

- Slow Token Generation: Token generation is the step in which the model produces its answer one token at a time, so it determines how quickly a response appears. A slow generation rate is like a violinist who stumbles over their notes, disrupting the flow of the music.

- Inefficient Processing: Prompt processing is the step in which the model reads your input tokens before it starts to answer, a crucial step for understanding and responding to your requests. Inefficient processing is like a conductor with a shaky baton, throwing the entire orchestra off beat.

8 Noise Reduction Strategies for Your NVIDIA 4090 24GB

Let's dive into the 8 solutions to address LLM performance bottlenecks, transforming your setup from a cacophony to a smooth-playing symphony.

1. Taming the Beast: Quantization and Memory Optimization

Quantization is like using a more compact notation to write down the same melody. It stores each of the model's weights with fewer bits, which shrinks the model without sacrificing much accuracy and lets your 4090 24GB handle larger models. Think of it as using a smaller sheet of music for a complex symphony, making it easier to perform.

Example: Using Q4_K_M quantization (a roughly 4-bit format from llama.cpp), we can compress a model to a fraction of its 16-bit size, so it needs less memory for storage and processing. This makes it possible to run larger models on your 4090 24GB.

How to utilize quantization:

- Use llama.cpp: The llama.cpp project offers efficient quantization support for many LLMs. It lets you squeeze more performance out of your 4090 24GB.

- GPU Benchmarks on LLM Inference: Check out the GPU Benchmarks on LLM Inference repository for detailed comparisons of different quantization methods and their impact on model performance.
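A back-of-the-envelope estimate shows why quantization matters on a 24 GB card. The sketch below is a rough calculation, not a measurement: the bits-per-weight value for Q4_K_M is an approximation assumed here (real files mix quantization types across tensors), the parameter counts are rounded, and `overhead_gb` is a hypothetical allowance for the context cache and runtime buffers.

```python
# Approximate bits stored per weight. The Q4_K_M value is an assumed
# average; actual files vary from model to model.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_size_gb(n_params: float, quant: str) -> float:
    """Approximate size of the weights in gigabytes (1e9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


def fits_in_vram(n_params: float, quant: str,
                 vram_gb: float = 24.0, overhead_gb: float = 2.0) -> bool:
    """True if weights plus an assumed overhead fit in the given VRAM."""
    return weight_size_gb(n_params, quant) + overhead_gb <= vram_gb


if __name__ == "__main__":
    for label, n_params in [("8B", 8e9), ("70B", 70e9)]:
        for quant in ("F16", "Q4_K_M"):
            size = weight_size_gb(n_params, quant)
            verdict = "fits" if fits_in_vram(n_params, quant) else "does not fit"
            print(f"{label} {quant}: ~{size:.1f} GB, {verdict} in 24 GB")
```

Under these assumptions an 8B model fits in either format, while a 70B model exceeds a single 24 GB card even at Q4_K_M, which would be consistent with the missing 70B results in the comparison table later in this article.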

2. Harmony in Speed: Choosing the Right Inference Engine

Think of a symphony orchestra choosing the right conductor to lead them. The conductor sets the pace and guides the musicians to deliver a powerful performance. Similarly, the choice of inference engine can significantly impact your LLM's speed and efficiency.

Example: NVIDIA Triton Inference Server is one option for LLM inference; it is a model-serving platform designed for high-performance model execution. It's like having a seasoned conductor who knows how to maximize the potential of each musician.

How to choose the right inference engine:

- Benchmark your models: Test your models with different inference engines to identify the one that provides the best performance on your 4090 24GB.

- Consider custom implementations: Explore custom inference engines that are optimized for your specific LLM and hardware setup.
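Benchmarking engines against each other can be as simple as timing how many tokens each one streams back for the same prompt. The harness below is a minimal sketch: it assumes each engine can be wrapped in a function that yields tokens one at a time, and `fake_engine` is a hypothetical stand-in so the example runs without a GPU.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[str], Iterable[str]],
                      prompt: str, runs: int = 3) -> float:
    """Return the best observed tokens/s over several runs."""
    best = 0.0
    for _ in range(runs):
        start = time.perf_counter()
        n_tokens = sum(1 for _ in generate(prompt))  # drain the stream
        elapsed = time.perf_counter() - start
        if elapsed > 0:
            best = max(best, n_tokens / elapsed)
    return best


def fake_engine(prompt: str) -> Iterable[str]:
    """Hypothetical engine: echoes the prompt words with a small delay."""
    for word in prompt.split():
        time.sleep(0.001)
        yield word


if __name__ == "__main__":
    rate = tokens_per_second(fake_engine, "the quick brown fox jumps")
    print(f"fake_engine: {rate:.0f} tokens/s")
```

Use the same prompt, model, and quantization for every engine you compare, otherwise the numbers measure the setup rather than the engine.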

3. The Art of Tuning: Fine-Tuning Parameters for Peak Resonance

Have you ever seen a violinist adjust the strings on their instrument? They fine-tune the tension to achieve the perfect pitch. Similarly, tuning your LLM's runtime parameters (not to be confused with fine-tuning its weights) can unlock its full potential.

Example: Adjusting batch size can have a profound impact on performance. A larger batch size can raise throughput, but it also puts more pressure on your 4090 24GB's memory. Finding the sweet spot is crucial.

Tips for fine-tuning:

- Experiment: Play around with different parameter values, like batch size, sequence length, and model size, to find the optimal settings for your 4090 24GB and specific LLM.

- Monitor: Use performance monitoring tools to identify potential bottlenecks and make adjustments accordingly.
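The experiment-and-monitor loop can be automated with a small sweep. This is a sketch under stated assumptions: `measure` is a hypothetical callback that runs your workload at a given batch size and returns tokens per second, and it signals an out-of-memory condition by raising `MemoryError` (a real backend raises its own error type, which you would catch instead). `toy_measure` only imitates that behaviour.

```python
from typing import Callable, Iterable, Optional, Tuple


def best_batch_size(measure: Callable[[int], float],
                    candidates: Iterable[int]) -> Optional[Tuple[int, float]]:
    """Try batch sizes in ascending order and keep the fastest that fits."""
    best = None
    for batch_size in sorted(candidates):
        try:
            rate = measure(batch_size)
        except MemoryError:
            break  # larger batches need even more memory, so stop here
        if best is None or rate > best[1]:
            best = (batch_size, rate)
    return best


def toy_measure(batch_size: int) -> float:
    """Hypothetical workload: diminishing returns, out of memory above 64."""
    if batch_size > 64:
        raise MemoryError("batch does not fit in VRAM")
    return 1000 * batch_size / (batch_size + 16)


if __name__ == "__main__":
    print(best_batch_size(toy_measure, [1, 8, 64, 256]))
```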

4. Ensemble Performance: Leveraging Multiple GPUs for Extended Power

Imagine a symphony orchestra with each musician playing a different instrument, but all coming together to create a unified sound. Similarly, you can pair your 4090 24GB with additional GPUs to add both memory and processing power.

Example: With a multi-GPU setup, you distribute the LLM's workload across several GPUs, which can speed up training and inference and makes room for models that exceed a single card's memory. This is like having an orchestra of GPUs playing in unison, creating a powerful symphony.

How to leverage multiple GPUs:

- Explore frameworks: Frameworks like PyTorch and TensorFlow provide support for multi-GPU training.
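One common way to spread a model over several cards for inference is to give each GPU a contiguous block of layers, sized in proportion to its memory. The function below only illustrates that arithmetic; it is not how any particular framework decides placement, and the layer count and VRAM sizes in the usage lines are example values.

```python
from typing import List


def split_layers(n_layers: int, vram_gb: List[float]) -> List[int]:
    """Assign layer counts to GPUs in proportion to their VRAM."""
    total = sum(vram_gb)
    counts = [int(n_layers * v / total) for v in vram_gb]
    # Rounding down can leave a few layers unassigned; give them to the
    # GPUs with the most memory first.
    remainder = n_layers - sum(counts)
    by_memory = sorted(range(len(vram_gb)), key=lambda i: vram_gb[i], reverse=True)
    for i in by_memory[:remainder]:
        counts[i] += 1
    return counts


if __name__ == "__main__":
    print(split_layers(80, [24, 24]))  # two identical 24 GB cards
    print(split_layers(80, [24, 12]))  # a 24 GB card plus a 12 GB card
```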

5. Pre-Processed Harmony: Optimizing Data for Efficient Use

Just like musicians practice their parts before a performance, you can improve your LLM's performance by pre-processing your data.

Example: Tokenization involves breaking down text into smaller units called tokens, which LLMs use for processing. You can optimize tokenization by choosing appropriate methods and vocabulary sizes.

Tips for data optimization:

- Data cleaning: Remove unnecessary characters or symbols, improving the efficiency of your LLM.

- Data normalization: Standardize your data to a consistent format for better processing.
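Cleaning and normalization can be done with the Python standard library before text ever reaches the tokenizer. The rules below are one possible set, chosen for illustration: Unicode NFKC normalization, removal of control characters, and collapsing of repeated whitespace. Whether each rule suits your data is something to check, since normalization also rewrites ligatures and other compatibility characters.

```python
import re
import unicodedata

# ASCII control characters except tab, newline, and carriage return.
_CONTROL_CHARS = re.compile(r"[\x00-\x08\x0b\x0c\x0e-\x1f\x7f]")
_SPACES = re.compile(r"[ \t]+")
_BLANK_LINES = re.compile(r"\n{3,}")


def clean_prompt(text: str) -> str:
    """Normalize Unicode, drop control characters, collapse whitespace."""
    text = unicodedata.normalize("NFKC", text)   # consistent character forms
    text = _CONTROL_CHARS.sub("", text)          # remove stray control bytes
    text = _SPACES.sub(" ", text)                # runs of spaces/tabs -> one space
    text = _BLANK_LINES.sub("\n\n", text)        # at most one blank line
    return text.strip()


if __name__ == "__main__":
    raw = "  Hello\u00a0\u00a0world\x00!\n\n\n\nBye  "
    print(repr(clean_prompt(raw)))
```

Fewer redundant characters generally means fewer tokens for the model to process, though the saving depends on the tokenizer.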

6. Memory Management: Ensuring Your LLM Doesn't Overplay Its Hand

Remember, memory is like a stage for a symphony orchestra; a cramped stage limits the size of the orchestra. Efficient memory management is crucial to ensure your LLM has enough space to perform.

Example: Caching is a valuable technique that allows your LLM to store frequently accessed data in memory, reducing the need to repeatedly fetch it from storage. This is like having a pianist who keeps their sheet music close at hand for easy access.

Tips for memory management:

- Utilize caching: Implement caching strategies for frequently used data, like frequently accessed tokens or model parameters.

- Optimize data structures: Choose data structures that minimize memory consumption while maintaining efficiency.
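The article speaks of caching in general terms; in LLM inference the cache that usually dominates memory is the key/value (KV) cache, which stores attention keys and values for every token in the context. The estimate below is a sketch of the standard size formula. The model shape in the usage lines (32 layers, 8 KV heads, head dimension 128) is assumed to match Llama 3 8B and should be checked against the configuration of the model you actually run.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, batch_size: int = 1,
                   bytes_per_value: int = 2) -> int:
    """Bytes needed to cache keys and values for a full context.

    The leading 2 accounts for one key tensor plus one value tensor per
    layer; bytes_per_value=2 corresponds to 16-bit cache entries.
    """
    return (2 * n_layers * n_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)


if __name__ == "__main__":
    # Assumed Llama 3 8B shape with an 8192-token context.
    size = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, seq_len=8192)
    print(f"KV cache: {size / 2**30:.2f} GiB")
```

Because the result scales linearly with both `seq_len` and `batch_size`, halving either one halves the cache, which is often the quickest way to make a model fit.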

7. The Power of Profiling: Identifying and Addressing Bottlenecks

Just as a conductor observes the performance of individual musicians to identify areas for improvement, profiling tools help you identify bottlenecks in your LLM setup.

Example: NVIDIA Nsight Systems is a powerful profiling tool that allows you to identify memory bottlenecks, slow computations, and inefficient data transfers. This information can be used to target specific areas for optimization.

How to use profiling tools:

- Identify bottlenecks: Use profiling tools to pinpoint performance bottlenecks within your LLM pipeline.

- Optimize accordingly: Apply the appropriate optimization techniques to address the identified bottlenecks.
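Before reaching for a full profiler, a coarse wall-clock breakdown of the pipeline often shows where to look first. The `StageTimer` class below is a hypothetical helper written for this article, not part of any profiling tool, and the stage names are placeholders. Note that GPU work is launched asynchronously, so timings around GPU calls are only meaningful if the framework waits for the device to finish inside the timed block.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class StageTimer:
    """Accumulates wall-clock time per named pipeline stage."""

    def __init__(self) -> None:
        self.totals = defaultdict(float)

    @contextmanager
    def stage(self, name: str):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record the time even if the stage raised an exception.
            self.totals[name] += time.perf_counter() - start

    def slowest(self) -> str:
        """Name of the stage with the largest accumulated time."""
        return max(self.totals, key=self.totals.get)


if __name__ == "__main__":
    timer = StageTimer()
    with timer.stage("tokenize"):
        time.sleep(0.001)   # placeholder for real work
    with timer.stage("generate"):
        time.sleep(0.01)    # placeholder for real work
    for name, seconds in timer.totals.items():
        print(f"{name}: {seconds * 1000:.1f} ms")
    print("slowest stage:", timer.slowest())
```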

8. The Importance of Regular Maintenance: Keeping Your Setup in Top Condition

Regular maintenance is essential for maintaining the performance of your LLM setup.

Example: It's recommended to regularly update drivers for both your GPU and operating system, ensuring compatibility and maximizing performance.

Tips for regular maintenance:

- Driver updates: Keep your GPU drivers and operating system updated to leverage the latest performance enhancements.

- Clean up: Remove unused files and applications to free up disk space and improve overall system performance.
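A quick health check can confirm which driver is installed and how much VRAM is already in use before you load a model. The sketch below shells out to `nvidia-smi` with its CSV query options and parses the result; it assumes `nvidia-smi` is on the PATH, and the sample line in the comment is made-up illustrative output.

```python
import subprocess
from typing import Dict, List

QUERY_FIELDS = "driver_version,memory.used,memory.total"


def parse_gpu_status(csv_line: str) -> Dict[str, object]:
    """Parse one line such as '550.54.14, 1234, 24564' (values in MiB)."""
    driver, used, total = [field.strip() for field in csv_line.split(",")]
    return {"driver": driver, "used_mib": int(used), "total_mib": int(total)}


def read_gpu_status() -> List[Dict[str, object]]:
    """Query every visible GPU through nvidia-smi (one dict per GPU)."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY_FIELDS}",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return [parse_gpu_status(line)
            for line in result.stdout.splitlines() if line.strip()]


if __name__ == "__main__":
    for gpu in read_gpu_status():
        free = gpu["total_mib"] - gpu["used_mib"]
        print(f"driver {gpu['driver']}: {free} MiB free of {gpu['total_mib']} MiB")
```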

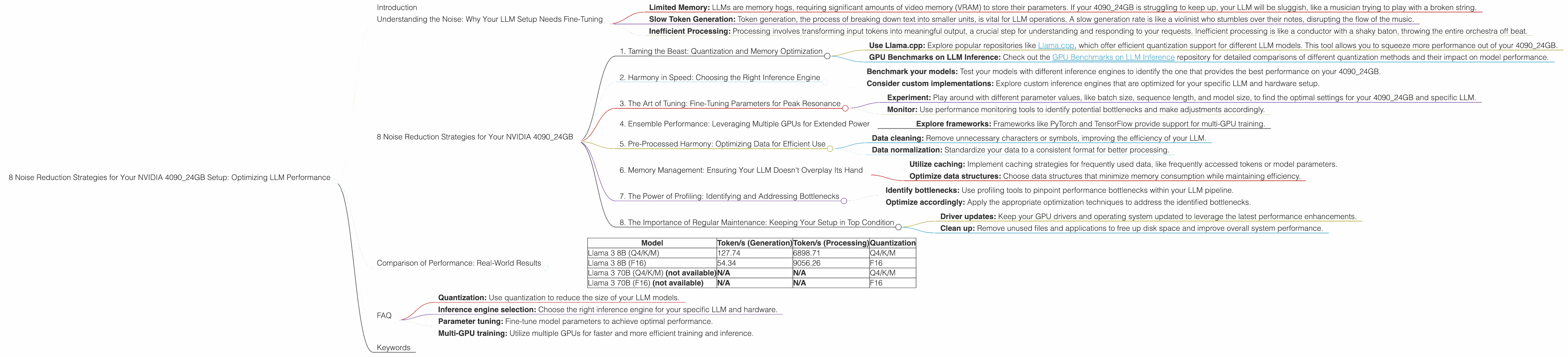

Comparison of Performance: Real-World Results

Below is a comparison of Llama 3 models on a 4090 24GB setup. Generation and processing speeds are given in tokens per second; no results are available for the 70B variants.

| Model | Tokens/s (Generation) | Tokens/s (Processing) | Quantization |
|---|---|---|---|
| Llama 3 8B (Q4_K_M) | 127.74 | 6898.71 | Q4_K_M |
| Llama 3 8B (F16) | 54.34 | 9056.26 | F16 |
| Llama 3 70B (Q4_K_M) (not available) | N/A | N/A | Q4_K_M |
| Llama 3 70B (F16) (not available) | N/A | N/A | F16 |

Disclaimer: Actual performance numbers will vary with your hardware configuration and the model you are using.
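To put the two Llama 3 8B rows in perspective, the short script below computes the ratios between them and the time a response would take at each generation speed. It uses only the figures from the table; the 500-token response length is an arbitrary example.

```python
# Tokens per second for Llama 3 8B, copied from the table above.
RESULTS = {
    "Q4_K_M": {"generation": 127.74, "processing": 6898.71},
    "F16": {"generation": 54.34, "processing": 9056.26},
}


def q4_to_f16_ratio(metric: str) -> float:
    """How many times faster Q4_K_M is than F16 for the given metric."""
    return RESULTS["Q4_K_M"][metric] / RESULTS["F16"][metric]


def seconds_to_generate(n_tokens: int, quant: str) -> float:
    """Time to generate n_tokens at the measured generation speed."""
    return n_tokens / RESULTS[quant]["generation"]


if __name__ == "__main__":
    print(f"generation ratio: {q4_to_f16_ratio('generation'):.2f}x")
    print(f"processing ratio: {q4_to_f16_ratio('processing'):.2f}x")
    for quant in RESULTS:
        print(f"500 tokens at {quant}: {seconds_to_generate(500, quant):.1f} s")
```

In these results Q4_K_M generates tokens a little over twice as fast as F16, while F16 is ahead on prompt processing, so the better choice depends on whether your workload is dominated by long prompts or long answers.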

FAQ

Q: What are the most common bottlenecks for LLMs on the NVIDIA 4090 24GB?

A: The most common bottlenecks are limited memory, slow token generation, and inefficient processing. Using quantization, optimizing data pre-processing, and fine-tuning parameters can help mitigate these issues.

Q: How can I improve the performance of my LLM setup?

A: Explore various optimization techniques, such as:

- Quantization: Use quantization to reduce the size of your LLM models.

- Inference engine selection: Choose the right inference engine for your specific LLM and hardware.

- Parameter tuning: Fine-tune model parameters to achieve optimal performance.

- Multi-GPU setups: Utilize multiple GPUs for faster and more efficient training and inference.

Q: What are some good tools for profiling and debugging my LLM setup?

A: NVIDIA Nsight Systems is an excellent tool for profiling your LLM setup to identify performance bottlenecks.

Keywords

NVIDIA 4090 24GB, LLM, large language models, GPU, noise reduction, optimization, quantization, memory management, token generation, inference engine, performance, profiling, benchmarking, data pre-processing, multi-GPU, Triton Inference Server, llama.cpp, GPU Benchmarks on LLM Inference, Nsight Systems, batch size, sequence length, model size, caching, data structures, driver updates, maintenance.