8 Limitations of Apple M2 for AI (and How to Overcome Them)

Introduction

The Apple M2 chip is a powerful and versatile processor designed for a wide range of tasks, including AI. However, when it comes to running large language models (LLMs) like the popular Llama 2, the M2 might not be the ultimate champion. Let's explore the specific limitations of the Apple M2 for AI and how you can overcome them to unleash its full potential.

Think of it like this: Imagine trying to fit a giant, fluffy dog into a tiny car. You might get it in, but it'll be cramped and uncomfortable, and the dog might not enjoy the ride. The M2 is like that tiny car for some LLMs – it can handle them, but there's a lot of potential left untapped.

Apple M2 Bandwidth Limitation

The M2 chip offers an impressive 100 GB/s of memory bandwidth, which determines how quickly model weights and other data can be streamed from unified memory to the CPU and GPU. This is where things get interesting. That bandwidth is shared between the CPU and GPU, which can create bottlenecks for AI workloads.

Imagine you're trying to move a large pile of sand from one place to another. You've got a super-fast conveyor belt, but you're also sharing it with other tasks, like delivering pizza. Sometimes, the conveyor belt gets crowded, and the sand moves slower. This is similar to how the M2's bandwidth can be affected when running AI models.
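To put a rough number on the conveyor belt, here is a back-of-the-envelope sketch. It assumes that generating one token requires reading every model weight from memory once, so bandwidth divided by model size gives a ceiling on tokens per second. The parameter count for Llama 2 7B and the bits-per-weight figures are assumptions for illustration, not values from this article, and real throughput will land below the ceiling.

```python
# Rough ceiling on token generation speed, assuming every weight is read
# from memory once per generated token (a simplification).

BANDWIDTH_GB_S = 100.0  # M2 memory bandwidth quoted in the article
N_PARAMS = 6.74e9       # assumed parameter count for Llama 2 7B

# Assumed storage cost per weight in bits (quantized formats include block scales).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def model_size_gb(n_params, bits_per_weight):
    """Approximate size of the weights in gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


def max_tokens_per_second(bandwidth_gb_s, size_gb):
    """Upper bound on tokens/s if each token needs one full pass over the weights."""
    return bandwidth_gb_s / size_gb


ceilings = {}
for name, bits in BITS_PER_WEIGHT.items():
    size = model_size_gb(N_PARAMS, bits)
    ceilings[name] = max_tokens_per_second(BANDWIDTH_GB_S, size)
    print(f"{name}: ~{size:.1f} GB, at most ~{ceilings[name]:.1f} tokens/s")
```

The takeaway is that a smaller model means less sand to move per token, which is why shrinking the weights matters so much on a bandwidth-limited chip.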

Apple M2 GPU Core Limitation

The Apple M2 features a limited number of GPU cores, which directly impacts how quickly it can process your AI models. The M2 chip has up to 10 GPU cores (8 in the base configuration), which is respectable compared to earlier M1 models, but might not be enough for highly complex LLMs.

Think of it as a team of workers building a house. More workers mean the house gets built faster. With limited workers, the process is slower. The M2's GPU cores are like those workers, and the more you have, the quicker your AI models will process.

Llama 2 7B Model Performance on Apple M2

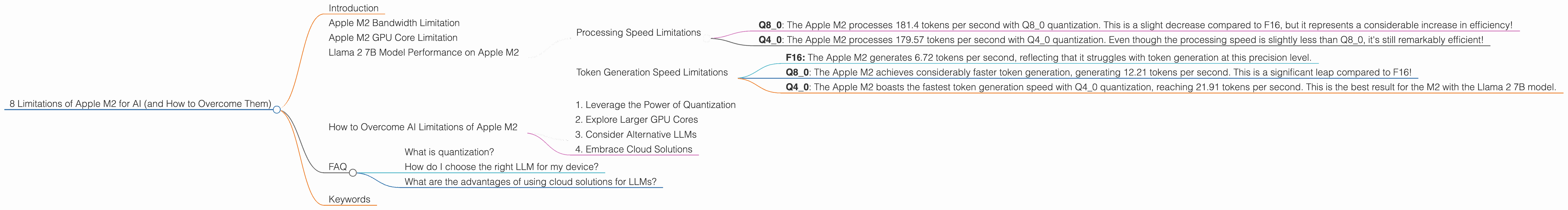

Processing Speed Limitations

The Apple M2 can handle the Llama 2 7B model, but performance depends on the quantization level. At F16 precision, the M2 processes 201.34 tokens per second. Quantization does not speed up this stage; it is slightly slower, but it shrinks the model considerably. Let's look at the impact:

- Q8_0: The Apple M2 processes 181.4 tokens per second with Q8_0 quantization. This is about 10% slower than F16, but the weights take up far less memory.

- Q4_0: The Apple M2 processes 179.57 tokens per second with Q4_0 quantization. Processing speed is almost the same as with Q8_0, while the weights are smaller still.

Token Generation Speed Limitations

The Apple M2's performance in token generation, the process of generating new text, is significantly affected by the quantization level. Let's look at the numbers:

- F16: The Apple M2 generates 6.72 tokens per second, which shows that it struggles with token generation at this precision level.

- Q8_0: The Apple M2 generates 12.21 tokens per second, roughly 1.8 times the F16 rate.

- Q4_0: The Apple M2 reaches its fastest token generation speed with Q4_0 quantization, at 21.91 tokens per second, roughly 3.3 times the F16 rate. This is the best result for the M2 with the Llama 2 7B model.
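The arithmetic behind these comparisons is easy to check. The short sketch below takes the tokens-per-second figures quoted in this section (written with the names Q8_0 and Q4_0), computes each result relative to F16, and estimates how long a reply would take. The 200-token reply length is a hypothetical value chosen for illustration.

```python
# Tokens per second reported in this article for Llama 2 7B on the Apple M2.
PROMPT_TPS = {"F16": 201.34, "Q8_0": 181.40, "Q4_0": 179.57}
GEN_TPS = {"F16": 6.72, "Q8_0": 12.21, "Q4_0": 21.91}


def speedup_vs(baseline, table):
    """Return each entry's throughput as a multiple of the baseline entry."""
    return {name: tps / table[baseline] for name, tps in table.items()}


def seconds_for(n_tokens, tokens_per_second):
    """Time needed to handle n_tokens at a given throughput."""
    return n_tokens / tokens_per_second


prompt_speedup = speedup_vs("F16", PROMPT_TPS)
gen_speedup = speedup_vs("F16", GEN_TPS)

REPLY_TOKENS = 200  # hypothetical reply length, for illustration only
for name in GEN_TPS:
    print(
        f"{name}: prompt x{prompt_speedup[name]:.2f}, "
        f"generation x{gen_speedup[name]:.2f}, "
        f"{REPLY_TOKENS}-token reply in ~{seconds_for(REPLY_TOKENS, GEN_TPS[name]):.0f} s"
    )
```

Seen this way, quantization costs about a tenth of the prompt processing speed and buys a two- to three-fold gain in generation speed.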

How to Overcome AI Limitations of Apple M2

Here are some strategies to overcome the limitations of the M2 for AI:

1. Leverage the Power of Quantization

Quantization is a technique that reduces the numerical precision of your model's weights, making the model smaller and, for token generation, faster. By using Q8_0 or Q4_0 instead of F16, you can boost token generation speed significantly at the cost of slightly slower prompt processing. It's like using a smaller, more efficient engine in your car; you might not get the same top speed, but you'll get better fuel economy.

2. Explore Chips with More GPU Cores

The Apple M2 is a powerful chip, but it's limited by its GPU core count. Consider upgrading to a device with the M2 Max or M2 Ultra, which offer up to 38 and 76 GPU cores respectively. This can substantially boost processing speed. Imagine you're building a house with a team of 10 workers. You can build a house, but it will take longer than if you had 20 or 40 workers!
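As a loose illustration of the workers analogy, the sketch below scales the F16 processing speed reported in this article linearly with GPU core count. Linear scaling is an optimistic assumption, and the core counts are hypothetical targets for a larger chip, so treat the output as a best case rather than a prediction.

```python
def ideal_prompt_tps(measured_tps, measured_cores, target_cores):
    """Best-case throughput if speed grew in direct proportion to GPU cores."""
    return measured_tps * target_cores / measured_cores


M2_CORES = 10        # GPU core count quoted in the article
M2_F16_TPS = 201.34  # F16 processing speed quoted in the article

# Hypothetical target core counts for larger chips.
for cores in (20, 38, 76):
    estimate = ideal_prompt_tps(M2_F16_TPS, M2_CORES, cores)
    print(f"{cores} cores: at most ~{estimate:.0f} tokens/s (ideal scaling)")
```

Real chips rarely scale perfectly, so actual gains will be smaller. Note also that token generation depends more on memory bandwidth than on core count.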

3. Consider Alternative LLMs

Explore other LLMs optimized for performance on devices like the Apple M2. There are various LLMs designed specifically for smaller devices, and they might offer better performance than Llama 2 7B. It's like choosing a smaller, lighter car that's more fuel-efficient. Remember, not all LLMs are created equal, and some are better suited to specific devices.

4. Embrace Cloud Solutions

For demanding AI workloads, consider using cloud-based solutions like Amazon SageMaker or Google Cloud AI Platform. These platforms offer powerful GPUs and other resources that can handle even the most complex LLMs. This is like hiring a professional construction company; they have all the tools and equipment to build your dream home quickly and efficiently.

FAQ

What is quantization?

Quantization involves reducing the number of bits used to represent each number in your AI model. Think of it as using a ruler with coarser markings. You might not get as precise measurements, but you need less space to store them. In AI models, this translates to smaller model sizes and faster token generation.
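To make the ruler idea concrete, here is a minimal sketch of symmetric block quantization in plain Python. It is a simplified illustration, not the exact storage layout behind formats such as Q8_0 or Q4_0, and the function names and sample weights are made up for this example.

```python
def quantize_block(values, bits=8):
    """Map a block of floats to small integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1           # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(v) for v in values)   # largest magnitude in the block
    scale = amax / qmax if amax > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the scale."""
    return [scale * q for q in quants]


def max_error(values, bits):
    """Largest absolute difference after a quantize/dequantize round trip."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


weights = [0.12, -0.53, 0.98, -0.05, 0.33, -0.81, 0.45, 0.02]  # made-up sample
for bits in (8, 4):
    print(f"{bits}-bit: max round-trip error {max_error(weights, bits):.4f}")
```

Fewer bits mean coarser markings on the ruler: the 4-bit round trip loses more detail than the 8-bit one, which is the accuracy you trade for a smaller model.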

How do I choose the right LLM for my device?

The ideal LLM depends on several factors, including the device's resources, the desired accuracy, and the specific tasks. Consider factors like the model size, its architecture, and the available computational power. For example, if you're using a device with limited memory and processing power, a smaller, quantized LLM is likely a better choice.
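One way to turn this advice into a quick check is to estimate whether a model's weights fit in your device's memory at each precision. The sketch below is illustrative only: the parameter count, the bits-per-weight figures, and the 70% usable-memory fraction (headroom for the operating system, context cache, and other apps) are all assumptions, and `pick_format` is a hypothetical helper.

```python
# Assumed storage cost per weight in bits, ordered from most to least precise.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gb(n_params, bits_per_weight):
    """Approximate size of the weights in gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


def pick_format(n_params, ram_gb, usable_fraction=0.7):
    """Return the most precise format whose weights fit, or None if none fit.

    usable_fraction is an assumed share of memory available to the model.
    """
    budget_gb = ram_gb * usable_fraction
    for name, bits in BITS_PER_WEIGHT.items():
        if weights_gb(n_params, bits) <= budget_gb:
            return name
    return None


N_PARAMS_7B = 6.74e9  # assumed parameter count for a 7B-class model
for ram in (8, 16, 24):
    print(f"{ram} GB of memory -> {pick_format(N_PARAMS_7B, ram)}")
```

If the helper returns None, the model is too large for the device even at the lowest precision listed, and a smaller model or a cloud service is the more practical route.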

What are the advantages of using cloud solutions for LLMs?

Cloud solutions offer access to powerful hardware, scalability, and advanced tools. You can easily scale up or down the resources based on your needs. This is especially beneficial for handling complex LLMs that require significant resources.

Keywords

Apple M2, AI, LLM, Llama 2, quantization, GPU cores, bandwidth, performance, cloud solutions, token generation, token processing, F16, Q8_0, Q4_0, processing speed, token speed, limitations, overcoming limitations, device limitations, model size, accuracy, resources, cloud computing, scalability, advanced tools.