8 Key Factors to Consider When Choosing Between NVIDIA 4080 16GB and NVIDIA RTX 4000 Ada 20GB x4 for AI

Introduction

The world of large language models (LLMs) is rapidly evolving, with new models popping up like mushrooms after a rain. These models have become increasingly powerful, capable of generating realistic text, translating languages, and even writing code. But running these models requires serious computational horsepower. Choosing the right hardware can be a real headache, especially when you're trying to decide between a single high-end GPU like the NVIDIA 4080 16GB and a multi-GPU setup like the NVIDIA RTX 4000 Ada 20GB x4.

This article delves into the fascinating world of LLM hardware choices, aiming to help you make an informed decision for your specific needs. We'll dissect the performance of these two powerhouses, explore their strengths and weaknesses, and offer practical recommendations based on your use case and budget. Buckle up, because this is going to be a wild ride!

Performance Analysis: NVIDIA 4080 16GB vs. NVIDIA RTX 4000 Ada 20GB x4

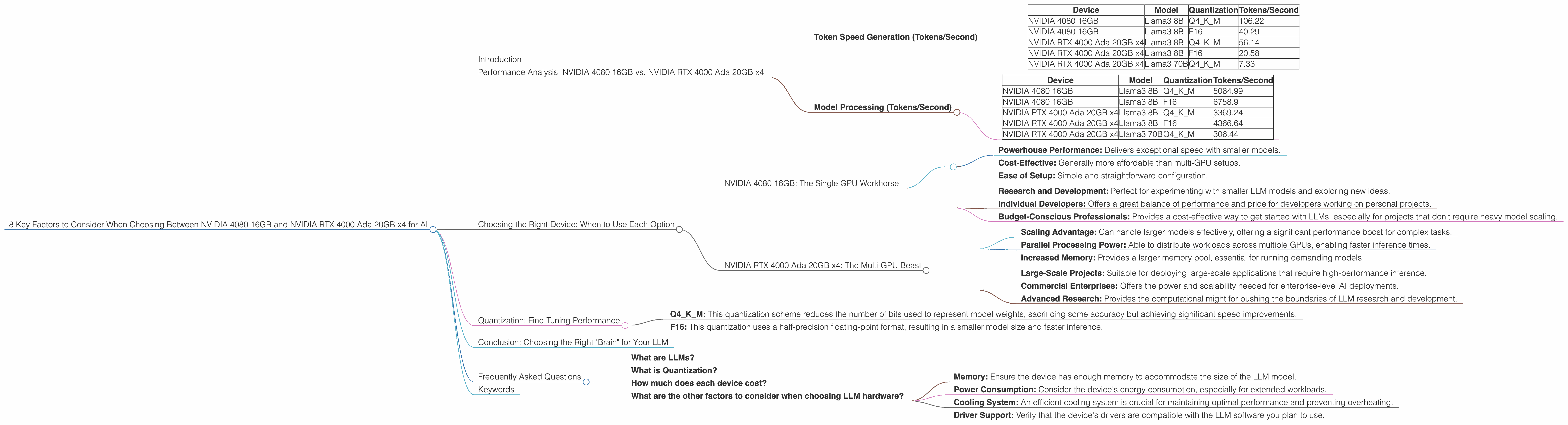

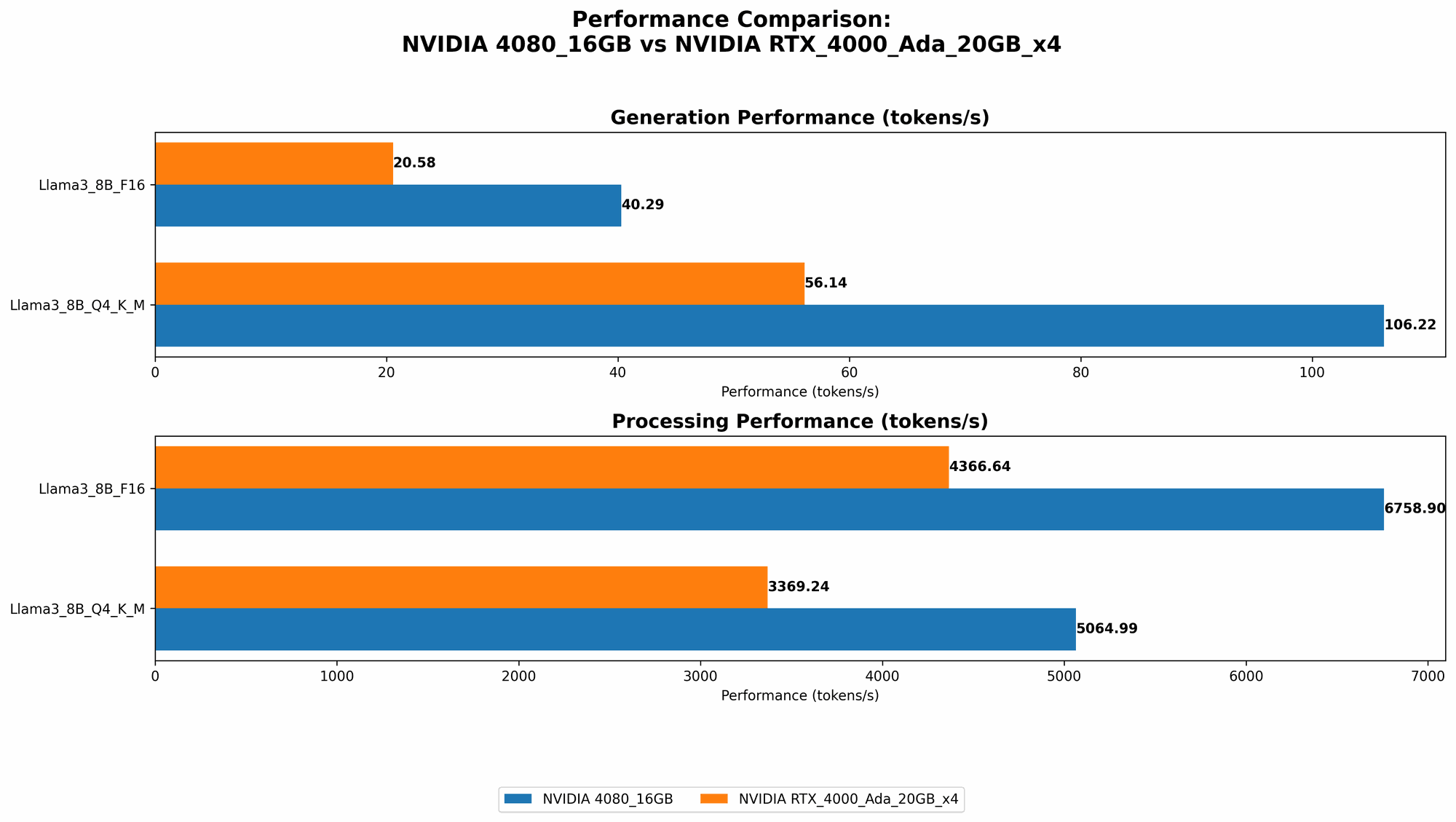

Token Speed Generation (Tokens/Second)

To understand the raw performance of each device, we need to look at their token generation speed. This metric measures how many tokens (words or sub-word units) a device can generate per second, basically how fast it can churn out text.

Let's analyze the data:

| Device | Model | Quantization | Tokens/Second |
|---|---|---|---|
| NVIDIA 4080 16GB | Llama3 8B | Q4KM | 106.22 |
| NVIDIA 4080 16GB | Llama3 8B | F16 | 40.29 |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama3 8B | Q4KM | 56.14 |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama3 8B | F16 | 20.58 |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama3 70B | Q4KM | 7.33 |

Observations:

- Single GPU vs. Multi-GPU: The NVIDIA 4080 16GB dominates the smaller Llama 3 8B model in both Q4KM and F16, achieving nearly double the token generation speed of the NVIDIA RTX 4000 Ada 20GB x4 (about 1.9x with Q4KM and about 2.0x with F16). This shows that a single, high-end GPU can outperform multiple lower-end GPUs when the model already fits on one card. Think of it this way, a single racehorse might outrun a team of zebras.

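- Scaling with Model Size: The NVIDIA RTX 4000 Ada 20GB x4 starts to show its worth with the larger Llama 3 70B model. It generates 7.33 tokens/second with Q4KM, roughly 7.7x slower than its own 8B result, but it runs the model at all. The 4080 16GB has no data available for the 70B model, so we can't compare it directly; at 4 bits per weight, 70 billion parameters already need about 35 GB for the weights alone, which is more than a 16 GB card holds.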

Key Takeaways:

- Single GPU: The NVIDIA 4080 16GB reigns supreme for smaller models, generating tokens nearly twice as fast on Llama 3 8B.

- Multi-GPU: The NVIDIA RTX 4000 Ada 20GB x4 is the only one of the two with a Llama 3 70B result, but generation drops to 7.33 tokens/second with that model.
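The size of the gap on Llama 3 8B can be checked directly from the generation table. The snippet below is a minimal sketch that copies those four figures and divides them; the dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Token generation speeds (tokens/second) for Llama3 8B, copied from the table above.
generation_tps = {
    ("NVIDIA 4080 16GB", "Q4KM"): 106.22,
    ("NVIDIA 4080 16GB", "F16"): 40.29,
    ("NVIDIA RTX 4000 Ada 20GB x4", "Q4KM"): 56.14,
    ("NVIDIA RTX 4000 Ada 20GB x4", "F16"): 20.58,
}


def speedup(quant: str) -> float:
    """How many times faster the 4080 16GB generates than the RTX 4000 Ada x4."""
    single = generation_tps[("NVIDIA 4080 16GB", quant)]
    multi = generation_tps[("NVIDIA RTX 4000 Ada 20GB x4", quant)]
    return single / multi


for quant in ("Q4KM", "F16"):
    print(f"{quant}: {speedup(quant):.2f}x")
# Q4KM: 1.89x
# F16: 1.96x
```

Both ratios land just under 2x, so the single card is close to, but not quite, twice as fast at generation on this model.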

Model Processing (Tokens/Second)

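Now we shift our focus to model processing speed, which measures how quickly the device can ingest input (prompt) tokens before it starts generating. Because the prompt is handled in large batches, these figures are far higher than the generation speeds above, and they matter most for long prompts.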

| Device | Model | Quantization | Tokens/Second |
|---|---|---|---|
| NVIDIA 4080 16GB | Llama3 8B | Q4KM | 5064.99 |
| NVIDIA 4080 16GB | Llama3 8B | F16 | 6758.9 |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama3 8B | Q4KM | 3369.24 |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama3 8B | F16 | 4366.64 |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama3 70B | Q4KM | 306.44 |

Observations:

- Larger Model Advantage: Once again, the NVIDIA RTX 4000 Ada 20GB x4 is the only setup with a Llama 3 70B result, processing 306.44 tokens/second with Q4KM. No data is available for the NVIDIA 4080 16GB on this model, so the two can't be compared directly, but the multi-GPU system does show it can handle a model of this size.

- Dominant Performance: For the Llama 3 8B model, the NVIDIA 4080 16GB shines in terms of processing speed, delivering roughly 1.5x the throughput of the multi-GPU setup in both Q4KM and F16. Note that, unlike generation, processing is faster with F16 than with Q4KM on both devices.

Key Takeaways:

- Larger Models: The NVIDIA RTX 4000 Ada 20GB x4 shows its strength with larger models, still processing 306.44 tokens/second with the 70B model.

- Smaller Models: The NVIDIA 4080 16GB remains the champion for processing speed, showcasing its efficiency with smaller models.
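To see how processing and generation speed combine, here is a rough back-of-the-envelope sketch. It assumes a request's time is simply prompt tokens divided by processing speed plus output tokens divided by generation speed, and that the benchmark throughput holds at the chosen lengths; real runs add overhead this ignores. The request size (2,000 prompt tokens, 500 output tokens) and the function name are hypothetical.

```python
def estimate_latency_s(prompt_tokens: int, output_tokens: int,
                       processing_tps: float, generation_tps: float) -> float:
    """Simplified model: time to read the prompt plus time to write the answer."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama3 8B, Q4KM figures from the two tables above: (processing, generation).
devices = {
    "NVIDIA 4080 16GB": (5064.99, 106.22),
    "NVIDIA RTX 4000 Ada 20GB x4": (3369.24, 56.14),
}

# Hypothetical request: 2,000-token prompt, 500-token answer.
for name, (processing_tps, generation_tps) in devices.items():
    seconds = estimate_latency_s(2000, 500, processing_tps, generation_tps)
    print(f"{name}: about {seconds:.1f} s")
```

Under these assumptions, generation accounts for most of the total time on both devices, which is why the generation table tends to matter more for chat-style workloads with short prompts.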

Choosing the Right Device: When to Use Each Option

The choice between the NVIDIA 4080 16GB and the NVIDIA RTX 4000 Ada 20GB x4 boils down to your specific needs and use case. Think of it like choosing the right tool for a specific task.

NVIDIA 4080 16GB: The Single GPU Workhorse

Strengths:

- Powerhouse Performance: Delivers exceptional speed with smaller models.

- Cost-Effective: Generally more affordable than multi-GPU setups.

- Ease of Setup: Simple and straightforward configuration.

Ideal for:

- Research and Development: Perfect for experimenting with smaller LLM models and exploring new ideas.

- Individual Developers: Offers a great balance of performance and price for developers working on personal projects.

- Budget-Conscious Professionals: Provides a cost-effective way to get started with LLMs, especially for projects that don't require heavy model scaling.

NVIDIA RTX 4000 Ada 20GB x4: The Multi-GPU Beast

Strengths:

- Scaling Advantage: Can run larger models such as Llama 3 70B (Q4KM), for which the single-GPU option has no results.

- Parallel Processing Power: Able to distribute a model across multiple GPUs. In our data this makes large models possible rather than making small models faster: on Llama 3 8B it is slower than the 4080 16GB.

- Increased Memory: Provides a larger combined memory pool (4 x 20 GB = 80 GB), essential for running demanding models.

Ideal for:

- Large-Scale Projects: Suitable for deploying large-scale applications that require high-performance inference.

- Commercial Enterprises: Offers the power and scalability needed for enterprise-level AI deployments.

- Advanced Research: Provides the computational might for pushing the boundaries of LLM research and development.

Quantization: Fine-Tuning Performance

Quantization is a technique that reduces the precision of model weights, making them smaller and faster to load and process. Think of it as reducing the number of colors in an image, making it less "pixel-perfect" but much smaller and easier to download. This often comes at a small cost in terms of accuracy.

- Q4KM: This quantization scheme stores model weights in roughly 4 bits each, sacrificing some accuracy but shrinking the model substantially and speeding up token generation.

- F16: This is the half-precision (16-bit) floating-point format. Strictly speaking it is not a quantization but the higher-precision baseline in our tables: the model is roughly three to four times larger than with Q4KM, and token generation is slower.

In our data, the NVIDIA 4080 16GB consistently outperforms the NVIDIA RTX 4000 Ada 20GB x4 in both Q4KM and F16 with the Llama 3 8B model. Within each device, Q4KM generates tokens about 2.6 to 2.7 times faster than F16, while F16 is roughly 1.3 times faster at prompt processing, so the right choice depends on whether your workload is dominated by generation or by long prompts.
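Precision also decides whether a model fits in memory at all. The sketch below estimates the size of the weights alone as parameters times bits per weight, and compares it with each setup's total VRAM. Several things here are assumptions for illustration: the nominal 8 and 70 billion parameter counts, an average of about 4.5 bits per weight for Q4KM, reading the "16GB" and "20GB" in the product names as GiB, and treating the four cards as one 80 GiB pool. It ignores the context cache and other runtime overhead, so passing this check is necessary but not sufficient.

```python
GIB = 1024 ** 3

# Bits stored per weight. The Q4KM figure is an assumed average, not a measured value.
BITS_PER_WEIGHT = {"Q4KM": 4.5, "F16": 16.0}

# Total VRAM per setup, read from the product names (assumed to be GiB).
VRAM_GIB = {
    "NVIDIA 4080 16GB": 16,
    "NVIDIA RTX 4000 Ada 20GB x4": 4 * 20,
}


def weight_gib(params_billion: float, quant: str) -> float:
    """Approximate size of the model weights alone, in GiB."""
    return params_billion * 1e9 * BITS_PER_WEIGHT[quant] / 8 / GIB


def weights_fit(device: str, params_billion: float, quant: str) -> bool:
    """True if the weights alone are smaller than the device's total VRAM."""
    return weight_gib(params_billion, quant) < VRAM_GIB[device]


for params, quant in [(8, "Q4KM"), (8, "F16"), (70, "Q4KM"), (70, "F16")]:
    size = weight_gib(params, quant)
    fits = [name for name in VRAM_GIB if weights_fit(name, params, quant)]
    print(f"{params}B {quant}: ~{size:.1f} GiB of weights, fits on: {fits or 'neither'}")
```

With these assumptions the estimate lines up with which rows appear in the tables: both 8B variants fit on either setup, 70B Q4KM fits only on the multi-GPU setup, and 70B F16 fits on neither.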

Conclusion: Choosing the Right "Brain" for Your LLM

Ultimately, the choice between the NVIDIA 4080 16GB and the NVIDIA RTX 4000 Ada 20GB x4 depends on your specific needs, priorities, and budget. If you're working with smaller models or on a tighter budget, the NVIDIA 4080 16GB is a fantastic option. If you're tackling larger models that don't fit in 16 GB of memory, the NVIDIA RTX 4000 Ada 20GB x4 is the way to go.

Remember, the right hardware can make or break your AI endeavors. By carefully considering factors like model size, desired speed, and budget, you can ensure that your LLM runs seamlessly and unleashes its full potential.

Frequently Asked Questions

What are LLMs?

LLMs are large language models, a type of artificial intelligence that excels at understanding and generating human language. They are trained on massive datasets of text, allowing them to perform tasks like translation, text summarization, and creative writing.

What is Quantization?

Quantization is a technique used to reduce the size of LLM models by lowering the precision of their weights. This results in smaller models that are faster to load and process, often with a small trade-off in accuracy.
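To make the idea concrete, here is a toy sketch of one simple form of quantization: symmetric, uniform rounding of a handful of weights to 4-bit signed integers with a single shared scale. This is a generic illustration only; it is not the Q4KM algorithm, which is more elaborate, and the function names and sample weights are made up for the example.

```python
def quantize(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1                         # 7 for 4-bit
    scale = max(abs(w) for w in weights) / qmax or 1.0  # avoid dividing by zero
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the stored integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.70, 0.33, 0.05]  # made-up sample weights
quantized, scale = quantize(weights)
restored = dequantize(quantized, scale)

for original, q, approx in zip(weights, quantized, restored):
    print(f"{original:+.2f} -> {q:+d} -> {approx:+.2f} (error {abs(original - approx):.3f})")
```

Each weight is now stored as a small integer instead of a 16-bit or 32-bit float, and the rounding error per weight is at most half the scale. That error is the "small trade-off in accuracy" mentioned above.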

How much does each device cost?

The price of both the NVIDIA 4080 16GB and the NVIDIA RTX 4000 Ada 20GB x4 can vary depending on the retailer and current market conditions. However, the NVIDIA 4080 16GB is generally more affordable than the multi-GPU setup.

What are the other factors to consider when choosing LLM hardware?

Beyond performance, other essential factors include:

- Memory: Ensure the device has enough memory to accommodate the size of the LLM model.

- Power Consumption: Consider the device's energy consumption, especially for extended workloads.

- Cooling System: An efficient cooling system is crucial for maintaining optimal performance and preventing overheating.

- Driver Support: Verify that the device's drivers are compatible with the LLM software you plan to use.
