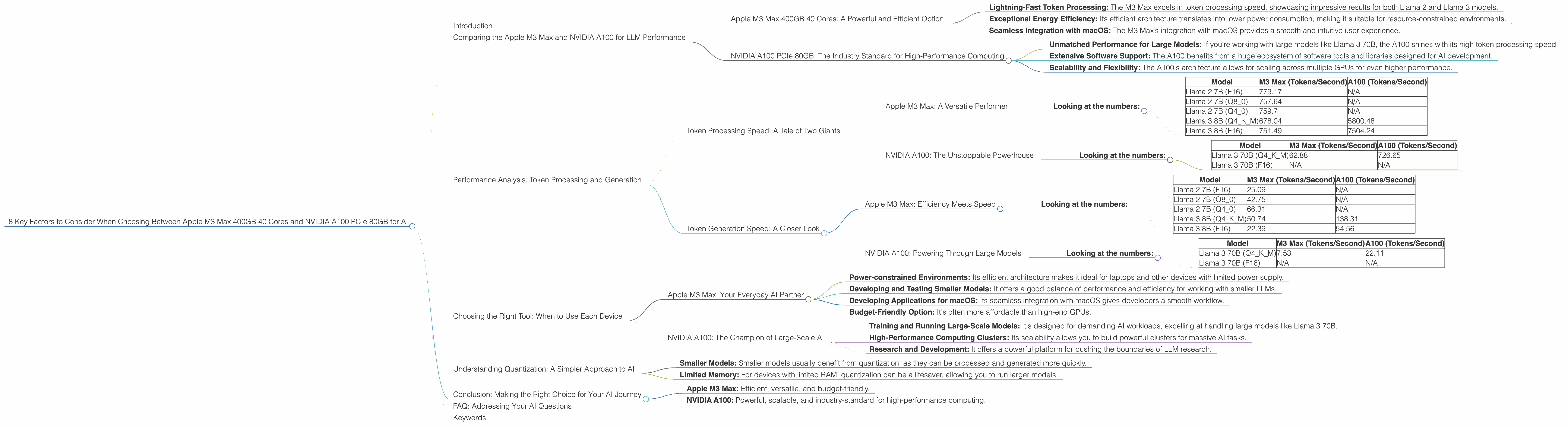

8 Key Factors to Consider When Choosing Between Apple M3 Max (400 GB/s, 40 GPU Cores) and NVIDIA A100 PCIe 80GB for AI

Introduction

In the ever-evolving world of AI, your choice of hardware is a crucial decision that affects the performance, cost, and scalability of your LLM (Large Language Model) applications. This article compares two popular contenders for running LLMs: the Apple M3 Max (40 GPU cores, 400 GB/s memory bandwidth) and the NVIDIA A100 PCIe 80GB.

We’ll uncover their performance in scenarios like token processing and generation, analyze their strengths and weaknesses, and provide practical recommendations for various use cases. Whether you’re a seasoned developer or a curious tech enthusiast, this guide will equip you with the knowledge to make an informed decision.

Let's dive in!

Comparing the Apple M3 Max and NVIDIA A100 for LLM Performance

Apple M3 Max (400 GB/s, 40 GPU Cores): A Powerful and Efficient Option

The Apple M3 Max is a powerhouse of a chip, with a 40-core GPU and 400 GB/s of memory bandwidth. This makes it a compelling choice for AI enthusiasts seeking a high-performance yet energy-efficient solution.

Here are some of the key highlights:

- Solid Token Processing on Smaller Models: The M3 Max processes roughly 680 to 780 tokens/second on Llama 2 7B and Llama 3 8B across the quantization levels tested.

- Exceptional Energy Efficiency: Its efficient architecture translates into lower power consumption, making it suitable for resource-constrained environments.

- Seamless Integration with macOS: The M3 Max’s integration with macOS provides a smooth and intuitive user experience.

NVIDIA A100 PCIe 80GB: The Industry Standard for High-Performance Computing

The NVIDIA A100 is the industry gold standard for high-performance computing. This powerful GPU is designed to tackle the most demanding AI workloads.

Here are some of its key strengths:

- Unmatched Performance for Large Models: If you're working with large models like Llama 3 70B, the A100 shines with its high token processing speed.

- Extensive Software Support: The A100 benefits from a huge ecosystem of software tools and libraries designed for AI development.

- Scalability and Flexibility: The A100's architecture allows for scaling across multiple GPUs for even higher performance.

Performance Analysis: Token Processing and Generation

Token Processing Speed: A Tale of Two Giants

Apple M3 Max: A Versatile Performer

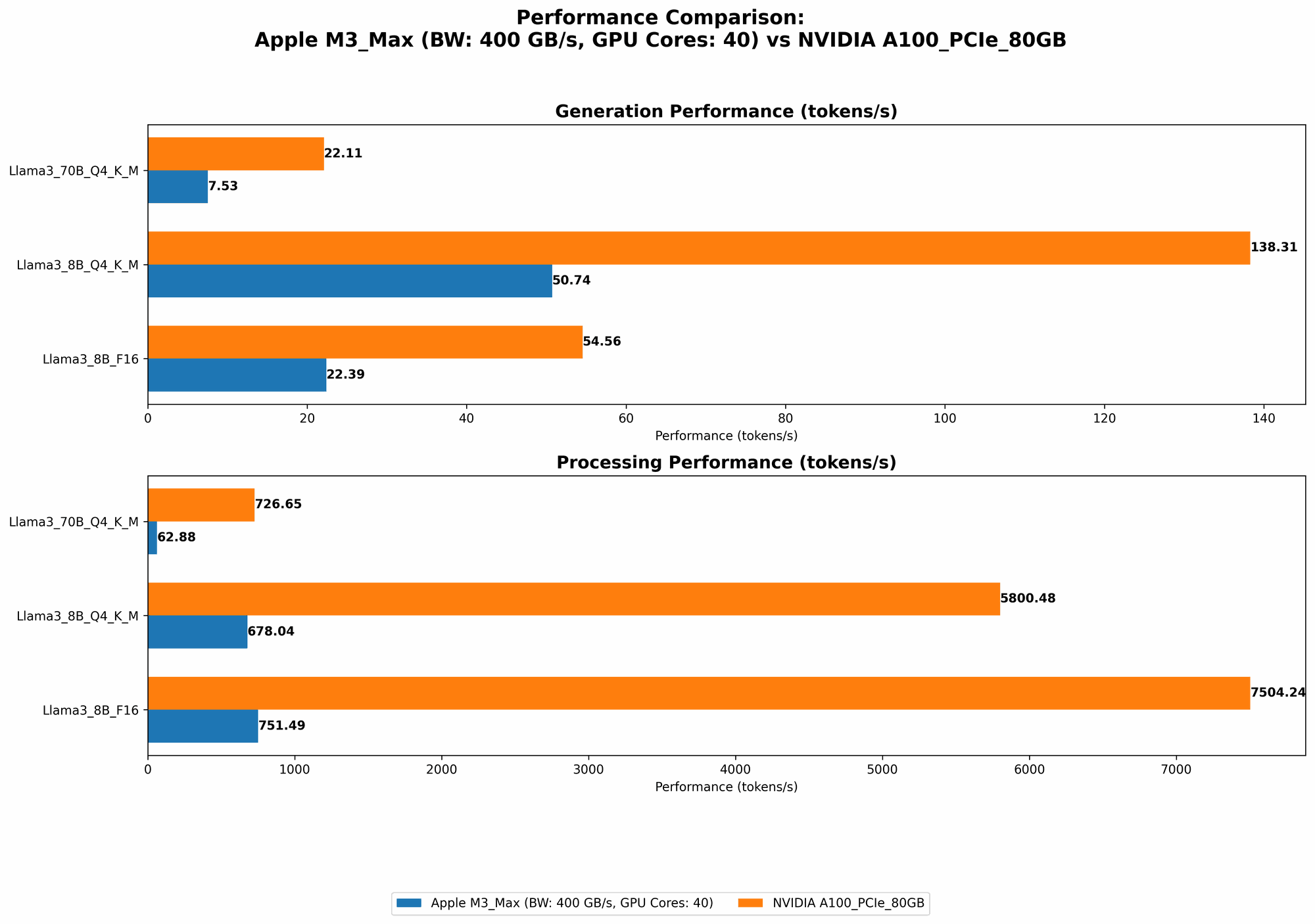

The M3 Max demonstrates consistent token processing speeds across various LLM models and quantization levels. For example, when processing the Llama 3 8B model with Q4_K_M quantization, it achieves 678.04 tokens/second. The A100 is still well ahead on the same model and quantization, reaching 5800.48 tokens/second, or roughly 8.6 times the M3 Max's speed.

Looking at the numbers:

| Model | M3 Max (Tokens/Second) | A100 (Tokens/Second) |
|---|---|---|
| Llama 2 7B (F16) | 779.17 | N/A |
| Llama 2 7B (Q8_0) | 757.64 | N/A |
| Llama 2 7B (Q4_0) | 759.7 | N/A |
| Llama 3 8B (Q4_K_M) | 678.04 | 5800.48 |
| Llama 3 8B (F16) | 751.49 | 7504.24 |
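
To put the gap in perspective, the ratios can be computed directly from the table above. The snippet below is a minimal sketch: the dictionary layout and the `speedup` helper are illustrative names, and only the rows with a figure for both devices are included.

```python
# Token processing speeds (tokens/second) copied from the table above.
# Only rows that have a figure for both devices are included.
PROMPT_SPEEDS = {
    "Llama 3 8B (Q4_K_M)": {"m3_max": 678.04, "a100": 5800.48},
    "Llama 3 8B (F16)": {"m3_max": 751.49, "a100": 7504.24},
}


def speedup(row):
    """Return how many times faster the A100 is than the M3 Max for one row."""
    return row["a100"] / row["m3_max"]


for model, row in PROMPT_SPEEDS.items():
    print(f"{model}: A100 is about {speedup(row):.1f}x faster")
```

On these figures the A100 processes tokens roughly 8.6 times faster at Q4_K_M and about 10 times faster at F16.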

NVIDIA A100: The Unstoppable Powerhouse

While the M3 Max shows impressive efficiency, the A100 truly shines when handling large LLM models, particularly Llama 3 70B. The A100 achieves a token processing speed of 726.65 tokens/second for this model with Q4_K_M quantization, more than 11 times the M3 Max's 62.88 tokens/second.

Looking at the numbers:

| Model | M3 Max (Tokens/Second) | A100 (Tokens/Second) |
|---|---|---|
| Llama 3 70B (Q4_K_M) | 62.88 | 726.65 |
| Llama 3 70B (F16) | N/A | N/A |

Token Generation Speed: A Closer Look

Apple M3 Max: Efficiency Meets Speed

The M3 Max offers a compelling balance of speed and efficiency in token generation. For example, with the Llama 3 8B model using Q4_K_M quantization, it delivers a generation speed of 50.74 tokens/second. The A100 is faster at 138.31 tokens/second, but its lead here is about 2.7 times, much narrower than in token processing.

Looking at the numbers:

| Model | M3 Max (Tokens/Second) | A100 (Tokens/Second) |
|---|---|---|
| Llama 2 7B (F16) | 25.09 | N/A |
| Llama 2 7B (Q8_0) | 42.75 | N/A |
| Llama 2 7B (Q4_0) | 66.31 | N/A |
| Llama 3 8B (Q4_K_M) | 50.74 | 138.31 |
| Llama 3 8B (F16) | 22.39 | 54.56 |

NVIDIA A100: Powering Through Large Models

Once again, the A100 pulls ahead when handling larger models. It reaches a token generation speed of 22.11 tokens/second for Llama 3 70B with Q4_K_M quantization, nearly three times the M3 Max's 7.53 tokens/second.

Looking at the numbers:

| Model | M3 Max (Tokens/Second) | A100 (Tokens/Second) |
|---|---|---|
| Llama 3 70B (Q4_K_M) | 7.53 | 22.11 |
| Llama 3 70B (F16) | N/A | N/A |
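
Processing and generation speeds combine into the wait a user actually experiences. The sketch below estimates end-to-end response time for Llama 3 70B (Q4_K_M) from the figures in this article. The 1,000-token prompt and 200-token reply are hypothetical workload sizes chosen for illustration, and the estimate ignores model load time and any other overhead.

```python
# Llama 3 70B (Q4_K_M) speeds in tokens/second, taken from the tables in this article.
SPEEDS_70B = {
    "M3 Max": {"processing": 62.88, "generation": 7.53},
    "A100": {"processing": 726.65, "generation": 22.11},
}


def response_seconds(speeds, prompt_tokens, output_tokens):
    """Estimate seconds to read the prompt and then generate the reply."""
    prompt_time = prompt_tokens / speeds["processing"]
    generation_time = output_tokens / speeds["generation"]
    return prompt_time + generation_time


# Hypothetical workload: a 1,000-token prompt and a 200-token reply.
for device, speeds in SPEEDS_70B.items():
    seconds = response_seconds(speeds, prompt_tokens=1000, output_tokens=200)
    print(f"{device}: about {seconds:.0f} s")
```

Under these assumptions the M3 Max needs roughly 42 seconds and the A100 roughly 10 seconds, so the practical gap depends heavily on how long your prompts are relative to your replies.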

Choosing the Right Tool: When to Use Each Device

Apple M3 Max: Your Everyday AI Partner

The M3 Max is an excellent choice for various scenarios:

- Power-constrained Environments: Its efficient architecture makes it ideal for laptops and other devices with limited power supply.

- Developing and Testing Smaller Models: It offers a good balance of performance and efficiency for working with smaller LLMs.

- Developing Applications for macOS: Its seamless integration with macOS gives developers a smooth workflow.

- Budget-Friendly Option: It's often more affordable than high-end GPUs.

NVIDIA A100: The Champion of Large-Scale AI

The A100 is your go-to option for:

- Training and Running Large-Scale Models: It's designed for demanding AI workloads, excelling at handling large models like Llama 3 70B.

- High-Performance Computing Clusters: Its scalability allows you to build powerful clusters for massive AI tasks.

- Research and Development: It offers a powerful platform for pushing the boundaries of LLM research.

Understanding Quantization: A Simpler Approach to AI

Quantization is a technique that shrinks LLM models by reducing the number of bits used to store each weight. Think of it as rounding the measurements in a recipe: every ingredient is still there, but each quantity is recorded less precisely.

- Smaller, Faster Models: Quantized models take up less memory and usually generate tokens faster. On the M3 Max, Llama 2 7B generation rises from 25.09 tokens/second at F16 to 42.75 at Q8_0 and 66.31 at Q4_0.

- Limited Memory: For devices with limited RAM, quantization can be a lifesaver, allowing you to run larger models.
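
The memory saving can be estimated with simple arithmetic: parameter count times bits per weight. The sketch below assumes nominal parameter counts (8 and 70 billion) and the block layout llama.cpp uses for Q8_0 and Q4_0, where each group of 32 weights shares one 16-bit scale. It counts the weights only, so real model files and runtime memory use will be somewhat larger.

```python
# Approximate bits stored per weight.
# Assumption for Q8_0 / Q4_0: 32 weights per block plus one 16-bit scale per block.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5 bits per weight
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5 bits per weight
}


def weight_gigabytes(num_params, quant):
    """Estimate the size of the weights alone, in gigabytes (10**9 bytes)."""
    return num_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


# Nominal parameter counts, used here only for illustration.
for name, params in [("8B model", 8e9), ("70B model", 70e9)]:
    for quant in BITS_PER_WEIGHT:
        print(f"{name} at {quant}: ~{weight_gigabytes(params, quant):.1f} GB")
```

By this estimate a 70B model at F16 needs about 140 GB for its weights alone, more than the A100's 80 GB, while Q4_0 brings it down to roughly 39 GB.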

Conclusion: Making the Right Choice for Your AI Journey

Choosing between the Apple M3 Max and NVIDIA A100 depends on your specific needs and constraints. Both devices offer unique strengths:

- Apple M3 Max: Efficient, versatile, and budget-friendly.

- NVIDIA A100: Powerful, scalable, and industry-standard for high-performance computing.

Pro Tip: If you're unsure about which device to choose, start with the M3 Max. Its versatility and efficiency make it suitable for a wide range of AI tasks. Then, as your needs grow and you delve into larger models, consider upgrading to an A100 or similar high-performance GPU.

FAQ: Addressing Your AI Questions

Q: Are these devices suitable for training LLMs?

Both devices can fine-tune existing LLMs, but the A100 is generally preferred for large-scale LLM training due to its power and scalability.

Q: What are the best quantization techniques for these devices?

For the M3 Max, consider Q4_0 or Q4_K_M quantization for a good balance of speed and memory use. On the A100, more aggressive levels such as Q2_K or Q3_K_M can shrink large models further and improve memory efficiency, usually at some cost in output quality.

Q: What are some alternative devices for running LLMs?

Other excellent options include high-performance GPUs from NVIDIA (like the A40, A100 40GB, and A30), as well as GPUs from AMD (such as the AMD Instinct MI250X).

Q: Is it possible to run LLMs on a CPU?

Yes, CPUs can be used for running smaller LLMs, but performance will be significantly slower than GPUs. Modern CPUs with specialized AI accelerators can offer better performance.

Keywords:

Apple M3 Max, NVIDIA A100, LLM, Large Language Model, Token Processing, Token Generation, Quantization, AI, Deep Learning, GPU, Inference, Performance, Hardware, llama.cpp, Llama 2, Llama 3, Benchmarks, Computational Power, Efficiency, Scalability, Software Support, Cost, Use Cases, Development, Research