8 Key Factors to Consider When Choosing Between Apple M3 100GB 10cores and NVIDIA RTX A6000 48GB for AI

Introduction

The world of Large Language Models (LLMs) is exploding, and with it, the demand for powerful hardware to run these models efficiently. Two popular choices for AI enthusiasts and developers are the Apple M3 100GB 10cores and the NVIDIA RTX A6000 48GB. But which one comes out on top?

This guide covers the key factors in that decision: the strengths and weaknesses of each device, token-speed benchmarks, and practical recommendations for common use cases.

Comparison of Apple M3 100GB 10cores and NVIDIA RTX A6000 48GB

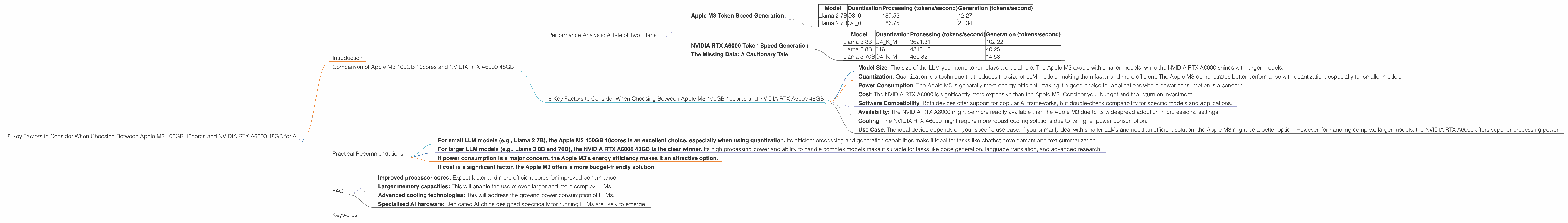

Performance Analysis: A Tale of Two Titans

Let's compare the Apple M3 100GB 10cores and the NVIDIA RTX A6000 48GB on two measures: how fast each processes a prompt and how fast each generates new tokens, across several LLMs. Keep in mind that the data presented here comes from specific benchmarks, and real-world performance may vary with the chosen model, implementation, and workload.

Apple M3 Token Speed Generation

The Apple M3 100GB 10cores performs well on smaller LLMs, especially when they are quantized.

- Llama 2 7B: The chip processes prompts at roughly 187 tokens/second under both Q4_0 and Q8_0 quantization, while generation speed rises from 12.27 tokens/second at Q8_0 to 21.34 tokens/second at Q4_0. That makes it a workable choice for tasks built on smaller models, such as chatbots and text summarization.

Here's a breakdown:

| Model | Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 187.52 | 12.27 |
| Llama 2 7B | Q4_0 | 186.75 | 21.34 |

Key Takeaway: The Apple M3 handles smaller LLMs well, particularly when quantized. Moving from Q8_0 to Q4_0 raises generation speed by about 74% (12.27 to 21.34 tokens/second) while prompt processing stays essentially unchanged.
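To make the effect of quantization on the M3 concrete, here is a minimal sketch that computes the relative speedup from the table values above. The dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Throughput figures copied from the Apple M3 table above (tokens/second).
m3_llama2_7b = {
    "Q8_0": {"processing": 187.52, "generation": 12.27},
    "Q4_0": {"processing": 186.75, "generation": 21.34},
}


def speedup(results, metric, baseline="Q8_0", candidate="Q4_0"):
    """Return how many times faster `candidate` is than `baseline` for `metric`."""
    return results[candidate][metric] / results[baseline][metric]


gen_speedup = speedup(m3_llama2_7b, "generation")
proc_speedup = speedup(m3_llama2_7b, "processing")

print(f"Generation, Q4_0 vs Q8_0: {gen_speedup:.2f}x")
print(f"Processing, Q4_0 vs Q8_0: {proc_speedup:.2f}x")
```

The generation ratio comes out well above 1, while the processing ratio sits just under 1, which suggests that on this chip quantization pays off mostly during generation.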

NVIDIA RTX A6000 Token Speed Generation

The NVIDIA RTX A6000 48GB shines when it comes to handling larger LLM models and demanding applications.

- Llama 3 8B and 70B: The card generates over 100 tokens/second on quantized Llama 3 8B and still sustains about 14.6 tokens/second on quantized Llama 3 70B, with prompt processing in the hundreds to thousands of tokens/second. That throughput suits heavier tasks such as code generation and complex language translation.

| Model | Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 3621.81 | 102.22 |
| Llama 3 8B | F16 | 4315.18 | 40.25 |
| Llama 3 70B | Q4_K_M | 466.82 | 14.58 |

Key Takeaway: The NVIDIA RTX A6000 handles larger, more complex LLMs: it runs Llama 3 70B at 4-bit quantization at 14.58 tokens/second of generation and exceeds 100 tokens/second on Llama 3 8B.
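Tokens per second can be hard to relate to user-facing latency. The sketch below turns the A6000 figures above into a rough response time, assuming a simple additive model (prompt time plus generation time) that ignores model loading, batching, and sampling overhead. The request size of 512 prompt tokens and 256 output tokens is a hypothetical example, not a benchmark setting.

```python
def estimate_latency_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Seconds to read the prompt plus seconds to generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# (processing, generation) in tokens/second, copied from the RTX A6000 table above.
a6000_results = {
    ("Llama 3 8B", "Q4_K_M"): (3621.81, 102.22),
    ("Llama 3 8B", "F16"): (4315.18, 40.25),
    ("Llama 3 70B", "Q4_K_M"): (466.82, 14.58),
}

PROMPT_TOKENS = 512  # hypothetical request size
OUTPUT_TOKENS = 256  # hypothetical reply length

latencies = {
    key: estimate_latency_s(PROMPT_TOKENS, OUTPUT_TOKENS, *rates)
    for key, rates in a6000_results.items()
}

for (model, quant), seconds in latencies.items():
    print(f"{model} {quant}: ~{seconds:.1f} s")
```

Under these assumptions, generation time dominates the total in every row, so the generation column is the one to watch for interactive use.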

The Missing Data: A Cautionary Tale

While the performance data is compelling, the benchmarks have gaps. No data exists for Llama 3 70B F16 on either device, and the two tables cover different models (Llama 2 7B on the Apple M3, Llama 3 8B and 70B on the RTX A6000), so they do not support a like-for-like comparison. Test your own model and workload before deciding, since the best choice may not be apparent from available benchmarks alone.
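One plausible reason for the missing Llama 3 70B F16 figure on the A6000 is memory. The back-of-the-envelope check below estimates the size of the weights alone, assuming a nominal 70 billion parameters and nominal bit widths (16 for F16, 4 for a 4-bit format). Real quantized files carry some extra overhead, and inference also needs memory for activations and the KV cache, so treat the results as lower bounds.

```python
def weights_size_gb(num_params, bits_per_weight):
    """Approximate size of the model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


LLAMA3_70B_PARAMS = 70e9  # assumption: nominal parameter count implied by the model name
A6000_MEMORY_GB = 48      # memory size from the device name used in this article

f16_size = weights_size_gb(LLAMA3_70B_PARAMS, 16)
q4_size = weights_size_gb(LLAMA3_70B_PARAMS, 4)  # nominal 4 bits, ignoring format overhead

for label, size in (("F16", f16_size), ("4-bit", q4_size)):
    fits = size <= A6000_MEMORY_GB
    print(f"Llama 3 70B {label}: ~{size:.0f} GB of weights, fits in {A6000_MEMORY_GB} GB: {fits}")
```

The F16 weights alone come to roughly three times the card's memory, while the 4-bit estimate fits, which is consistent with the table listing a 70B result only for the quantized model.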

8 Key Factors to Consider When Choosing Between Apple M3 100GB 10cores and NVIDIA RTX A6000 48GB

- Model Size: The size of the LLM you intend to run plays a crucial role. The Apple M3 excels with smaller models, while the NVIDIA RTX A6000 shines with larger models.

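- Quantization: Quantization reduces the size of a model by storing its weights with fewer bits, which lowers memory use and usually speeds up generation. On the Apple M3, Llama 2 7B generation rises from 12.27 tokens/second at Q8_0 to 21.34 at Q4_0; on the RTX A6000, Llama 3 8B generation rises from 40.25 tokens/second at F16 to 102.22 at 4-bit quantization.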

- Power Consumption: The Apple M3 is generally more energy-efficient, making it a good choice for applications where power consumption is a concern.

- Cost: The NVIDIA RTX A6000 is significantly more expensive than the Apple M3. Consider your budget and the return on investment.

- Software Compatibility: Both devices offer support for popular AI frameworks, but double-check compatibility for specific models and applications.

- Availability: The NVIDIA RTX A6000 might be more readily available than the Apple M3 due to its widespread adoption in professional settings.

- Cooling: The NVIDIA RTX A6000 might require more robust cooling solutions due to its higher power consumption.

- Use Case: The ideal device depends on your specific use case. If you primarily deal with smaller LLMs and need an efficient solution, the Apple M3 might be a better option. However, for handling complex, larger models, the NVIDIA RTX A6000 offers superior processing power.

Practical Recommendations

Here are some practical recommendations based on the performance analysis and key factors discussed above:

- For small LLM models (e.g., Llama 2 7B), the Apple M3 100GB 10cores is an excellent choice, especially when using quantization. Its efficient processing and generation capabilities make it ideal for tasks like chatbot development and text summarization.

- For larger LLM models (e.g., Llama 3 8B and 70B), the NVIDIA RTX A6000 48GB is the clear winner. Its high processing power and ability to handle complex models make it suitable for tasks like code generation, language translation, and advanced research.

- If power consumption is a major concern, the Apple M3's energy efficiency makes it an attractive option.

- If cost is a significant factor, the Apple M3 offers a more budget-friendly solution.

FAQ

Q: What is quantization and how does it affect LLM performance?

A: Quantization reduces the size of an LLM by using fewer bits to represent each weight. For example, a model stored as 16-bit floating-point numbers (F16) can be quantized to 8-bit integers (Q8) or even 4-bit integers (Q4). The smaller model needs less memory and less memory traffic per token, which mainly speeds up generation: on the Apple M3, Llama 2 7B generates 21.34 tokens/second at Q4_0 versus 12.27 at Q8_0, while prompt processing speed is nearly identical. The trade-off is some loss of numerical precision.
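As an illustration of the idea, here is a toy sketch of symmetric linear quantization in plain Python. It is a simplified stand-in for the general technique, not the exact scheme behind formats such as Q4_0 or Q8_0, which typically quantize weights in small blocks with a scale per block. The weight values and function names are hypothetical.

```python
def quantize(values, bits):
    """Map floats to signed integer codes of the given bit width using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    scale = max(abs(v) for v in values) / qmax or 1.0  # avoid a zero scale
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from integer codes."""
    return [c * scale for c in codes]


def max_roundtrip_error(values, bits):
    """Largest absolute difference between a value and its quantized reconstruction."""
    codes, scale = quantize(values, bits)
    restored = dequantize(codes, scale)
    return max(abs(v - r) for v, r in zip(values, restored))


weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88]  # hypothetical weights

for bits in (8, 4):
    codes, _ = quantize(weights, bits)
    print(f"{bits}-bit codes: {codes}, max error: {max_roundtrip_error(weights, bits):.4f}")
```

Fewer bits mean a coarser grid of representable values, so the 4-bit reconstruction error is larger than the 8-bit one. That is the precision cost paid for the smaller footprint.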

Q: Which device is right for me?

A: The best device for you depends on your specific needs. If you're working with smaller LLMs and need an efficient solution, the Apple M3 is an excellent choice. If you're dealing with larger models and need more processing power, the NVIDIA RTX A6000 is a better option.

Q: How frequently are these devices updated and what are the expected future improvements?

A: Both Apple and NVIDIA are constantly innovating and releasing new processors. The future of AI hardware is likely to see advancements in areas like:

- Improved processor cores: Expect faster and more efficient cores for improved performance.

- Larger memory capacities: This will enable the use of even larger and more complex LLMs.

- Advanced cooling technologies: These will help manage the growing power consumption of the hardware that runs LLMs.

- Specialized AI hardware: Dedicated AI chips designed specifically for running LLMs are likely to emerge.

Keywords

Apple M3, NVIDIA RTX A6000, LLM, Large Language Model, token speed, generation, processing, quantization, Llama 2, Llama 3, performance, comparison, AI hardware, AI development, deep learning, machine learning.