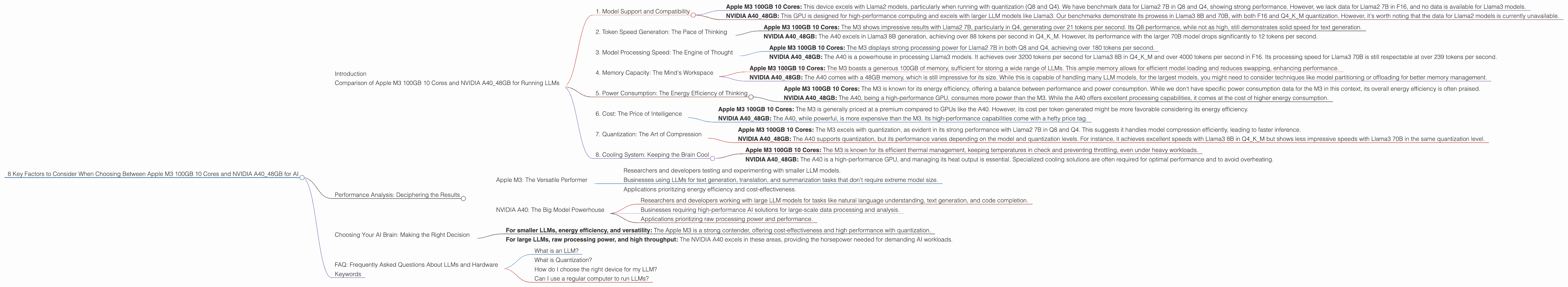

8 Key Factors to Consider When Choosing Between Apple M3 100GB 10 Cores and NVIDIA A40 48GB for AI

Introduction

The world of Large Language Models (LLMs) is booming, and with it comes a surge in demand for powerful hardware capable of handling these massive AI models. Two players that often come up in discussions are the Apple M3 100GB 10 Cores and the NVIDIA A40 48GB. Both offer impressive capabilities, but choosing the right one for your AI workload can feel like navigating a labyrinth of technical jargon.

This article will delve into the key factors you need to consider when selecting between these two giants of the AI hardware world. We'll break down their performance for various LLM models using real-world benchmarks, compare their strengths and weaknesses, and ultimately guide you towards making the right choice for your specific needs.

Comparison of Apple M3 100GB 10 Cores and NVIDIA A40 48GB for Running LLMs

1. Model Support and Compatibility

The first crucial factor is whether the device supports the LLM models you plan to run. For our analysis, we'll consider the popular Llama2 and Llama3 models.

Apple M3 100GB 10 Cores: We have benchmark data for Llama2 7B with Q8 and Q4 quantization, and the M3 performs well in both. There is no data for Llama2 7B in F16 or for any Llama3 model.

NVIDIA A40 48GB: This GPU is designed for high-performance computing, and our benchmarks cover Llama3 8B and 70B, using F16 and Q4_K_M quantization. Data for Llama2 models is currently unavailable.

Summary: The M3 results cover only quantized Llama2 7B, and the A40 results cover only Llama3. Missing data reflects a gap in the benchmarks, not proof that a device cannot run a model, but it does mean the two cannot be compared on the same model here.

2. Token Generation Speed: The Pace of Thinking

Now, let's talk about the core of LLM performance: token generation speed. This measures how many tokens of output a device can produce per second.

Apple M3 100GB 10 Cores: The M3 shows impressive results with Llama2 7B, particularly in Q4, generating over 21 tokens per second. Its Q8 performance, while not as high, still demonstrates solid speed for text generation.

NVIDIA A40 48GB: The A40 generates over 88 tokens per second with Llama3 8B in Q4_K_M. With the much larger 70B model, generation drops to about 12 tokens per second.

Summary: The two devices were benchmarked on different models, so the figures are not a head-to-head comparison. The M3 reaches over 21 tokens per second on Llama2 7B (Q4), while the A40 reaches over 88 on the similarly sized Llama3 8B (Q4_K_M). On Llama3 70B, the A40 is far slower than it is with smaller models.

3. Model Processing Speed: The Engine of Thought

While token generation measures output speed, model processing speed (often called prompt processing) measures how quickly the device can ingest the input text before it produces the first output token.

Apple M3 100GB 10 Cores: The M3 displays strong processing power for Llama2 7B in both Q8 and Q4, achieving over 180 tokens per second.

NVIDIA A40 48GB: The A40 processes Llama3 prompts very quickly: over 3200 tokens per second for Llama3 8B in Q4_K_M and over 4000 tokens per second in F16. Even with Llama3 70B it still manages over 239 tokens per second.

Summary: The A40 posts much higher prompt processing speeds than the M3, even on the 70B model. The M3 figures come from Llama2 7B rather than Llama3, so the comparison is indirect.
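To see how the processing and generation figures combine, here is a minimal sketch that estimates the total time for one request as prompt time plus generation time. The throughput values are the rounded-down figures quoted in this article; the function name, the 1000-token prompt, and the 200-token reply are illustrative assumptions, and real latency also depends on batching, context length, and software overhead.

```python
def response_latency_s(prompt_tokens, output_tokens, prompt_tps, gen_tps):
    """Estimate request latency: time to read the prompt plus time to write the reply."""
    if prompt_tps <= 0 or gen_tps <= 0:
        raise ValueError("throughput values must be positive")
    return prompt_tokens / prompt_tps + output_tokens / gen_tps


# Rounded-down tokens-per-second figures quoted in this article.
# The rows use different models, so this is not a like-for-like ranking.
BENCHMARKS = {
    "M3, Llama2 7B Q4": {"prompt_tps": 180, "gen_tps": 21},
    "A40, Llama3 8B Q4_K_M": {"prompt_tps": 3200, "gen_tps": 88},
    "A40, Llama3 70B": {"prompt_tps": 239, "gen_tps": 12},
}

if __name__ == "__main__":
    # Hypothetical workload: a 1000-token prompt and a 200-token reply.
    for name, rates in BENCHMARKS.items():
        seconds = response_latency_s(1000, 200, **rates)
        print(f"{name}: about {seconds:.1f} s")
```

For short prompts, generation speed dominates the total; for long documents, prompt processing speed matters much more.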

4. Memory Capacity: The Mind's Workspace

Memory capacity is crucial for holding large LLM models and facilitating their operation.

Apple M3 100GB 10 Cores: The "100GB" in this configuration's name most likely refers to memory bandwidth (100 GB/s) rather than capacity, since the base M3 is sold with 8GB to 24GB of unified memory. That memory is shared between the CPU and GPU, so the model competes with the operating system and other applications for space. This may be why F16 results for Llama2 7B are missing, as its weights alone take roughly 14GB.

NVIDIA A40 48GB: The A40 comes with 48GB of dedicated GPU memory. That is enough for many models, including Llama3 70B in Q4_K_M, but the largest models or higher-precision formats need techniques such as splitting the model across GPUs or offloading layers to system memory.

Summary: The A40 offers more memory, and all of it is dedicated to the GPU. The M3's unified memory avoids copies between CPU and GPU, but its smaller capacity limits it to smaller or more heavily quantized models.
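A rough way to check whether a model fits in a given amount of memory is to multiply the parameter count by the bits stored per weight. The sketch below does that arithmetic. The figure of about 4.8 bits per weight for Q4_K_M and the 2GB overhead allowance (for the KV cache and runtime buffers) are approximations assumed for illustration, not measured values.

```python
def weights_size_gb(n_params_billion, bits_per_weight):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params_billion * bits_per_weight / 8


def fits(n_params_billion, bits_per_weight, memory_gb, overhead_gb=2.0):
    """True if weights plus an assumed overhead allowance fit in memory_gb."""
    return weights_size_gb(n_params_billion, bits_per_weight) + overhead_gb <= memory_gb


if __name__ == "__main__":
    A40_MEMORY_GB = 48  # from the device name used in this article
    cases = [
        ("Llama3 8B, F16", 8, 16),
        ("Llama3 8B, Q4_K_M", 8, 4.8),    # ~4.8 bits/weight is an approximation
        ("Llama3 70B, F16", 70, 16),
        ("Llama3 70B, Q4_K_M", 70, 4.8),
    ]
    for name, params, bits in cases:
        size = weights_size_gb(params, bits)
        verdict = "fits" if fits(params, bits, A40_MEMORY_GB) else "does not fit"
        print(f"{name}: ~{size:.0f} GB of weights, {verdict} in {A40_MEMORY_GB} GB")
```

Long contexts enlarge the KV cache well beyond the small allowance used here, so treat a narrow "fits" result with caution.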

5. Power Consumption: The Energy Efficiency of Thinking

Power consumption is a critical factor, especially in data centers and for users concerned about energy efficiency.

Apple M3 100GB 10 Cores: The M3 is known for its energy efficiency, offering a balance between performance and power consumption. While we don't have specific power consumption data for the M3 in this context, its overall energy efficiency is often praised.

NVIDIA A40 48GB: The A40 is a high-performance data-center GPU rated for up to 300W of board power, far more than an M3-based system draws. Its excellent processing capabilities come at the cost of higher energy consumption.

Summary: The M3 offers a more energy-efficient solution compared to the A40. However, if you prioritize raw processing power and are not concerned about energy costs, the A40 might be the better choice.
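Energy efficiency is easier to compare as energy per generated token than as raw wattage. The sketch below divides power draw by generation speed. It assumes the A40 runs at its 300W rated maximum, which overstates typical draw, and it uses a purely hypothetical 20W placeholder for the M3, because this article has no measured power data for it.

```python
def joules_per_token(power_watts, tokens_per_second):
    """Energy spent per generated token (1 W = 1 J/s)."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return power_watts / tokens_per_second


def kwh_per_million_tokens(power_watts, tokens_per_second):
    """Energy for one million generated tokens, in kWh (1 kWh = 3.6e6 J)."""
    return joules_per_token(power_watts, tokens_per_second) * 1_000_000 / 3_600_000


if __name__ == "__main__":
    A40_RATED_MAX_W = 300.0   # rated maximum; assumes the card runs at its limit
    M3_PLACEHOLDER_W = 20.0   # hypothetical value, replace with your own measurement

    runs = [
        ("A40, Llama3 8B Q4_K_M", A40_RATED_MAX_W, 88),
        ("A40, Llama3 70B", A40_RATED_MAX_W, 12),
        ("M3, Llama2 7B Q4 (placeholder power)", M3_PLACEHOLDER_W, 21),
    ]
    for name, watts, tps in runs:
        print(f"{name}: {joules_per_token(watts, tps):.2f} J/token, "
              f"{kwh_per_million_tokens(watts, tps):.2f} kWh per million tokens")
```

A device that draws more power can still use less energy per token if it is proportionally faster, which is why both numbers are needed.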

6. Cost: The Price of Intelligence

The cost factor is paramount when deciding on your hardware investment.

Apple M3 100GB 10 Cores: The M3 ships only inside complete Macs, so its price covers an entire working system. Its cost per generated token may also benefit from its low power draw.

NVIDIA A40 48GB: The A40 is a data-center card that typically sells for more than an M3-based Mac, and it still needs a host server, which adds to the total. Its high-performance capabilities come with a hefty price tag.

Summary: The A40 is the more expensive option upfront, but its power and performance might be more beneficial for specific tasks. The M3, being less expensive overall, could be the better value for users seeking a balance of performance and cost.
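One way to weigh purchase price against running cost is to express both per million generated tokens. The sketch below spreads the hardware price over an assumed service life and adds electricity. Every input in the example call (price, lifetime, power draw, electricity rate) is a hypothetical placeholder rather than a quoted figure, and the calculation assumes the device generates tokens continuously.

```python
def cost_per_million_tokens(hardware_price, lifetime_hours, power_watts,
                            price_per_kwh, tokens_per_second):
    """Amortized hardware cost plus electricity, per one million generated tokens."""
    if lifetime_hours <= 0 or tokens_per_second <= 0:
        raise ValueError("lifetime_hours and tokens_per_second must be positive")
    hourly_hardware = hardware_price / lifetime_hours       # currency per hour
    hourly_energy = power_watts / 1000 * price_per_kwh       # kW * price per kWh
    tokens_per_hour = tokens_per_second * 3600
    return (hourly_hardware + hourly_energy) / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # All inputs below are hypothetical placeholders; substitute your own numbers.
    example = cost_per_million_tokens(
        hardware_price=5000.0,      # placeholder purchase price
        lifetime_hours=3 * 8760,    # placeholder: three years of continuous use
        power_watts=300.0,          # placeholder power draw
        price_per_kwh=0.15,         # placeholder electricity rate
        tokens_per_second=88,       # Llama3 8B Q4_K_M generation speed from this article
    )
    print(f"Estimated cost per million tokens: {example:.2f}")
```

Idle time raises the real cost, because the hardware is paid for whether or not it is generating tokens.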

7. Quantization: The Art of Compression

Quantization is a technique for compressing LLM models, reducing their size and potentially boosting their speed.

Apple M3 100GB 10 Cores: The M3 excels with quantization, as evident in its strong performance with Llama2 7B in Q8 and Q4. This suggests it handles model compression efficiently, leading to faster inference.

NVIDIA A40 48GB: The A40 supports quantization, but its speed depends heavily on model size. For instance, it achieves excellent speeds with Llama3 8B in Q4_K_M but is far slower with Llama3 70B at the same quantization level.

Summary: Both devices run quantized models well. Because they were benchmarked on different models, the data does not show which one benefits more from quantization. What it does show is that lower-bit formats speed up generation on the M3, where Q4 is faster than Q8.
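The core idea behind quantization can be shown in a few lines. The sketch below uses simple symmetric "absmax" rounding with one scale for the whole list of weights. This is a simplified illustration, not the actual Q8, Q4, or Q4_K_M formats benchmarked above, which use more elaborate block-wise schemes.

```python
def quantize_absmax(weights, bits=8):
    """Map floats to signed integers using a single shared scale factor."""
    if not weights:
        raise ValueError("weights must not be empty")
    qmax = 2 ** (bits - 1) - 1            # 127 for 8 bits, 7 for 4 bits
    peak = max(abs(w) for w in weights)
    scale = peak / qmax if peak > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    original = [0.82, -1.0, 0.31, 0.0, -0.47]
    for bits in (8, 4):
        q, scale = quantize_absmax(original, bits=bits)
        restored = dequantize(q, scale)
        worst = max(abs(a - b) for a, b in zip(original, restored))
        print(f"{bits}-bit: integers={q}, worst-case error={worst:.4f}")
```

Fewer bits mean a smaller model and less data to read per token, at the price of a larger rounding error, which is the trade-off between Q8 and Q4.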

8. Cooling System: Keeping the Brain Cool

Cooling is crucial for maintaining stable device performance and longevity.

Apple M3 100GB 10 Cores: The M3 produces little heat for its performance, so temperatures are easy to keep in check. Sustained heavy workloads can still cause throttling in fanless designs such as the MacBook Air.

NVIDIA A40 48GB: The A40 is a passively cooled data-center card with no fan of its own. It relies on the airflow of a server chassis, so it needs a suitable enclosure to perform well and avoid overheating.

Summary: The M3 needs no extra cooling hardware, while the A40 depends on server-grade airflow to sustain its performance.

Performance Analysis: Deciphering the Results

Apple M3: The Versatile Performer

The Apple M3 is a versatile performer, particularly impressive with smaller LLM models like Llama2 7B. Its strengths lie in its ability to handle quantization efficiently, leading to faster inference and lower memory consumption.

Its unified memory lets the CPU and GPU work from the same pool without copying weights between them, and its energy efficiency can make it a cost-effective choice over time.

However, no Llama3 results are available for the M3, and its integrated GPU has far less throughput than a discrete data-center card, which limits its suitability for very large models.

Ideal use cases:

- Researchers and developers testing and experimenting with smaller LLM models.

- Businesses using LLMs for text generation, translation, and summarization tasks that don't require extreme model size.

- Applications prioritizing energy efficiency and cost-effectiveness.

NVIDIA A40: The Big Model Powerhouse

The NVIDIA A40 delivers very high throughput on Llama3, processing prompts at over 3200 tokens per second on the 8B model, and its 48GB of memory is enough to run the 70B model in Q4_K_M. Its dedicated GPU acceleration provides the horsepower needed for demanding training and inference tasks in complex AI applications.

Ideal use cases:

- Researchers and developers working with large LLM models for tasks like natural language understanding, text generation, and code completion.

- Businesses requiring high-performance AI solutions for large-scale data processing and analysis.

- Applications prioritizing raw processing power and performance.

Choosing Your AI Brain: Making the Right Decision

Ultimately, the choice between the Apple M3 and NVIDIA A40 depends on your specific needs and budget:

- For smaller LLMs, energy efficiency, and versatility: The Apple M3 is a strong contender, offering cost-effectiveness and high performance with quantization.

- For large LLMs, raw processing power, and high throughput: The NVIDIA A40 excels in these areas, providing the horsepower needed for demanding AI workloads.

Remember, your choice should be based on a careful assessment of your LLM use cases, performance requirements, and budget constraints.

FAQ: Frequently Asked Questions About LLMs and Hardware

What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence that excels at understanding and generating human-like text. Think of it as a super-powered version of your smartphone's auto-correct feature, but on a much grander scale.

What is Quantization?

Quantization is a technique used to compress LLM models, making them smaller and potentially faster without sacrificing too much accuracy. Imagine it like converting a high-resolution image to a lower-resolution image – it's still recognizable, but takes up less space.

How do I choose the right device for my LLM?

Consider your specific needs, such as the size of the LLM you'll use, the type of tasks you'll perform, and your budget. Do some research and compare the performance and capabilities of different devices to find the best fit for your project.

Can I use a regular computer to run LLMs?

While you can run some smaller LLMs on a standard computer, for larger models, you'll likely need specialized hardware like a powerful GPU or a dedicated AI server.

Keywords

LLMs, Large Language Models, Apple M3, NVIDIA A40, Token Speed Generation, Model Processing Speed, Memory Capacity, Quantization, Power Consumption, Cost, Performance Analysis, Model Support, Compatibility, Cooling System, Inference, Text Generation, AI, Machine Learning