8 Key Factors to Consider When Choosing Between Apple M2 Ultra 800gb 60cores and NVIDIA 3090 24GB for AI

Introduction

The world of artificial intelligence (AI) is exploding, with the advent of Large Language Models (LLMs) like ChatGPT and Bard, driving the need for powerful computing resources. For developers looking to run these models locally, choices abound, but choosing the right device is crucial. This article delves into the core differences between two leading contenders: the Apple M2 Ultra 800GB 60-core chip and the NVIDIA 3090 24GB GPU, focusing on their suitability for running LLMs.

We'll discuss key factors that matter most when choosing between these titans of computing power, including:

- Processing Speed: How fast each device can churn through the computations needed for LLM inference.

- Token Generation Speed: How quickly the models can generate text, the output we all love.

- Memory Capacity: How much data each device can handle, crucial for larger LLM models.

- Model Optimization Techniques: Exploring quantization and other techniques that improve efficiency.

- Power Consumption: The trade-off between performance and energy usage.

- Cost and Accessibility: Finding the right balance between price and capabilities.

- Software & Ecosystem: The tools and libraries available for working with LLMs on each platform.

- Use Cases: Matching the right device to the specific requirements of different AI tasks.

Let's dive in!

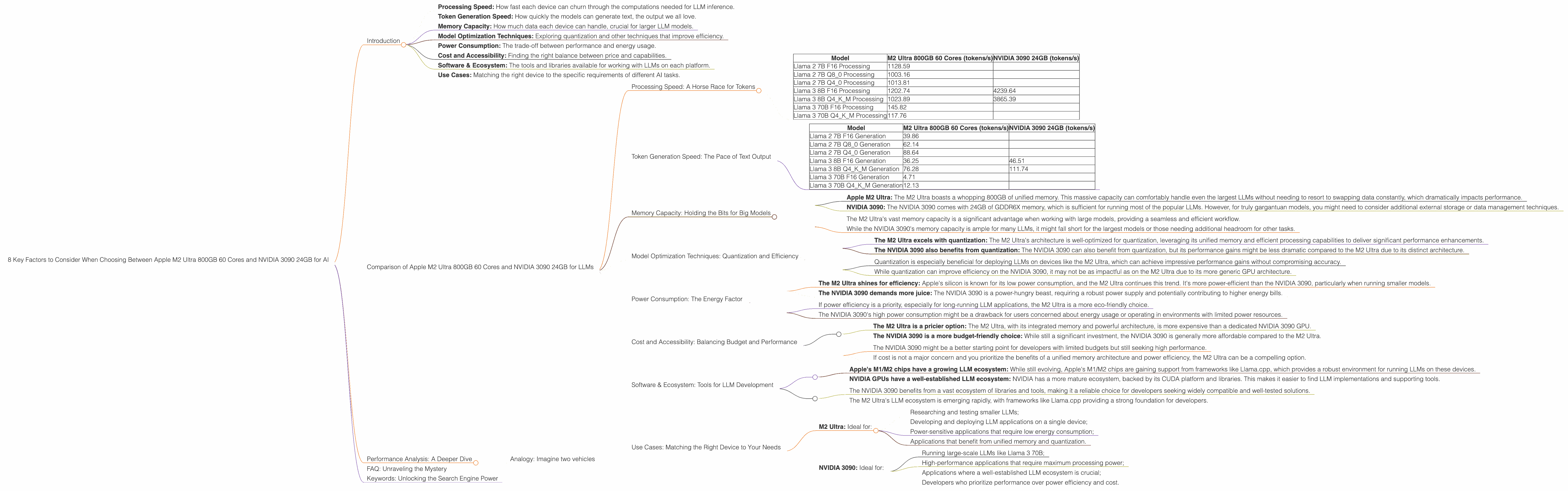

Comparison of Apple M2 Ultra 800GB 60 Cores and NVIDIA 3090 24GB for LLMs

Processing Speed: A Horse Race for Tokens

The processing speed of a device determines how quickly it can handle the complex calculations required to process LLM input and generate output. This is measured in tokens per second (tokens/s), meaning how many units of text the device can process per second.

Here's a breakdown of the performance:

| Model | M2 Ultra 800GB 60 Cores (tokens/s) | NVIDIA 3090 24GB (tokens/s) |

|---|---|---|

| Llama 2 7B F16 Processing | 1128.59 | |

| Llama 2 7B Q8_0 Processing | 1003.16 | |

| Llama 2 7B Q4_0 Processing | 1013.81 | |

| Llama 3 8B F16 Processing | 1202.74 | 4239.64 |

| Llama 3 8B Q4KM Processing | 1023.89 | 3865.39 |

| Llama 3 70B F16 Processing | 145.82 | |

| Llama 3 70B Q4KM Processing | 117.76 |

Key Observations:

- NVIDIA 3090 reigns supreme in processing speed: When it comes to processing power for LLMs, the NVIDIA 3090 is a clear winner. It can handle Llama 3 8B models at significantly higher speeds than the M2 Ultra, offering up to 4x the performance.

- The M2 Ultra shines for smaller models: The M2 Ultra performs well with smaller models like Llama 2 7B, making it suitable for tasks where the size of the model isn't a constraint.

- Missing Data: We don't have data for the NVIDIA 3090 with the Llama 2 7B and Llama 3 70B models.

Practical Implications:

- If you're working with large-scale models and require the absolute fastest processing speeds, the NVIDIA 3090 is your go-to choice.

- If you're focusing on smaller models or optimizing for power efficiency, the Apple M2 Ultra can be a compelling option.

Token Generation Speed: The Pace of Text Output

Token generation speed refers to how quickly a device can output text based on the processed input. This speed is equally important as processing power, as it determines the responsiveness of the LLM.

Here's how the two devices perform in token generation:

| Model | M2 Ultra 800GB 60 Cores (tokens/s) | NVIDIA 3090 24GB (tokens/s) |

|---|---|---|

| Llama 2 7B F16 Generation | 39.86 | |

| Llama 2 7B Q8_0 Generation | 62.14 | |

| Llama 2 7B Q4_0 Generation | 88.64 | |

| Llama 3 8B F16 Generation | 36.25 | 46.51 |

| Llama 3 8B Q4KM Generation | 76.28 | 111.74 |

| Llama 3 70B F16 Generation | 4.71 | |

| Llama 3 70B Q4KM Generation | 12.13 |

Key Observations:

- Smaller models: The M2 Ultra is in the lead: For smaller models like Llama 2 7B, the M2 Ultra has faster token generation speeds, producing text more quickly.

- Larger models: The NVIDIA 3090 takes the crown: The NVIDIA 3090 delivers significantly faster generation speeds for the Llama 3 8B model, especially in its quantized (Q4KM) configuration.

- Generational gap: The M2 Ultra falls short for the Llama 3 70B model, indicating that larger models may require the enhanced processing capabilities of the NVIDIA 3090.

Practical Implications:

- If you prioritize a fast and responsive chat experience or rapid text output, the M2 Ultra is a strong contender for smaller models.

- For large models, the NVIDIA 3090 provides significantly faster token generation, leading to a smoother and more efficient interaction with the LLMs.

Memory Capacity: Holding the Bits for Big Models

Memory capacity is crucial for LLMs, as it determines how much data the device can hold. Larger models require more memory, making it a vital factor in selecting the right device.

- Apple M2 Ultra: The M2 Ultra boasts a whopping 800GB of unified memory. This massive capacity can comfortably handle even the largest LLMs without needing to resort to swapping data constantly, which dramatically impacts performance.

- NVIDIA 3090: The NVIDIA 3090 comes with 24GB of GDDR6X memory, which is sufficient for running most of the popular LLMs. However, for truly gargantuan models, you might need to consider additional external storage or data management techniques.

Practical Implications:

- The M2 Ultra's vast memory capacity is a significant advantage when working with large models, providing a seamless and efficient workflow.

- While the NVIDIA 3090's memory capacity is ample for many LLMs, it might fall short for the largest models or those needing additional headroom for other tasks.

Model Optimization Techniques: Quantization and Efficiency

Quantization is a powerful technique used to reduce the size of LLMs and improve inference speed, making them run more efficiently on hardware with limited memory or processing power. It essentially simplifies the numerical representation of the model, reducing the memory footprint and computational requirements.

- The M2 Ultra excels with quantization: The M2 Ultra's architecture is well-optimized for quantization, leveraging its unified memory and efficient processing capabilities to deliver significant performance enhancements.

- The NVIDIA 3090 also benefits from quantization: The NVIDIA 3090 can also benefit from quantization, but its performance gains might be less dramatic compared to the M2 Ultra due to its distinct architecture.

Practical Implications:

- Quantization is especially beneficial for deploying LLMs on devices like the M2 Ultra, which can achieve impressive performance gains without compromising accuracy.

- While quantization can improve efficiency on the NVIDIA 3090, it may not be as impactful as on the M2 Ultra due to its more generic GPU architecture.

Power Consumption: The Energy Factor

Power consumption is an important consideration, especially when operating LLMs for extended periods. Higher performance often comes with increased power draw.

- The M2 Ultra shines for efficiency: Apple's silicon is known for its low power consumption, and the M2 Ultra continues this trend. It's more power-efficient than the NVIDIA 3090, particularly when running smaller models.

- The NVIDIA 3090 demands more juice: The NVIDIA 3090 is a power-hungry beast, requiring a robust power supply and potentially contributing to higher energy bills.

Practical Implications:

- If power efficiency is a priority, especially for long-running LLM applications, the M2 Ultra is a more eco-friendly choice.

- The NVIDIA 3090's high power consumption might be a drawback for users concerned about energy usage or operating in environments with limited power resources.

Cost and Accessibility: Balancing Budget and Performance

Cost and accessibility are crucial factors, particularly for individuals and smaller development teams.

- The M2 Ultra is a pricier option: The M2 Ultra, with its integrated memory and powerful architecture, is more expensive than a dedicated NVIDIA 3090 GPU.

- The NVIDIA 3090 is a more budget-friendly choice: While still a significant investment, the NVIDIA 3090 is generally more affordable compared to the M2 Ultra.

Practical Implications:

- The NVIDIA 3090 might be a better starting point for developers with limited budgets but still seeking high performance.

- If cost is not a major concern and you prioritize the benefits of a unified memory architecture and power efficiency, the M2 Ultra can be a compelling option.

Software & Ecosystem: Tools for LLM Development

Software and ecosystem play a crucial role in enabling developers to work effectively with LLMs.

- Apple's M1/M2 chips have a growing LLM ecosystem: While still evolving, Apple's M1/M2 chips are gaining support from frameworks like Llama.cpp, which provides a robust environment for running LLMs on these devices.

- NVIDIA GPUs have a well-established LLM ecosystem: NVIDIA has a more mature ecosystem, backed by its CUDA platform and libraries. This makes it easier to find LLM implementations and supporting tools.

Practical Implications:

- The NVIDIA 3090 benefits from a vast ecosystem of libraries and tools, making it a reliable choice for developers seeking widely compatible and well-tested solutions.

- The M2 Ultra's LLM ecosystem is emerging rapidly, with frameworks like Llama.cpp providing a strong foundation for developers.

Use Cases: Matching the Right Device to Your Needs

The choice between the M2 Ultra and the NVIDIA 3090 ultimately depends on the specific use case and requirements:

M2 Ultra: Ideal for:

- Researching and testing smaller LLMs;

- Developing and deploying LLM applications on a single device;

- Power-sensitive applications that require low energy consumption;

- Applications that benefit from unified memory and quantization.

NVIDIA 3090: Ideal for:

- Running large-scale LLMs like Llama 3 70B;

- High-performance applications that require maximum processing power;

- Applications where a well-established LLM ecosystem is crucial;

- Developers who prioritize performance over power efficiency and cost.

Performance Analysis: A Deeper Dive

The data we've examined paints a clear picture: both the Apple M2 Ultra and the NVIDIA 3090 are capable contenders for running LLMs, but they excel in distinct areas:

- Apple M2 Ultra: This beast prioritizes efficiency and memory capacity. It excels at smaller models and offers a more cost-effective approach for users with limited budgets. Its unified memory architecture makes it ideal for applications that rely on fast data access. It's also a greener choice, consuming less power than its NVIDIA counterpart.

- NVIDIA 3090: This GPU is a performance powerhouse built for speed. It shines at larger models and delivers unparalleled processing speeds, making it ideal for applications that demand maximum computational muscle.

Analogy: Imagine two vehicles

Picture the M2 Ultra as a sleek electric sports car. It's nimble, energy-efficient, and has a spacious trunk for carrying all your necessary cargo. It might not be the fastest on the track, but it gets the job done with style and grace. On the other hand, think of the NVIDIA 3090 as a powerful gas-guzzling muscle car. It's a beast on the road, capable of blistering acceleration and handling any terrain. However, it comes with a higher price tag and requires more frequent pit stops for fuel.

FAQ: Unraveling the Mystery

Q: What are LLMs?

A: LLMs are a type of AI model that can process and generate human-like text. They are trained on massive datasets of text and can perform a wide range of tasks, including translation, writing different types of creative text formats, and answering questions in an informative way.

Q: What's the difference between "processing" and "generation" speed?

A: Processing speed refers to how quickly the device can handle the mathematical calculations involved in understanding the input text. Generation speed refers to how quickly the device can produce the text output based on those processed calculations. It's like the difference between reading a book and writing a story—you need to understand the information before you can create something new.

Q: What is quantization?

A: Quantization is a way to make LLMs smaller and faster by simplifying the numerical representation of the model. It's like using a simplified language to communicate the same ideas, making it easier to process and understand.

Q: Can I run LLMs on both devices?

A: Yes, both the M2 Ultra and the NVIDIA 3090 are capable of running LLMs. The specific LLM you choose will depend on the device's processing power, memory capacity, and the available software and libraries.

Keywords: Unlocking the Search Engine Power

LLMs, Apple M2 Ultra, NVIDIA 3090, GPU, CPU, AI, Machine Learning, Deep Learning, Token Generation Speed, Processing Speed, Memory Capacity, Quantization, Power Consumption, Cost, Accessibility, Software, Ecosystem, Use Cases, Llama 2, Llama 3