8 Key Factors to Consider When Choosing Between Apple M2 Pro 200gb 16cores and NVIDIA RTX A6000 48GB for AI

Introduction

The world of artificial intelligence (AI) is rapidly evolving, and Large Language Models (LLMs) are at the forefront of this revolution. LLMs are capable of performing complex tasks like generating human-quality text, translating languages, and writing different creative text formats, making them valuable tools for researchers, developers, and businesses. However, running these models efficiently requires powerful hardware.

This article compares two popular devices, the Apple M2 Pro 200GB 16-core and NVIDIA RTX A6000 48GB, commonly used for running LLMs locally, to help you identify the best option for your specific needs. We will analyze their strengths and weaknesses, focusing on factors like processing speed, memory bandwidth, and cost, to guide you in making an informed choice.

Performance Comparison of Apple M2 Pro 200GB 16cores and NVIDIA RTX A6000 48GB

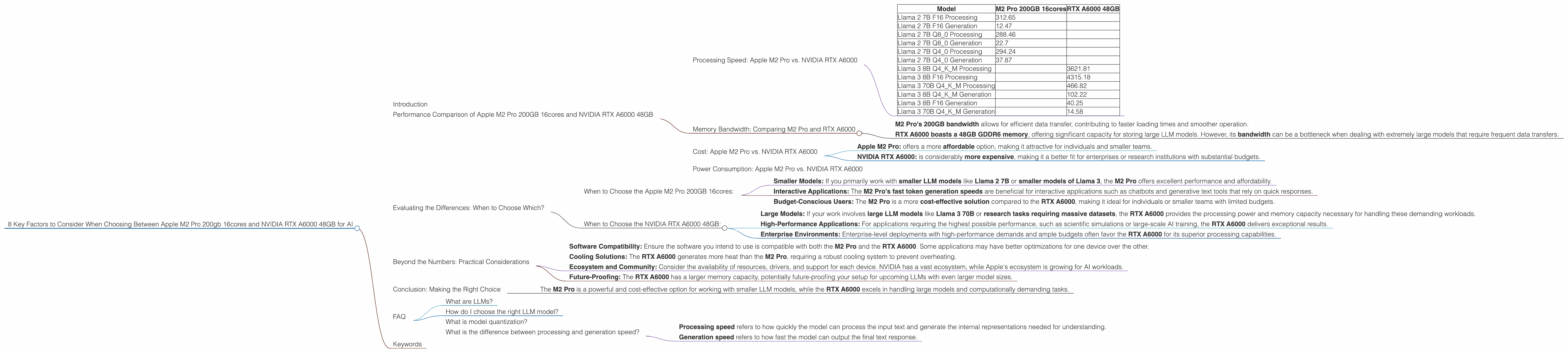

Processing Speed: Apple M2 Pro vs. NVIDIA RTX A6000

Let's start with processing power, a crucial factor for running demanding LLM models. The M2 Pro boasts 16 CPU cores and a 200GB bandwidth, while the RTX A6000 features a 48GB GPU with impressive processing capabilities. Both devices offer different strengths:

- M2 Pro: excels in fast token generation, particularly when using quantized models, which reduce the model's size and memory footprint.

- RTX A6000: shines in processing large models like Llama 3 70B, leveraging its dedicated GPU for high-speed computation.

Here's a breakdown of token speeds (tokens/second) for different LLM models and quantization levels:

| Model | M2 Pro 200GB 16cores | RTX A6000 48GB |

|---|---|---|

| Llama 2 7B F16 Processing | 312.65 | |

| Llama 2 7B F16 Generation | 12.47 | |

| Llama 2 7B Q8_0 Processing | 288.46 | |

| Llama 2 7B Q8_0 Generation | 22.7 | |

| Llama 2 7B Q4_0 Processing | 294.24 | |

| Llama 2 7B Q4_0 Generation | 37.87 | |

| Llama 3 8B Q4KM Processing | 3621.81 | |

| Llama 3 8B F16 Processing | 4315.18 | |

| Llama 3 70B Q4KM Processing | 466.82 | |

| Llama 3 8B Q4KM Generation | 102.22 | |

| Llama 3 8B F16 Generation | 40.25 | |

| Llama 3 70B Q4KM Generation | 14.58 |

Observations:

- The M2 Pro outperforms the RTX A6000 in token generation for smaller models like Llama 2 7B when using quantized formats (Q80 and Q40), indicating its potential for faster interactive AI experiences.

- However, the RTX A6000 takes the lead in processing large models, like Llama 3 70B and 8B, showcasing its superior power for handling computationally intensive tasks.

Memory Bandwidth: Comparing M2 Pro and RTX A6000

Memory bandwidth is crucial for transferring data between the CPU/GPU and RAM, impacting the overall performance of LLMs.

M2 Pro's 200GB bandwidth allows for efficient data transfer, contributing to faster loading times and smoother operation.

RTX A6000 boasts a 48GB GDDR6 memory, offering significant capacity for storing large LLM models. However, its bandwidth can be a bottleneck when dealing with extremely large models that require frequent data transfers.

Cost: Apple M2 Pro vs. NVIDIA RTX A6000

Cost is a significant consideration for choosing hardware. The price differences between these two devices are considerable:

Apple M2 Pro: offers a more affordable option, making it attractive for individuals and smaller teams.

NVIDIA RTX A6000: is considerably more expensive, making it a better fit for enterprises or research institutions with substantial budgets.

Power Consumption: Apple M2 Pro vs. NVIDIA RTX A6000

Power consumption is a significant factor for long-term use and energy efficiency. The M2 Pro is known for its low power consumption, making it an energy-efficient choice.

The RTX A6000, on the other hand, consumes more power, especially when processing demanding workloads. While its performance capabilities are impressive, it might lead to higher energy bills and heat generation.

Evaluating the Differences: When to Choose Which?

Based on the above comparisons, here's a breakdown of when to choose each device:

When to Choose the Apple M2 Pro 200GB 16cores:

Smaller Models: If you primarily work with smaller LLM models like Llama 2 7B or smaller models of Llama 3, the M2 Pro offers excellent performance and affordability.

Interactive Applications: The M2 Pro's fast token generation speeds are beneficial for interactive applications such as chatbots and generative text tools that rely on quick responses.

Budget-Conscious Users: The M2 Pro is a more cost-effective solution compared to the RTX A6000, making it ideal for individuals or smaller teams with limited budgets.

When to Choose the NVIDIA RTX A6000 48GB:

Large Models: If your work involves large LLM models like Llama 3 70B or research tasks requiring massive datasets, the RTX A6000 provides the processing power and memory capacity necessary for handling these demanding workloads.

High-Performance Applications: For applications requiring the highest possible performance, such as scientific simulations or large-scale AI training, the RTX A6000 delivers exceptional results.

Enterprise Environments: Enterprise-level deployments with high-performance demands and ample budgets often favor the RTX A6000 for its superior processing capabilities.

Beyond the Numbers: Practical Considerations

While the performance benchmarks provide a good starting point, it's essential to consider other factors:

Software Compatibility: Ensure the software you intend to use is compatible with both the M2 Pro and the RTX A6000. Some applications may have better optimizations for one device over the other.

Cooling Solutions: The RTX A6000 generates more heat than the M2 Pro, requiring a robust cooling system to prevent overheating.

Ecosystem and Community: Consider the availability of resources, drivers, and support for each device. NVIDIA has a vast ecosystem, while Apple's ecosystem is growing for AI workloads.

Future-Proofing: The RTX A6000 has a larger memory capacity, potentially future-proofing your setup for upcoming LLMs with even larger model sizes.

Conclusion: Making the Right Choice

The choice between the Apple M2 Pro 200GB 16cores and NVIDIA RTX A6000 48GB ultimately depends on your specific needs and budget.

- The M2 Pro is a powerful and cost-effective option for working with smaller LLM models, while the RTX A6000 excels in handling large models and computationally demanding tasks.

By carefully analyzing your LLM workload, considering your budget, and evaluating other practical aspects beyond just performance numbers, you can confidently select the ideal device to power your AI endeavors.

FAQ

What are LLMs?

LLMs are a specific type of AI model designed to understand and generate human-like text. They are trained on vast amounts of text data and can perform various tasks, including language translation, text summarization, and creative writing.

How do I choose the right LLM model?

The choice of LLM model depends on your specific use case. Consider factors like model size, training data, and performance characteristics to select the best option for your task.

What is model quantization?

Quantization is a technique that reduces the size and memory footprint of LLM models without significantly impacting their performance. It involves representing model parameters using fewer bits, making them more efficient to run on devices with limited resources.

What is the difference between processing and generation speed?

Processing speed refers to how quickly the model can process the input text and generate the internal representations needed for understanding.

Generation speed refers to how fast the model can output the final text response.

Keywords

LLMs, Large Language Models, Apple M2 Pro, NVIDIA RTX A6000, GPU, CPU, Token Speed, Processing Power, Memory Bandwidth, Cost, Power Consumption, Performance Comparison, AI, Machine Learning, Llama 2, Llama 3, Quantization, F16, Q4KM, Q8_0, Generation, Processing, AI Hardware, AI Devices, LLMs on Local Devices, AI Development, AI Research.