8 Key Factors to Consider When Choosing Between the Apple M2 Pro (200 GB/s, 16-core GPU) and the NVIDIA A100 SXM 80GB for AI

Introduction

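The world of large language models (LLMs) is exploding with new models and capabilities, pushing the boundaries of what's possible with artificial intelligence. But running these powerful LLMs yourself requires serious hardware. Two very different options for AI development and exploration are the Apple M2 Pro in its 16-core GPU configuration, with 200 GB/s of unified memory bandwidth (the "200GB" in its name is bandwidth, not capacity; the M2 Pro supports at most 32GB of unified memory), and the NVIDIA A100 SXM, a datacenter GPU with 80GB of HBM2e memory and about 2 TB/s of memory bandwidth.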

This article will guide you through the key factors to consider when choosing between these two powerful devices for your AI projects. We'll delve into their performance characteristics, explore their strengths and weaknesses, and provide practical recommendations for different use cases.

Performance Analysis: Apple M2 Pro vs. NVIDIA A100 SXM 80GB

Let's dive into the nitty-gritty of how these processors perform with LLMs.

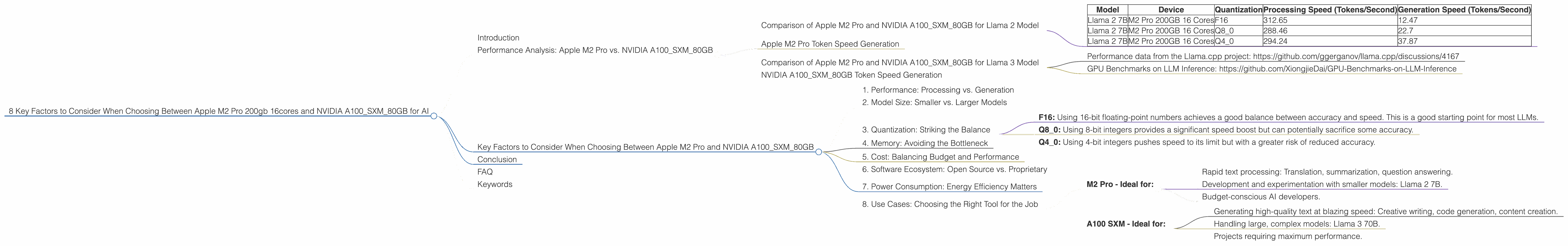

Comparison of Apple M2 Pro and NVIDIA A100 SXM 80GB for the Llama 2 Model

We'll start with the popular Llama 2 7B model on the Apple M2 Pro (16-core GPU, 200 GB/s memory bandwidth).

Data Sources:

- Performance data from the Llama.cpp project: https://github.com/ggerganov/llama.cpp/discussions/4167

- GPU Benchmarks on LLM Inference: https://github.com/XiongjieDai/GPU-Benchmarks-on-LLM-Inference

Here's what we've found:

| Model | Device | Format | Prompt Processing (tokens/s) | Token Generation (tokens/s) |
|---|---|---|---|---|
| Llama 2 7B | M2 Pro (200 GB/s, 16-core GPU) | F16 | 312.65 | 12.47 |
| Llama 2 7B | M2 Pro (200 GB/s, 16-core GPU) | Q8_0 | 288.46 | 22.7 |
| Llama 2 7B | M2 Pro (200 GB/s, 16-core GPU) | Q4_0 | 294.24 | 37.87 |

Note: The NVIDIA A100 SXM 80GB data for Llama 2 is not available in the sources we've used.

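Apple M2 Pro: Prompt Processing vs. Token Generation

The M2 Pro processes prompts quickly, at roughly 290 to 310 tokens/s in all three formats. F16 is the unquantized half-precision baseline, while Q8_0 and Q4_0 are quantized. Token generation is much slower, ranging from 12.47 tokens/s at F16 to 37.87 tokens/s at Q4_0. That gap is expected on any hardware. Prompt processing handles many tokens in parallel and is limited mainly by compute. Generation, by contrast, produces one token at a time and must stream essentially all of the model's weights from memory for each token, so it is limited by the M2 Pro's 200 GB/s of memory bandwidth. This is also why smaller quantized formats generate faster.

The sources give no A100 prompt-processing figures, so these numbers do not show that the M2 Pro outperforms the A100 at processing. What they do show is that the M2 Pro is most comfortable with read-heavy tasks such as summarization and question answering over long inputs. It is slower for generation-heavy tasks like creative writing, where the A100's much higher memory bandwidth should give it a clear advantage.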
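A back-of-the-envelope roofline estimate helps explain why generation on the M2 Pro is so much slower than prompt processing. If every generated token has to read all of the weights once, generation can be no faster than memory bandwidth divided by model size. The sketch below rests on two assumptions of ours, not figures from the benchmark sources: about 6.74 billion parameters for Llama 2 7B, and approximate bits-per-weight values for each llama.cpp format (including block scales). It also ignores the KV cache and compute time, so the ceiling is optimistic.

```python
# Bandwidth-bound ceiling for single-stream token generation on the M2 Pro.
# Assumption: each generated token reads every weight once, so
#   max tokens/s ~= memory bandwidth / model size in bytes.
# Parameter count and bits-per-weight values are approximations.

M2_PRO_BANDWIDTH_GBPS = 200.0  # GB/s, Apple's spec for the M2 Pro
LLAMA2_7B_PARAMS = 6.74e9      # approximate parameter count (assumption)

# Approximate bits per weight for each llama.cpp format, including block scales.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds (tokens/s) from the Llama 2 7B table.
MEASURED_TG = {"F16": 12.47, "Q8_0": 22.7, "Q4_0": 37.87}


def model_size_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def max_tokens_per_s(bandwidth_gbps: float, size_gb: float) -> float:
    """Upper bound on generation speed if memory bandwidth is the only limit."""
    return bandwidth_gbps / size_gb


if __name__ == "__main__":
    for fmt, bpw in BITS_PER_WEIGHT.items():
        size = model_size_gb(LLAMA2_7B_PARAMS, bpw)
        ceiling = max_tokens_per_s(M2_PRO_BANDWIDTH_GBPS, size)
        measured = MEASURED_TG[fmt]
        print(f"{fmt:5s} size~{size:5.2f} GB  ceiling~{ceiling:5.1f} t/s  "
              f"measured={measured:5.2f} t/s ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the measured speeds land at roughly 72%, 81%, and 84% of the ceiling for Q4_0, Q8_0, and F16 respectively. That is consistent with generation being limited by memory bandwidth rather than compute.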

Comparison of Apple M2 Pro and NVIDIA A100 SXM 80GB for the Llama 3 Model

Let's move on to the Llama 3 model.

| Model | Device | Format | Token Generation (tokens/s) |
|---|---|---|---|
| Llama 3 8B | A100 SXM 80GB | Q4_K_M | 133.38 |
| Llama 3 8B | A100 SXM 80GB | F16 | 53.18 |
| Llama 3 70B | A100 SXM 80GB | Q4_K_M | 24.33 |

Note: Data for the Llama 3 model on the M2 Pro is not available from our sources.

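NVIDIA A100 SXM 80GB: Token Generation

The NVIDIA A100 SXM generates 133.38 tokens/s with Llama 3 8B at Q4_K_M and 53.18 tokens/s at F16. It still produces 24.33 tokens/s with the much larger Llama 3 70B at Q4_K_M. That 8B result is roughly 3.5 times the M2 Pro's best Llama 2 7B result (37.87 tokens/s at Q4_0), but the models and quantization formats differ, so treat the comparison as indicative rather than like-for-like.

Output quality depends on the model and quantization, not on the device running it. The same model and format should produce output of similar quality on either machine, so the A100's speed does not come at the expense of accuracy. That makes it a compelling choice for generation-heavy work such as creative writing, code generation, and long-form summaries.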
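Because the M2 Pro and A100 rows use different models and formats, one rough way to put them on a common footing is to ask how much memory bandwidth each measured generation speed implies. The sketch below multiplies tokens/s by the approximate gigabytes of weights read per token. Parameter counts (8.03B for Llama 3 8B, 70.6B for Llama 3 70B) and the ~4.85 bits per weight used for Q4_K_M are our assumptions. The 2,039 GB/s peak is NVIDIA's spec for the A100 80GB SXM.

```python
# Effective memory bandwidth implied by each measured generation speed:
#   effective GB/s ~= tokens/s * GB of weights read per token.
# Parameter counts and bits per weight are approximations (assumptions);
# Q4_K_M mixes Q4_K and Q6_K tensors, so ~4.85 bits/weight is an estimate.
from dataclasses import dataclass


@dataclass
class Run:
    device: str
    model: str
    params: float           # approximate parameter count
    bits_per_weight: float  # approximate, including block scales
    tokens_per_s: float     # measured generation speed from the tables
    peak_gbps: float        # spec memory bandwidth in GB/s


RUNS = [
    Run("A100 SXM 80GB", "Llama 3 8B Q4_K_M", 8.03e9, 4.85, 133.38, 2039.0),
    Run("A100 SXM 80GB", "Llama 3 8B F16", 8.03e9, 16.0, 53.18, 2039.0),
    Run("A100 SXM 80GB", "Llama 3 70B Q4_K_M", 70.6e9, 4.85, 24.33, 2039.0),
    Run("M2 Pro", "Llama 2 7B Q4_0", 6.74e9, 4.5, 37.87, 200.0),
]


def effective_gbps(run: Run) -> float:
    """GB/s of weights streamed, assuming every weight is read once per token."""
    size_gb = run.params * run.bits_per_weight / 8 / 1e9
    return run.tokens_per_s * size_gb


if __name__ == "__main__":
    for run in RUNS:
        eff = effective_gbps(run)
        print(f"{run.device:14s} {run.model:20s} ~{eff:7.1f} GB/s "
              f"({eff / run.peak_gbps:.0%} of peak)")
```

Under these assumptions the A100 streams roughly 650 GB/s with the 8B Q4_K_M model but about 1,040 GB/s with the 70B model. Small models therefore leave much of its bandwidth idle, while the M2 Pro runs closer to its much lower ceiling.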

Key Factors to Consider When Choosing Between Apple M2 Pro and NVIDIA A100 SXM 80GB

Now that we've examined their performance characteristics, let's delve into the key factors to consider when choosing between the Apple M2 Pro and the NVIDIA A100 SXM for running your LLMs:

1. Performance: Processing vs. Generation

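M2 Pro - Fast Prompt Processing, Slower Generation: The M2 Pro reads prompts at roughly 290 to 310 tokens/s with Llama 2 7B but generates only 12 to 38 tokens/s. It is therefore best suited to tasks with long inputs and short outputs, such as summarization and question answering over documents.

A100 SXM - Generation Speed Demon: The A100 SXM generates 133.38 tokens/s with Llama 3 8B at Q4_K_M, making it the stronger choice for generation-heavy work such as chat, creative writing, and code generation. The sources above report no A100 prompt-processing figures, but given its far greater compute it is unlikely to trail the M2 Pro there.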
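To see how prompt processing and generation combine in practice, the sketch below estimates one request's latency on the M2 Pro from the Llama 2 7B Q4_0 rates in the table. The two workloads and their token counts are hypothetical examples, not benchmark data. The model also ignores batching, caching, and warm-up, assuming the two phases simply run back to back.

```python
# Rough end-to-end latency for one request on the M2 Pro (Llama 2 7B, Q4_0).
# Assumption: prompt processing and generation run back to back at the
# measured table rates; batching, caching, and warm-up are ignored.

PP_TOKENS_PER_S = 294.24  # prompt processing, from the Llama 2 7B table
TG_TOKENS_PER_S = 37.87   # token generation, from the same table


def request_seconds(prompt_tokens: int, output_tokens: int,
                    pp_rate: float = PP_TOKENS_PER_S,
                    tg_rate: float = TG_TOKENS_PER_S) -> tuple[float, float]:
    """Return (prompt_seconds, generation_seconds) for one request."""
    return prompt_tokens / pp_rate, output_tokens / tg_rate


# Hypothetical workloads: (prompt tokens, output tokens).
WORKLOADS = {
    "summarize a long document": (2000, 200),
    "write a short story": (100, 800),
}

if __name__ == "__main__":
    for name, (prompt, output) in WORKLOADS.items():
        pp_s, tg_s = request_seconds(prompt, output)
        total = pp_s + tg_s
        print(f"{name:26s} prompt {pp_s:4.1f}s + generation {tg_s:4.1f}s "
              f"= {total:4.1f}s ({tg_s / total:.0%} generating)")
```

Even in the read-heavy case, generation takes about 5.3 of roughly 12 seconds. The 37.87 tokens/s generation rate therefore still matters for summarization, and it dominates almost completely for the story.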

2. Model Size: Smaller vs. Larger Models

M2 Pro - Ideal for Smaller & Mid-Sized Models: The M2 Pro provides a good balance for models like Llama 2 7B. With at most 32GB of unified memory, it can also run 13B-class models when quantized, but a 70B model does not fit even at 4-bit precision.

A100 SXM - Built for the Big Leagues: The A100 SXM's 80GB of memory holds large models like Llama 3 70B at Q4_K_M (24.33 tokens/s in the table above). The same model at F16 needs roughly 141GB for its weights alone, so it would not fit on a single A100.

3. Quantization: Striking the Balance

Quantization is a technique to reduce the size of a neural network by storing its weights (and, in some implementations, activations) with fewer bits. Fewer bits mean fewer bytes to read for every generated token, which speeds up generation, but it can reduce accuracy.

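- F16: 16-bit floating point, the unquantized baseline for these models. It offers the best accuracy of the three but is the largest and generates most slowly (12.47 tokens/s for Llama 2 7B on the M2 Pro). It is a useful reference point when judging how much a quantized format costs in quality.

- Q8_0: 8-bit integers stored in blocks of 32 weights, each block with its own scale (about 8.5 bits per weight). It roughly halves the model size relative to F16 and nearly doubles generation speed on the M2 Pro (22.7 tokens/s), usually with very little loss in accuracy.

- Q4_0: 4-bit integers in blocks of 32 weights with one scale per block (about 4.5 bits per weight). It gives the fastest generation on the M2 Pro (37.87 tokens/s) but carries a greater risk of reduced accuracy.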
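The block-wise idea behind Q8_0 can be sketched in a few lines. The sketch below is a simplified illustration modeled on llama.cpp's Q8_0 format, not its exact memory layout or code. Each block of 32 weights gets one scale (the block's largest absolute value divided by 127), and every weight is stored as the nearest integer multiple of that scale. Rounding details differ slightly: Python's `round()` sends ties to the even integer.

```python
# A simplified sketch of Q8_0-style block quantization, modeled on the idea
# behind llama.cpp's Q8_0 format (not its exact memory layout):
# each block of 32 weights stores one scale plus 32 signed 8-bit integers.
import random

BLOCK_SIZE = 32


def quantize_block(block: list[float]) -> tuple[float, list[int]]:
    """Symmetric absmax quantization of one block to integers in [-127, 127]."""
    amax = max(abs(x) for x in block)
    scale = amax / 127.0
    if scale == 0.0:
        return 0.0, [0] * len(block)
    return scale, [round(x / scale) for x in block]


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate weights by multiplying each integer by the scale."""
    return [scale * v for v in q]


def quantize(weights: list[float]) -> list[tuple[float, list[int]]]:
    """Split weights into blocks of BLOCK_SIZE and quantize each independently."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


if __name__ == "__main__":
    rng = random.Random(0)
    weights = [rng.gauss(0.0, 0.02) for _ in range(4 * BLOCK_SIZE)]
    blocks = quantize(weights)
    restored = [x for s, q in blocks for x in dequantize_block(s, q)]
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    # 32 x 8-bit values + one 16-bit scale = 34 bytes per 32 weights
    print(f"blocks={len(blocks)}  max abs error={max_err:.2e}  "
          f"bits/weight={(BLOCK_SIZE * 8 + 16) / BLOCK_SIZE}")
```

Because every block carries its own scale, the rounding error for each weight is at most half that block's scale. One outlier therefore only coarsens the 32 weights around it rather than the whole tensor.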

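M2 Pro - Versatility in Quantization: llama.cpp's Metal backend runs all of these formats on the M2 Pro, letting you trade accuracy against speed and memory use.

A100 SXM - Strong Results with Q4_K_M: Q4_K_M is one of llama.cpp's "k-quant" formats, not a sparsity technique. Weights are grouped into super-blocks of 256, split into smaller sub-blocks that each get their own scale. The "M" (medium) variant keeps some of the more sensitive tensors at higher precision (Q6_K). Q4_K_M usually preserves quality better than Q4_0 at a similar size. In the sources above it delivers the A100's fastest result (133.38 tokens/s for Llama 3 8B) as well as its only 70B result. The format is not specific to NVIDIA hardware and runs on the M2 Pro as well.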
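The bits-per-weight figures quoted for these formats follow directly from their block layouts: bytes per block times 8, divided by weights per block. The byte counts below are our reading of ggml's block structures for each type and should be treated as an assumption. Q4_K_M is a per-tensor mix of Q4_K and Q6_K, so its average lands between their values (a little under 5 bits per weight for 7B and 8B models).

```python
# Bits per weight implied by llama.cpp block layouts:
#   bits/weight = bytes per block * 8 / weights per block.
# Byte counts reflect our reading of ggml's block structs (assumption);
# F16 has no block overhead.

LAYOUTS = {
    # name: (weights per block, bytes per block)
    "F16": (1, 2),                     # one half-precision value
    "Q8_0": (32, 2 + 32),              # fp16 scale + 32 int8 values
    "Q4_0": (32, 2 + 16),              # fp16 scale + 32 packed 4-bit values
    "Q4_K": (256, 2 + 2 + 12 + 128),   # fp16 scale and min, packed 6-bit
                                       # sub-block scales/mins, 4-bit values
    "Q6_K": (256, 128 + 64 + 16 + 2),  # low 4 bits, high 2 bits,
                                       # int8 sub-block scales, fp16 scale
}


def bits_per_weight(name: str) -> float:
    """Average storage cost per weight, including per-block metadata."""
    weights, nbytes = LAYOUTS[name]
    return nbytes * 8 / weights


if __name__ == "__main__":
    for name in LAYOUTS:
        print(f"{name:5s} {bits_per_weight(name):.4f} bits/weight")
```

Q4_K and Q4_0 come out at the same 4.5 bits per weight. The k-quant's advantage lies in how it spends those bits (finer sub-block scales and minimums), not in extra storage.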

4. Memory: Avoiding the Bottleneck

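M2 Pro - Up to 32GB of Unified Memory: The "200GB" in this configuration's name refers to memory bandwidth (200 GB/s), not capacity. The M2 Pro supports at most 32GB of unified memory, shared by the CPU and GPU. That is ample for 7B and 8B models but too little for 70B-class models, even at 4-bit precision.

A100 SXM - 80GB: Built for the Big Guys: The A100 SXM's 80GB of HBM2e memory holds Llama 3 70B at Q4_K_M with room left for the KV cache. Its roughly 2 TB/s of bandwidth is about ten times the M2 Pro's, which is the main reason it generates tokens so much faster.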
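Whether a model fits comes down to its weights plus the KV cache, which grows with context length. The sketch below assumes the M2 Pro's maximum configuration of 32GB of unified memory (its 200 GB/s figure is bandwidth, not capacity) and the A100's 80GB. It uses the published Llama 3 architecture shapes (32 or 80 layers, 8 KV heads, head dimension 128) with approximate parameter counts. The ~4.85 bits per weight for Q4_K_M and the 10% of memory held back for the OS and runtime are hypothetical assumptions, not measured values.

```python
# Rough check of whether a model plus its KV cache fits in device memory.
# Assumptions: weight size = params * bits / 8; KV cache stored in F16;
# a hypothetical 10% of memory is held back for the OS, runtime, and buffers.
from dataclasses import dataclass


@dataclass
class ModelSpec:
    name: str
    params: float  # approximate parameter count
    n_layers: int
    n_kv_heads: int
    head_dim: int


LLAMA3_8B = ModelSpec("Llama 3 8B", 8.03e9, 32, 8, 128)
LLAMA3_70B = ModelSpec("Llama 3 70B", 70.6e9, 80, 8, 128)

DEVICES_GB = {"M2 Pro (max 32GB)": 32.0, "A100 SXM 80GB": 80.0}


def weights_gb(m: ModelSpec, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return m.params * bits_per_weight / 8 / 1e9


def kv_cache_gb(m: ModelSpec, context: int, bytes_per_elem: int = 2) -> float:
    """KV cache size: a K and a V vector per layer, per KV head, per token."""
    return 2 * m.n_layers * m.n_kv_heads * m.head_dim * context * bytes_per_elem / 1e9


def fits(total_gb: float, device_gb: float, usable_fraction: float = 0.9) -> bool:
    """True if total_gb fits in the assumed usable share of device memory."""
    return total_gb <= device_gb * usable_fraction


if __name__ == "__main__":
    context = 8192
    for model in (LLAMA3_8B, LLAMA3_70B):
        for fmt, bpw in (("F16", 16.0), ("Q4_K_M", 4.85)):  # 4.85 is an estimate
            total = weights_gb(model, bpw) + kv_cache_gb(model, context)
            verdicts = ", ".join(
                f"{dev}: {'fits' if fits(total, cap) else 'too big'}"
                for dev, cap in DEVICES_GB.items())
            print(f"{model.name} {fmt:6s} ~{total:6.1f} GB -> {verdicts}")
```

With an 8,192-token context, Llama 3 70B at Q4_K_M needs roughly 45GB under these assumptions. That fits on the A100 but not on a 32GB M2 Pro, while the same model at F16 (about 141GB of weights) fits on neither.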

5. Cost: Balancing Budget and Performance

M2 Pro - Budget-Friendly Choice: The M2 Pro ships in consumer machines such as the Mac mini and MacBook Pro and delivers a solid balance of performance and affordability. It's an excellent option for developers who are starting their LLM journey or working with smaller models.

A100 SXM - Premium Performance: The A100 SXM is a datacenter GPU that is usually bought as part of a server or rented by the hour from cloud providers. It comes with a premium price tag, but it's well worth it if you need fast generation or large models.
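A simple way to weigh price against performance is to convert measured throughput into a cost per million generated tokens. In the sketch below, `HOURLY_COST_USD` is a hypothetical placeholder rather than a quoted price. Substitute your own cloud rate or an amortized hardware cost. The throughputs are the A100's single-stream Llama 3 results from the table.

```python
# Converting measured throughput into a cost per million generated tokens.
# HOURLY_COST_USD is a hypothetical placeholder, not a quoted price:
# substitute your own cloud rate or amortized hardware cost.

HOURLY_COST_USD = 2.0  # hypothetical placeholder


def usd_per_million_tokens(hourly_cost_usd: float, tokens_per_s: float) -> float:
    """Cost of generating one million tokens at a steady single-stream rate."""
    tokens_per_hour = tokens_per_s * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Measured A100 SXM generation speeds from the Llama 3 table.
    for label, tps in (("Llama 3 8B Q4_K_M", 133.38), ("Llama 3 70B Q4_K_M", 24.33)):
        cost = usd_per_million_tokens(HOURLY_COST_USD, tps)
        print(f"{label}: ${cost:.2f} per million tokens")
```

Single-stream figures are a pessimistic case for a datacenter GPU. Serving setups that batch many requests together can raise aggregate throughput, and lower the cost per token, well beyond these numbers.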

6. Software Ecosystem: Metal vs. CUDA

M2 Pro - Metal and Open-Source Tools: Apple's GPU is programmed through Metal. Open-source projects such as llama.cpp (with its Metal backend), Apple's MLX, and PyTorch's MPS backend run well on it. Many LLM serving and training tools target CUDA first, however, so compatibility is narrower than on NVIDIA hardware.

A100 SXM - Optimized for NVIDIA's Ecosystem: The A100 SXM is tightly integrated with NVIDIA's CUDA stack, including cuDNN and NVIDIA's own TensorRT-LLM. Major frameworks such as PyTorch, TensorFlow, and JAX support it first-class.

7. Power Consumption: Energy Efficiency Matters

M2 Pro - Lower Power Consumption: The M2 Pro is known for its energy efficiency, making it a sensible choice for environmentally conscious users.

A100 SXM - High Power Consumption: The A100 SXM is a power-hungry beast, with a maximum TDP of 400 W for the GPU alone, before counting the host server.
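Peak draw is only half of the efficiency picture. Energy per generated token is average power divided by tokens per second, which gives joules per token. The sketch below uses the A100 SXM's 400 W maximum TDP as a worst-case assumption for the GPU alone; real draw during inference is usually lower and should be measured. The sources give no M2 Pro power figure, so that side is left for you to fill in from your own measurements.

```python
# Energy per generated token = average power draw (W) / generation speed (tokens/s),
# which gives joules per token. The A100 figure uses its 400 W maximum TDP
# as a worst-case assumption; actual draw during inference should be measured.

def joules_per_token(power_watts: float, tokens_per_s: float) -> float:
    """Energy spent per generated token, in joules."""
    if tokens_per_s <= 0:
        raise ValueError("tokens_per_s must be positive")
    return power_watts / tokens_per_s


A100_MAX_TDP_W = 400.0

if __name__ == "__main__":
    # Measured A100 SXM generation speeds from the Llama 3 table.
    for label, tps in (("Llama 3 8B Q4_K_M", 133.38), ("Llama 3 70B Q4_K_M", 24.33)):
        print(f"A100 {label}: <= {joules_per_token(A100_MAX_TDP_W, tps):.1f} "
              f"J/token (GPU only, at max TDP)")
```

To compare against the M2 Pro, measure its average draw while generating and pass it in with the 37.87 tokens/s figure. A whole-system comparison would also need to count the A100 server's CPU, memory, and cooling.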

8. Use Cases: Choosing the Right Tool for the Job

Here's a breakdown of how these devices best serve different use cases:

M2 Pro - Ideal for:

- Read-heavy text processing: Summarization, question answering over long documents.

- Development and experimentation with smaller models: Llama 2 7B.

- Budget-conscious AI developers.

A100 SXM - Ideal for:

- Fast text generation: Creative writing, code generation, content creation.

- Handling large, complex models: Llama 3 70B (at 4-bit quantization).

- Projects requiring maximum performance.

Conclusion

Choosing the right hardware for your AI projects is crucial for unlocking the potential of LLMs. Both the Apple M2 Pro and NVIDIA A100 SXM offer unique advantages and limitations.

The M2 Pro is an excellent choice for developers who work with smaller LLMs (roughly 13B parameters and below), mostly run read-heavy workloads, and value affordability and low power draw. The A100 SXM is the go-to option for projects requiring fast text generation, working with large models such as Llama 3 70B, and demanding the highest performance. Ultimately, the best choice depends on your individual needs and priorities.

FAQ

Q: What is the best device for running Llama 2 models?

A: The M2 Pro runs Llama 2 7B comfortably, generating up to 37.87 tokens/s with Q4_0. Our sources have no Llama 2 figures for the A100 SXM, but its Llama 3 8B results suggest it generates text several times faster.

Q: What is quantization and how does it impact performance?

A: Quantization reduces the size of a neural network by storing its weights with fewer bits. This speeds up token generation and lowers memory use at some potential cost in accuracy. Both devices can run the same llama.cpp formats (F16, Q8_0, Q4_0, Q4_K_M, and others); the A100 figures above simply happen to cover Q4_K_M and F16.

Q: Does the M2 Pro require specific software for LLM development?

A: No special software is needed beyond a framework with Metal support, such as llama.cpp, Apple's MLX, or PyTorch with its MPS backend.

Q: Is the A100 SXM energy efficient?

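A: Not in absolute terms: the A100 SXM is rated for up to 400 W on its own. Energy per generated token also depends on throughput, so measure both power draw and tokens per second if efficiency matters to you.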

Q: Can the M2 Pro handle large models?

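A: Only up to a point. With at most 32GB of unified memory, the M2 Pro can run quantized 13B-class models, but 70B-class models do not fit even at 4-bit precision. The A100 SXM's 80GB, by contrast, holds Llama 3 70B at Q4_K_M.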

Keywords

Apple M2 Pro, NVIDIA A100SXM80GB, LLM models, Llama 2, Llama 3, AI, performance, processing speed, generation speed, quantization, memory, cost, software ecosystem, power consumption, use cases, text generation, text analysis, translation, summarization, question answering, creative writing, code generation, development, experimentation, budget, optimization, hardware, AI development, open-source, proprietary, CUDA, cuDNN, energy efficiency.