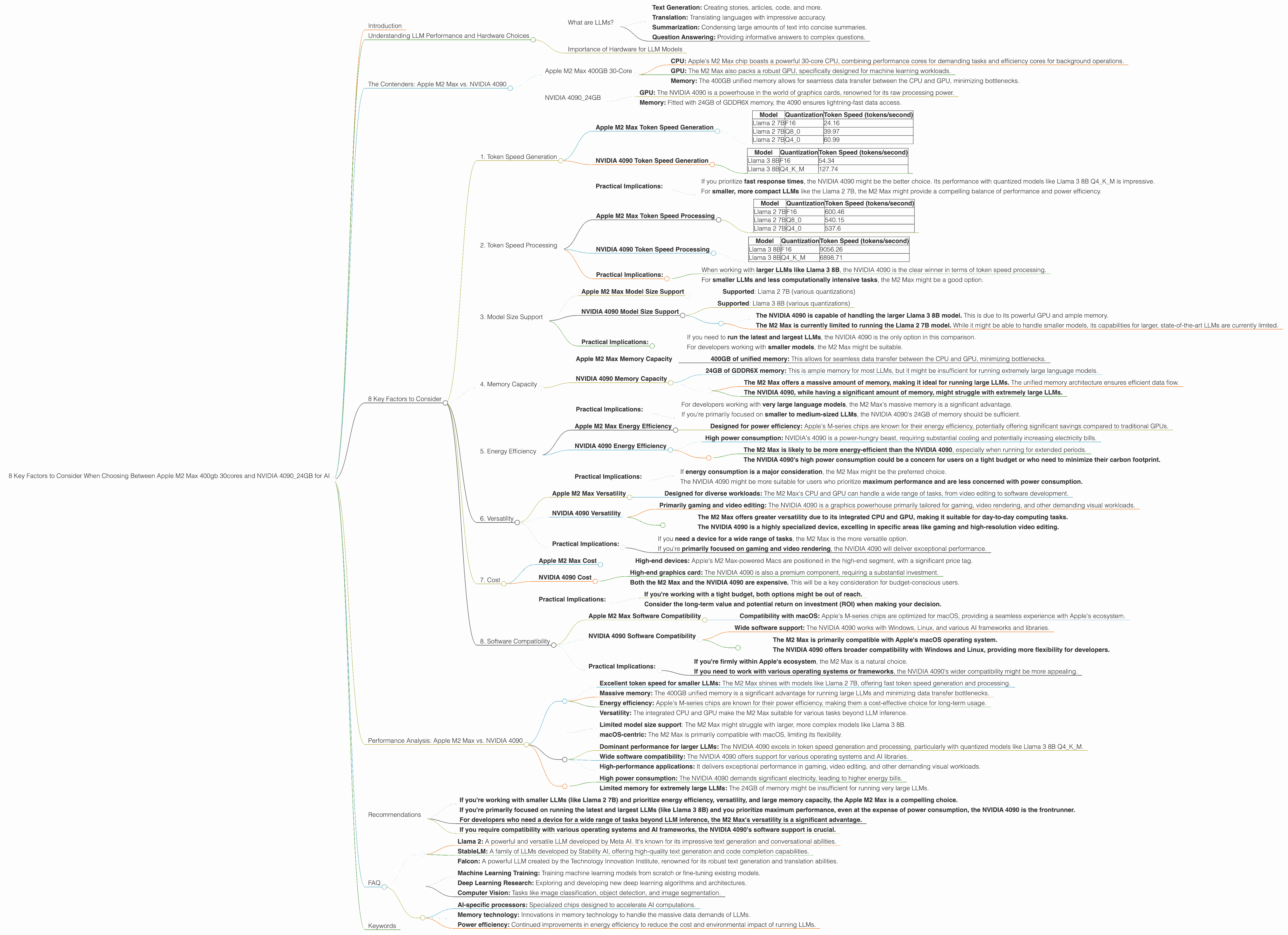

8 Key Factors to Consider When Choosing Between Apple M2 Max 400gb 30cores and NVIDIA 4090 24GB for AI

Introduction

The world of large language models (LLMs) is rapidly evolving, and with it comes a growing need for powerful hardware to run these models effectively. Two titans in the hardware world, Apple and NVIDIA, offer compelling options for developers and enthusiasts looking to unleash the potential of LLMs.

This article delves into the performance of Apple's M2 Max 400gb 30-core processor and NVIDIA's 4090_24GB graphics card, comparing their capabilities for running popular LLM models like Llama 2 and Llama 3. We'll dissect the crucial factors that influence performance, providing practical recommendations for different use cases.

Understanding LLM Performance and Hardware Choices

Before we dive into the nitty-gritty, let's define some key concepts:

What are LLMs?

LLMs are a type of artificial intelligence (AI) capable of understanding and generating human-like text. They learn from vast datasets of text and code, enabling them to perform various tasks, including:

- Text Generation: Creating stories, articles, code, and more.

- Translation: Translating languages with impressive accuracy.

- Summarization: Condensing large amounts of text into concise summaries.

- Question Answering: Providing informative answers to complex questions.

Importance of Hardware for LLM Models

The performance of LLMs hinges on the underlying hardware. LLMs are computationally expensive, meaning they require significant processing power to run effectively. Choosing the right hardware can be the difference between smooth, real-time interactions and frustrating delays.

The Contenders: Apple M2 Max vs. NVIDIA 4090

Apple M2 Max 400GB 30-Core

- CPU: Apple's M2 Max chip boasts a powerful 30-core CPU, combining performance cores for demanding tasks and efficiency cores for background operations.

- GPU: The M2 Max also packs a robust GPU, specifically designed for machine learning workloads.

- Memory: The 400GB unified memory allows for seamless data transfer between the CPU and GPU, minimizing bottlenecks.

NVIDIA 4090_24GB

- GPU: The NVIDIA 4090 is a powerhouse in the world of graphics cards, renowned for its raw processing power.

- Memory: Fitted with 24GB of GDDR6X memory, the 4090 ensures lightning-fast data access.

8 Key Factors to Consider

Now, let's dive into the key factors you should consider when choosing between the Apple M2 Max and the NVIDIA 4090 for running LLMs:

1. Token Speed Generation

This metric measures how many tokens a device can process per second. A higher token speed translates to faster generation of responses and more efficient model inference.

Apple M2 Max Token Speed Generation

| Model | Quantization | Token Speed (tokens/second) |

|---|---|---|

| Llama 2 7B | F16 | 24.16 |

| Llama 2 7B | Q8_0 | 39.97 |

| Llama 2 7B | Q4_0 | 60.99 |

NVIDIA 4090 Token Speed Generation

| Model | Quantization | Token Speed (tokens/second) |

|---|---|---|

| Llama 3 8B | F16 | 54.34 |

| Llama 3 8B | Q4KM | 127.74 |

Observations:

- The NVIDIA 4090 generally outperforms the M2 Max for token speed generation, especially with the Q4KM quantization. This indicates that the NVIDIA 4090 is particularly proficient at processing quantized models, which are often more efficient and require less memory.

- The M2 Max excels in token speed generation for the Llama 2 7B model, particularly with the Q4_0 quantization. This suggests that the M2 Max might be a better choice for smaller and less computationally demanding LLMs.

Practical Implications:

- If you prioritize fast response times, the NVIDIA 4090 might be the better choice. Its performance with quantized models like Llama 3 8B Q4KM is impressive.

- For smaller, more compact LLMs like the Llama 2 7B, the M2 Max might provide a compelling balance of performance and power efficiency.

2. Token Speed Processing

This metric measures the speed at which a device can process the input tokens to generate the context of a response. Faster processing allows for quicker and more efficient inference.

Apple M2 Max Token Speed Processing

| Model | Quantization | Token Speed (tokens/second) |

|---|---|---|

| Llama 2 7B | F16 | 600.46 |

| Llama 2 7B | Q8_0 | 540.15 |

| Llama 2 7B | Q4_0 | 537.6 |

NVIDIA 4090 Token Speed Processing

| Model | Quantization | Token Speed (tokens/second) |

|---|---|---|

| Llama 3 8B | F16 | 9056.26 |

| Llama 3 8B | Q4KM | 6898.71 |

Observations:

- The NVIDIA 4090 clearly dominates in token speed processing across both Llama 3 8B quantizations. Its dedicated GPU architecture and raw power excel in handling the demanding processing requirements of larger LLMs.

- The M2 Max demonstrates decent performance with the Llama 2 7B model, particularly with the F16 quantization. However, its capabilities might be limited when dealing with larger and more complex models.

Practical Implications:

- When working with larger LLMs like Llama 3 8B, the NVIDIA 4090 is the clear winner in terms of token speed processing.

- For smaller LLMs and less computationally intensive tasks, the M2 Max might be a good option.

3. Model Size Support

The ability to run different sizes of LLM models is crucial. Larger models offer more capabilities, but they require more resources.

Apple M2 Max Model Size Support

- Supported: Llama 2 7B (various quantizations)

NVIDIA 4090 Model Size Support

- Supported: Llama 3 8B (various quantizations)

Observations:

- The NVIDIA 4090 is capable of handling the larger Llama 3 8B model. This is due to its powerful GPU and ample memory.

- The M2 Max is currently limited to running the Llama 2 7B model. While it might be able to handle smaller models, its capabilities for larger, state-of-the-art LLMs are currently limited.

Practical Implications:

- If you need to run the latest and largest LLMs, the NVIDIA 4090 is the only option in this comparison.

- For developers working with smaller models, the M2 Max might be suitable.

4. Memory Capacity

Memory capacity significantly impacts the performance of LLMs. Larger models require more memory to load and process effectively.

Apple M2 Max Memory Capacity

- 400GB of unified memory: This allows for seamless data transfer between the CPU and GPU, minimizing bottlenecks.

NVIDIA 4090 Memory Capacity

- 24GB of GDDR6X memory: This is ample memory for most LLMs, but it might be insufficient for running extremely large language models.

Observations:

- The M2 Max offers a massive amount of memory, making it ideal for running large LLMs. The unified memory architecture ensures efficient data flow.

- The NVIDIA 4090, while having a significant amount of memory, might struggle with extremely large LLMs.

Practical Implications:

- For developers working with very large language models, the M2 Max's massive memory is a significant advantage.

- If you're primarily focused on smaller to medium-sized LLMs, the NVIDIA 4090's 24GB of memory should be sufficient.

5. Energy Efficiency

Energy efficiency is a critical factor, especially when running LLMs for extended periods.

Apple M2 Max Energy Efficiency

- Designed for power efficiency: Apple's M-series chips are known for their energy efficiency, potentially offering significant savings compared to traditional GPUs.

NVIDIA 4090 Energy Efficiency

- High power consumption: NVIDIA's 4090 is a power-hungry beast, requiring substantial cooling and potentially increasing electricity bills.

Observations:

- The M2 Max is likely to be more energy-efficient than the NVIDIA 4090, especially when running for extended periods.

- The NVIDIA 4090's high power consumption could be a concern for users on a tight budget or who need to minimize their carbon footprint.

Practical Implications:

- If energy consumption is a major consideration, the M2 Max might be the preferred choice.

- The NVIDIA 4090 might be more suitable for users who prioritize maximum performance and are less concerned with power consumption.

6. Versatility

Many developers require a device that can handle various tasks beyond LLM inference.

Apple M2 Max Versatility

- Designed for diverse workloads: The M2 Max's CPU and GPU can handle a wide range of tasks, from video editing to software development.

NVIDIA 4090 Versatility

- Primarily gaming and video editing: The NVIDIA 4090 is a graphics powerhouse primarily tailored for gaming, video rendering, and other demanding visual workloads.

Observations:

- The M2 Max offers greater versatility due to its integrated CPU and GPU, making it suitable for day-to-day computing tasks.

- The NVIDIA 4090 is a highly specialized device, excelling in specific areas like gaming and high-resolution video editing.

Practical Implications:

- If you need a device for a wide range of tasks, the M2 Max is the more versatile option.

- If you're primarily focused on gaming and video rendering, the NVIDIA 4090 will deliver exceptional performance.

7. Cost

The cost of hardware is a major factor, especially for developers and enthusiasts.

Apple M2 Max Cost

- High-end devices: Apple's M2 Max-powered Macs are positioned in the high-end segment, with a significant price tag.

NVIDIA 4090 Cost

- High-end graphics card: The NVIDIA 4090 is also a premium component, requiring a substantial investment.

Observations:

- Both the M2 Max and the NVIDIA 4090 are expensive. This will be a key consideration for budget-conscious users.

Practical Implications:

- If you're working with a tight budget, both options might be out of reach.

- Consider the long-term value and potential return on investment (ROI) when making your decision.

8. Software Compatibility

LLMs are constantly evolving, and compatibility with different software frameworks and libraries is essential.

Apple M2 Max Software Compatibility

- Compatibility with macOS: Apple's M-series chips are optimized for macOS, providing a seamless experience with Apple's ecosystem.

NVIDIA 4090 Software Compatibility

- Wide software support: The NVIDIA 4090 works with Windows, Linux, and various AI frameworks and libraries.

Observations:

- The M2 Max is primarily compatible with Apple's macOS operating system.

- The NVIDIA 4090 offers broader compatibility with Windows and Linux, providing more flexibility for developers.

Practical Implications:

- If you're firmly within Apple's ecosystem, the M2 Max is a natural choice.

- If you need to work with various operating systems or frameworks, the NVIDIA 4090's wider compatibility might be more appealing.

Performance Analysis: Apple M2 Max vs. NVIDIA 4090

Let's summarize the performance analysis:

Strengths of the Apple M2 Max:

- Excellent token speed for smaller LLMs: The M2 Max shines with models like Llama 2 7B, offering fast token speed generation and processing.

- Massive memory: The 400GB unified memory is a significant advantage for running large LLMs and minimizing data transfer bottlenecks.

- Energy efficiency: Apple's M-series chips are known for their power efficiency, making them a cost-effective choice for long-term usage.

- Versatility: The integrated CPU and GPU make the M2 Max suitable for various tasks beyond LLM inference.

Weaknesses of the Apple M2 Max:

- Limited model size support: The M2 Max might struggle with larger, more complex models like Llama 3 8B.

- macOS-centric: The M2 Max is primarily compatible with macOS, limiting its flexibility.

Strengths of the NVIDIA 4090:

- Dominant performance for larger LLMs: The NVIDIA 4090 excels in token speed generation and processing, particularly with quantized models like Llama 3 8B Q4KM.

- Wide software compatibility: The NVIDIA 4090 offers support for various operating systems and AI libraries.

- High-performance applications: It delivers exceptional performance in gaming, video editing, and other demanding visual workloads.

Weaknesses of the NVIDIA 4090:

- High power consumption: The NVIDIA 4090 demands significant electricity, leading to higher energy bills.

- Limited memory for extremely large LLMs: The 24GB of memory might be insufficient for running very large LLMs.

Recommendations

- If you're working with smaller LLMs (like Llama 2 7B) and prioritize energy efficiency, versatility, and large memory capacity, the Apple M2 Max is a compelling choice.

- If you're primarily focused on running the latest and largest LLMs (like Llama 3 8B) and you prioritize maximum performance, even at the expense of power consumption, the NVIDIA 4090 is the frontrunner.

- For developers who need a device for a wide range of tasks beyond LLM inference, the M2 Max's versatility is a significant advantage.

- If you require compatibility with various operating systems and AI frameworks, the NVIDIA 4090's software support is crucial.

FAQ

Q1: What are the benefits of using quantization for LLMs?

A: Quantization is a technique that reduces the size of LLM models by representing their weights (the parameters that determine the model's behavior) with fewer bits. This leads to smaller models that require less memory and can be processed more efficiently. For example, instead of using 32 bits per weight, a quantized model might use 8 bits per weight. This can significantly reduce the model's size and improve its performance.

Q2: How does the memory capacity of a device affect LLM performance?

A: LLMs require a considerable amount of memory to load and operate. If a device has insufficient memory, the model will slow down or even fail to run. This is especially true for larger LLMs. For instance, imagine trying to run a large LLM on a device with limited memory – the device will constantly be juggling data, leading to sluggish performance and even potential crashes.

Q3: What are the popular open-source LLMs available today?

A: There are various open-source LLMs available, each with unique characteristics. Some popular examples include:

- Llama 2: A powerful and versatile LLM developed by Meta AI. It's known for its impressive text generation and conversational abilities.

- StableLM: A family of LLMs developed by Stability AI, offering high-quality text generation and code completion capabilities.

- Falcon: A powerful LLM created by the Technology Innovation Institute, renowned for its robust text generation and translation abilities.

Q4: Can I use the Apple M2 Max or the NVIDIA 4090 for other AI tasks besides running LLMs?

A: Yes, both the M2 Max and the NVIDIA 4090 can be used for other AI tasks. Their powerful processors are well-suited for:

- Machine Learning Training: Training machine learning models from scratch or fine-tuning existing models.

- Deep Learning Research: Exploring and developing new deep learning algorithms and architectures.

- Computer Vision: Tasks like image classification, object detection, and image segmentation.

Q5: What is the future of LLM hardware?

A: The future of LLM hardware promises even more powerful and efficient devices, specifically tailored for AI workloads. We're likely to see advances in:

- AI-specific processors: Specialized chips designed to accelerate AI computations.

- Memory technology: Innovations in memory technology to handle the massive data demands of LLMs.

- Power efficiency: Continued improvements in energy efficiency to reduce the cost and environmental impact of running LLMs.

Keywords

Apple M2 Max, NVIDIA 4090, LLM, Large Language Model, Llama 2, Llama 3, Token Speed Generation, Token Speed Processing, Model Size Support, Memory Capacity, Energy Efficiency, Versatility, Cost, Software Compatibility, Quantization, Open-source LLMs, AI Hardware, Future of LLM Hardware.