8 Key Factors to Consider When Choosing Between Apple M2 Max (400 GB/s, 30 GPU Cores) and Apple M3 Pro (150 GB/s, 14 GPU Cores) for AI

Introduction

The world of large language models (LLMs) is exploding, and with it comes the need for powerful hardware to run these complex models. For developers who want to experiment with LLMs locally, choosing the right device is crucial. In this article we compare two Apple chips, the M2 Max with 400 GB/s of memory bandwidth and 30 GPU cores and the M3 Pro with 150 GB/s and 14 GPU cores, and see how they fare when running the Llama 2 7B model.

We'll break down key factors like memory bandwidth, GPU cores, and quantization levels to shed light on which chip reigns supreme for different LLM workloads and use cases. Whether you're a seasoned developer or just starting your exploration, this article will equip you with the knowledge to make an informed decision for your LLM journey.

Memory Bandwidth: The Road to Speedy Data

Imagine your LLM as a hungry beast devouring data. Memory bandwidth is its “feeding pipe.” The wider the pipe, the faster the data flows, leading to quicker processing and smoother performance.

Our contenders offer very different memory bandwidths: 400 GB/s on the M2 Max and a more modest 150 GB/s on the M3 Pro. This difference is significant, particularly for large models where a constant flow of information is critical.

Here's a simple analogy: think of a car engine. The M2 Max is like a high-performance engine with a wide fuel line, allowing it to gulp down fuel (data) rapidly. The M3 Pro is like a smaller engine with a narrower line, leading to a slower intake.

Memory Bandwidth and LLM Performance

Memory bandwidth matters most during text generation. To produce each new token, the chip has to read essentially all of the model's weights from memory, so generation speed is limited by how fast those weights can be streamed. The M2 Max, with its higher bandwidth, sustains faster generation, while the M3 Pro reaches its bandwidth ceiling sooner, especially when a model like Llama 2 7B is run at higher precision.
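To make the bandwidth argument concrete, here is a rough back-of-the-envelope sketch. It assumes that every generated token requires streaming the full set of weights once, so bandwidth divided by model size gives an upper bound on generation speed. The parameter count (about 6.74 billion) and the bits-per-weight figures are assumptions for illustration, not values from the benchmarks in this article.

```python
# Rough ceiling on generation speed when memory bandwidth is the bottleneck.
# Assumptions (not from the article's benchmarks): parameter count and
# approximate bits per weight for each format.

PARAMS = 6.74e9  # assumed parameter count for Llama 2 7B

BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}  # assumed averages

BANDWIDTH_GB_PER_S = {"M2 Max": 400.0, "M3 Pro": 150.0}  # from the article


def model_size_gb(fmt: str) -> float:
    """Approximate size of the weights in gigabytes."""
    return PARAMS * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def max_tokens_per_second(chip: str, fmt: str) -> float:
    """Upper bound: one full pass over the weights per generated token."""
    return BANDWIDTH_GB_PER_S[chip] / model_size_gb(fmt)


for chip in BANDWIDTH_GB_PER_S:
    for fmt in BITS_PER_WEIGHT:
        size = model_size_gb(fmt)
        ceiling = max_tokens_per_second(chip, fmt)
        print(f"{chip} {fmt}: ~{size:.1f} GB, ceiling ~{ceiling:.0f} tokens/s")
```

Real generation speeds should land below these ceilings, since the estimate ignores compute time, cache effects, and other overhead. It is meant as a sanity check, not a prediction.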

GPU Cores: The Muscle of LLM Processing

GPU cores are the powerhouse of LLM processing. The more cores you have, the more computational power you can harness, which translates to quicker inference times.

The M2 Max has 30 GPU cores compared to the M3 Pro's 14, more than twice as many.

Think of the M2 Max as a weightlifter with a team of 30 strong muscle fibers, while the M3 Pro has a team of 14. For heavy lifting (like complex LLM processing), the M2 Max has a clear advantage.

GPU Cores and LLM Performance

For LLM workloads, more GPU cores mainly speed up prompt processing, where many tokens are handled in parallel and raw compute is the limit. Generation benefits too, though it depends more heavily on memory bandwidth. In the benchmarks in this article, the M2 Max with its 30 cores processes prompts about twice as fast as the M3 Pro at the same quantization level.

Quantization: Shrinking LLMs for Efficiency

Quantization is a technique used to reduce the size of LLMs without sacrificing too much accuracy. It's like a diet for LLMs, making them leaner and more efficient.

The benchmarks in this article cover three formats: F16, Q8_0, and Q4_0. F16 stores each weight as a 16-bit floating-point number and is the most precise of the three. Q8_0 and Q4_0 store weights in roughly 8 and 4 bits respectively, so Q4_0 produces the smallest model but has the greatest potential impact on accuracy.

Let's think of quantization as a recipe for cooking. F16 is like using all the ingredients in their original form, while Q4_0 is like using a simplified version of the ingredients. The final dish may taste slightly different with Q4_0, but it's much lighter and easier to make!
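For readers who prefer code to cooking, the following is a minimal sketch of the general idea behind 4-bit block quantization: a group of weights shares one scale factor, and each weight is stored as a small integer. This is a simplified illustration and not the exact storage layout of Q4_0 in any particular library. The block size of 32 and the helper names are assumptions chosen for the example.

```python
# Simplified symmetric 4-bit block quantization, for illustration only.
# This is NOT the exact Q4_0 layout used by any particular library.
import random

BLOCK_SIZE = 32  # assumed number of weights sharing one scale


def quantize_block(weights):
    """Map floats to integers in [-8, 7] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, quantized


def dequantize_block(scale, quantized):
    """Recover approximate floats from the integers and the scale."""
    return [scale * q for q in quantized]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]

scale, quantized = quantize_block(weights)
restored = dequantize_block(scale, quantized)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(f"scale = {scale:.6f}")
print(f"max reconstruction error = {max_error:.6f}")
```

In this sketch the rounding error for any weight is at most half the scale, which is the "slightly different taste" from the analogy. If the scale were stored as a 16-bit value alongside 32 four-bit integers, the block would average 4.5 bits per weight instead of 16.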

Comparison of M2 Max and M3 Pro for Llama 2 7B

Now, let's delve into the heart of the matter: how do these two chips compare in the real world when running the Llama 2 7B model?

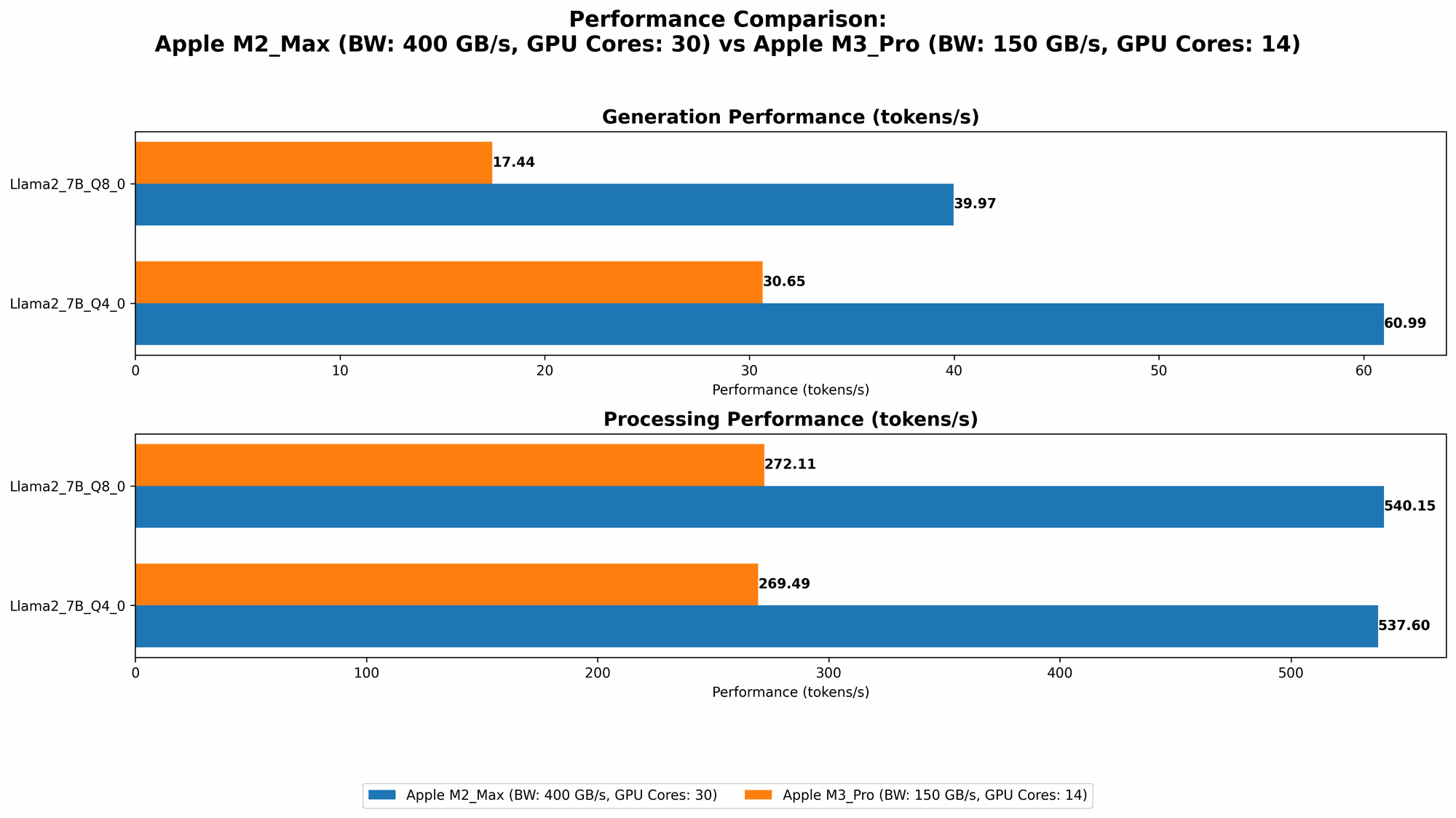

Apple M2 Max (400 GB/s, 30 GPU Cores) Performance Analysis

| Configuration | Llama 2 7B Processing (Tokens/second) | Llama 2 7B Generation (Tokens/second) |
|---|---|---|
| M2 Max, 400 GB/s, 30 GPU Cores (F16) | 600.46 | 24.16 |
| M2 Max, 400 GB/s, 30 GPU Cores (Q8_0) | 540.15 | 39.97 |
| M2 Max, 400 GB/s, 30 GPU Cores (Q4_0) | 537.6 | 60.99 |

As the data shows, the M2 Max handles Llama 2 7B comfortably: prompt processing runs at roughly 540 to 600 tokens per second, and generation ranges from about 24 tokens per second at F16 to about 61 at Q4_0. Here's a breakdown:

Strengths:

- Powerhouse Processing: The M2 Max excels at prompt processing, staying above 537 tokens per second at every tested precision.

- Efficient Generation: The M2 Max generates text quickly, reaching 60.99 tokens per second at Q4_0.

- Quantization Flexibility: The M2 Max handles all three tested formats, so you can trade model size against accuracy as needed. Moving from F16 to Q4_0 raises generation speed from 24.16 to 60.99 tokens per second.

Weaknesses:

- Cost Factor: The M2 Max is a premium chip with a higher price tag compared to the M3 Pro.

Apple M3 Pro (150 GB/s, 14 GPU Cores) Performance Analysis

| Configuration | Llama 2 7B Processing (Tokens/second) | Llama 2 7B Generation (Tokens/second) |
|---|---|---|
| M3 Pro, 150 GB/s, 14 GPU Cores (Q8_0) | 272.11 | 17.44 |
| M3 Pro, 150 GB/s, 14 GPU Cores (Q4_0) | 269.49 | 30.65 |

The M3 Pro, while not as powerful as the M2 Max, still delivers respectable performance. It's a great option for those needing a balance between power and affordability.

Strengths:

- Cost-effective: The M3 Pro offers a more budget-friendly option for running LLMs locally.

- Decent Performance: The M3 Pro provides acceptable performance for the Llama 2 7B model, especially when using Q8_0 or Q4_0 quantization.

Weaknesses:

- Limited Performance: At the same quantization level, the M3 Pro reaches roughly half the M2 Max's processing and generation speed. No F16 figures are available for the M3 Pro, so the two chips cannot be compared at full precision.
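To put the gap in numbers, the short sketch below divides the M2 Max figures by the M3 Pro figures from the two tables above and sets them next to the ratios of the headline specs. The variable and function names are illustrative.

```python
# Benchmark figures copied from the two tables above, in tokens/second:
# format -> (processing, generation)
M2_MAX = {"Q8_0": (540.15, 39.97), "Q4_0": (537.6, 60.99)}
M3_PRO = {"Q8_0": (272.11, 17.44), "Q4_0": (269.49, 30.65)}

BANDWIDTH_RATIO = 400 / 150  # memory bandwidth, M2 Max vs M3 Pro
CORE_RATIO = 30 / 14         # GPU core count, M2 Max vs M3 Pro


def speedups(fmt):
    """Return (processing, generation) speedup of M2 Max over M3 Pro."""
    m2_proc, m2_gen = M2_MAX[fmt]
    m3_proc, m3_gen = M3_PRO[fmt]
    return m2_proc / m3_proc, m2_gen / m3_gen


print(f"bandwidth ratio: {BANDWIDTH_RATIO:.2f}x, core ratio: {CORE_RATIO:.2f}x")
for fmt in M2_MAX:
    proc, gen = speedups(fmt)
    print(f"{fmt}: processing {proc:.2f}x, generation {gen:.2f}x")
```

The measured speedups come out at roughly 2.0x to 2.3x, somewhat below the 2.67x bandwidth ratio. Spec ratios are a useful guide to which chip is faster, but they overstate the real-world gap in these benchmarks.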

Choosing the Right Chip for Your Needs

The best chip for you depends on your specific requirements. Here's a quick guide:

M2 Max: The Powerhouse Choice

- Ideal for: Developers needing the fastest processing and generation speeds for LLMs, large-scale projects, or applications that require maximum performance.

M3 Pro: The Balanced Option

- Ideal for: Developers working with smaller LLMs or those on a tighter budget. The M3 Pro provides a good balance between performance and affordability.
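One way to turn the benchmark numbers into a practical decision is to estimate how long a typical request would take on each chip. The sketch below is a simple estimate under stated assumptions: it uses only the processing and generation rates from the tables in this article, ignores model loading and other overhead, and the 1,000-token prompt with a 200-token reply is a hypothetical workload.

```python
# Tokens/second from the article's tables: (processing, generation).
BENCH = {
    ("M2 Max", "F16"): (600.46, 24.16),
    ("M2 Max", "Q8_0"): (540.15, 39.97),
    ("M2 Max", "Q4_0"): (537.6, 60.99),
    ("M3 Pro", "Q8_0"): (272.11, 17.44),
    ("M3 Pro", "Q4_0"): (269.49, 30.65),
}


def response_seconds(chip, fmt, prompt_tokens, output_tokens):
    """Estimated time to read the prompt and then generate the reply."""
    processing, generation = BENCH[(chip, fmt)]
    return prompt_tokens / processing + output_tokens / generation


# Hypothetical workload: 1,000-token prompt, 200-token reply.
for (chip, fmt) in BENCH:
    seconds = response_seconds(chip, fmt, prompt_tokens=1000, output_tokens=200)
    print(f"{chip} {fmt}: ~{seconds:.1f} s")
```

For short replies to long prompts, processing speed dominates. For long replies, generation speed dominates. Plugging in your own typical prompt and reply lengths shows which figure matters more for your use case.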

FAQs

What is quantization?

Imagine you have a detailed map of a city, but you need to send it over a limited data connection. Quantization is a technique that simplifies the map by using fewer colors and details, making it smaller and easier to transmit without losing too much information. Similarly, it helps LLMs become smaller and faster by reducing the precision of their weights, enabling them to run on devices with less memory and processing power.

What is Llama 2 7B?

Llama 2 7B is a large language model with 7 billion parameters, a type of artificial intelligence that has been trained on a vast amount of text data. Think of it as a highly knowledgeable and articulate digital assistant that can understand and generate human-like text. It's used in various applications like chatbots, writing assistance, summarizing information, and more.

Do the M2 Max and M3 Pro support models other than Llama 2 7B?

Yes, both chips are versatile and can run a wide range of LLMs. The benchmarks are specific to Llama 2 7B, but you can expect similar performance trends with other models. The specific speed will vary depending on the model's size and complexity.

Keywords

Apple M2 Max, Apple M3 Pro, Llama 2 7B, LLM, AI, Inference, Token Speed, GPU Cores, Memory Bandwidth, Quantization, F16, Q8_0, Q4_0, Performance Analysis, Model Size, Model Accuracy, Local LLMs, Developers, Deep Learning, Machine Learning, AI Hardware, Technology.