8 Key Factors to Consider When Choosing Between Apple M2 100GB 10Cores and NVIDIA RTX 6000 Ada 48GB for AI

Introduction

The rise of large language models (LLMs) has revolutionized the field of artificial intelligence. These powerful models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running LLMs locally can be resource-intensive, requiring powerful hardware to handle the massive computational demands.

This article compares two options for running LLMs locally: the Apple M2 100GB 10Cores (an M2 with 100 GB/s of memory bandwidth and a 10-core GPU) and the NVIDIA RTX 6000 Ada 48GB. We'll look at their performance, strengths, and weaknesses, and provide practical recommendations for different use cases.

Apple M2 100GB 10Cores vs. NVIDIA RTX 6000 Ada 48GB: A Head-to-Head Comparison

Choosing the right hardware for your LLM projects can be tricky. Both the Apple M2 100GB 10Cores and the NVIDIA RTX 6000 Ada 48GB are powerful contenders, but they excel in different areas. Let's break down their performance and see how they stack up against each other.

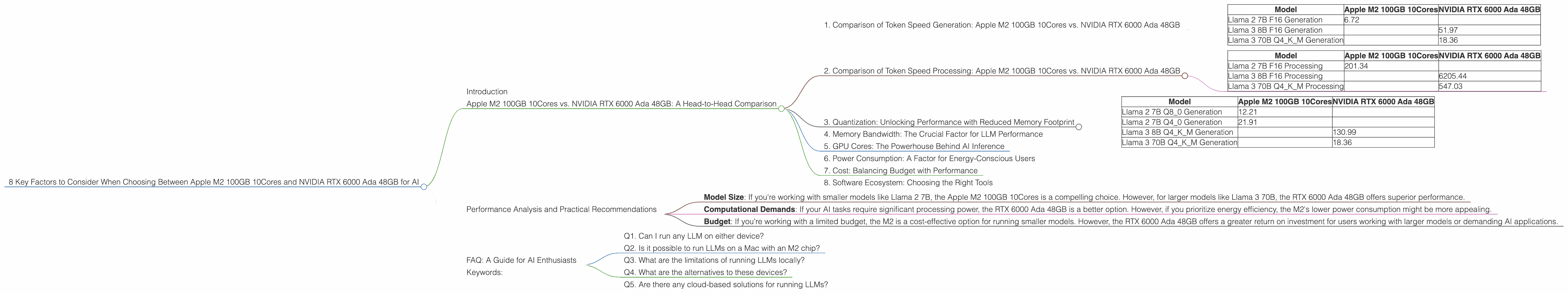

1. Comparison of Token Speed Generation: Apple M2 100GB 10Cores vs. NVIDIA RTX 6000 Ada 48GB

On the Apple M2, Llama 2 7B in F16 precision generates about 6.72 tokens per second. The RTX 6000 Ada 48GB reaches 51.97 tokens per second for Llama 3 8B in F16 precision, roughly 7.7 times faster. The two figures come from different models (Llama 2 7B versus Llama 3 8B), so treat the ratio as indicative rather than exact, but the gap is large enough that the RTX 6000 Ada 48GB is clearly the faster device for generation.

The RTX 6000 Ada 48GB also handles much larger models, generating 18.36 tokens per second for Llama 3 70B with Q4_K_M quantization. We have no M2 result for this model, so the practical takeaway is that the M2 is best kept to smaller models, while the RTX 6000 Ada 48GB stays usable at 70B scale.

Table 1: Token Speed Generation Comparison (tokens/second)

| Model | Apple M2 100GB 10Cores | NVIDIA RTX 6000 Ada 48GB |
|---|---|---|
| Llama 2 7B F16 Generation | 6.72 | N/A |
| Llama 3 8B F16 Generation | N/A | 51.97 |
| Llama 3 70B Q4_K_M Generation | N/A | 18.36 |
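To make the generation figures easier to compare, here is a minimal sketch that turns them into a speed ratio. The constant and function names are illustrative, and the comparison is only approximate because the two devices were measured on different models.

```python
# Generation speeds in tokens/second, copied from the figures quoted above.
M2_LLAMA2_7B_F16 = 6.72
RTX_LLAMA3_8B_F16 = 51.97


def speed_ratio(faster_tps: float, slower_tps: float) -> float:
    """Return how many times faster the first rate is than the second."""
    if slower_tps <= 0:
        raise ValueError("slower_tps must be positive")
    return faster_tps / slower_tps


if __name__ == "__main__":
    ratio = speed_ratio(RTX_LLAMA3_8B_F16, M2_LLAMA2_7B_F16)
    print(f"RTX 6000 Ada vs. M2 (F16 generation): {ratio:.1f}x")
```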

2. Comparison of Token Speed Processing: Apple M2 100GB 10Cores vs. NVIDIA RTX 6000 Ada 48GB

Prompt processing shows the same pattern. The Apple M2 100GB 10Cores processes 201.34 tokens per second for Llama 2 7B in F16 precision, while the RTX 6000 Ada 48GB processes 6205.44 tokens per second for Llama 3 8B in F16 precision, roughly 31 times faster.

The RTX 6000 Ada 48GB keeps a strong result with larger models, processing 547.03 tokens per second for Llama 3 70B with Q4_K_M quantization. That is still more than 2.5 times the M2's processing speed on a model one tenth the size.

Table 2: Token Speed Processing Comparison (tokens/second)

| Model | Apple M2 100GB 10Cores | NVIDIA RTX 6000 Ada 48GB |
|---|---|---|
| Llama 2 7B F16 Processing | 201.34 | N/A |
| Llama 3 8B F16 Processing | N/A | 6205.44 |
| Llama 3 70B Q4_K_M Processing | N/A | 547.03 |
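Processing and generation speeds combine into the wait a user actually experiences. The sketch below estimates end-to-end response time under a simple assumption: the prompt is processed first at the processing rate, then the reply is generated at the generation rate, with no other overhead. The prompt and reply lengths are arbitrary illustrative values, not part of the benchmark.

```python
def response_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Estimate total latency: prompt processing time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


if __name__ == "__main__":
    # Illustrative workload (an assumption): 1000-token prompt, 200-token reply.
    prompt, reply = 1000, 200

    # F16 rates quoted in this article for each device.
    m2 = response_seconds(prompt, reply, processing_tps=201.34, generation_tps=6.72)
    rtx = response_seconds(prompt, reply, processing_tps=6205.44, generation_tps=51.97)

    print(f"M2 (Llama 2 7B F16):  {m2:.1f} s")
    print(f"RTX (Llama 3 8B F16): {rtx:.1f} s")
```

For short prompts and long replies, the generation rate dominates the total; for long prompts and short replies, the processing rate matters more.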

3. Quantization: Unlocking Performance with Reduced Memory Footprint

Quantization is a technique used to reduce the size of LLMs while keeping output quality acceptable. It works by representing the model's weights with fewer bits, which reduces memory requirements and usually increases speed.
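The idea can be illustrated with a simplified 8-bit block quantizer. This is a sketch under stated assumptions, not llama.cpp's actual implementation: the block size of 32 and the single scale per block are modeled loosely on 8-bit block formats, and all function names are hypothetical.

```python
from typing import List, Tuple

BLOCK_SIZE = 32  # weights per block (an assumption for this sketch)

Block = Tuple[float, List[int]]  # (scale, signed 8-bit values)


def quantize_block(weights: List[float]) -> Block:
    """Store one block as a shared float scale plus integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quants = [max(-127, min(127, round(w / scale))) for w in weights]
    return scale, quants


def quantize(weights: List[float]) -> List[Block]:
    """Split the weights into blocks and quantize each block independently."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks: List[Block]) -> List[float]:
    """Recover approximate float weights from the quantized blocks."""
    restored: List[float] = []
    for scale, quants in blocks:
        restored.extend(q * scale for q in quants)
    return restored


if __name__ == "__main__":
    original = [i / 100 - 0.32 for i in range(64)]
    restored = dequantize(quantize(original))
    worst = max(abs(a - b) for a, b in zip(original, restored))
    print(f"largest round-trip error: {worst:.5f}")
```

Each weight shrinks from 16 bits to 8 bits plus a small per-block overhead for the scale, at the cost of a bounded rounding error.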

The Apple M2 100GB 10Cores supports various quantization schemes, including Q8_0 and Q4_0, which deliver significant performance gains over F16 precision. For example, the M2 achieves 12.21 tokens per second for Llama 2 7B with Q8_0 quantization, almost double the F16 speed of 6.72 tokens per second. With Q4_0 quantization it reaches 21.91 tokens per second, more than three times the F16 speed.

The RTX 6000 Ada 48GB also supports multiple quantization schemes, including Q4_K_M. Quantization shrinks the memory footprint, which is crucial for running larger models: Llama 3 70B in F16 needs roughly 140 GB for its weights alone, far more than the card's 48 GB, which is why we have no F16 data for that model. With Q4_K_M quantization the model fits and generates 18.36 tokens per second. On Llama 3 8B, Q4_K_M also raises generation speed from 51.97 to 130.99 tokens per second.

Table 3: Quantization Performance Comparison (tokens/second)

| Model | Apple M2 100GB 10Cores | NVIDIA RTX 6000 Ada 48GB |
|---|---|---|
| Llama 2 7B Q8_0 Generation | 12.21 | N/A |
| Llama 2 7B Q4_0 Generation | 21.91 | N/A |
| Llama 3 8B Q4_K_M Generation | N/A | 130.99 |
| Llama 3 70B Q4_K_M Generation | N/A | 18.36 |
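A rough way to see why quantization decides which models fit is to estimate weight size as parameters times bits per weight. The sketch below is an approximation under stated assumptions: it uses nominal parameter counts (7B, 70B), counts weights only (no KV cache or activations), and treats the Q4_K_M figure as an approximate average because that format mixes several bit widths.

```python
# Approximate bits per weight. Q8_0 and Q4_0 include the per-block scale;
# the Q4_K_M value is an approximate average (an assumption for this sketch).
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,
    "Q4_0": 4.5,
    "Q4_K_M": 4.85,
}


def weight_gigabytes(params_billions: float, fmt: str) -> float:
    """Estimate the size of the weights alone, in GB (10^9 bytes)."""
    return params_billions * BITS_PER_WEIGHT[fmt] / 8


def fits_in_memory(params_billions: float, fmt: str, memory_gb: float) -> bool:
    """Check whether the weights alone fit; real usage needs extra headroom."""
    return weight_gigabytes(params_billions, fmt) < memory_gb


if __name__ == "__main__":
    for fmt in BITS_PER_WEIGHT:
        size = weight_gigabytes(70, fmt)
        verdict = "fits" if fits_in_memory(70, fmt, 48) else "does not fit"
        print(f"70B {fmt}: ~{size:.1f} GB, {verdict} in 48 GB")
```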

4. Memory Bandwidth: The Crucial Factor for LLM Performance

Memory bandwidth plays a crucial role in LLM performance because generating each token requires streaming the model's weights from memory to the compute units. The Apple M2 configuration compared here provides 100 GB/s of memory bandwidth, far less than the RTX 6000 Ada 48GB's 960 GB/s.

This difference has a direct impact on inference speed, especially with large models. The M2's 100 GB/s is enough to run smaller models at usable speeds, but it caps how quickly weights can be read for each generated token.

However, the RTX 6000 Ada 48GB's higher memory bandwidth allows it to excel in handling large models. The RTX 6000 Ada 48GB's ability to move data at a faster rate between the GPU and memory is crucial for effectively processing the massive amounts of data associated with larger models.
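A common rule of thumb, offered here as an assumption rather than a measured result, is that single-user token generation reads every weight roughly once per token, so memory bandwidth divided by model size gives an approximate ceiling on tokens per second. The sketch below applies that to the M2's 100 GB/s and a nominal 14 GB for Llama 2 7B in F16 (7 billion parameters at 2 bytes each).

```python
def bandwidth_ceiling_tps(bandwidth_gb_s: float, model_gb: float) -> float:
    """Approximate upper bound on generation speed for a bandwidth-bound model."""
    return bandwidth_gb_s / model_gb


def bandwidth_efficiency(measured_tps: float, bandwidth_gb_s: float,
                         model_gb: float) -> float:
    """Fraction of the theoretical ceiling that a measured speed achieves."""
    return measured_tps / bandwidth_ceiling_tps(bandwidth_gb_s, model_gb)


if __name__ == "__main__":
    ceiling = bandwidth_ceiling_tps(100.0, 14.0)
    achieved = bandwidth_efficiency(6.72, 100.0, 14.0)
    print(f"ceiling: {ceiling:.2f} tokens/s, measured 6.72 ({achieved:.0%} of ceiling)")
```

The measured 6.72 tokens per second sits close to this ceiling, which is consistent with generation on the M2 being limited by memory bandwidth rather than compute. The same function can be applied to any other device by substituting its bandwidth and the model's size.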

5. GPU Cores: The Powerhouse Behind AI Inference

The number of GPU cores affects how much work a GPU can do in parallel. The M2 configuration compared here has a 10-core GPU, while the RTX 6000 Ada 48GB has thousands of CUDA cores plus dedicated Tensor Cores. Core counts are not directly comparable across the two architectures, but the RTX 6000 Ada 48GB's far greater parallel throughput is evident in its prompt processing speeds and in its performance with larger models.

6. Power Consumption: A Factor for Energy-Conscious Users

Power consumption is another consideration. The Apple M2 is known for its energy efficiency and draws far less power than the RTX 6000 Ada 48GB. This makes the M2 an attractive option for users who prioritize low power consumption or who have limited power and cooling capacity.

However, the RTX 6000 Ada 48GB's higher power consumption is a trade-off for its increased processing power, which is essential for handling the demanding tasks of LLM inference.
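Raw power draw matters less than energy per token, which divides the power drawn by the generation speed. The sketch below shows the calculation. The wattage values are hypothetical placeholders, not measurements from this article; replace them with readings from your own system before drawing conclusions.

```python
def joules_per_token(average_watts: float, tokens_per_second: float) -> float:
    """Energy used per generated token (watts are joules per second)."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return average_watts / tokens_per_second


if __name__ == "__main__":
    # HYPOTHETICAL placeholder power draws; measure your own hardware.
    placeholder_m2_watts = 30.0
    placeholder_rtx_watts = 300.0

    # Generation speeds (F16) quoted in this article.
    m2 = joules_per_token(placeholder_m2_watts, 6.72)
    rtx = joules_per_token(placeholder_rtx_watts, 51.97)

    print(f"M2:  {m2:.2f} J/token (placeholder wattage)")
    print(f"RTX: {rtx:.2f} J/token (placeholder wattage)")
```

A device that draws more power can still use less energy per token if it is proportionally faster, so the comparison depends on measured wattage under load.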

7. Cost: Balancing Budget with Performance

Cost is often a significant factor when choosing hardware for AI projects. The Apple M2 is generally more affordable than the RTX 6000 Ada 48GB. This makes the M2 an appealing option for users with a limited budget who are looking for a high-performance device for running smaller LLM models.

However, the RTX 6000 Ada 48GB's higher price reflects its superior performance with larger models. Its ability to handle these models effectively can be invaluable for researchers and developers working on cutting-edge AI applications.

8. Software Ecosystem: Choosing the Right Tools

The software ecosystem for running LLMs can also play a significant role in deciding between the M2 and the RTX 6000 Ada 48GB. Both devices offer various software options for running LLMs locally, including the popular llama.cpp library.

However, the RTX 6000 Ada 48GB benefits from a mature GPU-centric software ecosystem, including CUDA and cuDNN libraries, which are optimized for high performance on NVIDIA GPUs. This well-established ecosystem provides developers with a wide range of tools and libraries designed specifically for GPU-accelerated computing, making it a preferred choice for complex AI applications.
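Because llama.cpp runs on both devices, the same benchmark can be used on each. The sketch below builds a command line for llama.cpp's `llama-bench` tool and runs it if the binary is on your PATH. The model path is a hypothetical placeholder, and the prompt and generation lengths are illustrative defaults, not necessarily the settings behind the numbers in this article.

```python
import shutil
import subprocess
from typing import List, Optional


def build_bench_command(model_path: str, prompt_tokens: int = 512,
                        gen_tokens: int = 128, gpu_layers: int = 99,
                        binary: str = "llama-bench") -> List[str]:
    """Build a llama-bench invocation that measures processing and generation."""
    return [
        binary,
        "-m", model_path,          # path to a GGUF model file
        "-p", str(prompt_tokens),  # prompt length for the processing test
        "-n", str(gen_tokens),     # tokens to generate for the generation test
        "-ngl", str(gpu_layers),   # number of layers to offload to the GPU
    ]


def run_bench(model_path: str) -> Optional[str]:
    """Run the benchmark and return its output, or None if llama-bench is missing."""
    if shutil.which("llama-bench") is None:
        return None
    result = subprocess.run(build_bench_command(model_path),
                            capture_output=True, text=True, check=True)
    return result.stdout


if __name__ == "__main__":
    # Hypothetical model file name; point this at a model you have downloaded.
    print(" ".join(build_bench_command("models/llama-2-7b.Q4_0.gguf")))
```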

Performance Analysis and Practical Recommendations

The Apple M2 100GB 10Cores and NVIDIA RTX 6000 Ada 48GB offer distinct advantages and disadvantages based on the LLM model size, quantization, and computational demands.

The Apple M2 100GB 10Cores is generally more cost-effective and consumes less power, making it suitable for hobbyists and developers who prioritize budget and efficiency. It is best suited to smaller models like Llama 2 7B, particularly with quantization formats like Q8_0 and Q4_0.

However, the NVIDIA RTX 6000 Ada 48GB shines for larger models, especially those requiring significant processing power. Its mature GPU-centric software ecosystem provides a comprehensive set of tools and libraries, making it a powerful option for researchers and developers working on complex AI applications.

Ultimately, the optimal choice depends on the specific needs of your AI project. Carefully consider factors like:

- Model Size: If you're working with smaller models like Llama 2 7B, the Apple M2 100GB 10Cores is a capable, low-cost choice. For larger models like Llama 3 70B, the RTX 6000 Ada 48GB is the one with the memory and measured performance to run them.

- Computational Demands: If your AI tasks require significant processing power, the RTX 6000 Ada 48GB is a better option. However, if you prioritize energy efficiency, the M2's lower power consumption might be more appealing.

- Budget: If you're working with a limited budget, the M2 is a cost-effective option for running smaller models. However, the RTX 6000 Ada 48GB offers a greater return on investment for users working with larger models or demanding AI applications.

FAQ: A Guide for AI Enthusiasts

Q1. Can I run any LLM on either device?

While both devices can run various LLM models, their performance varies based on model size and computational demands, as discussed previously.

Q2. Is it possible to run LLMs on a Mac with an M2 chip?

Yes. Tools like llama.cpp support Apple Silicon, so an M2 Mac can run LLMs locally. In our data, the M2 generates between 6.72 and 21.91 tokens per second for Llama 2 7B, depending on quantization.

Q3. What are the limitations of running LLMs locally?

Running LLMs locally can be resource-intensive, requiring significant computing power and memory. This can limit the size of models you can run and the complexity of tasks you can handle.

Q4. What are the alternatives to these devices?

Several other devices can run LLMs locally, including other NVIDIA GPUs like the RTX 4090 and AMD GPUs like the Radeon RX 7900 XT. The choice depends on your specific needs and budget.

Q5. Are there any cloud-based solutions for running LLMs?

Cloud-based solutions like Google Colab or Amazon SageMaker provide powerful resources for running LLMs without requiring you to invest in expensive hardware. These platforms offer scalable computing power and pre-configured environments for running LLMs.

Keywords:

Apple M2, NVIDIA RTX 6000 Ada 48GB, LLM, Large Language Model, Llama 2, Llama 3, Token Speed, Generation, Processing, Quantization, Q4_K_M, Q8_0, Q4_0, Memory Bandwidth, GPU Cores, Power Consumption, AI, Deep Learning, Inference, Software Ecosystem, llama.cpp, CUDA, cuDNN, Local AI, Cloud-based AI.