8 Key Factors to Consider When Choosing Between Apple M2 (100 GB/s, 10 GPU Cores) and Apple M2 Max (400 GB/s, 30 GPU Cores) for AI

Introduction

The world of AI is exploding, and with it the demand for hardware powerful enough to run large language models (LLMs). While the cloud makes the LLM experience seem effortless, running your own local model offers more control, no network round trips, and the ability to work offline. But choosing the right hardware can be daunting.

This article compares the Apple M2 (100 GB/s memory bandwidth, 10 GPU cores) and M2 Max (400 GB/s memory bandwidth, 30 GPU cores) for running LLMs like Llama 2 7B. We'll delve into key factors that influence performance and help you decide which device best suits your needs. Think of it as a guide to choosing the right AI “muscle” for your specific LLM workload.

Performance Analysis: M2 vs. M2 Max

The Apple M2 and M2 Max sport impressive features, but their performance varies significantly when running LLMs. Let's break down the key differences:

M2 vs M2 Max: Memory Bandwidth

- M2: 100 GB/s memory bandwidth

- M2 Max: 400 GB/s memory bandwidth

Imagine your AI model as a hungry athlete needing a constant stream of nutrients (data). The M2 Max's 400 GB/s bandwidth is like a supersized smoothie, fueling the model with data four times faster than the M2's 100 GB/s. Token generation in particular tends to be limited by how quickly the model's weights can be read from memory, so the extra bandwidth translates to faster generation and smoother performance for complex LLM tasks.
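To make the bandwidth argument concrete, here is a minimal Python sketch of the usual back-of-the-envelope ceiling: if producing each token requires reading every weight from memory once, tokens/sec cannot exceed bandwidth divided by model size. The parameter count (about 6.74 billion for Llama 2 7B) and the bits-per-weight figures are assumptions chosen for illustration, not values from the benchmark.

```python
# Rough ceiling on generation speed, assuming each new token requires
# reading (approximately) every model weight from memory once.

PARAMS = 6.74e9  # assumed parameter count for Llama 2 7B

# Assumed storage cost per weight in bits (quantized formats include block scales).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def model_size_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def bandwidth_ceilings(bandwidth_gb_s: float) -> dict:
    """Upper bound on tokens/sec per format if generation is purely bandwidth-bound."""
    return {
        name: bandwidth_gb_s / model_size_gb(PARAMS, bits)
        for name, bits in BITS_PER_WEIGHT.items()
    }


for chip, bandwidth in {"M2": 100.0, "M2 Max": 400.0}.items():
    for name, ceiling in bandwidth_ceilings(bandwidth).items():
        print(f"{chip:7} {name:5} ceiling ~{ceiling:6.1f} tokens/sec")
```

Under these assumptions the M2 ceiling for F16 comes out near 7.4 tokens/sec, close to the 6.72 tokens/sec reported in the benchmark table later in this article. Measured speeds sit below the ceiling because real workloads also spend time on compute, cache reads, and other overhead.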

M2 vs M2 Max: GPU Core Count

- M2: 10 GPU cores

- M2 Max: 30 GPU cores (or 38 in a configuration used for our benchmark)

More GPU cores mean the LLM can crunch numbers more rapidly, like having a larger team of workers tackling the task. The M2 Max's 30 cores (or 38 cores in the benchmark) give it a significant performance edge over the M2's 10 cores, most visibly in prompt processing.

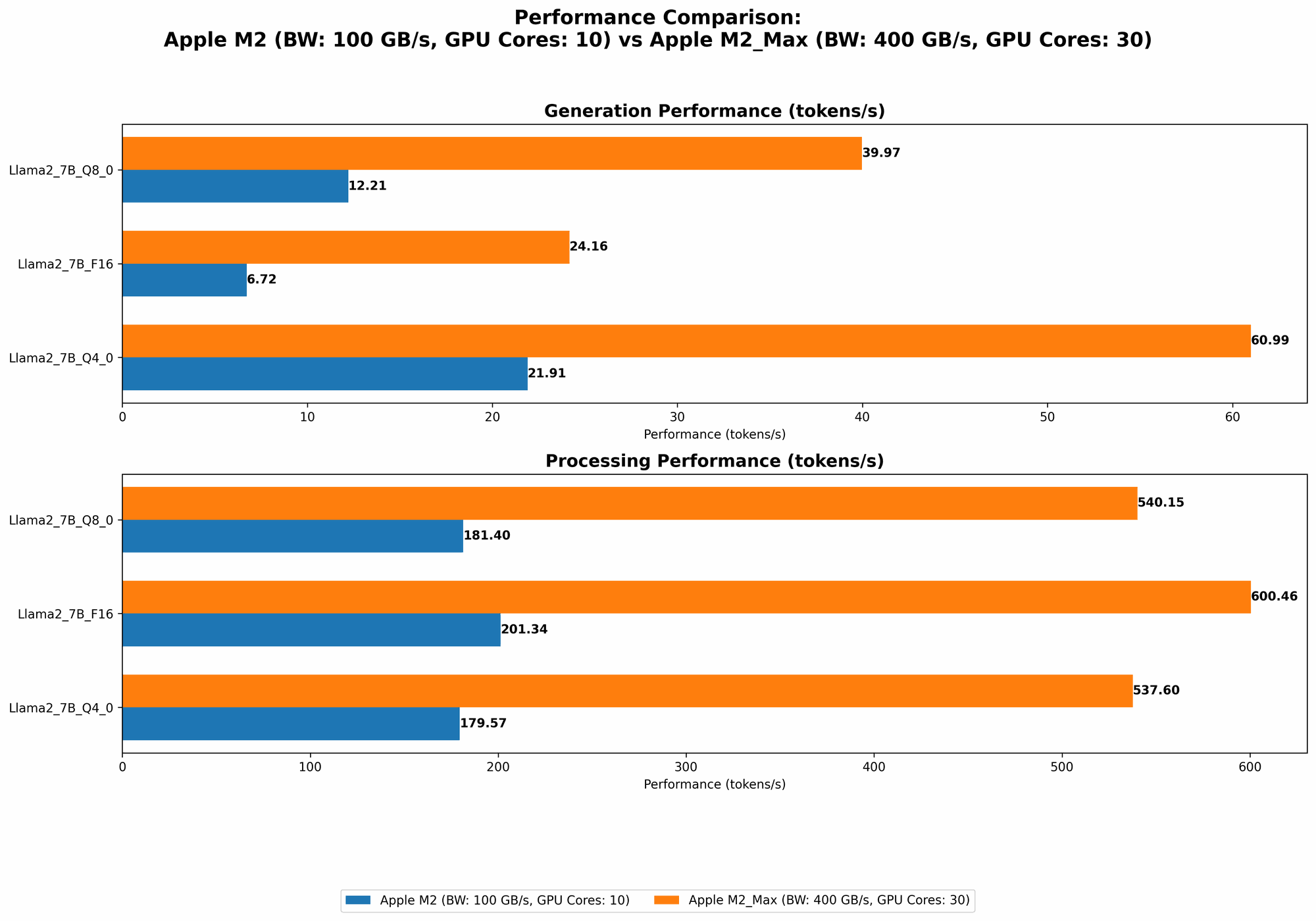

M2 vs M2 Max: Token Speed Generation (Llama 2 7B)

Here are the speeds, in tokens per second (tokens/sec), for different quantization levels of the Llama 2 7B model:

| Configuration | Llama 2 7B F16 Processing | Llama 2 7B F16 Generation | Llama 2 7B Q8_0 Processing | Llama 2 7B Q8_0 Generation | Llama 2 7B Q4_0 Processing | Llama 2 7B Q4_0 Generation |
|---|---|---|---|---|---|---|
| M2 | 201.34 | 6.72 | 181.4 | 12.21 | 179.57 | 21.91 |
| M2 Max (30 cores) | 600.46 | 24.16 | 540.15 | 39.97 | 537.6 | 60.99 |
| M2 Max (38 cores) | 755.67 | 24.65 | 677.91 | 41.83 | 671.31 | 65.95 |

- F16: 16 bits of precision, the default for many LLMs.

- Q8_0: 8 bits of precision, offering smaller model size and faster performance with some potential accuracy loss.

- Q4_0: 4 bits of precision, further reducing model size and boosting generation speed, with the possibility of more accuracy trade-offs.

Note: "Processing" is the speed at which the prompt is read, and "Generation" is the speed at which new tokens are produced.

Analysis:

- M2 Max consistently outperforms the M2 in all metrics, with a roughly 3x to 3.8x edge in token processing speed and a 2.8x to 3.7x edge in token generation speed. It's a significant leap, especially when generating lengthy responses.

- Quantization (Q8_0 and Q4_0): This technique reduces model size and speeds up token generation, but it comes with some accuracy trade-offs. For example, generation on the M2 Max (30 cores) rises from 24.16 tokens/sec at F16 to 39.97 at Q8_0 and 60.99 at Q4_0. Prompt processing does not benefit: it is slightly slower at Q8_0 and Q4_0 than at F16 on all three configurations.
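The ratios between the chips can be checked directly from the table. The short Python sketch below copies the benchmark figures and divides each M2 Max result by the matching M2 result; the names `RESULTS` and `speedup` are just illustrative.

```python
# Tokens/sec copied from the benchmark table: (processing, generation).
RESULTS = {
    "M2": {"F16": (201.34, 6.72), "Q8_0": (181.4, 12.21), "Q4_0": (179.57, 21.91)},
    "M2 Max (30 cores)": {"F16": (600.46, 24.16), "Q8_0": (540.15, 39.97), "Q4_0": (537.6, 60.99)},
    "M2 Max (38 cores)": {"F16": (755.67, 24.65), "Q8_0": (677.91, 41.83), "Q4_0": (671.31, 65.95)},
}


def speedup(chip: str, baseline: str = "M2") -> dict:
    """Return {quantization: (processing_ratio, generation_ratio)} versus the baseline."""
    ratios = {}
    for quant, (base_proc, base_gen) in RESULTS[baseline].items():
        proc, gen = RESULTS[chip][quant]
        ratios[quant] = (proc / base_proc, gen / base_gen)
    return ratios


for chip in RESULTS:
    if chip == "M2":
        continue
    for quant, (proc_ratio, gen_ratio) in speedup(chip).items():
        print(f"{chip:18} {quant:5} processing x{proc_ratio:.2f}  generation x{gen_ratio:.2f}")
```

Laying the numbers out this way also shows that the step from 30 to 38 GPU cores lifts processing speed noticeably while generation speed barely moves.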

Choosing the Right Tool for the Job

- M2:
  - Ideal for: Smaller, simpler tasks, or when budget is a major concern.
  - Example: Quick conversational AI interactions, or tasks that don't require extensive memory or complex processing.

- M2 Max:
  - Ideal for: High-performance tasks, demanding LLMs, or situations where speed is critical.
  - Example: Working with larger language models like Llama 2 13B or 70B, complex generative tasks like script writing or developing longer-form content.

Beyond Numbers: Factors to Consider

While token speed tells part of the story, other factors influence your decision. Here's a checklist to guide your hardware selection:

1. Model Complexity and Size

- Larger Models (such as Llama 2 70B): Opt for the M2 Max. Its higher bandwidth and core count are crucial for handling the massive data demands of these models, and it can be configured with far more unified memory than the M2, which a model of this size needs just to load. It's like choosing a truck over a hatchback for a heavy load.

- Smaller Models (Llama 2 7B): The M2 might be sufficient, but the M2 Max will offer a noticeably faster experience, especially for complex tasks. Think of the M2 as a sleek and efficient hybrid car, offering a balance of performance and efficiency.
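Whether a model fits in memory at all is the first thing to check. The sketch below estimates weight sizes for a few Llama 2 variants and compares them against the largest memory configurations of each chip. The bits-per-weight figures, the nominal parameter counts, the 24 GB and 96 GB limits, and the 20% overhead allowance (`OVERHEAD`, a hypothetical margin for the KV cache and the rest of the system) are all assumptions; lower-memory configurations will fit less.

```python
# Assumed storage cost per weight in bits (quantized formats include block scales).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Nominal parameter counts (assumed round figures).
PARAMS = {"Llama 2 7B": 7e9, "Llama 2 13B": 13e9, "Llama 2 70B": 70e9}

# Assumed maximum unified memory per chip, in GB.
MAX_MEMORY_GB = {"M2": 24, "M2 Max": 96}

# Hypothetical safety margin for KV cache, activations, and the OS.
OVERHEAD = 1.2


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def fits(model: str, quant: str, memory_gb: float) -> bool:
    """True if the weights plus the overhead margin fit in the given memory."""
    needed = weights_gb(PARAMS[model], BITS_PER_WEIGHT[quant]) * OVERHEAD
    return needed <= memory_gb


for model in PARAMS:
    for quant in BITS_PER_WEIGHT:
        size = weights_gb(PARAMS[model], BITS_PER_WEIGHT[quant])
        verdict = ", ".join(
            f"{chip}: {'fits' if fits(model, quant, mem) else 'too large'}"
            for chip, mem in MAX_MEMORY_GB.items()
        )
        print(f"{model:12} {quant:5} ~{size:6.1f} GB  {verdict}")
```

With these assumptions, a 4-bit Llama 2 70B needs roughly 39 GB for weights alone, which rules out the M2 regardless of speed.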

2. Your Workflow and Applications

- Generative Tasks: If you're writing code, generating creative text, or developing other generative AI projects, the M2 Max's speed is invaluable.

- Simple Inference: If you primarily use LLMs for simple tasks like answering questions or summarizing text, the M2 could be a cost-effective option.
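To see what the benchmark figures mean for a given workflow, total response time can be approximated as prompt tokens divided by processing speed plus output tokens divided by generation speed. The sketch below applies that to the Llama 2 7B Q4_0 numbers from the table; the two workloads and their token counts are hypothetical examples, and real latency will include overhead the formula ignores.

```python
# (processing, generation) tokens/sec for Llama 2 7B Q4_0, from the benchmark table.
Q4_0_SPEEDS = {
    "M2": (179.57, 21.91),
    "M2 Max (30 cores)": (537.6, 60.99),
}

# Hypothetical workloads: (prompt tokens, output tokens).
WORKLOADS = {
    "short answer": (200, 50),
    "long draft": (500, 1000),
}


def response_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Estimate wall-clock seconds to read the prompt and generate the output."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


for name, (prompt_tokens, output_tokens) in WORKLOADS.items():
    for chip, (processing_tps, generation_tps) in Q4_0_SPEEDS.items():
        seconds = response_seconds(prompt_tokens, output_tokens,
                                   processing_tps, generation_tps)
        print(f"{name:12} on {chip:18} ~{seconds:5.1f} s")
```

For short answers the gap is a couple of seconds either way; for long generations it grows to tens of seconds, which is where the M2 Max earns its price.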

3. Budget

- M2 Max: Offers higher performance but is more expensive.

- M2: More budget-friendly but might be limited for demanding tasks.

4. Power Consumption

- M2 Max: Draws more power due to its increased processing capability.

- M2: More energy-efficient, especially suitable for portable workflows.

5. Future-Proofing

- M2 Max: Offers more headroom as new and larger models are released. No chip is truly future-proof, but it is the more versatile tool for adapting to changing demands.

- M2: While capable, its performance might be challenged by future, even more demanding LLMs.

Conclusion

The choice boils down to your specific needs and budget. The M2 Max excels in speed and power, while the M2 represents a more affordable option for smaller models and simple tasks.

Remember: This is just a guide. Experiment with different models and configurations to find the optimal setup for your workflow. The world of AI is constantly evolving, so stay curious and keep exploring!

FAQ:

Q: What is quantization?

A: Quantization is a technique used to optimize LLMs. It reduces the size of the model by converting high-precision data into lower-precision representations, like converting a detailed painting into a pixelated version. This allows the model to run faster on less powerful hardware. Think of it like using a smaller file size for an image to load faster.
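As a rough illustration of the idea, the Python sketch below quantizes one block of weights to 8-bit integers with a single shared scale, then restores them. It is a simplified sketch in the spirit of block-wise 8-bit formats, not the exact layout any particular library uses; the block size of 32 and the function names are assumptions.

```python
import random

BLOCK_SIZE = 32  # weights per block (assumed for illustration)


def quantize_block(values):
    """Map floats to integer codes in [-127, 127] plus one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


random.seed(0)
weights = [random.uniform(-1.0, 1.0) for _ in range(BLOCK_SIZE)]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(f"scale = {scale:.6f}, worst-case error = {max_error:.6f}")
```

Each weight now takes one byte instead of two or four, and the rounding error per weight is at most half the scale. If the scale is stored as a 16-bit value, a block costs 34 bytes for 32 weights, or 8.5 bits per weight.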

Q: What about other devices?

A: This article focused on the Apple M2 and M2 Max, but other hardware, such as the Apple M1, NVIDIA GPUs, and ordinary CPUs, can also run LLMs. Compare the specific capabilities of each device against your needs before deciding.

Q: Are there any other benchmarks for LLMs?

A: Yes, various benchmarks are available. Searching for "LLM benchmarks" can provide a wealth of resources to test and compare different devices and LLMs.
