8 Key Factors to Consider When Choosing Between Apple M1 Pro 200GB 14 Cores and Apple M1 Max 400GB 24 Cores for AI

Introduction

The world of Large Language Models (LLMs) is exploding, and running these models locally on your own machine is becoming increasingly appealing. With the rise of powerful dedicated processors like Apple's M1 Pro and M1 Max, it's possible to experience the magic of LLMs without needing to rely on cloud services. But choosing the right hardware for your AI needs can be tricky.

This article compares the Apple M1 Pro 200GB 14 Cores and the Apple M1 Max 400GB 24 Cores on local LLM inference, focusing on Llama 2 and Llama 3. In these labels, "200GB" and "400GB" refer to memory bandwidth (200 GB/s and 400 GB/s), and the core counts are GPU cores. We'll analyze token processing and generation speeds, explore the impact of different quantization levels, and discuss the pros and cons of each chip for various AI workloads. By the end, you'll have a clear understanding of which device is best suited for your specific AI needs.

Performance Analysis

Let's dive into the heart of the matter - how do these chips stack up in terms of running LLMs? We'll be looking at the following key metrics:

- Token Processing Speed: How fast can each chip process and generate tokens (sub-word units of text) for different LLMs?

- Quantization Levels: The impact of different quantization levels (F16, Q8_0, Q4_0) on performance.

- Model Size: How well each chip handles different model sizes, from 7B to 70B parameters.

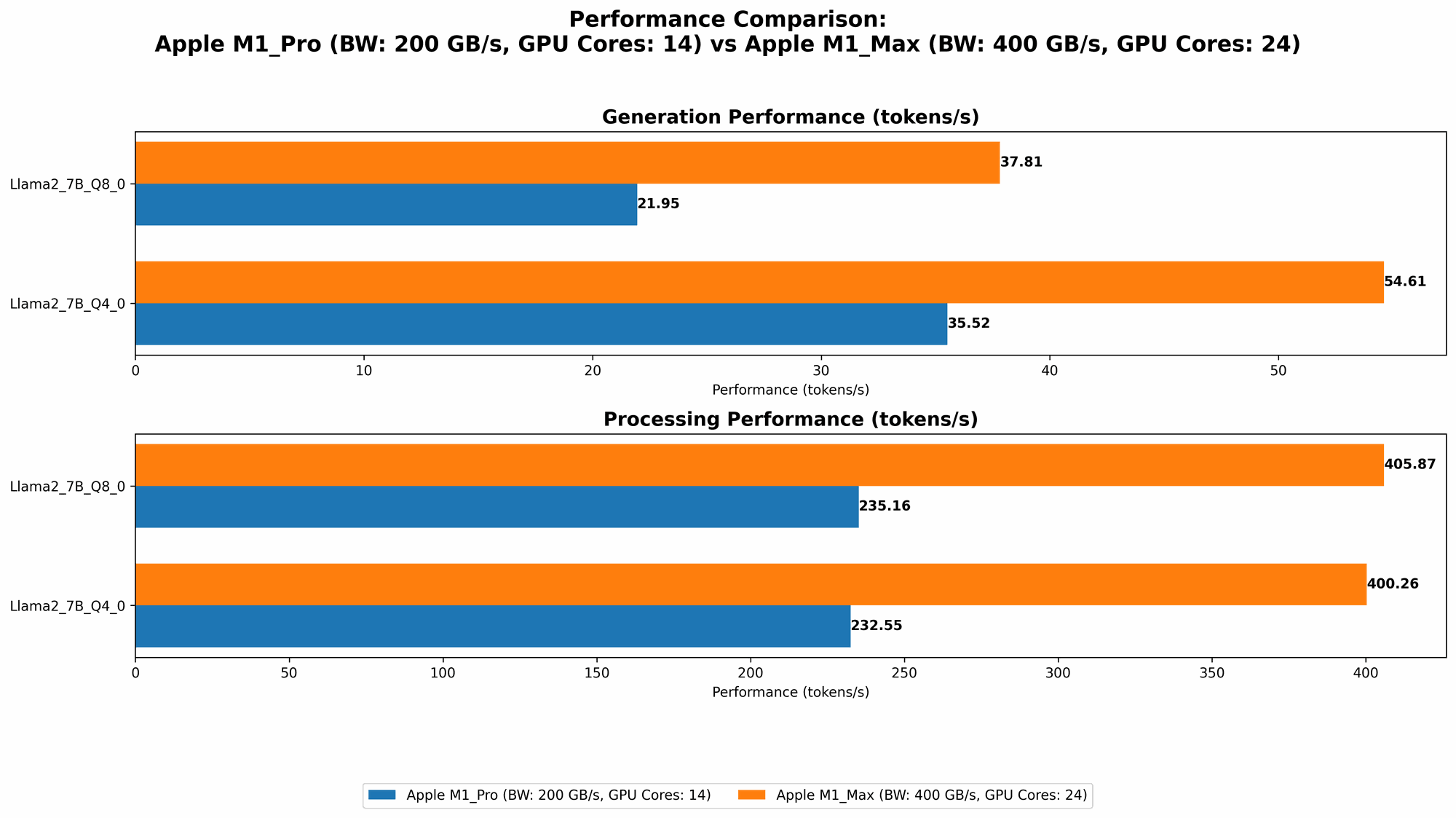

Comparison of Apple M1 Pro and M1 Max for Llama 2 7B

The first model we'll examine is Llama 2 7B, a popular choice for its balance of performance and size.

| Benchmark (tokens/second) | Apple M1 Pro (200 GB/s, 14 GPU cores) | Apple M1 Max (400 GB/s, 24 GPU cores) |
|---|---|---|
| Llama 2 7B F16 processing | Not available | 453.03 |
| Llama 2 7B F16 generation | Not available | 22.55 |
| Llama 2 7B Q8_0 processing | 235.16 | 405.87 |
| Llama 2 7B Q8_0 generation | 21.95 | 37.81 |
| Llama 2 7B Q4_0 processing | 232.55 | 400.26 |
| Llama 2 7B Q4_0 generation | 35.52 | 54.61 |

Apple M1 Max Dominates in Llama 2 7B Performance

As the table shows, the M1 Max is faster than the M1 Pro on Llama 2 7B wherever both chips were measured. At Q8_0 and Q4_0 it processes prompts about 1.7 times faster (405.87 vs. 235.16 and 400.26 vs. 232.55 tokens/second), and it generates text about 1.7 times faster at Q8_0 and about 1.5 times faster at Q4_0. No F16 figures are available for the M1 Pro, so that level cannot be compared. In practice, this means faster responses from your LLM, especially for demanding tasks like long-form text generation.
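To make the size of the gap concrete, the short sketch below divides each M1 Max figure by the matching M1 Pro figure. The numbers are copied from the Llama 2 7B table; the names `LLAMA2_7B`, `speedup`, and `speedup_table` are made up for this illustration.

```python
# Throughput figures (tokens/second) copied from the Llama 2 7B table.
# Keys are (quantization, phase); values are (M1 Pro, M1 Max).
# F16 is omitted because no M1 Pro figure is available for it.
LLAMA2_7B = {
    ("Q8_0", "processing"): (235.16, 405.87),
    ("Q8_0", "generation"): (21.95, 37.81),
    ("Q4_0", "processing"): (232.55, 400.26),
    ("Q4_0", "generation"): (35.52, 54.61),
}


def speedup(pro_tps: float, max_tps: float) -> float:
    """Return how many times faster the M1 Max figure is than the M1 Pro figure."""
    return max_tps / pro_tps


def speedup_table(results: dict) -> dict:
    """Map each (quantization, phase) pair to its M1 Max / M1 Pro ratio."""
    return {key: round(speedup(pro, mx), 2) for key, (pro, mx) in results.items()}


if __name__ == "__main__":
    for (quant, phase), ratio in speedup_table(LLAMA2_7B).items():
        print(f"{quant:5s} {phase:11s} {ratio:.2f}x")
```

The ratios cluster around 1.7x, with Q4_0 generation as the one case where the gap narrows to roughly 1.5x.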

The Impact of Quantization

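Quantization reduces the memory footprint of an LLM by storing its weights at lower precision. On the M1 Max, lower-bit formats sharply increase generation speed (22.55 tokens/second at F16, 37.81 at Q8_0, and 54.61 at Q4_0), while prompt processing speed changes little and is in fact highest at F16. The trade-off is a possible slight drop in accuracy.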
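The idea of trading precision for size can be sketched in a few lines. The example below is a simplified, symmetric "absmax" quantizer applied to one block of 32 values. It is only an illustration of the principle and is not the actual storage layout that llama.cpp uses for Q8_0 or Q4_0; the function names and the sample weights are invented for the example.

```python
import math


def quantize_block(values, bits):
    """Quantize one block of floats to signed integers sharing a single scale.

    Returns (scale, codes), where every code lies in [-qmax, qmax]
    and qmax = 2**(bits - 1) - 1 (127 for 8 bits, 7 for 4 bits).
    """
    qmax = 2 ** (bits - 1) - 1
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes and the block scale."""
    return [scale * c for c in codes]


def max_abs_error(values, bits):
    """Largest absolute difference between original and round-tripped values."""
    scale, codes = quantize_block(values, bits)
    restored = dequantize_block(scale, codes)
    return max(abs(a - b) for a, b in zip(values, restored))


# Hypothetical "weights": 32 deterministic values standing in for one block.
WEIGHTS = [0.1 * math.sin(i) for i in range(32)]

if __name__ == "__main__":
    for bits in (8, 4):
        print(f"{bits}-bit max error: {max_abs_error(WEIGHTS, bits):.6f}")
```

With fewer bits, each block takes less memory but the round-trip error grows, which is the accuracy cost mentioned above.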

The M1 Max outperforms the M1 Pro at both quantization levels where data exists for both chips. Its prompt processing advantage is nearly identical at Q8_0 and Q4_0 (about 1.7x), which is close to the ratio of GPU core counts (24 to 14). Its generation advantage narrows from about 1.7x at Q8_0 to about 1.5x at Q4_0, so in this data the M1 Pro gains relatively more from the move to 4-bit weights.

Comparison of Apple M1 Pro and M1 Max for Llama 3 Models

Now let's move on to Llama 3, a newer and more powerful LLM family. We will focus on Llama 3 8B and Llama 3 70B models.

Important: We do not have Llama 3 data for the M1 Pro, so the table below reports M1 Max results only.

| Benchmark (tokens/second) | Apple M1 Pro (200 GB/s, 14 GPU cores) | Apple M1 Max (400 GB/s, 24 GPU cores) |
|---|---|---|
| Llama 3 8B Q4_K_M processing | Not available | 355.45 |
| Llama 3 8B Q4_K_M generation | Not available | 34.49 |
| Llama 3 8B F16 processing | Not available | 418.77 |
| Llama 3 8B F16 generation | Not available | 18.43 |
| Llama 3 70B Q4_K_M processing | Not available | 33.01 |
| Llama 3 70B Q4_K_M generation | Not available | 4.09 |
| Llama 3 70B F16 processing | Not available | Not available |
| Llama 3 70B F16 generation | Not available | Not available |

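M1 Max Shines with Llama 3 8B, but 70B Performance Is Limited

On Llama 3 8B, the M1 Max reaches 355.45 tokens/second for processing and 34.49 for generation at Q4_K_M, and 418.77 and 18.43 at F16. Because no M1 Pro figures are available for Llama 3, a direct comparison between the two chips is not possible here. With the larger Llama 3 70B model at Q4_K_M, the M1 Max drops to 33.01 tokens/second for processing and 4.09 for generation, roughly 8 to 11 times slower than the 8B model. Much of that drop is expected from the model being almost nine times larger; memory capacity may also play a role, since no F16 results are available for 70B.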

Performance Limitations with Large Models

This data highlights a practical limit of the M1 Max: it runs smaller models like Llama 2 7B and Llama 3 8B at comfortable speeds, but at about 4 tokens per second, Llama 3 70B is slow for interactive use. Larger models need proportionally more memory and more memory traffic per generated token, and the missing 70B F16 result is likely because that configuration did not fit in the machine's memory.
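A rough way to see the memory pressure is to estimate the size of the weights alone. The sketch below assumes nominal parameter counts (7B, 8B, 70B) and approximate bits-per-weight figures: 16 for F16, 8.5 for Q8_0, 4.5 for Q4_0, and about 4.85 for Q4_K_M (the last one is an approximation, since that format mixes block types). The 64 GB and 32 GB memory sizes and the 75% usable-memory headroom are assumptions for illustration; the article's data does not state how much memory the benchmarked machines had.

```python
# Approximate storage cost per weight, in bits. The Q4_K_M value is an
# assumed average; the others follow from the block layouts of each format.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.85}


def weight_size_gb(params_billion: float, quant: str) -> float:
    """Estimate the size of the model weights in GB (10**9 bytes).

    Ignores the KV cache, activations, and runtime overhead.
    """
    return params_billion * BITS_PER_WEIGHT[quant] / 8


def fits(params_billion: float, quant: str, memory_gb: float,
         headroom: float = 0.75) -> bool:
    """Return True if the weights fit in the assumed usable share of memory."""
    return weight_size_gb(params_billion, quant) <= memory_gb * headroom


if __name__ == "__main__":
    for params, quant in [(8, "F16"), (8, "Q4_K_M"), (70, "Q4_K_M"), (70, "F16")]:
        size = weight_size_gb(params, quant)
        print(f"{params}B {quant:7s} ~{size:6.1f} GB  "
              f"fits in 64 GB: {fits(params, quant, 64)}  "
              f"fits in 32 GB: {fits(params, quant, 32)}")
```

Under these assumptions, a 70B model at F16 needs about 140 GB for weights alone, far more than any M1 Max configuration offers, while the Q4_K_M version comes in at a little over 40 GB.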

Apple M1 Token Generation Speed: A Deep Dive

Let's take a closer look at the token generation speeds. Token generation is the process of having the LLM generate text, which is where the real fun starts!

M1 Max's Speed Advantage

The M1 Max outperforms the M1 Pro in token generation wherever both chips were measured. For example, with Llama 2 7B at Q4_0, the M1 Max reaches 54.61 tokens per second, compared to 35.52 tokens per second on the M1 Pro, which makes it about 1.5 times faster. This means that the M1 Max can produce text noticeably faster, making it the better fit for applications that require rapid text generation.
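One common back-of-the-envelope model for generation speed treats it as limited by memory bandwidth: producing each token requires reading roughly all the weights once, so tokens per second cannot exceed bandwidth divided by weight size. The sketch below applies that model under several assumptions: that the "200GB" and "400GB" labels mean 200 GB/s and 400 GB/s, that Llama 2 7B has a nominal 7 billion parameters, and that Q4_0 costs about 4.5 bits per weight. It is a rough ceiling, not a prediction.

```python
def generation_ceiling(bandwidth_gb_s: float, params_billion: float,
                       bits_per_weight: float) -> float:
    """Upper bound on tokens/second if every token reads all weights once."""
    weights_gb = params_billion * bits_per_weight / 8
    return bandwidth_gb_s / weights_gb


def efficiency(measured_tps: float, ceiling_tps: float) -> float:
    """Fraction of the bandwidth-bound ceiling that was actually measured."""
    return measured_tps / ceiling_tps


if __name__ == "__main__":
    # Measured Q4_0 generation speeds come from the Llama 2 7B table.
    for name, bandwidth, measured in [("M1 Pro", 200, 35.52), ("M1 Max", 400, 54.61)]:
        ceiling = generation_ceiling(bandwidth, 7, 4.5)
        print(f"{name}: ceiling ~{ceiling:.1f} tok/s, measured {measured} tok/s, "
              f"{efficiency(measured, ceiling):.0%} of ceiling")
```

Under this model, doubling the bandwidth doubles the ceiling, yet the measured gap at Q4_0 is only about 1.5x, which suggests that factors other than raw bandwidth also limit the M1 Max in this benchmark.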

Why is Generation Speed Important?

Imagine trying to write a blog post using your LLM. A faster token generation speed means smoother, more responsive interaction with your AI assistant. You can get your ideas flowing effortlessly, without frustrating delays.

Conclusion

The Apple M1 Max emerges as the clear winner for running LLMs locally, especially when it comes to smaller models like Llama 2 7B and Llama 3 8B. Its additional GPU cores and higher memory bandwidth allow it to handle these tasks with impressive speed. However, the M1 Max slows to about 4 tokens per second on Llama 3 70B, highlighting the importance of considering your specific model size needs.

If you are working with smaller LLMs, the M1 Max offers a significant performance advantage. However, for larger models, you might need to explore alternative solutions or even consider a powerful desktop GPU. Ultimately, the best choice depends on your LLM workloads and the specific needs of your AI projects.

FAQ

What is Quantization and How Does It Affect Performance?

Quantization is a technique that reduces the precision of the numbers used by LLMs. It's like measuring with a coarser ruler: you lose some detail, but the model takes up less memory and is less demanding on your hardware. In the benchmarks above, lower-bit formats (like Q4_0) give the fastest token generation, sometimes at the cost of a slight decline in accuracy, while prompt processing speed stays roughly the same.

What are Token Processing and Generation?

Think of tokens as the building blocks of text. Token processing is the model reading and understanding the blocks in your prompt, while token generation is creating new blocks, one at a time, to form a response. Higher processing speed shortens the wait before the first word appears, and faster generation means the rest of the response arrives more quickly.
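The two speeds combine into a simple estimate of how long a full response takes: time to read the prompt plus time to write the answer. The sketch below uses the Llama 2 7B Q4_0 figures from the comparison table; the 512-token prompt and 256-token answer are hypothetical, and the model assumes throughput stays constant regardless of context length, which real systems only approximate.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimate seconds to process a prompt and then generate a reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# (processing, generation) tokens/second for Llama 2 7B at Q4_0.
CHIPS = {
    "M1 Pro": (232.55, 35.52),
    "M1 Max": (400.26, 54.61),
}

if __name__ == "__main__":
    for name, (pp, tg) in CHIPS.items():
        total = response_time_s(512, 256, pp, tg)
        print(f"{name}: ~{total:.1f} s for a 512-token prompt and 256-token reply")
```

For chat-style use with short prompts, generation speed dominates the total; processing speed matters more when the prompt is long, such as when summarizing a document.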

Can I Run LLMs on my M1 Mac?

Yes, you can! There are several tools and frameworks designed for running LLMs on Apple Silicon devices like the M1 Pro and M1 Max. Some popular options include:

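- llama.cpp: A C/C++ inference engine for LLMs that runs on various platforms, including Apple Silicon.

- GPTQ: A post-training quantization method for LLMs, rather than a runtime in itself.

- DeepSpeed: A library for efficient training and inference of LLMs. It is aimed primarily at NVIDIA GPUs, so check platform support before relying on it on a Mac.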

Is it Worth Investing in an M1 Mac for AI?

If you are serious about local AI development and want to unlock the power of LLMs on your own machine, then an M1 Mac, especially the M1 Max with its increased memory capacity, can be a valuable investment. However, make sure to consider the specific LLMs you'll be working with and assess the memory and processing capabilities of each M1 variant to find the best match for your needs.

Can the M1 Max Handle Larger Language Models?

While the M1 Max performs well with smaller models like Llama 2 7B and Llama 3 8B, it slows to about 4 tokens per second on Llama 3 70B at Q4_K_M, and no F16 result is available for that model, most likely because of memory limits. To run models in the 70B range at comfortable speeds, you might need a more powerful desktop GPU or a cloud-based solution.

Keywords

Apple Silicon, M1 Pro, M1 Max, LLMs, AI, Machine Learning, Llama 2, Llama 3, Token Processing, Token Generation, Quantization, F16, Q8_0, Q4_0, Performance, Comparison, GPU, Memory, Deep Learning, Inference, Cloud Computing, Development.