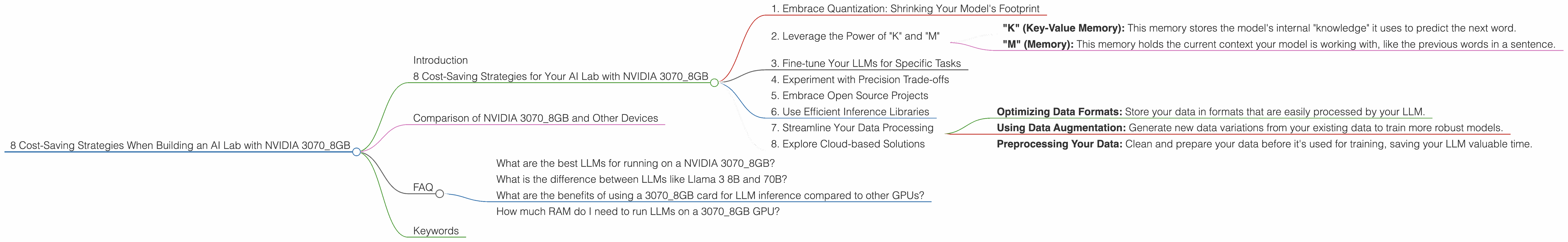

8 Cost-Saving Strategies When Building an AI Lab with an NVIDIA 3070 8GB

Introduction

Building your own AI lab can be thrilling and rewarding, but it can also be expensive. If you're on a budget, you might wonder how to make the most of your hardware investment. This article focuses on getting the most out of a single NVIDIA 3070 8GB GPU. We'll explore strategies for optimizing your setup and running large language models (LLMs), the brains behind exciting new AI applications.

8 Cost-Saving Strategies for Your AI Lab with an NVIDIA 3070 8GB

1. Embrace Quantization: Shrinking Your Model's Footprint

Imagine you have a gigantic book filled with knowledge. Now, imagine squeezing that book down to a smaller size without losing too much information. That's what quantization does for LLMs! It's a clever technique for shrinking the model's size, enabling you to run it on more modest hardware.

How it works: LLMs store their knowledge as numbers called weights. Quantization stores each weight with fewer bits, for example 4-bit values instead of 16-bit values, at the cost of a small rounding error. Since each weight takes up less space, your model becomes more compact.
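To make the idea concrete, here is a minimal sketch of one of the simplest quantization schemes: symmetric "absmax" rounding of a small block of weights to 4-bit integers. The function names and the sample weights are made up for illustration, and real formats such as those used by llama.cpp are more elaborate than this.

```python
def quantize_block(weights, bits=4):
    """Symmetric absmax quantization of one block of floats to signed integers."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for 4-bit values
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0    # one shared scale per block
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Illustrative weights (not taken from any real model).
block = [0.12, -0.53, 0.98, -0.05, 0.31, -0.88, 0.45, 0.02]
codes, scale = quantize_block(block)
restored = dequantize_block(codes, scale)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print(codes)
print(f"scale={scale:.4f}, max round-trip error={max_error:.4f}")
```

Each weight is now a small integer plus a scale shared by the whole block, which is where the space saving comes from. The round-trip error is the price paid for it.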

Benefits for your 3070 8GB: Quantization lets you run larger models within your GPU's memory limits. An 8-billion-parameter model needs about 16 GB for its weights at 16 bits each, twice what the card has, but only about 4 to 5 GB at 4 bits. Think of it like this: a book that was too big for your shelf now fits with room to spare!

Example: The Llama 3 8B model in 4-bit quantization (Q4) can generate 70.94 tokens/second on an NVIDIA 3070 8GB.
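The memory arithmetic behind quantization can be sketched in a few lines. This is a rough estimate under stated assumptions: "8B" is treated as a round 8 billion parameters, only the weights are counted, and the extra memory needed for quantization scales, the context, and activations is ignored.

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Approximate memory for the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 8e9   # assumption: "8B" taken as a round 8 billion parameters
VRAM_GB = 8.0    # NVIDIA 3070 8GB

for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    size = weight_memory_gb(N_PARAMS, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{label}: {size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB VRAM")
```

Because the estimate leaves out everything except the weights, treat "fits" as a necessary condition rather than a guarantee.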

2. Understand the "K" and "M" in Quantization Names

When you download a quantized model for llama.cpp, the file name usually carries a label such as Q4_K_M. The letters are not memory settings you can turn up or down. They describe how the model was quantized.

Benefits for your 3070 8GB: Knowing what the label means helps you pick a variant that fits in 8 GB of VRAM while giving up as little quality as possible.

Let's break it down:

- "K" (k-quant): The model uses one of llama.cpp's "k-quant" formats, which quantize weights in small blocks, each with its own scaling information.

- "M" (medium): The size of the variant. k-quants typically come in S (small), M (medium), and L (large) flavors, which trade a slightly bigger file for slightly better quality.

Example: The Llama 3 8B model in Q4_K_M can achieve a prompt processing speed of 2283.62 tokens/second on an NVIDIA 3070 8GB. Prompt processing (reading your input) is much faster than token generation (the 70.94 tokens/second figure above) because all prompt tokens can be processed in parallel, while generated tokens are produced one at a time.
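The two throughput figures quoted in this article for Llama 3 8B in Q4 can be combined into a rough response-time estimate. The sketch below assumes both speeds stay constant regardless of context length, which is a simplification, and the request size (1000 prompt tokens, 200 generated tokens) is a made-up example.

```python
PROMPT_TOKENS_PER_S = 2283.62   # processing speed quoted in this article
GEN_TOKENS_PER_S = 70.94        # generation speed quoted in this article


def estimate_seconds(prompt_tokens, generated_tokens):
    """Return (time to read the prompt, time to generate the reply) in seconds."""
    prompt_time = prompt_tokens / PROMPT_TOKENS_PER_S
    generation_time = generated_tokens / GEN_TOKENS_PER_S
    return prompt_time, generation_time


# Hypothetical request: 1000 tokens of input, 200 tokens of output.
prompt_time, generation_time = estimate_seconds(1000, 200)
print(f"prompt: {prompt_time:.2f} s, generation: {generation_time:.2f} s")
```

For a request like this one, generation dominates the wait, so the generation speed is usually the number to watch for interactive use.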

3. Fine-tune Your LLMs for Specific Tasks

Imagine training your dog to fetch. You wouldn't ask it to run marathons, would you? Similarly, fine-tuning LLMs for specific tasks can significantly enhance their performance.

How it works: Fine-tuning involves taking a pre-trained LLM and adapting it to a specific task. Think of it like giving your LLM a crash course on its new job.

Benefits for your 3070 8GB: Fine-tuning lets you specialize a model for one job, such as a particular style of writing, translation, or code generation, so it performs that job more accurately. A well-tuned small model that fits on your GPU can be a practical alternative to a larger general-purpose one that does not.

Example: You could fine-tune a pre-trained Llama 3 8B for summarizing text, writing code, or generating creative essays.
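This article does not prescribe a fine-tuning method. One widely used family of approaches for memory-constrained GPUs trains small low-rank "adapter" matrices instead of updating every weight. The sketch below only counts trainable values for a single weight matrix under that assumption. The matrix size and rank are illustrative choices, not recommendations.

```python
def full_matrix_params(d_out, d_in):
    """Trainable values when the whole weight matrix is updated."""
    return d_out * d_in


def low_rank_adapter_params(d_out, d_in, rank):
    """Trainable values for two small matrices: (d_out x rank) and (rank x d_in)."""
    return d_out * rank + rank * d_in


d = 4096    # illustrative square weight matrix size (assumption)
rank = 8    # illustrative adapter rank (assumption)

full = full_matrix_params(d, d)
adapter = low_rank_adapter_params(d, d, rank)
print(f"full: {full:,} trainable values, adapter: {adapter:,} ({adapter / full:.2%})")
```

Fewer trainable values means less memory for gradients and optimizer state, which is what makes this kind of fine-tuning plausible on an 8 GB card.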

4. Experiment with Precision Trade-offs

Precision in LLMs refers to the level of detail used to represent numbers in the model. High precision uses more bits, which can lead to more accurate results but requires more memory. Lower precision uses fewer bits, requiring less memory and potentially increasing speed.

How it works: You can experiment with different levels of precision, like 16-bit floating-point (F16) or 4-bit quantization (Q4).

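Benefits for your 3070 8GB: Lower precision reduces your model's memory footprint and can also speed up processing, because less data has to move through the GPU.

Example: The Llama 3 8B model in F16 needs about 16 GB for its weights alone (roughly 8 billion parameters at 2 bytes each). That is about four times the Q4 version and more than the 8 GB of VRAM on the 3070, in exchange for results closest to the original model.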

Note: Data for Llama 3 8B in F16 is currently unavailable.
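The accuracy side of the trade-off can be illustrated by rounding a few numbers to each precision and measuring the error. This is a toy comparison: the sample values are made up, the 4-bit grid is a simple symmetric one covering [-1, 1], and it says nothing about how much end-to-end model quality changes.

```python
import struct


def to_float16_and_back(x):
    """Round-trip a Python float through IEEE 754 half precision (F16)."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_4bit_and_back(x, absmax=1.0):
    """Round-trip through a 4-bit symmetric grid covering [-absmax, absmax]."""
    scale = absmax / 7
    code = max(-7, min(7, round(x / scale)))
    return code * scale


# Illustrative values (not taken from any real model).
weights = [0.1, -0.333, 0.7071, 0.9]
for w in weights:
    e16 = abs(w - to_float16_and_back(w))
    e4 = abs(w - to_4bit_and_back(w))
    print(f"{w:+.4f}  F16 error={e16:.6f}  4-bit error={e4:.6f}")
```

The F16 errors are far smaller per value, which is the precision you give up in exchange for a model that fits in memory.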

5. Embrace Open Source Projects

The world of open-source AI is bursting with innovation! Organizations and individuals are constantly developing cutting-edge tools and libraries that you can use for your own projects.

Benefits for your 3070 8GB: Open-source projects often provide efficient, optimized implementations of LLM inference, helping you get the most out of your GPU.

Example: The llama.cpp project (https://github.com/ggerganov/llama.cpp) is a popular open-source implementation for running LLMs on CPUs and GPUs. You can use it to run inference with the Llama 3 8B model on your NVIDIA 3070 8GB.
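As a rough sketch of what running a model with llama.cpp looks like, the snippet below assembles a command line for its `llama-cli` tool and runs it only if both the tool and the model file are present. The binary name and flags reflect recent llama.cpp releases and may differ in your version, and the model file name is a hypothetical placeholder.

```python
import os
import shutil
import subprocess


def build_llama_cli_command(model_path, prompt, n_predict=128, n_gpu_layers=99):
    """Assemble an argument list for llama.cpp's command-line tool."""
    return [
        "llama-cli",
        "-m", model_path,            # path to a GGUF model file
        "-p", prompt,                # prompt text
        "-n", str(n_predict),        # number of tokens to generate
        "-ngl", str(n_gpu_layers),   # layers to offload to the GPU
    ]


# Hypothetical file name; substitute the model file you actually downloaded.
command = build_llama_cli_command(
    "llama-3-8b-q4.gguf", "Explain quantization in one sentence."
)
print(" ".join(command))

# Only launch the tool when it is installed and the model file exists.
if shutil.which(command[0]) and os.path.exists(command[2]):
    subprocess.run(command, check=False)
```

A large value for the GPU layer count asks llama.cpp to offload as many layers as the model has. If the model does not fit in 8 GB of VRAM, lowering it keeps the remaining layers on the CPU.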

6. Use Efficient Inference Libraries

Imagine having a specialized tool to speed up your work. Inference libraries are like those tools for your LLM tasks. They provide optimized code for tasks like processing data, decoding text, or generating predictions.

Benefits for your 3070 8GB: These libraries can significantly boost your model's performance by letting your GPU work more efficiently.

Example: NVIDIA's CUDA toolkit provides libraries for GPU acceleration. cuBLAS, which ships with the toolkit, provides fast linear algebra operations, while cuDNN, a separate NVIDIA library, provides optimized building blocks for deep learning.

7. Streamline Your Data Processing

Your LLM is only as good as the data it learns from. Optimizing your data processing pipeline can contribute significantly to your AI lab's efficiency.

How it works: Streamlining data processing means making it faster and more efficient. You can achieve it by:

- Optimizing Data Formats: Store your data in formats that are easily processed by your LLM.

- Using Data Augmentation: Generate new data variations from your existing data to train more robust models.

- Preprocessing Your Data: Clean and prepare your data before it's used for training, saving your LLM valuable time.
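The preprocessing and data-format steps above can be sketched with nothing but the standard library. This minimal example normalizes whitespace, drops empty and duplicate records, and serializes the result as JSON Lines (one JSON object per line). The `"text"` field name and the sample records are assumptions made for illustration.

```python
import json


def clean_records(raw_texts):
    """Normalize whitespace, drop empty strings, and remove exact duplicates."""
    seen = set()
    cleaned = []
    for text in raw_texts:
        normalized = " ".join(text.split())
        if normalized and normalized not in seen:
            seen.add(normalized)
            cleaned.append(normalized)
    return cleaned


def to_jsonl(texts):
    """Serialize one JSON object per line (the JSON Lines convention)."""
    return "\n".join(json.dumps({"text": t}) for t in texts)


# Made-up sample records with stray whitespace, a duplicate, and an empty entry.
raw = ["  Hello   world ", "Hello world", "", "Second\texample\n"]
cleaned = clean_records(raw)
print(to_jsonl(cleaned))
```

Doing this once, ahead of time, means the cleaned file can be reused for every training run instead of repeating the work each time.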

Benefits for your 3070 8GB: Efficient data processing keeps your GPU from waiting on data and leaves its limited resources free for the demanding work of model training and inference.

8. Explore Cloud-based Solutions

Sometimes, even with a capable GPU like the 3070 8GB, you might need more resources, especially for larger LLMs. Cloud-based solutions offer access to vast computing power on demand.

Benefits for your 3070 8GB: Cloud services can provide on-demand access to specialized hardware, such as more powerful GPUs or TPUs, for the occasional training or inference job that will not fit on your card, while everyday work stays on hardware you already own.

Example: Amazon SageMaker, Google Cloud Vertex AI, and Azure Machine Learning are popular cloud platforms for AI development and model deployment.

Comparison of NVIDIA 3070 8GB and Other Devices

Unfortunately, we can't compare the NVIDIA 3070 8GB to other devices in this article because the available data covers only the 3070 8GB. However, you can find comparisons across devices in the open-source projects mentioned earlier, which can help you decide whether a 3070 8GB meets your needs.

FAQ

What are the best LLMs for running on an NVIDIA 3070 8GB?

The best LLM for you depends on your specific needs and the tasks you want to accomplish. The Llama 3 8B model is a great starting point for a 3070 8GB GPU. You can also explore other openly available models, such as the smaller members of the GPT and BLOOM families, as long as the quantized weights fit in the card's 8 GB of VRAM.

What is the difference between LLMs like Llama 3 8B and 70B?

The main difference lies in the number of parameters, which are like the model's "knowledge" bits. Larger models like the Llama 3 70B have a greater capacity to learn and generate complex responses, but they require more memory and computational power. Smaller models like the Llama 3 8B are more efficient, easier to run, and can be a good starting point for exploring LLMs.

What are the benefits of using a 3070 8GB card for LLM inference compared to other GPUs?

The NVIDIA 3070 8GB is a good starting point for LLM inference because of its reasonable price and performance. It is not the most powerful GPU available, and its 8 GB of VRAM limits model size, but it strikes a healthy balance between affordability and capability.

How much RAM do I need to run LLMs on a 3070 8GB GPU?

System RAM requirements depend on the specific LLM and its configuration. Generally, at least 16GB of RAM is recommended for loading model files and managing your data smoothly.

Keywords

NVIDIA 3070_8GB, AI Lab, LLMs, Large Language Models, Llama 3 8B, Quantization, K, M, Memory, Fine-tuning, Precision, Open Source, Inference Libraries, Data Processing, Cloud-based Solutions, GPU, Tokens/Second, Token Generation Speed, Token Processing Speed