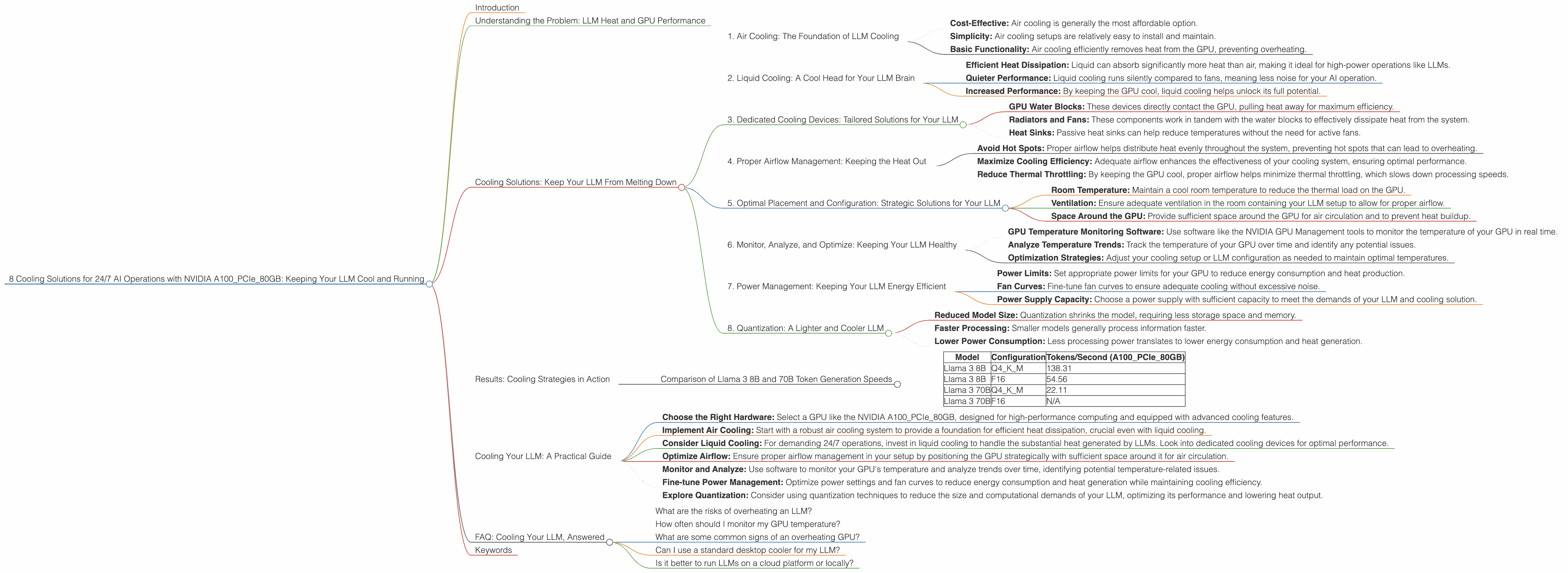

8 Cooling Solutions for 24/7 AI Operations with NVIDIA A100 PCIe 80GB

Introduction

The world of Large Language Models (LLMs) is heating up, and we're not talking about AI-generated responses. We're talking about the literal heat produced when these models run on high-performance hardware. As LLMs become larger and more demanding, keeping the hardware cool and running smoothly is crucial.

This article covers cooling solutions for running a 24/7 AI operation on the NVIDIA A100 PCIe 80GB GPU, a powerhouse in the world of deep learning. We'll look at benchmark data for several LLM configurations and how configuration choices bear on heat, along with practical strategies to keep your AI operation running cool and efficiently.

Understanding the Problem: LLM Heat and GPU Performance

Imagine a room full of super-powered computers crunching billions of words, concepts, and ideas. That's essentially what's happening when a large language model runs. All that processing power generates a significant amount of heat, which can negatively impact GPU performance.

Think of it like a race car engine: it needs to be kept at the right temperature to perform optimally. If it gets too hot, it starts to sputter and potentially even shut down.

Cooling Solutions: Keep Your LLM From Melting Down

1. Air Cooling: The Foundation of LLM Cooling

Air cooling is the most common approach for cooling GPUs, and it's the foundation for all other cooling strategies. Here's why:

- Cost-Effective: Air cooling is generally the most affordable option.

- Simplicity: Air cooling setups are relatively easy to install and maintain.

- Basic Functionality: Air cooling efficiently removes heat from the GPU, preventing overheating.

However, air cooling alone might not be enough for 24/7 LLM operations. Sustained LLM workloads can keep the GPU near its power limit for hours at a time, which may call for a more robust cooling solution.

2. Liquid Cooling: A Cool Head for Your LLM Brain

Liquid cooling takes things to the next level. Instead of relying solely on air, it uses a circulating liquid to absorb and dissipate heat. Think of it like a miniature radiator in your computer.

- Efficient Heat Dissipation: Per unit volume, liquid can absorb far more heat than air, making it well suited to high-power operations like LLMs.

- Quieter Performance: Liquid cooling can run more quietly than high-speed fans, although pumps and radiator fans still make some noise.

- Increased Performance: By keeping the GPU cool, liquid cooling helps unlock its full potential.
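The claim that liquid absorbs more heat than air can be made concrete with the basic heat-transport relation, heat = mass flow × specific heat × temperature rise. The sketch below uses textbook property values for water and air; the 300 W heat load and the 5 K coolant temperature rise are illustrative assumptions, not measurements from this article.

```python
# Textbook values near room temperature: specific heat in J/(kg*K),
# density in kg/m^3.
WATER_CP, WATER_DENSITY = 4186.0, 997.0
AIR_CP, AIR_DENSITY = 1005.0, 1.2


def volumetric_heat_capacity(cp, density):
    """Heat absorbed per cubic metre per kelvin, in J/(m^3*K)."""
    return cp * density


def coolant_flow_l_per_min(heat_w, delta_t_k):
    """Water flow (litres/minute) needed to carry heat_w watts away
    while the coolant warms by delta_t_k kelvin."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_s = heat_w / (WATER_CP * delta_t_k)
    litres_per_s = mass_flow_kg_s / WATER_DENSITY * 1000.0
    return litres_per_s * 60.0


if __name__ == "__main__":
    ratio = (volumetric_heat_capacity(WATER_CP, WATER_DENSITY)
             / volumetric_heat_capacity(AIR_CP, AIR_DENSITY))
    print(f"water vs air, per unit volume: about {ratio:.0f}x")
    # 300 W and 5 K are assumed example inputs.
    print(f"flow for 300 W at a 5 K rise: "
          f"{coolant_flow_l_per_min(300, 5):.2f} L/min")
```

Under these assumptions a single 300 W card needs a little under 1 L/min of water, which is why even small pumps can serve several GPUs in one loop.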

3. Dedicated Cooling Devices: Tailored Solutions for Your LLM

Dedicated cooling devices offer a specialized approach to cooling your LLM. These solutions are designed specifically for GPUs and are highly effective in removing heat.

- GPU Water Blocks: These devices directly contact the GPU, pulling heat away for maximum efficiency.

- Radiators and Fans: These components work in tandem with the water blocks to effectively dissipate heat from the system.

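- Heat Sinks: Passive heat sinks spread heat without a fan of their own, but they still depend on air moving across them. The A100 PCIe is itself a passively cooled card that relies on the server chassis for airflow.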

4. Proper Airflow Management: Keeping the Heat Out

Just like a well-ventilated house, a good airflow management plan is crucial for an LLM setup. Here's why:

- Avoid Hot Spots: Proper airflow helps distribute heat evenly throughout the system, preventing hot spots that can lead to overheating.

- Maximize Cooling Efficiency: Adequate airflow enhances the effectiveness of your cooling system, ensuring optimal performance.

- Reduce Thermal Throttling: By keeping the GPU cool, proper airflow helps minimize thermal throttling, which slows down processing speeds.
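To judge whether airflow is "adequate", it helps to estimate how much air a given heat load needs. The sketch below applies the standard relation airflow = power / (air density × specific heat × temperature rise). The 300 W load and the 10 K inlet-to-outlet rise are assumed example values, and the function name is hypothetical.

```python
AIR_DENSITY = 1.2     # kg/m^3, roughly sea level at about 20 C
AIR_CP = 1005.0       # J/(kg*K)
M3S_TO_CFM = 2118.88  # cubic feet per minute in one cubic metre per second


def required_airflow_cfm(heat_w, delta_t_k):
    """Airflow (CFM) needed to remove heat_w watts while the air passing
    through the chassis warms by delta_t_k kelvin."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    m3_per_s = heat_w / (AIR_DENSITY * AIR_CP * delta_t_k)
    return m3_per_s * M3S_TO_CFM


if __name__ == "__main__":
    # Assumed inputs: one 300 W GPU, air allowed to warm by 10 K.
    print(f"{required_airflow_cfm(300, 10):.1f} CFM per GPU")
```

With these inputs the estimate is roughly 53 CFM per GPU. Halving the allowed temperature rise doubles the airflow needed, which is one reason a cooler intake temperature makes the rest of the cooling system's job easier.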

5. Optimal Placement and Configuration: Strategic Solutions for Your LLM

The placement and configuration of your hardware can have a significant impact on LLM cooling. This strategy involves considering:

- Room Temperature: Maintain a cool room temperature to reduce the thermal load on the GPU.

- Ventilation: Ensure adequate ventilation in the room containing your LLM setup to allow for proper airflow.

- Space Around the GPU: Provide sufficient space around the GPU for air circulation and to prevent heat buildup.

6. Monitor, Analyze, and Optimize: Keeping Your LLM Healthy

Monitoring your LLM's temperature is essential for ensuring its long-term health. This crucial step involves:

- GPU Temperature Monitoring Software: Use NVIDIA's management tools, such as the nvidia-smi command-line utility, to monitor the temperature of your GPU in real time.

- Analyze Temperature Trends: Track the temperature of your GPU over time and identify any potential issues.

- Optimization Strategies: Adjust your cooling setup or LLM configuration as needed to maintain optimal temperatures.
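The monitoring and trend-analysis steps above could be sketched as follows. This is a minimal example, assuming `nvidia-smi` is on the PATH and that the GPU reports all three queried fields as numbers (some cards return `[N/A]` for power, which this sketch does not handle). The helper names, the window size, and the 3 °C rise threshold are illustrative choices.

```python
import subprocess
from statistics import mean

# Query fields as listed by `nvidia-smi --help-query-gpu`.
QUERY = "temperature.gpu,power.draw,clocks.sm"


def parse_sample(line):
    """Turn one CSV line such as '67, 248.31, 1410' into a dict."""
    temp, power, sm_clock = (field.strip() for field in line.split(","))
    return {"temp_c": float(temp), "power_w": float(power),
            "sm_mhz": float(sm_clock)}


def read_gpu(index=0):
    """Read one sample from nvidia-smi for the GPU at `index`."""
    result = subprocess.run(
        ["nvidia-smi", "-i", str(index), f"--query-gpu={QUERY}",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_sample(result.stdout.strip().splitlines()[0])


def is_trending_up(temps, window=5, rise_c=3.0):
    """True when the mean of the latest `window` readings is at least
    `rise_c` degrees above the mean of the `window` readings before it."""
    if len(temps) < 2 * window:
        return False
    recent = mean(temps[-window:])
    earlier = mean(temps[-2 * window:-window])
    return recent - earlier >= rise_c
```

In practice you might call `read_gpu()` every few seconds, append `temp_c` to a list, and raise an alert when `is_trending_up` returns True, so that a slow climb is caught before the GPU starts throttling.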

7. Power Management: Keeping Your LLM Energy Efficient

While cooling solutions are essential, power management plays a key role in minimizing heat generation. This strategy involves:

- Power Limits: Set appropriate power limits for your GPU to reduce energy consumption and heat production.

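- Fan Curves: Fine-tune fan curves to ensure adequate cooling without excessive noise. On a passively cooled card such as the A100 PCIe, this means the chassis fans rather than a fan on the GPU itself.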

- Power Supply Capacity: Choose a power supply with sufficient capacity to meet the demands of your LLM and cooling solution.
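The power-limit and power-supply points above can be sketched in a few lines. `nvidia-smi -pl` is the real flag for setting a board power limit (it normally needs administrator rights, and the allowed range depends on the card); the example only builds the command rather than running it. The wattages and the 25% headroom are assumed example values, and the function names are hypothetical.

```python
def power_limit_command(gpu_index, watts):
    """Build (but do not run) the nvidia-smi command that caps the
    board power of one GPU at `watts`."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return ["nvidia-smi", "-i", str(gpu_index), "-pl", str(watts)]


def recommended_psu_watts(gpu_w, gpu_count, rest_of_system_w, headroom=0.25):
    """Estimate PSU capacity: total expected load plus a safety margin.

    `headroom` is a fraction of the load; 0.25 is an assumed rule of
    thumb, not a vendor recommendation.
    """
    load_w = gpu_w * gpu_count + rest_of_system_w
    return load_w * (1 + headroom)


if __name__ == "__main__":
    # Assumed example: cap GPU 0 at 250 W.
    print(" ".join(power_limit_command(0, 250)))
    # Assumed example: two 300 W GPUs plus 400 W for CPU, drives and fans.
    print(f"{recommended_psu_watts(300, 2, 400):.0f} W")
```

Lowering the power limit trades some throughput for less heat, so it is worth measuring tokens per second before and after the change.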

8. Quantization: A Lighter and Cooler LLM

Quantization is a technique that reduces the size of an LLM by storing its weights at lower numerical precision, usually with only a small loss of accuracy. Smaller weights mean less data to move through memory for each generated token, which can reduce the energy spent per token and, with it, the heat generated for a given amount of work.

Imagine shrinking a large textbook down to a pocket-sized one. That's essentially what quantization does to an LLM.

- Reduced Model Size: Quantization shrinks the model, requiring less storage space and memory.

- Faster Processing: Smaller models generally process information faster.

- Lower Power Consumption: Less processing power translates to lower energy consumption and heat generation.
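The size reduction is simple arithmetic: weight memory is roughly the number of parameters times the bits per weight, divided by 8. The sketch below uses nominal values of 16 and 4 bits. Real 4-bit formats store extra scaling data, and inference also needs memory for the KV cache and activations, so treat these figures as lower bounds.

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_on_card(n_params, bits_per_weight, card_gb=80):
    """True if the weights alone fit in `card_gb` of GPU memory."""
    return weight_memory_gb(n_params, bits_per_weight) <= card_gb


if __name__ == "__main__":
    for name, params in [("8B", 8e9), ("70B", 70e9)]:
        for bits in (16, 4):
            gb = weight_memory_gb(params, bits)
            verdict = "fits" if fits_on_card(params, bits) else "does not fit"
            print(f"{name} at {bits}-bit: {gb:.0f} GB, {verdict} in 80 GB")
```

By this estimate a 70B model needs about 140 GB at 16-bit precision, more than a single 80 GB card holds, while a nominal 4-bit version needs about 35 GB.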

Results: Cooling Strategies in Action

Now let's look at some numbers for LLMs running on the NVIDIA A100 PCIe 80GB. The figures below measure throughput rather than temperature, but they show how much the model configuration changes the work the GPU has to do.

We'll focus on two popular models, Llama 3 8B and Llama 3 70B, and compare their token generation speeds in different configurations:

- Q4_K_M: A 4-bit quantization format from llama.cpp (the "medium" variant of its k-quant family).

- F16: 16-bit floating-point (half) precision, a common format for running models without quantization.

Comparison of Llama 3 8B and 70B Token Generation Speeds

| Model | Configuration | Tokens/Second (A100 PCIe 80GB) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 138.31 |
| Llama 3 8B | F16 | 54.56 |
| Llama 3 70B | Q4_K_M | 22.11 |
| Llama 3 70B | F16 | N/A |

Findings:

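- Quantization's Impact: Quantizing the Llama 3 8B model to Q4_K_M raised token generation speed from 54.56 to 138.31 tokens/second, about 2.5 times the F16 figure.

- Larger Model, Slower Speed: At the same quantization level, the Llama 3 70B model generated 22.11 tokens/second, roughly six times slower than the Llama 3 8B model. No F16 figure is listed for the 70B model; at 2 bytes per parameter its weights alone would need about 140 GB, more than the card's 80 GB.

- Temperature Management: The benchmark does not report temperatures, but sustaining these speeds around the clock depends on the GPU staying below its throttling threshold, which is what the cooling solutions described above are for.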
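The ratios behind these findings, and a rough link between speed and heat, can be worked out directly from the table. Energy per token is power divided by tokens per second. The 300 W figure below is an assumed constant board power used only for illustration; actual draw varies with the workload and was not measured in this article.

```python
# Tokens/second from the table above.
LLAMA3_8B_Q4 = 138.31
LLAMA3_8B_F16 = 54.56
LLAMA3_70B_Q4 = 22.11


def speedup(faster_tps, slower_tps):
    """How many times faster one configuration is than another."""
    return faster_tps / slower_tps


def joules_per_token(power_w, tokens_per_s):
    """Energy spent per generated token at a given average power draw."""
    return power_w / tokens_per_s


if __name__ == "__main__":
    print(f"8B 4-bit vs F16: {speedup(LLAMA3_8B_Q4, LLAMA3_8B_F16):.2f}x")
    print(f"8B vs 70B (4-bit): {speedup(LLAMA3_8B_Q4, LLAMA3_70B_Q4):.2f}x")
    assumed_power_w = 300  # assumption, not a measured value
    for label, tps in [("8B 4-bit", LLAMA3_8B_Q4),
                       ("8B F16", LLAMA3_8B_F16),
                       ("70B 4-bit", LLAMA3_70B_Q4)]:
        print(f"{label}: {joules_per_token(assumed_power_w, tps):.2f} J/token")
```

If the power draw really were the same in every configuration, the quantized 8B model would turn about 2.5 times less energy into heat per token than the F16 version.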

Cooling Your LLM: A Practical Guide

Here's a step-by-step guide to ensuring your LLM runs cool and smoothly:

- Choose the Right Hardware: Select a GPU like the NVIDIA A100 PCIe 80GB, which is designed for high-performance computing. Keep in mind that it is a passively cooled card, so the server chassis has to supply the airflow.

- Implement Air Cooling: Start with a robust air cooling system to provide a foundation for efficient heat dissipation, crucial even with liquid cooling.

- Consider Liquid Cooling: For demanding 24/7 operations, invest in liquid cooling to handle the substantial heat generated by LLMs. Look into dedicated cooling devices for optimal performance.

- Optimize Airflow: Ensure proper airflow management in your setup by positioning the GPU strategically with sufficient space around it for air circulation.

- Monitor and Analyze: Use software to monitor your GPU's temperature and analyze trends over time, identifying potential temperature-related issues.

- Fine-tune Power Management: Optimize power settings and fan curves to reduce energy consumption and heat generation while maintaining cooling efficiency.

- Explore Quantization: Consider using quantization techniques to reduce the size and computational demands of your LLM, optimizing its performance and lowering heat output.

FAQ: Cooling Your LLM, Answered

What are the risks of overheating an LLM?

Overheating can lead to thermal throttling, where the GPU automatically reduces its clock speeds to prevent damage. This results in slower processing and can cause system instability. It does not change the model's outputs, only how quickly they arrive. In extreme cases, overheating can shorten the GPU's life or cause permanent damage.

How often should I monitor my GPU temperature?

It's best to monitor your GPU temperature continuously, particularly during intensive LLM training or inference tasks. Ideally, you should watch the temperature in real time with a tool such as NVIDIA's nvidia-smi utility.

What are some common signs of an overheating GPU?

Signs of an overheating GPU can include slow processing speeds, system crashes, abnormal noise from the cooling fans, and error messages related to high temperatures.
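Beyond these symptoms, the driver can report directly whether it is slowing the GPU down for thermal reasons. The sketch below queries throttle-reason fields through `nvidia-smi`. The field names are those listed by `nvidia-smi --help-query-gpu` on many driver versions (newer drivers also expose them under a `clocks_event_reasons` prefix), so check them against your own driver before relying on this. The helper names are hypothetical.

```python
import subprocess

# Throttle-reason fields; verify with `nvidia-smi --help-query-gpu`.
FIELDS = [
    "clocks_throttle_reasons.hw_thermal_slowdown",
    "clocks_throttle_reasons.sw_thermal_slowdown",
    "clocks_throttle_reasons.hw_slowdown",
]


def parse_throttle_line(line):
    """Map one CSV line such as 'Not Active, Active, Not Active'
    to a dict of field name -> bool."""
    values = [value.strip() for value in line.split(",")]
    return {name: value == "Active" for name, value in zip(FIELDS, values)}


def is_thermally_throttled(index=0):
    """True if any of the queried slowdown reasons is currently active."""
    result = subprocess.run(
        ["nvidia-smi", "-i", str(index),
         "--query-gpu=" + ",".join(FIELDS), "--format=csv,noheader"],
        capture_output=True, text=True, check=True,
    )
    flags = parse_throttle_line(result.stdout.strip().splitlines()[0])
    return any(flags.values())
```

A check like this is more reliable than watching for slow output, because it separates thermal slowdowns from other causes such as a busy CPU or a larger prompt.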

Can I use a standard desktop cooler for my LLM?

While standard desktop coolers can be effective in some cases, they may not be sufficient for the heat generated by high-powered LLMs. Consider dedicated GPU cooling solutions for optimal results.

Is it better to run LLMs on a cloud platform or locally?

Cloud platforms generally offer more robust cooling solutions and scalable resources, making them ideal for running large-scale LLMs. However, running LLMs locally can be more cost-effective and offer more control over the environment.

Keywords

NVIDIA A100 PCIe 80GB, LLM, Large Language Model, Cooling Solutions, GPU, Temperature, Performance, Air Cooling, Liquid Cooling, Quantization, Heat Dissipation, Airflow Management, GPU Temperature Monitoring, Power Management, Token Generation Speed, Llama 3 8B, Llama 3 70B