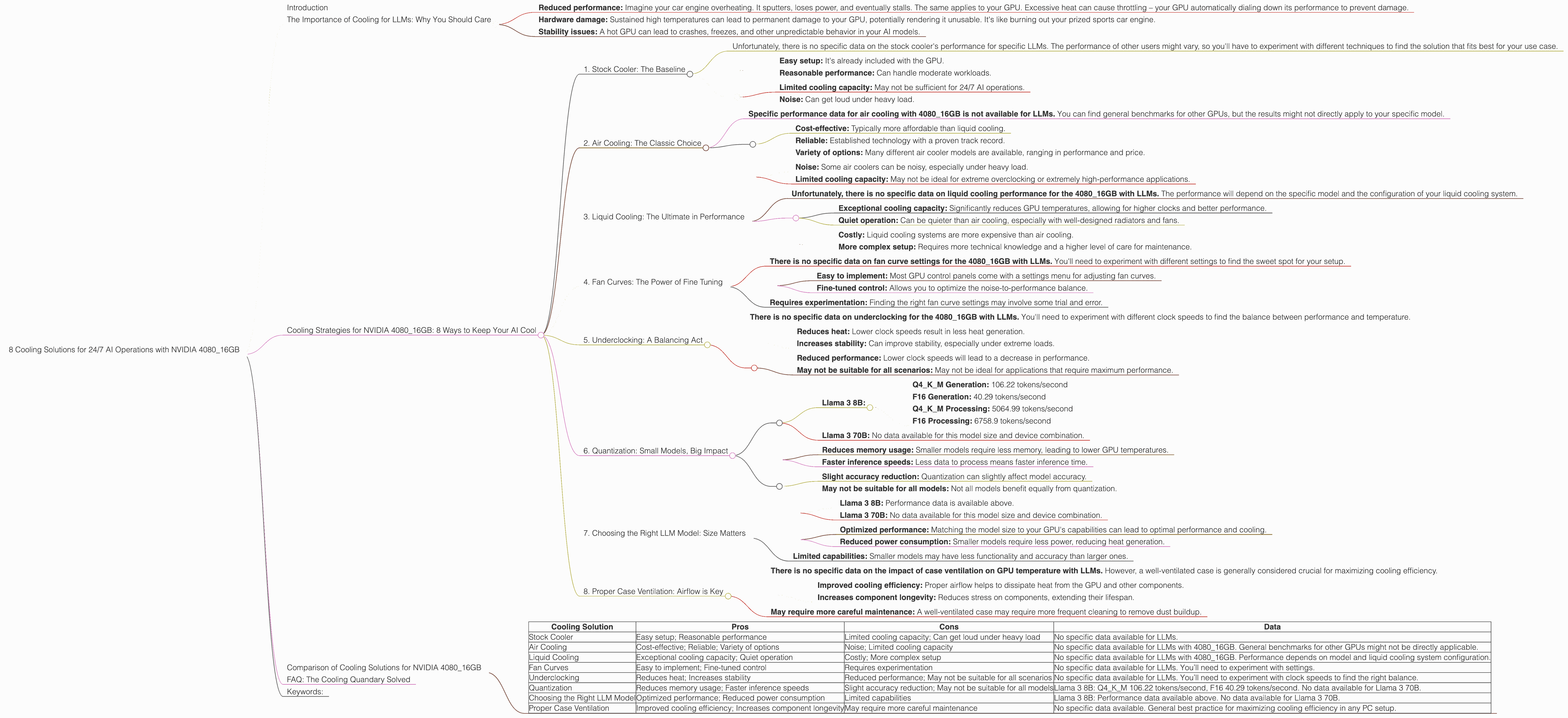

8 Cooling Solutions for 24/7 AI Operations with NVIDIA 4080 16GB

Introduction

The world of Large Language Models (LLMs) is exploding, and with it, the need for beefy hardware to power them. The NVIDIA GeForce RTX 4080 16GB (written 4080_16GB in this article) is a popular choice for running these AI models, but it's a beast of a card that can generate some serious heat. Running your AI models around the clock requires a deliberate cooling strategy to sustain performance and prevent any unfortunate meltdowns.

In this article, we'll dive deep into the world of LLM cooling, focusing on the NVIDIA 4080_16GB as the star of the show. We'll explore the different cooling strategies available, note where real-world data exists (and where it doesn't), and provide insights for maximizing your AI setup's longevity and efficiency.

The Importance of Cooling for LLMs: Why You Should Care

Think of your GPU like a high-powered sports car engine. It's designed to push the limits, churning out massive amounts of data and calculations with each passing second. But just like an engine, this intense activity generates heat. Without proper cooling, that heat can lead to:

- Reduced performance: Imagine your car engine overheating. It sputters, loses power, and eventually stalls. The same applies to your GPU. Excessive heat can cause throttling – your GPU automatically dialing down its performance to prevent damage.

- Hardware damage: Sustained high temperatures can lead to permanent damage to your GPU, potentially rendering it unusable. It's like burning out your prized sports car engine.

- Stability issues: A hot GPU can lead to crashes, freezes, and other unpredictable behavior in your AI models.

The bottom line: Proper cooling is essential not only for keeping your GPU running smoothly but also for maintaining the reliability and longevity of your AI operations.
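To see whether throttling is a risk on your own machine, it helps to watch temperature alongside power draw and clock speed. Here is a minimal sketch in Python, assuming the NVIDIA driver is installed and `nvidia-smi` is on your PATH. The helper names (`parse_gpu_csv`, `read_gpu`) are made up for this example.

```python
import subprocess

# Properties requested from nvidia-smi via --query-gpu.
QUERY_FIELDS = ["temperature.gpu", "power.draw", "clocks.sm", "utilization.gpu"]


def parse_gpu_csv(line):
    """Turn one line of `--format=csv,noheader,nounits` output into a dict of floats."""
    values = [v.strip() for v in line.split(",")]
    return {name: float(value) for name, value in zip(QUERY_FIELDS, values)}


def read_gpu(index=0):
    """Query one GPU once. Needs the NVIDIA driver and nvidia-smi on PATH."""
    result = subprocess.run(
        [
            "nvidia-smi",
            f"--id={index}",
            "--query-gpu=" + ",".join(QUERY_FIELDS),
            "--format=csv,noheader,nounits",
        ],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_gpu_csv(result.stdout.strip().splitlines()[0])
```

Calling `read_gpu()` every few seconds while your model runs shows whether clocks sag as the temperature climbs, which is the throttling described above. Some fields can come back as `[N/A]` on certain setups, which this sketch does not handle.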

Cooling Strategies for NVIDIA 4080_16GB: 8 Ways to Keep Your AI Cool

Now, let's explore the different cooling strategies you can employ for your NVIDIA 4080_16GB, along with their pros, cons, and specific data for your AI applications.

1. Stock Cooler: The Baseline

The NVIDIA 4080_16GB comes equipped with a powerful stock cooler. This cooler is a good starting point, but it might not be enough for 24/7 AI workloads.

Data:

- No published data covers the stock cooler's behavior under specific LLM workloads. Results also vary from one system to another, so you'll need to measure on your own setup to see whether it is enough for your use case.

Pros:

- Easy setup: It's already included with the GPU.
- Reasonable performance: Can handle moderate workloads.

Cons:

- Limited cooling capacity: May not be sufficient for 24/7 AI operations.
- Noise: Can get loud under heavy load.
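Since there is no published baseline for this workload, one option is to record your own. The sketch below summarizes a list of temperature readings collected during a long run. The function name is hypothetical, and the default 85°C limit is simply the rule of thumb from this article's FAQ, not a vendor specification.

```python
from statistics import mean


def summarize_temps(samples_c, limit_c=85.0):
    """Summarize GPU core temperatures (in °C) gathered during a long run."""
    if not samples_c:
        raise ValueError("no temperature samples to summarize")
    at_or_over = sum(1 for t in samples_c if t >= limit_c)
    return {
        "min": min(samples_c),
        "mean": mean(samples_c),
        "max": max(samples_c),
        # Fraction of readings at or above the chosen limit.
        "share_at_or_over_limit": at_or_over / len(samples_c),
    }
```

If a meaningful share of readings sits at or above the limit after several hours under load, that is a reasonable signal that the stock cooler needs help from one of the strategies below.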

2. Air Cooling: The Classic Choice

Air cooling remains a popular and effective solution for cooling GPUs, including those running LLMs. The stock cooler is itself an air cooler; a partner-card design or aftermarket air cooler steps up from it with a larger heatsink and more or bigger fans to draw heat away from the GPU.

Data:

- Specific performance data for air cooling with 4080_16GB is not available for LLMs. You can find general benchmarks for other GPUs, but the results might not directly apply to your specific model.

Pros:

- Cost-effective: Typically more affordable than liquid cooling.
- Reliable: Established technology with a proven track record.
- Variety of options: Many different air cooler models are available, ranging in performance and price.

Cons:

- Noise: Some air coolers can be noisy, especially under heavy load.
- Limited cooling capacity: May not be ideal for extreme overclocking or extremely high-performance applications.

3. Liquid Cooling: The Ultimate in Performance

Liquid cooling takes cooling to the next level by circulating a liquid coolant through a system of water blocks and radiators. It effectively removes heat from the GPU, offering superior cooling capacity compared to air cooling.

Data:

- Unfortunately, there is no specific data on liquid cooling performance for the 4080_16GB with LLMs. The performance will depend on the specific model and the configuration of your liquid cooling system.

Pros:

- Exceptional cooling capacity: Significantly reduces GPU temperatures, allowing for higher clocks and better performance.
- Quiet operation: Can be quieter than air cooling, especially with well-designed radiators and fans.

Cons:

- Costly: Liquid cooling systems are more expensive than air cooling.
- More complex setup: Requires more technical knowledge and a higher level of care for maintenance.

4. Fan Curves: The Power of Fine Tuning

Tuning your fan curves is a simple way to optimize the temperature and noise of the cooling system. By adjusting the fan speed based on GPU temperature, you can maximize cooling performance while minimizing noise.

Data:

- There is no specific data on fan curve settings for the 4080_16GB with LLMs. You'll need to experiment with different settings to find the sweet spot for your setup.

Pros:

- Easy to implement: Most GPU control panels come with a settings menu for adjusting fan curves.
- Fine-tuned control: Allows you to optimize the noise-to-performance balance.

Cons:

- Requires experimentation: Finding the right fan curve settings may involve some trial and error.
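A fan curve is just a mapping from temperature to fan speed, usually drawn as a handful of points with straight lines between them. Here is a small Python sketch of that idea. The curve points are illustrative placeholders rather than recommended settings for the 4080_16GB, and the function only computes a target duty; it does not talk to the fan controller.

```python
# Hypothetical curve: (temperature in °C, fan duty in %).
# Placeholder values for illustration only; temperatures must be distinct.
CURVE = [(40, 30), (60, 45), (75, 70), (85, 100)]


def fan_duty(temp_c, curve=CURVE):
    """Linearly interpolate the fan duty (%) for a given temperature."""
    points = sorted(curve)
    # Clamp below the first point and above the last point.
    if temp_c <= points[0][0]:
        return float(points[0][1])
    if temp_c >= points[-1][0]:
        return float(points[-1][1])
    # Find the segment containing temp_c and interpolate along it.
    for (t0, d0), (t1, d1) in zip(points, points[1:]):
        if t0 <= temp_c <= t1:
            return d0 + (d1 - d0) * (temp_c - t0) / (t1 - t0)
```

Sketching a curve this way before entering it in your fan control tool makes the trade-off visible: a steeper segment buys lower temperatures at the cost of more noise in that range.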

5. Underclocking: A Balancing Act

Underclocking your GPU lowers its operating frequency, reducing its power consumption and heat generation. While this may slightly reduce performance, it can be an effective way to lower temperatures and increase stability, especially during long runs.

Data:

- There is no specific data on underclocking for the 4080_16GB with LLMs. You'll need to experiment with different clock speeds to find the balance between performance and temperature.

Pros:

- Reduces heat: Lower clock speeds result in less heat generation.
- Increases stability: Can improve stability, especially under extreme loads.

Cons:

- Reduced performance: Lower clock speeds will lead to a decrease in performance.
- May not be suitable for all scenarios: May not be ideal for applications that require maximum performance.
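On NVIDIA cards, one way to try this is through `nvidia-smi`, which can cap the clock range (`-lgc`), reset it (`-rgc`), and set a board power limit (`-pl`). Power limiting is a related technique rather than underclocking proper, but it targets the same goal. The sketch below only builds the commands; the helper names are invented for this example, the numbers in the usage note are hypothetical, and support can vary by driver and card. These commands generally need administrator rights.

```python
import subprocess


def lock_clocks_cmd(min_mhz, max_mhz, gpu_index=0):
    """Command to restrict the GPU core clock to a min,max range in MHz."""
    return ["nvidia-smi", "-i", str(gpu_index), "-lgc", f"{min_mhz},{max_mhz}"]


def reset_clocks_cmd(gpu_index=0):
    """Command to undo a previous clock lock."""
    return ["nvidia-smi", "-i", str(gpu_index), "-rgc"]


def power_limit_cmd(watts, gpu_index=0):
    """Command to set the board power limit in watts."""
    return ["nvidia-smi", "-i", str(gpu_index), "-pl", str(watts)]


def apply(cmd):
    """Run one of the commands above; raises if nvidia-smi reports an error."""
    subprocess.run(cmd, check=True)
```

For example, `apply(lock_clocks_cmd(210, 2100))` would cap the core clock at a hypothetical 2100 MHz, and `apply(reset_clocks_cmd())` would restore the default behavior. `nvidia-smi -q -d POWER` reports the power limit range your card accepts.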

6. Quantization: Small Models, Big Impact

Quantization is a technique that reduces model size by converting the model's parameters to lower precision (for example, from 16-bit floats to roughly 4-bit values). This can cost a little accuracy, but it sharply cuts the memory footprint and the amount of data moved per token, which speeds up token generation. Any effect on GPU temperature is indirect and depends on how hard the card is being driven, so measure it on your own setup.

Data:

- Llama 3 8B:
  - Q4_K_M Generation: 106.22 tokens/second
  - F16 Generation: 40.29 tokens/second
  - Q4_K_M Processing: 5064.99 tokens/second
  - F16 Processing: 6758.9 tokens/second
- Llama 3 70B: No data available for this model size and device combination.

Pros:

- Reduces memory usage: Quantized models need less VRAM and move less data per token.
- Faster generation: In the figures above, the 4-bit model generates 106.22 tokens/second against 40.29 for F16. Processing the prompt is slower, though (5064.99 against 6758.9 tokens/second), so the gain depends on your workload.

Cons:

- Slight accuracy reduction: Quantization can slightly affect model accuracy.
- May not be suitable for all models: Not all models benefit equally from quantization.

Explanation of Quantization for Non-Technical Audience:

Think of your LLM as a giant recipe book for language. Each recipe (or parameter) contains instructions for how to generate text. Quantization is like converting the recipe book to use simpler ingredients (lower precision) while still being able to bake a similar cake (generate similar text). This makes the book smaller (less memory) and easier to read (faster inference).
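To put the Llama 3 8B figures in perspective, here is a quick calculation of how the 4-bit model compares with F16 in each phase. It uses only the throughput numbers quoted in this article; the dictionary layout and function name are just for illustration.

```python
# Throughput quoted in this article for Llama 3 8B on the 4080_16GB (tokens/second).
# "q4" is the 4-bit quantized model, "f16" the half-precision one.
THROUGHPUT = {
    "generation": {"q4": 106.22, "f16": 40.29},
    "processing": {"q4": 5064.99, "f16": 6758.9},
}


def q4_relative_to_f16(phase):
    """Ratio of 4-bit throughput to F16 throughput for one phase."""
    rates = THROUGHPUT[phase]
    return rates["q4"] / rates["f16"]


for phase in THROUGHPUT:
    print(f"{phase}: 4-bit runs at {q4_relative_to_f16(phase):.2f}x the F16 rate")
```

Generation comes out at roughly 2.6 times the F16 rate, while processing lands at about three quarters of it. For chat-style use, where most of the time goes to generating tokens, the 4-bit model finishes the same job much sooner.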

7. Choosing the Right LLM Model: Size Matters

LLMs come in different sizes, with larger models typically requiring more computational power and generating more heat. Choosing an appropriately sized LLM for your GPU is crucial for maximizing performance and cooling efficiency.

Data:

- Llama 3 8B: Performance data is available above.

- Llama 3 70B: No data available for this model size and device combination.

Pros:

- Optimized performance: Matching the model size to your GPU's capabilities can lead to optimal performance and cooling.
- Reduced power consumption: Smaller models require less power, reducing heat generation.

Cons:

- Limited capabilities: Smaller models may have less functionality and accuracy than larger ones.
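A rough way to judge whether a model is "appropriately sized" is to estimate how much VRAM its weights need. The sketch below is a back-of-the-envelope estimate, not a measurement. It assumes 16 GiB of VRAM, nominal parameter counts of 8 and 70 billion, about 4.5 bits per weight for a 4-bit quantized model, and a hypothetical 1 GiB of headroom for everything else. It ignores the KV cache and runtime overhead, which grow with context length.

```python
GIB = 2**30  # bytes in one GiB


def weights_gib(params_billion, bits_per_weight):
    """Approximate size of the model weights in GiB."""
    return params_billion * 1e9 * bits_per_weight / 8 / GIB


def fits(params_billion, bits_per_weight, vram_gib=16.0, headroom_gib=1.0):
    """True if the weights plus an assumed headroom fit in VRAM."""
    return weights_gib(params_billion, bits_per_weight) + headroom_gib <= vram_gib


# Assumed values: 16 bits for F16, ~4.5 bits for a 4-bit quantized model.
for size in (8, 70):
    for label, bits in (("F16", 16), ("4-bit", 4.5)):
        verdict = "fits" if fits(size, bits) else "does not fit"
        print(f"{size}B {label}: {weights_gib(size, bits):.1f} GiB, {verdict}")
```

Under these assumptions, the 8B model fits at either precision (only just at F16), while the 70B model does not fit even when quantized. That may be one reason no 70B figures exist for this card, although the data does not say so.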

8. Proper Case Ventilation: Airflow is Key

A well-ventilated case is essential for keeping your GPU cool. Ensure a clean and dust-free case with good airflow. Proper airflow allows heat to dissipate efficiently, preventing hot spots within the case.

Data:

- There is no specific data on the impact of case ventilation on GPU temperature with LLMs. However, a well-ventilated case is generally considered crucial for maximizing cooling efficiency.

Pros:

- Improved cooling efficiency: Proper airflow helps to dissipate heat from the GPU and other components.
- Increases component longevity: Reduces stress on components, extending their lifespan.

Cons:

- May require more careful maintenance: A well-ventilated case may require more frequent cleaning to remove dust buildup.

Comparison of Cooling Solutions for NVIDIA 4080_16GB

| Cooling Solution | Pros | Cons | Data |
|---|---|---|---|
| Stock Cooler | Easy setup; Reasonable performance | Limited cooling capacity; Can get loud under heavy load | No specific data available for LLMs. |
| Air Cooling | Cost-effective; Reliable; Variety of options | Noise; Limited cooling capacity | No specific data available for LLMs with 4080_16GB. General benchmarks for other GPUs might not be directly applicable. |
| Liquid Cooling | Exceptional cooling capacity; Quiet operation | Costly; More complex setup | No specific data available for LLMs with 4080_16GB. Performance depends on model and liquid cooling system configuration. |
| Fan Curves | Easy to implement; Fine-tuned control | Requires experimentation | No specific data available for LLMs. You’ll need to experiment with settings. |
| Underclocking | Reduces heat; Increases stability | Reduced performance; May not be suitable for all scenarios | No specific data available for LLMs. You’ll need to experiment with clock speeds to find the right balance. |
| Quantization | Reduces memory usage; Faster inference speeds | Slight accuracy reduction; May not be suitable for all models | Llama 3 8B generation: Q4_K_M 106.22 tokens/second, F16 40.29 tokens/second. No data available for Llama 3 70B. |
| Choosing the Right LLM Model | Optimized performance; Reduced power consumption | Limited capabilities | Llama 3 8B: Performance data available above. No data available for Llama 3 70B. |
| Proper Case Ventilation | Improved cooling efficiency; Increases component longevity | May require more careful maintenance | No specific data available. General best practice for maximizing cooling efficiency in any PC setup. |

FAQ: The Cooling Quandary Solved

Q: How hot is too hot for my NVIDIA 4080_16GB?

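A: A common rule of thumb is to keep the GPU core at or below about 85°C (185°F) under sustained load. The card throttles itself to protect the hardware as it approaches its rated maximum, so check NVIDIA's published maximum GPU temperature for your model and treat sustained operation near it as a sign that your cooling needs work.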

Q: How often should I clean my GPU?

A: It's recommended to clean your GPU every few months, or more frequently if you live in a dusty environment. Removing dust buildup can improve cooling efficiency and prevent overheating.

Q: Is underclocking my GPU always a good idea?

A: Not necessarily. While it can help reduce temperatures, it also sacrifices performance. Consider underclocking only if you are experiencing excessive heat or performance instability.

Q: How do I choose a liquid cooling system?

A: Consider the size of your case and where a radiator can be mounted, whether the water block fits your specific 4080 board design, the cooling capacity, and the noise levels when choosing a liquid cooling system.

Q: Can I use both air and liquid cooling on my GPU?

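A: Hybrid coolers, which liquid-cool the GPU die and use a fan for the memory and power delivery components, do exist. Improvising your own mix of separate air and liquid coolers on one card is not recommended, as it can create conflicting airflow and potentially hinder cooling efficiency.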
