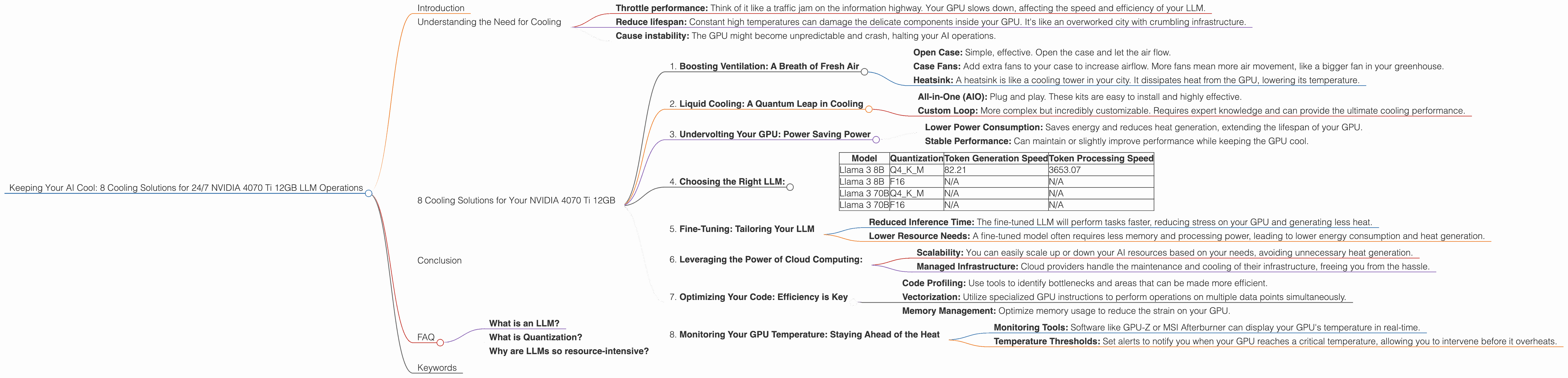

8 Cooling Solutions for 24/7 AI Operations with NVIDIA 4070 Ti 12GB

Introduction

The world of Large Language Models (LLMs) is heating up, and not just metaphorically! These powerful AI models, capable of generating human-like text, translating languages, and even writing code, require serious computing power. And that power comes with a price: heat.

Running an LLM 24/7 can significantly increase the temperature of your hardware, especially if you're using a beast like the NVIDIA 4070 Ti 12GB GPU. This article dives into eight innovative cooling solutions specifically designed to keep your 4070 Ti running cool and your LLMs humming along.

Understanding the Need for Cooling

Imagine your GPU as a tiny city, bustling with activity. Billions of transistors switch on and off to perform the calculations behind your LLM, and all that switching generates a significant amount of heat. Think of this heat as the city's traffic jams - it slows down everything if not managed properly.

If your GPU gets too hot, it can:

- Throttle performance: Think of it like a traffic jam on the information highway. Your GPU slows down, affecting the speed and efficiency of your LLM.

- Reduce lifespan: Constant high temperatures can damage the delicate components inside your GPU. It's like an overworked city with crumbling infrastructure.

- Cause instability: The GPU might become unpredictable and crash, halting your AI operations.

So, how do you keep your GPU cool and your LLMs operational without a hitch?

8 Cooling Solutions for Your NVIDIA 4070 Ti 12GB

1. Boosting Ventilation: A Breath of Fresh Air

Imagine your computer as a small, hot greenhouse - without proper airflow, the temperature rises. Ventilation is the key to letting fresh air circulate, reducing the trapped heat.

- Open Case: Simple, effective. Open the case and let the air flow.

- Case Fans: Add extra fans to your case to increase airflow. More fans mean more air movement, like a bigger fan in your greenhouse.

- Heatsink: A heatsink is like a cooling tower in your city. It draws heat away from the GPU and releases it into the air moving past its fins, which is why it depends on good case airflow.

2. Liquid Cooling: A Quantum Leap in Cooling

Imagine a liquid cooler as a sophisticated air conditioning system for your GPU. It effectively removes heat using a circulating liquid, like a mini-water park for your hardware.

- All-in-One (AIO): Plug and play. These kits are easy to install and highly effective.

- Custom Loop: More complex but incredibly customizable. Requires expert knowledge and can provide the ultimate cooling performance.

3. Undervolting Your GPU: Power Saving Power

Think of undervolting as taking a more energy-efficient route to your destination. It lowers the voltage supplied to the GPU at a given clock speed, which reduces the power draw and makes your GPU run cooler.

- Lower Power Consumption: Saves energy and reduces heat generation, which helps extend the lifespan of your GPU.

- Stable Performance: Can maintain or even slightly improve performance, because a cooler GPU is less likely to throttle.

Here's a practical example:

Imagine you're driving a powerful car. You can floor it and get to your destination quickly, but you'll use more fuel and generate more heat. If you drive at a more moderate speed, you'll save fuel and generate less heat. Undervolting works similarly - it's like driving your GPU at a more moderate speed, reducing its power consumption and heat generation.
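The same trade-off can be put into rough numbers. Here is a minimal sketch, assuming the common first-order model in which dynamic power scales with voltage squared times clock frequency. It ignores static (leakage) power, and the voltages below are hypothetical illustration values, not recommended settings for the 4070 Ti.

```python
def relative_dynamic_power(voltage_ratio: float, clock_ratio: float = 1.0) -> float:
    """Estimate dynamic power relative to stock, using P ~ C * V^2 * f.

    Both arguments are ratios against the stock setting (1.0 = unchanged).
    This is a first-order approximation that ignores leakage power.
    """
    if voltage_ratio <= 0 or clock_ratio <= 0:
        raise ValueError("ratios must be positive")
    return voltage_ratio ** 2 * clock_ratio


# Hypothetical example: drop core voltage from 1.05 V to 0.95 V at the same clock.
stock_voltage = 1.05
undervolt_voltage = 0.95
ratio = relative_dynamic_power(undervolt_voltage / stock_voltage)
print(f"Estimated dynamic power: {ratio:.0%} of stock")
```

Because voltage enters the estimate squared, a small voltage reduction has an outsized effect on power, which is why undervolting can cut heat without lowering the clock speed.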

4. Choosing the Right LLM

Not all LLMs are created equal. Some are more resource-intensive than others, demanding more power and generating more heat. Choosing the right LLM for your needs and hardware is essential.

- Lower Parameter Count: Smaller models like Llama 7B require less computing power than larger models like Llama 70B.

- Quantization: Think of quantization as a "diet" for your LLM. It reduces the model's size by representing its data using fewer bits. This leads to faster processing and lower memory requirements, resulting in less heat generation.
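To see how parameter count and bit width interact, here is a rough sketch that estimates the memory needed for model weights alone. It assumes nominal bit widths (16 and 4 bits per weight) and ignores the KV cache, activations, and runtime overhead. Real 4-bit formats usually store slightly more than 4 bits per weight, so treat the results as lower bounds.

```python
def weight_memory_gib(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for model weights, in GiB."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 1024 ** 3


VRAM_GIB = 12  # NVIDIA 4070 Ti 12GB

for name, params in [("8B", 8e9), ("70B", 70e9)]:
    for label, bits in [("16-bit", 16), ("4-bit", 4)]:
        size = weight_memory_gib(params, bits)
        verdict = "fits" if size < VRAM_GIB else "does not fit"
        print(f"{name} at {label}: {size:6.1f} GiB of weights, {verdict} in {VRAM_GIB} GiB")
```

Under these assumptions, only the 8B model at 4 bits leaves room to spare on a 12 GB card. The other combinations would need offloading to system memory or a larger GPU.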

Table 1: LLM Performance on NVIDIA 4070 Ti 12GB (Tokens/Second)

| Model | Quantization | Token Generation Speed (tokens/s) | Token Processing Speed (tokens/s) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 82.21 | 3653.07 |
| Llama 3 8B | F16 | N/A | N/A |
| Llama 3 70B | Q4_K_M | N/A | N/A |
| Llama 3 70B | F16 | N/A | N/A |

Note: N/A means no data is available. That applies to Llama 3 8B at F16 and to Llama 3 70B at both Q4_K_M and F16.

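As you can see from Table 1, Llama 3 8B with Q4_K_M quantization generates about 82 tokens per second and processes about 3,653 tokens per second on this card. The table has no unquantized result to compare against, so the link between this throughput and lower heat output is plausible rather than measured: a GPU that finishes each request sooner spends less time under full load.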
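The throughput figures translate into time under load per request. Here is a small sketch using the two Llama 3 8B numbers from Table 1. It assumes that "Token Processing Speed" refers to prompt processing and that the two phases run back to back. The request size is a hypothetical example.

```python
# Throughput figures from Table 1 (Llama 3 8B, Q4_K_M), in tokens per second.
PROMPT_TOKENS_PER_SEC = 3653.07
GENERATION_TOKENS_PER_SEC = 82.21


def estimate_request_seconds(prompt_tokens: int, generated_tokens: int) -> float:
    """Estimate GPU-busy time for one request: prompt phase plus generation phase."""
    prompt_time = prompt_tokens / PROMPT_TOKENS_PER_SEC
    generation_time = generated_tokens / GENERATION_TOKENS_PER_SEC
    return prompt_time + generation_time


# Hypothetical request: a 1,000-token prompt and a 500-token reply.
seconds = estimate_request_seconds(1000, 500)
print(f"Estimated time per request: {seconds:.2f} s")
print(f"Requests per day at full load: {86400 / seconds:,.0f}")
```

Generation dominates the total here, so shorter replies reduce GPU-busy time far more than shorter prompts do.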

5. Fine-Tuning: Tailoring Your LLM

Imagine fine-tuning as customizing your LLM for specific tasks. You train the model further on a task-specific dataset, which can let a smaller model handle a job that would otherwise need a larger, more power-hungry one.

- Reduced Inference Time: A smaller fine-tuned model can complete its tasks faster than a larger general-purpose one, reducing stress on your GPU and generating less heat.

- Lower Resource Needs: Fine-tuning does not shrink a model by itself, but a smaller fine-tuned model requires less memory and processing power, leading to lower energy consumption and heat generation.

6. Leveraging the Power of Cloud Computing

Think of cloud computing as renting a massive, highly efficient data center for your LLM. Cloud service providers like Google Cloud Platform, Amazon Web Services, and Microsoft Azure offer powerful GPUs and specialized hardware designed to handle AI workloads with optimized cooling.

- Scalability: You can easily scale up or down your AI resources based on your needs, avoiding unnecessary heat generation.

- Managed Infrastructure: Cloud providers handle the maintenance and cooling of their infrastructure, freeing you from the hassle.

7. Optimizing Your Code: Efficiency is Key

Imagine your code as a blueprint for your LLM. Optimizing it is like streamlining the construction process, reducing wasted resources and energy.

- Code Profiling: Use tools to identify bottlenecks and areas that can be made more efficient.

- Vectorization: Utilize specialized GPU instructions to perform operations on multiple data points simultaneously.

- Memory Management: Optimize memory usage to reduce the strain on your GPU.

Here's a simple analogy:

Imagine you're building a house. You could use individual bricks to build each wall, which would be slow and inefficient. Or, you could use pre-fabricated wall panels, which would be much faster and require less effort. Vectorization works similarly - it allows your GPU to perform operations on multiple data points at once, like using pre-fabricated panels, making the process much faster and more efficient.
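The code profiling step from the list above can be sketched with Python's built-in `cProfile` and `pstats` modules. The functions `slow_step`, `fast_step`, and `run_pipeline` are hypothetical stand-ins for real preprocessing code. Note that this measures Python-side CPU time only, not the time spent in GPU kernels.

```python
import cProfile
import io
import pstats


def slow_step(n: int) -> int:
    """Deliberately slow element-by-element loop (hypothetical bottleneck)."""
    total = 0
    for i in range(n):
        total += i * i
    return total


def fast_step(n: int) -> int:
    """Cheap step, included for contrast."""
    return n * 2


def run_pipeline() -> int:
    return slow_step(200_000) + fast_step(10)


def profile_report(func) -> str:
    """Run func under cProfile and return a report sorted by cumulative time."""
    profiler = cProfile.Profile()
    profiler.enable()
    func()
    profiler.disable()
    stream = io.StringIO()
    stats = pstats.Stats(profiler, stream=stream)
    stats.sort_stats("cumulative").print_stats(10)
    return stream.getvalue()


report = profile_report(run_pipeline)
print(report)
```

In the report, `slow_step` should account for nearly all of the cumulative time, which marks it as the first candidate for vectorization or other optimization.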

8. Monitoring Your GPU Temperature: Staying Ahead of the Heat

Think of GPU monitoring as a "thermometer" for your AI operations. It helps you stay informed about your GPU's temperature and take proactive steps to prevent overheating.

- Monitoring Tools: Software like GPU-Z or MSI Afterburner can display your GPU's temperature in real-time.

- Temperature Thresholds: Set alerts to notify you when your GPU reaches a critical temperature, allowing you to intervene before it overheats.
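For a headless machine running 24/7, a scripted check may be more practical than a desktop tool. Here is a minimal sketch, assuming the `nvidia-smi` command-line utility that ships with the NVIDIA driver is on the PATH. The 80 °C alert threshold is a hypothetical value chosen for illustration, so check your card's documented limits before relying on it.

```python
import shutil
import subprocess

ALERT_THRESHOLD_C = 80  # hypothetical alert level, not an NVIDIA specification


def parse_temperatures(raw_output: str) -> list[int]:
    """Parse one temperature per line, skipping blank or non-numeric lines."""
    return [int(line.strip()) for line in raw_output.splitlines() if line.strip().isdigit()]


def read_gpu_temperatures() -> list[int]:
    """Return the temperature of each GPU in Celsius, or [] if nvidia-smi is unavailable."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=temperature.gpu", "--format=csv,noheader,nounits"],
        capture_output=True,
        text=True,
        check=False,
    )
    if result.returncode != 0:
        return []
    return parse_temperatures(result.stdout)


def gpus_over_threshold(temps: list[int], threshold: int = ALERT_THRESHOLD_C) -> list[int]:
    """Return the indices of GPUs at or above the threshold."""
    return [index for index, temp in enumerate(temps) if temp >= threshold]


if __name__ == "__main__":
    temps = read_gpu_temperatures()
    for index in gpus_over_threshold(temps):
        print(f"ALERT: GPU {index} is at {temps[index]} C (threshold {ALERT_THRESHOLD_C} C)")
```

Running this script on a schedule, for example once a minute, gives a simple alert hook that could pause the workload or send a notification.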

Conclusion

Keeping your NVIDIA 4070 Ti 12GB cool is crucial for smooth and sustainable AI operations. By implementing the right cooling solutions and optimizing your LLM setup, you can ensure your GPU runs cool and efficiently, powering your AI innovations for years to come.

FAQ

What is an LLM?

A Large Language Model (LLM), such as Llama 7B or Llama 70B, is a type of AI model capable of understanding and generating human-like text. It learns from vast amounts of text data and can be used for various tasks, including translation, summarization, and even writing creative content.

What is Quantization?

Quantization is a technique that reduces the size of an LLM by representing its data using fewer bits, so the model processes faster and requires less memory. Imagine it like compressing a large image - it takes up less storage without losing significant detail.
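As a toy illustration of the idea, the sketch below rounds a handful of floating-point values to 8-bit integers with a single shared scale and then restores them. This is a deliberately simple symmetric scheme with hypothetical weight values. Real formats such as Q4_K_M use more elaborate block-wise schemes.

```python
def quantize_int8(values: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.53, 0.98, -0.07, 0.31]  # hypothetical weight values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)
print(f"scale={scale:.5f}, max round-trip error={max_error:.5f}")
```

Each value now fits in one byte instead of two or four, at the cost of a rounding error no larger than half the scale factor.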

Why are LLMs so resource-intensive?

LLMs are complex models with millions or even billions of parameters. They require significant processing power and memory to run, which can generate considerable heat. Think of it like a powerful engine that burns more fuel and gives off more heat the harder it works.

Keywords

NVIDIA 4070 Ti 12GB, LLM, Cooling, GPU, AI, Llama 7B, Llama 70B, Quantization, Ventilation, Liquid Cooling, Undervolting, Cloud Computing, Code Optimization, Monitoring, Fine-tuning, AI Operations, Token Generation, Token Processing.