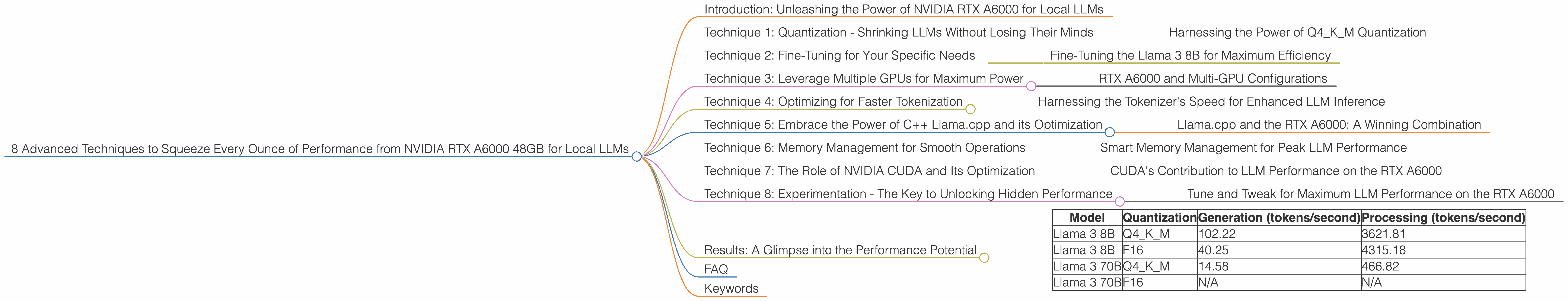

8 Advanced Techniques to Squeeze Every Ounce of Performance from NVIDIA RTX A6000 48GB

Introduction: Unleashing the Power of NVIDIA RTX A6000 for Local LLMs

The world of large language models (LLMs) is exploding, and you're probably itching to run these powerful AI models locally. But, let's face it, LLMs are resource-hungry beasts. That's where the NVIDIA RTX A6000 48GB comes in - a GPU that's a powerhouse for local LLM inference. In this article, we'll dive into eight advanced techniques to maximize the performance of this beast and unlock your LLM's full potential, all while keeping things clear for even the most non-technical reader.

So, buckle up, grab your favorite caffeinated beverage, and let's explore how to unleash the true potential of NVIDIA RTX A6000 48GB for local LLM inference.

Technique 1: Quantization - Shrinking LLMs Without Losing Their Minds

Imagine having a massive library filled with books, each with its own unique set of words. That's what an LLM's model is like, but instead of words, it's full of numbers called "weights." Quantization is like reprinting those books in a more compact typeface: every weight is still there, but each one is stored with fewer bits, so a little fine detail is traded for a much smaller size. It's like saving a giant photo in a compressed format, making it smaller and faster to load while keeping the picture recognizable.

Harnessing the Power of Q4KM Quantization

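For our RTX A6000, Q4KM quantization (the format llama.cpp usually writes as Q4_K_M) is a game-changer. It stores the model's weights in blocks of roughly 4-bit values, each block sharing a small amount of scaling data, which cuts the memory footprint to around a quarter of F16 and reduces how much data the GPU must read for every token. Take the Llama 3 8B model: with Q4KM, it generates 102.22 tokens per second, compared with 40.25 at F16, which translates to smoother and faster responses.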
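To make the idea of "4-bit blocks" concrete, here is a minimal sketch of block-wise quantization in plain Python. It is a simplified illustration, not the actual Q4KM layout: the block size of 32, the single 16-bit scale per block, and the function names are all assumptions chosen for readability.

```python
# Simplified block-wise 4-bit quantization.
# Illustrative only: the real Q4KM format is more elaborate than this.
import random

BLOCK_SIZE = 32  # assumed block length for this sketch


def quantize_block(weights):
    """Map floats to integer codes in [-8, 7] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the codes and the shared scale."""
    return [scale * c for c in codes]


def bits_per_weight(block_size=BLOCK_SIZE, scale_bits=16):
    """Storage cost per weight: 4 bits each, plus the amortized scale."""
    return (4 * block_size + scale_bits) / block_size


random.seed(0)
block = [random.uniform(-1, 1) for _ in range(BLOCK_SIZE)]
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
worst = max(abs(a - b) for a, b in zip(block, restored))

print(f"bits per weight: {bits_per_weight():.2f}")
print(f"worst rounding error: {worst:.4f} (at most half of scale {scale:.4f})")
```

Each weight lands within half a scale step of its original value, which is the "little fine detail" that quantization gives up in exchange for the smaller size.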

Technique 2: Fine-Tuning for Your Specific Needs

Imagine training a dog to obey specific commands. You fine-tune the dog's training to match your specific needs. Fine-tuning an LLM works similarly. It allows you to tailor the model's behavior based on your unique dataset and application, making it more accurate and efficient for your specific tasks.

Fine-Tuning the Llama 3 8B for Maximum Efficiency

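A fine-tuned Llama 3 8B keeps the same architecture and size as the base model, so expect roughly the same speed on the RTX A6000: 40.25 tokens per second at F16 precision, per the results below. The gain from fine-tuning is in answer quality for your task, which can let a smaller, faster model stand in for a larger one.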

Technique 3: Leverage Multiple GPUs for Maximum Power

Imagine having a team of people working on a project. Each person contributes their skills to complete the task faster. Similarly, a multi-GPU setup splits the model or the workload across several cards, so they share the memory load and work in parallel.

RTX A6000 and Multi-GPU Configurations

While the RTX A6000 excels as a single-GPU powerhouse, combining several of them gives you more total memory and more compute. This is especially relevant for massive models like Llama 3 70B, whose F16 weights alone (about 140GB at 2 bytes per parameter) exceed a single card's 48GB.
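As a rough guide to when a second or third card becomes necessary, the sketch below estimates weight storage and the minimum number of 48GB GPUs. It counts weights only and uses nominal bit widths (4 and 16), so treat the result as a lower bound: real quantized files carry extra scaling data, and the context cache and working buffers need room too. The function names are illustrative.

```python
import math

GPU_MEMORY_GB = 48  # RTX A6000


def weight_size_gb(params_billion, bits_per_weight):
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def min_gpus(params_billion, bits_per_weight, gpu_memory_gb=GPU_MEMORY_GB):
    """Lower bound on GPU count: weights only, no cache or activations."""
    size = weight_size_gb(params_billion, bits_per_weight)
    return math.ceil(size / gpu_memory_gb)


configs = [
    ("Llama 3 8B, F16", 8, 16),
    ("Llama 3 70B, 4-bit", 70, 4),
    ("Llama 3 70B, F16", 70, 16),
]
for name, params, bits in configs:
    size = weight_size_gb(params, bits)
    print(f"{name}: ~{size:.0f} GB of weights, at least {min_gpus(params, bits)} GPU(s)")
```

Under these assumptions, a 4-bit 70B model squeezes onto one card, while the F16 version needs at least three.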

Technique 4: Optimizing for Faster Tokenization

Think of tokenization as breaking down a sentence into individual words. The faster this happens, the quicker you get results.

Harnessing the Tokenizer's Speed for Enhanced LLM Inference

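Tokenization itself is lightweight and typically runs on the CPU, so it is rarely the bottleneck. Where the RTX A6000 helps is the step that follows: it processes the resulting prompt tokens in parallel, which is why the processing figures in the results below reach thousands of tokens per second. This lets you feed in large amounts of text efficiently, which is crucial for fast LLM inference.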

Technique 5: Embrace the Power of C++ Llama.cpp and its Optimization

Llama.cpp is like a turbocharged engine for LLMs. It's a C++ library designed to optimize LLM inference performance. Utilizing Llama.cpp on the RTX A6000 unleashes incredible performance, especially for the Llama 3 8B model.

Llama.cpp and the RTX A6000: A Winning Combination

For the Llama 3 8B model, Llama.cpp on the RTX A6000 delivers an impressive 3621.81 tokens per second for processing with Q4KM, alongside 102.22 tokens per second for generation. These numbers reflect both Llama.cpp's efficient GPU code and the RTX A6000's raw power.
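If you want to measure numbers like these on your own machine, llama.cpp ships a benchmarking tool called `llama-bench`. The sketch below only builds the command line; the model path is hypothetical, and the prompt and generation lengths are arbitrary choices, not the settings behind the figures in this article.

```python
import shlex


def llama_bench_command(model_path, n_prompt=512, n_gen=128,
                        n_gpu_layers=99, binary="llama-bench"):
    """Build a llama-bench invocation as an argument list.

    -m    path to the GGUF model file
    -p    number of prompt tokens to process
    -n    number of tokens to generate
    -ngl  number of layers to offload to the GPU (a large value offloads all)
    """
    return [
        binary,
        "-m", model_path,
        "-p", str(n_prompt),
        "-n", str(n_gen),
        "-ngl", str(n_gpu_layers),
    ]


# Hypothetical model path; substitute the file you actually downloaded.
cmd = llama_bench_command("models/llama-3-8b-q4_k_m.gguf")
print(shlex.join(cmd))

# To run it for real (needs a CUDA build of llama.cpp and the model file):
# import subprocess
# print(subprocess.run(cmd, capture_output=True, text=True, check=True).stdout)
```

Building the command as a list rather than a single string avoids quoting problems when a model path contains spaces.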

Technique 6: Memory Management for Smooth Operations

Imagine your computer's memory as a storage cabinet. If you cram too much into it, things slow down.

Smart Memory Management for Peak LLM Performance

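The RTX A6000, with its massive 48GB of memory, is a memory champion, but the budget is still finite: the model weights, the context cache that grows with every token, and some working space all have to fit. Keeping that total under 48GB, by picking a suitable quantization and context length, lets you handle larger models and more complex tasks without bottlenecks.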
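A quick back-of-the-envelope check can tell you whether a setup will fit before you load anything. The sketch below estimates the context (key/value) cache and adds it to the weights. The layer count, KV head count, and head size are taken from the published Llama 3 8B configuration rather than from this article, and the 15 GiB weight figure and 2 GiB reserve are rough assumptions.

```python
GIB = 1024 ** 3


def kv_cache_bytes(n_layers, n_kv_heads, head_dim, context_tokens,
                   bytes_per_value=2):
    """Size of the key/value cache for one sequence.

    The leading 2 accounts for storing both a key and a value vector
    per layer for every token in the context.
    """
    return 2 * n_layers * n_kv_heads * head_dim * context_tokens * bytes_per_value


def fits(weights_gib, kv_bytes, vram_gib=48, reserve_gib=2):
    """True if weights + cache + a working-space reserve fit in VRAM."""
    return weights_gib + kv_bytes / GIB + reserve_gib <= vram_gib


# Assumed Llama 3 8B shape: 32 layers, 8 KV heads, head dimension 128.
kv = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, context_tokens=8192)
print(f"KV cache at 8192 tokens: {kv / GIB:.2f} GiB")

# Roughly 15 GiB of F16 weights for an 8B model (an estimate).
print("8B at F16 fits on one card:", fits(weights_gib=15.0, kv_bytes=kv))
```

Because the cache grows linearly with context length, doubling the context doubles this part of the budget, which is often the first thing to trim when memory runs short.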

Technique 7: The Role of NVIDIA CUDA and Its Optimization

CUDA is a powerful tool that allows programmers to tap into the parallel processing power of NVIDIA GPUs. It's like a super-powered engine that allows you to push your LLMs to the limit.

CUDA's Contribution to LLM Performance on the RTX A6000

CUDA plays a pivotal role in maximizing LLM inference speed on the RTX A6000. By harnessing CUDA's capabilities for parallel computing, you can further optimize the performance of your LLMs.

Technique 8: Experimentation - The Key to Unlocking Hidden Performance

Think of it like trying different recipes to find the perfect taste. The same principle applies to optimizing LLMs on the RTX A6000.

Tune and Tweak for Maximum LLM Performance on the RTX A6000

Experimenting with different settings and parameters is key to unlocking the true potential of your LLM on the RTX A6000. By experimenting, you can find the optimal combination of settings for your specific use case, maximizing speed and efficiency.
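One systematic way to experiment is a small grid sweep: try every combination of a few settings, measure tokens per second for each, and keep the best. The sketch below shows the loop. The `fake_measure` function is a stand-in with a made-up formula so the example runs anywhere; in practice you would replace it with a function that runs your real benchmark, and the setting names here are illustrative.

```python
from itertools import product


def sweep(measure, grid):
    """Try every combination in `grid`; return (best_settings, best_score)."""
    names = list(grid)
    best_settings, best_score = None, float("-inf")
    for values in product(*(grid[name] for name in names)):
        settings = dict(zip(names, values))
        score = measure(settings)  # tokens per second in a real run
        if score > best_score:
            best_settings, best_score = settings, score
    return best_settings, best_score


def fake_measure(settings):
    """Placeholder score, NOT real benchmark data.

    Swap this for a function that runs your benchmark and returns
    the measured tokens per second.
    """
    return settings["batch_size"] ** 0.5 * (1 + settings["gpu_layers"] / 100)


grid = {"batch_size": [128, 256, 512], "gpu_layers": [0, 16, 33]}
best, score = sweep(fake_measure, grid)
print("best settings:", best)
```

Change one variable at a time when a full grid is too slow, and repeat each measurement a few times, since single runs can be noisy.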

Results: A Glimpse into the Performance Potential

| Model | Quantization | Generation (tokens/second) | Processing (tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4KM | 102.22 | 3621.81 |
| Llama 3 8B | F16 | 40.25 | 4315.18 |
| Llama 3 70B | Q4KM | 14.58 | 466.82 |
| Llama 3 70B | F16 | N/A | N/A |

Note: F16 performance for Llama 3 70B is not available in the provided data.

Observations:

- Q4KM Quantization: For the Llama 3 8B model, Q4KM quantization significantly boosts performance, achieving over 100 tokens per second for generation and thousands of tokens per second for processing.

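- F16 Precision: F16 keeps the weights at 16-bit precision, so it avoids the small accuracy loss of quantization, but for the Llama 3 8B model it generates far more slowly than Q4KM (40.25 vs. 102.22 tokens per second). Its only speed advantage is in processing, where it is modestly faster (4315.18 vs. 3621.81 tokens per second).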

- Llama 3 70B: Due to its massive size, Llama 3 70B exhibits slower performance than the Llama 3 8B model, even with Q4KM quantization.
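The size of these gaps is easier to see as ratios. The short script below computes them directly from the table above; the dictionary layout and the `ratio` helper are just one convenient way to organize the numbers.

```python
# Figures copied from the results table (tokens per second).
results = {
    ("Llama 3 8B", "Q4KM"): {"generation": 102.22, "processing": 3621.81},
    ("Llama 3 8B", "F16"): {"generation": 40.25, "processing": 4315.18},
    ("Llama 3 70B", "Q4KM"): {"generation": 14.58, "processing": 466.82},
}


def ratio(faster, slower, metric):
    """How many times faster one configuration is than another."""
    return results[faster][metric] / results[slower][metric]


q4_8b = ("Llama 3 8B", "Q4KM")
f16_8b = ("Llama 3 8B", "F16")
q4_70b = ("Llama 3 70B", "Q4KM")

print(f"8B generation, Q4KM vs F16: {ratio(q4_8b, f16_8b, 'generation'):.2f}x")
print(f"8B processing, F16 vs Q4KM: {ratio(f16_8b, q4_8b, 'processing'):.2f}x")
print(f"Q4KM generation, 8B vs 70B: {ratio(q4_8b, q4_70b, 'generation'):.2f}x")
```

In other words, Q4KM generates about 2.5 times faster than F16 on the 8B model, F16 processes about 1.2 times faster, and the 8B model generates about 7 times faster than the 70B model at the same quantization.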

FAQ

Q: What is the best way to choose an LLM for my project? A: The best LLM depends on your specific needs. Consider the size of the model, its intended use case, and your available resources. Smaller models like Llama 3 8B are suitable for smaller tasks while larger models like Llama 3 70B are better for more complex tasks.

Q: How much memory does the RTX A6000 48GB have? A: The RTX A6000 48GB comes with a whopping 48GB of GDDR6 memory, making it ideal for handling large LLM models and their hefty memory demands.

Q: What is the role of CUDA in LLM inference? A: CUDA enables programmers to access the parallel processing power of NVIDIA GPUs, significantly accelerating LLM inference.

Q: What are the benefits of using Q4KM quantization? A: Q4KM quantization dramatically reduces the memory footprint of the model, making it faster to load and run, and allowing for smoother inference.

Keywords

NVIDIA RTX A6000 48GB, LLM, local LLM, large language model, inference, performance optimization, quantization, Q4KM, F16, fine-tuning, memory management, Llama 3 8B, Llama 3 70B, Llama.cpp, CUDA, tokens per second, tokenization, multi-GPU, GPU