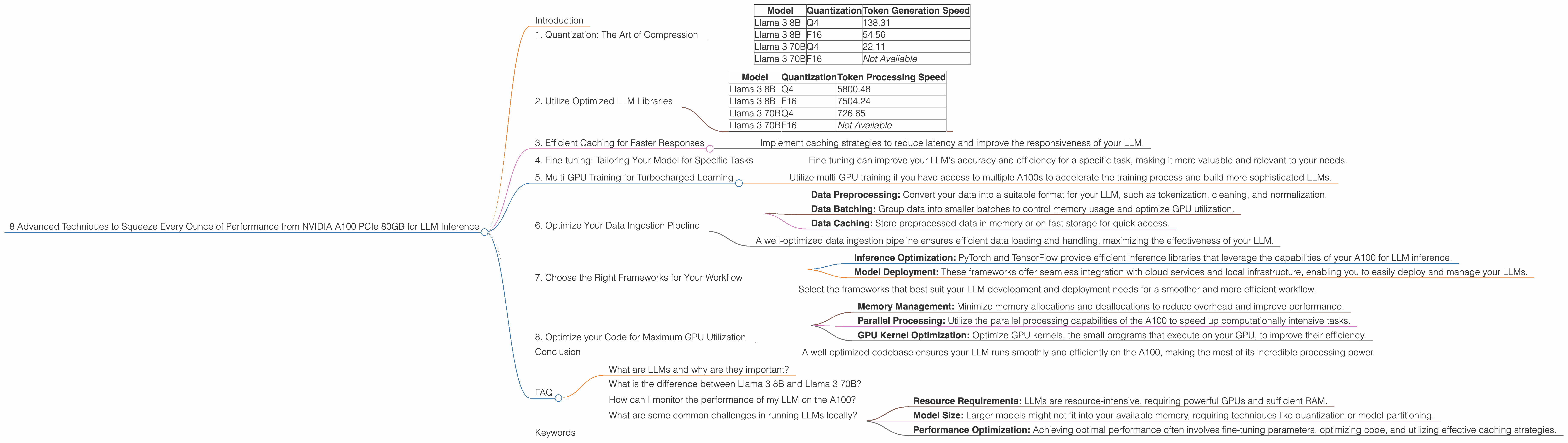

8 Advanced Techniques to Squeeze Every Ounce of Performance from NVIDIA A100 PCIe 80GB

Introduction

The world of Large Language Models (LLMs) is exploding, and with it, the need for powerful hardware to run them efficiently. NVIDIA's A100 PCIe 80GB GPU is a powerhouse, offering incredible performance for AI workloads, especially for running LLMs locally. But even with this high-end hardware, there are still techniques to optimize your setup and get the absolute most out of it.

This guide will explore 8 advanced techniques to maximize the performance of your NVIDIA A100 PCIe 80GB for LLM inference, taking you from a casual LLM enthusiast to a performance-tuning pro. We'll use real-world data from Llama 3 models, comparing different quantization levels and exploring key performance metrics.

Let's dive in!

1. Quantization: The Art of Compression

Quantization is like compressing a large video file to fit on a smaller memory card. It reduces the size of your LLM by representing each weight with fewer bits, much like saving an image with a reduced color palette: fewer shades, smaller file.

Lower-precision quantization (e.g., Q4, about 4 bits per weight) uses fewer bits, so the model takes less memory and generates tokens faster, at the cost of some accuracy. It makes smaller LLMs such as Llama 3 8B faster, and it lets larger ones such as Llama 3 70B fit into the A100's 80 GB of memory.
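To make the idea concrete, here is a minimal sketch of symmetric 4-bit quantization on one small block of weights. It is a simplified illustration, not the exact Q4 format used by any particular library; the function names and sample weights are made up for this example.

```python
def quantize_block(weights, bits=4):
    """Map a block of floats to signed integers sharing one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    # Fall back to a scale of 1.0 if the block is all zeros.
    scale = max(abs(w) for w in weights) / qmax or 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.05]
quantized, scale = quantize_block(weights)
restored = dequantize_block(quantized, scale)

# The rounding error per weight is at most half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(quantized, round(max_error, 4))
```

Each weight now needs 4 bits plus a share of the per-block scale, which is where the memory savings come from. The price is the rounding error measured by `max_error`.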

Table 1: Token Generation Speed (Tokens/second) for Llama 3 models on NVIDIA A100 PCIe 80GB

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4 | 138.31 |
| Llama 3 8B | F16 | 54.56 |
| Llama 3 70B | Q4 | 22.11 |
| Llama 3 70B | F16 | Not Available |

Observations:

- The Llama 3 8B model with Q4 quantization outperforms the F16 version by roughly 2.5x, achieving 138.31 tokens/second compared to 54.56 tokens/second.

- Llama 3 70B with Q4 quantization generates 22.11 tokens/second, roughly 6x slower than the 8B model at the same quantization level. No F16 result is available for the 70B model, so the table cannot show how much Q4 helps there.
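One plausible reason for the missing 70B F16 entry is memory: the weights alone may not fit on a single 80 GB card. The back-of-the-envelope estimate below is a sketch under stated assumptions. It treats Q4 as exactly 4 bits per weight (real formats store a little more) and ignores the KV cache and activations. The helper names are hypothetical.

```python
A100_MEMORY_GB = 80


def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits_on_a100(params_billions, bits_per_weight):
    """True if the weights alone fit in the card's memory."""
    return weight_memory_gb(params_billions, bits_per_weight) <= A100_MEMORY_GB


for name, params in [("Llama 3 8B", 8), ("Llama 3 70B", 70)]:
    for label, bits in [("Q4", 4), ("F16", 16)]:
        size = weight_memory_gb(params, bits)
        print(f"{name} {label}: ~{size:.0f} GB, fits: {fits_on_a100(params, bits)}")
```

Under these assumptions, the 70B model at F16 needs about 140 GB for weights, well beyond 80 GB, while the Q4 version needs about 35 GB.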

Key Takeaway:

- Choosing the right quantization level is crucial for maximizing performance. In these results, Q4 more than doubles token generation speed on the 8B model compared to F16.

2. Utilize Optimized LLM Libraries

Imagine having a dedicated chef preparing your favorite meal. Specialized inference libraries like llama.cpp provide optimized code for running LLMs on your A100, and benchmark projects like GPU-Benchmarks-on-LLM-Inference show what speeds to expect from them. These libraries use the GPU's capabilities more efficiently, resulting in faster inference times.

Table 2: Token Processing Speed (Tokens/second) for Llama 3 models on NVIDIA A100 PCIe 80GB

| Model | Quantization | Token Processing Speed (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4 | 5800.48 |
| Llama 3 8B | F16 | 7504.24 |
| Llama 3 70B | Q4 | 726.65 |
| Llama 3 70B | F16 | Not Available |

Observations:

- Llama 3 8B at F16 precision has a higher token processing speed than Q4 (7504.24 vs. 5800.48 tokens/second), so quantization does not speed up every stage of inference.

- Llama 3 70B with Q4 quantization processes 726.65 tokens/second, roughly 8x slower than the 8B model at Q4, reflecting the extra computation the larger model needs for every token.
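To see how the two tables combine, the sketch below estimates end-to-end response time for a single request. It assumes "token processing speed" refers to reading the prompt and "token generation speed" to producing the output. The prompt and output lengths are hypothetical, and the formula ignores startup and sampling overhead.

```python
def estimate_latency_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough request latency: time to read the prompt plus time to write the output."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical request: 1000-token prompt, 200-token answer.
PROMPT_TOKENS, OUTPUT_TOKENS = 1000, 200

# Speeds for Llama 3 8B taken from Table 1 (generation) and Table 2 (processing).
q4_latency = estimate_latency_s(PROMPT_TOKENS, OUTPUT_TOKENS, 5800.48, 138.31)
f16_latency = estimate_latency_s(PROMPT_TOKENS, OUTPUT_TOKENS, 7504.24, 54.56)

print(f"Q4:  {q4_latency:.2f} s")
print(f"F16: {f16_latency:.2f} s")
```

For this request shape, generation dominates the total time, so Q4 finishes sooner even though F16 reads the prompt faster. A workload with very long prompts and short answers would shift the balance.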

Key Takeaway:

- Optimized inference libraries like llama.cpp are crucial for unleashing the full potential of your A100, and published benchmarks such as GPU-Benchmarks-on-LLM-Inference give you a baseline to compare your own results against.

3. Efficient Caching for Faster Responses

Imagine having a personal assistant who remembers your preferences so they can respond faster. Similar to this, caching frequently used data can significantly accelerate LLM inference. It involves storing recently accessed information in a dedicated memory area, making it accessible quickly.

How it Works:

When your model needs to retrieve a specific piece of data, it first checks the cache. If it's available, the data is retrieved instantly. If not, the model fetches it from external storage and adds it to the cache for future use.
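The check-then-fetch flow described above can be sketched as a small least-recently-used (LRU) cache. This is an illustrative sketch, not how any particular inference library caches data; `LRUCache` and `slow_generate` are hypothetical names, and `slow_generate` merely stands in for an expensive model call.

```python
from collections import OrderedDict


class LRUCache:
    """Keep the most recently used results, evicting the oldest when full."""

    def __init__(self, capacity):
        self.capacity = capacity
        self._items = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get_or_compute(self, key, compute):
        if key in self._items:
            # Cache hit: mark as recently used and return the stored value.
            self._items.move_to_end(key)
            self.hits += 1
            return self._items[key]
        # Cache miss: compute the value, store it, and evict if over capacity.
        self.misses += 1
        value = compute(key)
        self._items[key] = value
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)
        return value


def slow_generate(prompt):
    """Hypothetical stand-in for an expensive model call."""
    return prompt.upper()


cache = LRUCache(capacity=2)
first = cache.get_or_compute("hello", slow_generate)   # miss, computed
second = cache.get_or_compute("hello", slow_generate)  # hit, served from cache
print(cache.hits, cache.misses)
```

The capacity limit matters on a GPU server, because cached data competes with the model for memory.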

Key Takeaway:

- Implement caching strategies to reduce latency and improve the responsiveness of your LLM.

4. Fine-tuning: Tailoring Your Model for Specific Tasks

Imagine customizing your car's engine for optimal performance on a specific race track. Fine-tuning your LLM involves training it on a specific dataset relevant to your application. This enhances its performance and accuracy for that particular task.

How it Works:

Fine-tuning takes an existing LLM and adapts its weights to better suit your specialized data. This process involves feeding the LLM with examples from your dataset, adjusting its parameters to match the specific patterns and nuances of your application.
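The core loop, start from existing weights and nudge them toward new data, can be shown on a toy model. The sketch below fine-tunes a two-parameter linear model with gradient descent. It is only an analogy for what happens at LLM scale, and the "pretrained" values and task data are invented for the example.

```python
def mse(w, b, data):
    """Mean squared error of the model y = w * x + b on the dataset."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def fine_tune(w, b, data, lr=0.05, steps=500):
    """Adjust existing parameters with plain gradient descent on new data."""
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Hypothetical "pretrained" parameters and task-specific data (y = 2x + 0.5).
pretrained_w, pretrained_b = 1.0, 0.0
task_data = [(0.0, 0.5), (1.0, 2.5), (2.0, 4.5), (3.0, 6.5)]

loss_before = mse(pretrained_w, pretrained_b, task_data)
tuned_w, tuned_b = fine_tune(pretrained_w, pretrained_b, task_data)
loss_after = mse(tuned_w, tuned_b, task_data)
print(loss_before, loss_after)
```

The parameters start from their pretrained values rather than from scratch, and the loss on the task data drops as they adapt.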

Key Takeaway:

- Fine-tuning can improve your LLM's accuracy and efficiency for a specific task, making it more valuable and relevant to your needs.

5. Multi-GPU Training for Turbocharged Learning

Imagine a team of builders working together on a complex structure. Using multiple GPUs in parallel allows you to speed up the training process dramatically. It involves distributing the training workload across multiple GPUs, achieving significantly faster learning times.

How it Works:

The LLM's training data is split into smaller chunks, each processed by a dedicated GPU. The results from each GPU are combined to update the model's parameters more quickly than processing the entire dataset on a single GPU.
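The split-and-combine step can be simulated on the CPU to show why it works. In this sketch, each "GPU" is just a list slice, and the model is a one-parameter toy. With equally sized shards, averaging the per-shard gradients gives the same result as computing the gradient over the full batch. All names and data here are illustrative.

```python
def gradient(w, shard):
    """Mean-squared-error gradient for the model y = w * x on one shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def split(data, num_workers):
    """Split data into equal shards (assumes len(data) is divisible by num_workers)."""
    size = len(data) // num_workers
    return [data[i * size:(i + 1) * size] for i in range(num_workers)]


# Hypothetical dataset following y = 3x, and an untrained weight.
data = [(float(x), 3.0 * x) for x in range(1, 9)]
w = 0.0

# Each simulated GPU computes a gradient on its own shard.
shards = split(data, num_workers=4)
per_gpu_grads = [gradient(w, shard) for shard in shards]

# Combining step: average the per-GPU gradients.
averaged_grad = sum(per_gpu_grads) / len(per_gpu_grads)
full_batch_grad = gradient(w, data)
print(averaged_grad, full_batch_grad)
```

On real hardware the shards are processed at the same time, so the wall-clock cost of each step shrinks while the update stays the same.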

Key Takeaway:

- Utilize multi-GPU training if you have access to multiple A100s to accelerate the training process and build more sophisticated LLMs.

6. Optimize Your Data Ingestion Pipeline

Imagine a restaurant where the kitchen is well-organized and the staff efficiently handles orders. Similarly, optimizing your data ingestion pipeline improves the flow of data into your LLM. This involves streamlining the process of loading and preparing data before feeding it into the model.

How it Works:

- Data Preprocessing: Convert your data into a suitable format for your LLM through steps such as cleaning, normalization, and tokenization.

- Data Batching: Group data into smaller batches to control memory usage and optimize GPU utilization.

- Data Caching: Store preprocessed data in memory or on fast storage for quick access.
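The three steps above can be sketched as a tiny pipeline. This is a minimal illustration: the whitespace tokenizer is a placeholder for a real LLM tokenizer, and all function names and sample texts are hypothetical.

```python
def preprocess(text):
    """Clean and normalize: lowercase and collapse repeated whitespace."""
    return " ".join(text.lower().split())


def tokenize(text):
    """Placeholder whitespace tokenizer; a real LLM uses a subword tokenizer."""
    return text.split()


token_cache = {}


def cached_tokens(raw_text):
    """Preprocess and tokenize once per distinct input, then reuse the result."""
    if raw_text not in token_cache:
        token_cache[raw_text] = tokenize(preprocess(raw_text))
    return token_cache[raw_text]


def batches(items, batch_size):
    """Yield fixed-size groups; the last batch may be smaller."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


raw_texts = ["  Hello   World ", "Fast GPUs", "Llama 3  on A100", "Batch me", "Last one"]
tokenized = [cached_tokens(text) for text in raw_texts]
batched = list(batches(tokenized, batch_size=2))
print([len(batch) for batch in batched])
```

The batch size is the main knob here: larger batches keep the GPU busier but use more memory per step.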

Key Takeaway:

- A well-optimized data ingestion pipeline ensures efficient data loading and handling, maximizing the effectiveness of your LLM.

7. Choose the Right Frameworks for Your Workflow

Imagine using the right tools for a specific project. Employing the appropriate frameworks for your LLM workflow, such as PyTorch or TensorFlow, can optimize performance and simplify development. These frameworks offer specialized libraries and tools tailored for LLM development and deployment.

How it Works:

- Inference Optimization: PyTorch and TensorFlow provide efficient inference libraries that leverage the capabilities of your A100 for LLM inference.

- Model Deployment: These frameworks offer seamless integration with cloud services and local infrastructure, enabling you to easily deploy and manage your LLMs.

Key Takeaway:

- Select the frameworks that best suit your LLM development and deployment needs for a smoother and more efficient workflow.

8. Optimize Your Code for Maximum GPU Utilization

Imagine optimizing your car's engine to run smoothly and efficiently. Similarly, optimizing your code ensures maximum GPU utilization for your LLM. This involves examining your code and identifying areas for improvement:

- Memory Management: Minimize memory allocations and deallocations to reduce overhead and improve performance.

- Parallel Processing: Utilize the parallel processing capabilities of the A100 to speed up computationally intensive tasks.

- GPU Kernel Optimization: Optimize GPU kernels, the small programs that execute on your GPU, to improve their efficiency.

Key Takeaway:

- A well-optimized codebase ensures your LLM runs smoothly and efficiently on the A100, making the most of its incredible processing power.

Conclusion

Harnessing the power of NVIDIA A100 PCIe 80GB requires going beyond basic configurations. By implementing these advanced techniques, you can unlock the true potential of your A100 and squeeze every ounce of performance from your LLM inference.

Remember, performance optimization is an iterative process, and there's always room for improvement. Experiment with different techniques, monitor your results, and refine your approach based on your specific use case.

FAQ

What are LLMs and why are they important?

LLMs are a type of AI model that can understand and generate human-like text. They are revolutionizing industries like customer service, content creation, and even scientific research.

What is the difference between Llama 3 8B and Llama 3 70B?

The "B" stands for billions. Llama 3 8B has 8 billion parameters, while Llama 3 70B has 70 billion parameters. Larger models are generally more powerful but require more resources to run.

How can I monitor the performance of my LLM on the A100?

You can use NVIDIA tools such as nvidia-smi to monitor the GPU's utilization, memory usage, and other metrics, and profilers such as Nsight Systems to dig deeper. Profiling your LLM's performance can help identify bottlenecks and areas for improvement.
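One way to collect these metrics from a script is the `nvidia-smi` command-line tool that ships with the NVIDIA driver. The sketch below queries utilization and memory in CSV form and parses the result. The helper names and the sample line are made up for illustration, and `read_gpu_stats` only works on a machine where `nvidia-smi` is installed.

```python
import subprocess

QUERY_FIELDS = "utilization.gpu,memory.used,memory.total"


def parse_gpu_stats(csv_text):
    """Parse 'util, used, total' lines (one per GPU) into dictionaries."""
    stats = []
    for line in csv_text.strip().splitlines():
        util, used, total = (float(field) for field in line.split(","))
        stats.append({
            "util_percent": util,
            "memory_used_mib": used,
            "memory_total_mib": total,
        })
    return stats


def read_gpu_stats():
    """Run nvidia-smi and return one stats dictionary per GPU."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY_FIELDS}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpu_stats(result.stdout)


# Made-up sample output, used here so the parser can be tried without a GPU.
sample_output = "87, 40536, 81920\n"
sample_stats = parse_gpu_stats(sample_output)
print(sample_stats)
```

Calling `read_gpu_stats()` in a loop while your model is generating gives a simple view of whether the GPU is actually kept busy.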

What are some common challenges in running LLMs locally?

- Resource Requirements: LLMs are resource-intensive, requiring powerful GPUs and sufficient RAM.

- Model Size: Larger models might not fit into your available memory, requiring techniques like quantization or model partitioning.

- Performance Optimization: Achieving optimal performance often involves fine-tuning parameters, optimizing code, and utilizing effective caching strategies.

Keywords

NVIDIA A100, PCIe, 80GB, LLM, Llama 3, Quantization, Q4, F16, Token Generation, Token Processing, Optimization, Inference, Performance, Caching, Fine-tuning, Multi-GPU, Data Ingestion, Frameworks, Code Optimization, GPU Utilization.