7 Tricks to Avoid Out of Memory Errors on NVIDIA 3090 24GB x2

## Introduction: The Power of Large Language Models and the Frustration of Memory Limits

Large language models (LLMs) are revolutionizing the way we interact with computers. Imagine a machine that can understand your questions, write creative content, and even translate languages – that's the power of LLMs. However, training and running these models can be computationally intensive, especially with their ever-growing size. This is where the dreaded "Out of Memory" (OOM) errors come in – like a frustrating roadblock in your AI adventures.

Training or running LLMs often requires far more memory than a standard computer offers, and even high-end hardware hits its limits quickly. With two 3090 GPUs, you might be tempted to think: "Finally, I have enough VRAM! Let's throw everything at it!" But wait, there's a catch! The two cards add up to 48 GB, yet they do not behave like a single 48 GB pool. The model has to be split across them, and each GPU can still run out of its own 24 GB.

This article explores the common problems users face when running LLMs on an NVIDIA 3090 24GB x2 setup and equips you with seven practical techniques to avoid OOM errors. Let's dive in!

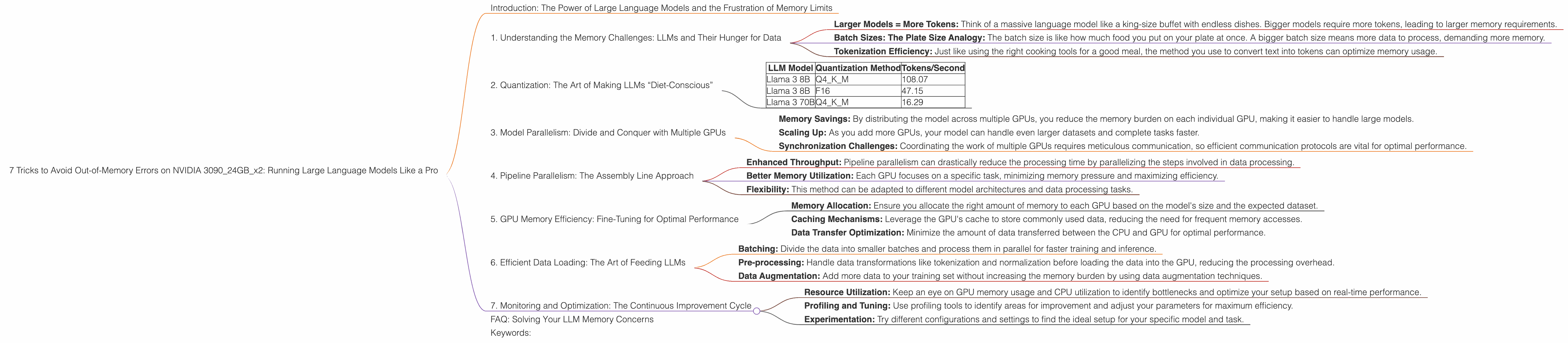

## 1. Understanding the Memory Challenges: LLMs and Their Hunger for Data

Imagine LLMs as voracious eaters: they crave vast amounts of data to learn and perform well. Every word, every sentence, every piece of code gets split into “tokens” (whole words or fragments of words) before your GPU can process it. Converting text into tokens is like preparing the ingredients for a meal, but for a powerful language model.

Let's break it down:

- Larger Models = More Parameters: Think of a massive language model like a king-size buffet with endless dishes. Bigger models have more parameters (weights), and every parameter has to sit in GPU memory, leading to larger memory requirements.

- Batch Sizes: The Plate Size Analogy: The batch size is like how much food you put on your plate at once. A bigger batch size means more data to process, demanding more memory.

- Tokenization Efficiency: Just like using the right cooking tools for a good meal, the tokenizer matters. One that represents the same text with fewer tokens leaves the GPU with less to hold in memory per request.
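
To make the points above concrete, here is a rough back-of-envelope sketch of where the memory goes during inference. The model shape (32 layers, 8 key/value heads, head size 128) is an assumption chosen to resemble an 8B-class model, and the function names are made up for this illustration. Real frameworks add overhead on top of these numbers.

```python
# Back-of-envelope memory estimate for LLM inference.
# All model dimensions used here are illustrative assumptions.

GIB = 1024 ** 3


def weight_bytes(n_params: float, bits_per_weight: float) -> float:
    """Memory needed just to hold the model weights."""
    return n_params * bits_per_weight / 8


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_tokens: int, batch_size: int,
                   bytes_per_value: int = 2) -> int:
    """Memory for the attention key/value cache.

    One key vector and one value vector are stored per layer,
    per KV head, per token, for every sequence in the batch.
    """
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value
    return per_token * context_tokens * batch_size


if __name__ == "__main__":
    weights = weight_bytes(8e9, bits_per_weight=16)
    print(f"weights: {weights / GIB:.1f} GiB")
    # The cache grows linearly with batch size (the "plate size").
    for batch in (1, 4, 16):
        kv = kv_cache_bytes(32, 8, 128, context_tokens=8192, batch_size=batch)
        print(f"batch {batch:>2}: KV cache {kv / GIB:.1f} GiB")
```

The weights are a fixed cost, while the cache scales with both batch size and context length, which is why a model that loads fine can still run out of memory once requests get long or numerous.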

But how do we navigate the world of tokens and memory limits? Let's explore some tricks to overcome these challenges.

## 2. Quantization: The Art of Making LLMs “Diet-Conscious”

Imagine reducing the size of a digital photograph without losing too much quality – that's essentially what quantization does for LLMs. It stores each of the model's parameters with fewer bits, making the model smaller and more memory-efficient. It's like using a recipe to make the same dish with less flour – the final product still tastes great, but it takes up less room in your pantry.

Let's look at the two weight formats compared below:

- Q4KM: A 4-bit quantization format (written Q4_K_M in llama.cpp). This is the "diet" version of your LLM: each parameter takes less than a third of the space it needs in F16, so much larger models fit into your GPU's memory.

- F16: 16-bit floating point. Strictly speaking, this is not quantization but the half-precision baseline. It halves memory demands compared to 32-bit floats while keeping the weights essentially unchanged.

Let's see how these two formats affect generation speed on our 3090 24GB x2 setup:

| LLM Model | Quantization Method | Tokens/Second |
|---|---|---|
| Llama 3 8B | Q4KM | 108.07 |
| Llama 3 8B | F16 | 47.15 |
| Llama 3 70B | Q4KM | 16.29 |

A few observations:

- Smaller Models, Higher Speed: The 8B Llama model with Q4KM quantization achieves the highest token speed at 108.07 tokens/second, roughly 2.3 times the 47.15 tokens/second of the same model in F16. The much larger 70B model manages 16.29 tokens/second with Q4KM.

- Trade-off Between Accuracy and Speed: While quantization can improve speed and memory usage, it might slightly affect the model's accuracy. Choosing the ideal quantization level is like finding the perfect balance between "diet" and "delicious."
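
To show the basic idea behind 4-bit quantization, here is a deliberately simplified sketch. It is not the actual Q4KM algorithm, which is more elaborate. It only illustrates the principle: a block of weights shares one scale factor, and each weight is stored as a small integer. The function names are invented for this example.

```python
# Toy block-wise 4-bit quantization (a simplified illustration,
# NOT the real Q4KM scheme).

def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Map floats to integer codes in [-7, 7] plus one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    block = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.02, 0.29]
    scale, codes = quantize_block(block)
    restored = dequantize_block(scale, codes)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print("codes:", codes)
    print(f"largest rounding error: {worst:.4f}")
```

Each code fits in 4 bits instead of 16, and the rounding error per weight is at most half the scale. That small error is the accuracy cost mentioned in the trade-off above.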

## 3. Model Parallelism: Divide and Conquer with Multiple GPUs

Imagine building a complex structure with a team of skilled workers – everyone has a specific role and collaborates to achieve the final goal. That's essentially what model parallelism does. It divides the LLM into smaller parts, each handled by a separate GPU, so a model that is too large for one 24 GB card can still run across two.

Key Points to Remember:

- Memory Savings: By distributing the model across multiple GPUs, you reduce the memory burden on each individual GPU, making it easier to handle large models.

- Scaling Up: As you add more GPUs, you can load larger models or leave more room for longer contexts and bigger batches.

- Synchronization Challenges: The GPUs have to exchange intermediate results at every split point, so the speed of the link between them (PCIe or an NVLink bridge) directly affects performance.
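
One simple way to divide a model is by layers. The sketch below assigns contiguous ranges of layers to each GPU in proportion to its free memory. The function name `split_layers` and the layer counts are assumptions for illustration. Inference frameworks ship their own splitting logic, so treat this as a picture of the idea rather than any library's behavior.

```python
def split_layers(n_layers: int, free_gib: list[float]) -> list[range]:
    """Assign contiguous layer ranges to GPUs, proportional to free memory."""
    total = sum(free_gib)
    ranges = []
    start = 0
    cumulative = 0.0
    for i, free in enumerate(free_gib):
        cumulative += free
        if i == len(free_gib) - 1:
            # The last GPU takes whatever remains, so no layer is dropped.
            end = n_layers
        else:
            end = round(n_layers * cumulative / total)
        ranges.append(range(start, end))
        start = end
    return ranges


if __name__ == "__main__":
    # Assumed: an 80-layer model on two cards with equal free memory.
    for gpu, layers in enumerate(split_layers(80, [24.0, 24.0])):
        print(f"GPU {gpu}: layers {layers.start}..{layers.stop - 1}")
```

If one card also drives your display and has less free memory, passing something like `[20.0, 24.0]` shifts a few layers to the other GPU.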

## 4. Pipeline Parallelism: The Assembly Line Approach

Imagine a factory with an assembly line – each station performs a specific function, ultimately contributing to the production of a finished product. Pipeline parallelism works similarly for LLMs. It splits the model into consecutive stages (groups of layers), with each stage handled by a separate GPU. While one GPU works on the second stage of one batch, the other can already start the first stage of the next, which improves throughput when many batches are in flight.

Key Benefits:

- Enhanced Throughput: With several micro-batches in the pipeline, all GPUs stay busy at once instead of waiting for each other, which reduces total processing time.

- Better Memory Utilization: Each GPU focuses on a specific task, minimizing memory pressure and maximizing efficiency.

- Flexibility: This method can be adapted to different model architectures and data processing tasks.
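
The assembly-line effect can be worked out with a little arithmetic. The sketch below assumes an idealized forward-only pipeline in which every stage takes exactly one time step per micro-batch. Real stages are rarely that balanced, so read the numbers as an upper bound on the benefit. The function names are made up for this example.

```python
def pipeline_steps(n_stages: int, n_microbatches: int) -> int:
    """Time steps when stages overlap work on different micro-batches."""
    return n_stages + n_microbatches - 1


def sequential_steps(n_stages: int, n_microbatches: int) -> int:
    """Time steps when only one stage is active at any moment."""
    return n_stages * n_microbatches


def bubble_fraction(n_stages: int, n_microbatches: int) -> float:
    """Share of GPU time slots left idle while the pipeline fills and drains."""
    return (n_stages - 1) / pipeline_steps(n_stages, n_microbatches)


if __name__ == "__main__":
    for m in (1, 4, 8):
        print(f"{m} micro-batches on 2 GPUs: "
              f"{pipeline_steps(2, m)} steps pipelined, "
              f"{sequential_steps(2, m)} sequential, "
              f"{bubble_fraction(2, m):.0%} idle")
```

With a single micro-batch the pipeline gains nothing, because the second GPU simply waits for the first. The throughput benefit only appears once several micro-batches are in flight.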

## 5. GPU Memory Efficiency: Fine-Tuning for Optimal Performance

Just like a chef carefully chooses ingredients to optimize a recipe, tuning how your GPUs' memory is used can maximize performance and minimize memory consumption. These are a few critical aspects to consider:

- Memory Allocation: Decide how much of the model each GPU holds based on the model's size, and leave headroom for the expected context length and batch size.

- Caching Mechanisms: Reuse data that is already on the GPU, such as results computed for earlier tokens, instead of recomputing or reloading it. Keep in mind that caches occupy memory too.

- Data Transfer Optimization: Minimize the amount of data transferred between the CPU and GPU for optimal performance.

## 6. Efficient Data Loading: The Art of Feeding LLMs

Imagine efficiently organizing a catering event – you wouldn't just dump all the food on the table. Similarly, efficient data loading is crucial for smooth LLM training and inference. Consider these steps:

- Batching: Divide the data into batches small enough to fit in GPU memory. The items within a batch are processed in parallel, while the batches themselves are fed through one after another.

- Pre-processing: Handle data transformations like tokenization and normalization before loading the data into the GPU, reducing the processing overhead.

- Data Augmentation: Generate variations of your training examples on the fly, batch by batch, rather than storing an enlarged dataset. This adds variety to training without increasing the memory burden.
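
As one possible take on the batching step, the sketch below groups tokenized sequences so that each padded batch stays under a fixed token budget. The helper name `batch_by_token_budget` and the budget value are assumptions for this example. Sorting by length is a common trick to reduce padding, though it changes the order in which examples are seen.

```python
from typing import Iterator


def batch_by_token_budget(sequences: list[list[int]],
                          max_padded_tokens: int) -> Iterator[list[list[int]]]:
    """Yield batches whose padded size (batch size x longest sequence)
    stays within max_padded_tokens."""
    batch: list[list[int]] = []
    longest = 0
    # Sorting groups sequences of similar length, which wastes less padding.
    for seq in sorted(sequences, key=len):
        new_longest = max(longest, len(seq))
        if batch and (len(batch) + 1) * new_longest > max_padded_tokens:
            yield batch
            batch, new_longest = [], len(seq)
        batch.append(seq)
        longest = new_longest
    if batch:
        yield batch


if __name__ == "__main__":
    # Dummy token IDs; only the lengths matter here.
    data = [[0] * n for n in (3, 10, 2, 9, 4)]
    for b in batch_by_token_budget(data, max_padded_tokens=12):
        print([len(s) for s in b])
```

Note that a single sequence longer than the budget is still emitted as a batch of its own, so truncating over-long inputs beforehand remains your job.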

## 7. Monitoring and Optimization: The Continuous Improvement Cycle

Just like a chef constantly monitors and adjusts their cooking process to ensure optimal results, monitoring your LLM setup is crucial for maintaining optimal efficiency and avoiding memory issues.

- Resource Utilization: Keep an eye on GPU memory usage and CPU utilization to identify bottlenecks and optimize your setup based on real-time performance.

- Profiling and Tuning: Use profiling tools to identify areas for improvement and adjust your parameters for maximum efficiency.

- Experimentation: Try different configurations and settings to find the ideal setup for your specific model and task.
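
For keeping an eye on GPU memory, here is a small sketch that reads per-GPU usage from `nvidia-smi` and flags cards that are close to full. It assumes `nvidia-smi` is on your PATH. The 90% threshold and the helper names are arbitrary choices for this example.

```python
import subprocess

# Ask nvidia-smi for plain CSV: GPU index, used and total memory in MiB.
QUERY = ["nvidia-smi",
         "--query-gpu=index,memory.used,memory.total",
         "--format=csv,noheader,nounits"]


def parse_gpu_memory(csv_text: str) -> list[dict]:
    """Parse lines such as '0, 18234, 24576' into dictionaries."""
    gpus = []
    for line in csv_text.strip().splitlines():
        index, used, total = (field.strip() for field in line.split(","))
        gpus.append({"index": int(index),
                     "used_mib": int(used),
                     "total_mib": int(total),
                     "used_fraction": int(used) / int(total)})
    return gpus


def read_gpu_memory() -> list[dict]:
    """Run nvidia-smi and return the current memory usage per GPU."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_gpu_memory(result.stdout)


def gpus_near_limit(gpus: list[dict], threshold: float = 0.9) -> list[int]:
    """Indices of GPUs whose memory usage is at or above the threshold."""
    return [g["index"] for g in gpus if g["used_fraction"] >= threshold]


if __name__ == "__main__":
    usage = read_gpu_memory()
    for g in usage:
        print(f"GPU {g['index']}: {g['used_mib']} / {g['total_mib']} MiB")
    print("near limit:", gpus_near_limit(usage))
```

Running this in a loop while you raise the batch size or context length shows how close each card gets to its limit before an OOM error actually hits.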

## FAQ: Solving Your LLM Memory Concerns

Q: Can I run a 70B LLM on my 3090 24GB x2 setup without any issues?

A: It's possible, but it will be a tight squeeze. With 4-bit quantization (like Q4KM) and the model split across both GPUs, a 70B LLM fits. The benchmark table above lists 16.29 tokens/second for this configuration.
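
The "tight squeeze" can be checked with quick arithmetic. The sketch below assumes roughly 4.8 bits per weight for a 4-bit format like Q4KM (an approximation) and reserves a hypothetical 2 GiB of headroom per GPU for the cache and runtime overhead. Both numbers are assumptions you should adjust for your own setup.

```python
GIB = 1024 ** 3


def fits(n_params: float, bits_per_weight: float,
         gpu_gib: list[float], headroom_gib_per_gpu: float = 2.0) -> bool:
    """True if the weights fit in the combined usable GPU memory.

    Assumes the weights can be split across the GPUs without waste.
    """
    usable_bytes = sum(g - headroom_gib_per_gpu for g in gpu_gib) * GIB
    return n_params * bits_per_weight / 8 <= usable_bytes


if __name__ == "__main__":
    two_3090s = [24.0, 24.0]
    # 4.8 bits per weight is an assumed average for a 4-bit format.
    print("70B at ~4.8 bits:", fits(70e9, 4.8, two_3090s))
    print("70B at 16 bits:  ", fits(70e9, 16, two_3090s))
    print("8B at 16 bits:   ", fits(8e9, 16, two_3090s))
```

Under these assumptions the 4-bit 70B model leaves only a few GiB to spare, while the 16-bit version is far out of reach. That matches the benchmark table, which has no F16 row for the 70B model.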

Q: What are the best practices for avoiding Out-of-Memory errors when running LLMs?

A: We covered many strategies in this article. In short, consider quantization, model parallelism, and efficient memory allocation based on your LLM and dataset size.

Q: How can I optimize my data loading process for better performance?

A: Use batching, pre-processing techniques, and consider data augmentation to streamline your data loading, reduce memory pressure, and improve overall efficiency.

Keywords:

LLM, Large Language Model, NVIDIA 3090, GPU, Out-of-Memory, OOM, Tokens, Quantization, Q4KM, F16, Model Parallelism, Pipeline Parallelism, Memory Efficiency, Data Loading, Batching, Pre-processing, Data Augmentation, Monitoring, Optimization, Deep Learning, AI, Machine Learning, NLP, Natural Language Processing