7 Tricks to Avoid Out of Memory Errors on NVIDIA 3080 Ti 12GB

Introduction

Are you a developer or geek diving into the exciting world of local LLMs? Then you've probably encountered the infamous "out-of-memory" error, a notorious villain that can bring your AI adventures to a screeching halt. This error occurs when a model needs more memory than your GPU has available, so it fails to load or crashes partway through a run.

This article will guide you through 7 practical tricks to tame memory monsters, specifically on the NVIDIA 3080 Ti 12GB, so you can run larger models than would otherwise fit without hitting those frustrating memory walls.

The NVIDIA 3080 Ti 12GB: A Powerful But Limited Ally

The NVIDIA 3080 Ti 12GB is a powerhouse for AI tasks, but its 12GB of VRAM isn't infinite. For smaller quantized models like Llama 7B, you'll likely have no issues. But for heavier models like Llama 70B, whose weights alone exceed 12GB even when quantized, you'll need to employ some clever strategies and accept some trade-offs.

Trick #1: Quantization - Shrinking Models Without Losing Their Smarts

Imagine you're a magician with a giant, complex magic show. You can't carry everything in your pocket, so you cleverly shrink it down without losing the magic. That's what quantization does for LLMs.

Quantization converts the model's weights from higher-precision numbers (like 32-bit or 16-bit floats) to lower-precision ones (like 8-bit or 4-bit integers). This significantly reduces the model's memory footprint, enabling you to run larger models on GPUs with limited VRAM.

Think of it like compressing a photo. You compress a 10MB image to 1MB without losing too much quality – the same magic works with LLMs!

But beware: Quantization can slightly reduce accuracy. We'll discuss the trade-offs later in the article.
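To make the idea concrete, here is a minimal sketch of symmetric 8-bit quantization on a handful of made-up weights. It is a toy illustration under simple assumptions (one scale factor for the whole list, hypothetical function names), not how any particular LLM runtime implements quantization.

```python
from array import array

def quantize_int8(weights):
    """Symmetric 8-bit quantization: map floats to integers in [-127, 127]."""
    # One shared scale factor; fall back to 1.0 if every weight is zero.
    scale = max(abs(w) for w in weights) / 127 or 1.0
    quantized = array("b", (round(w / scale) for w in weights))
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]

weights = [0.12, -0.5, 0.33, 0.9, -0.07, 0.0]   # made-up example values
original = array("f", weights)                   # 32-bit floats, 4 bytes each
quantized, scale = quantize_int8(weights)        # 8-bit integers, 1 byte each
restored = dequantize(quantized, scale)

bytes_fp32 = original.itemsize * len(original)
bytes_int8 = quantized.itemsize * len(quantized)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(f"fp32: {bytes_fp32} bytes, int8: {bytes_int8} bytes")
print(f"largest rounding error: {max_error:.4f}")
```

The storage drops to a quarter of the 32-bit size, and the rounding error per weight is bounded by half the scale factor. That small error is the accuracy cost mentioned above.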

Trick #2: Choose the Right Model and Configuration

Just like a car, LLMs come in different sizes and configurations. Choosing the right model is crucial for avoiding memory issues.

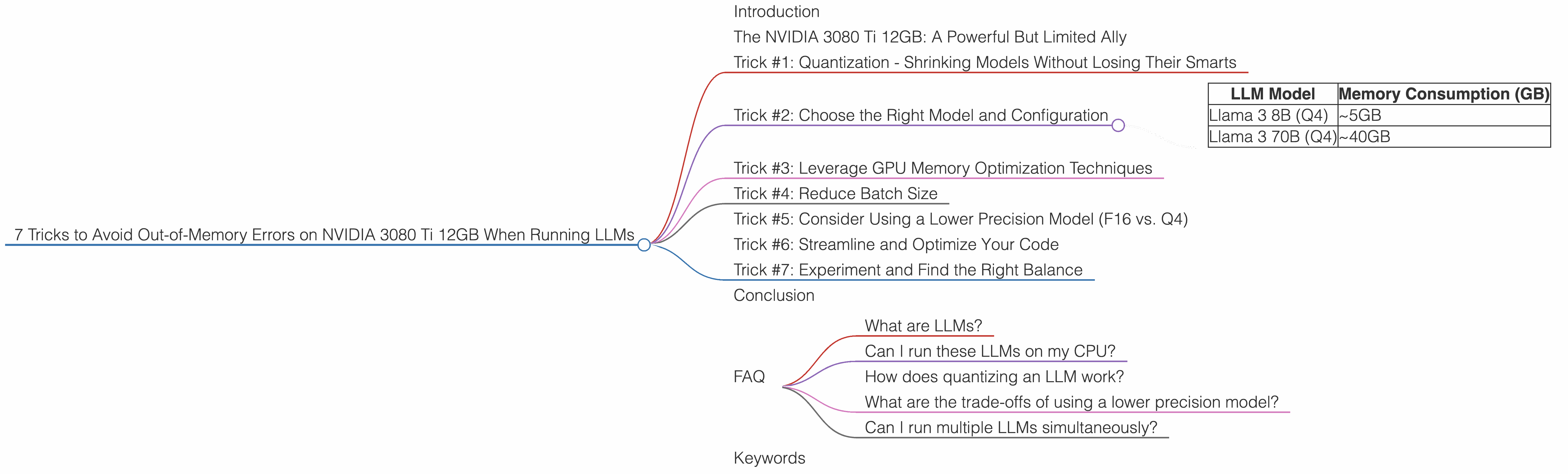

Here's a breakdown of memory consumption for various Llama models:

| Model | Approx. Memory (GB) |
|---|---|
| Llama 3 8B (Q4) | ~5 |
| Llama 3 70B (Q4) | ~40 |

It's important to note: We are not comparing the performance of different models here, but simply providing information on their memory requirements.

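As you can see, the difference is significant. The 3080 Ti 12GB can comfortably handle Llama 3 8B at Q4, but Llama 3 70B at Q4 needs more than three times the card's VRAM. It cannot be loaded fully onto the GPU, so running it means offloading most of the model to system RAM, which is far slower.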

IMPORTANT: No measurements were available for Llama 3 70B or Llama 3 8B (F16) running on the 3080 Ti 12GB, so the figures above are approximate memory requirements, not benchmark results from this card.
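A rough way to sanity-check figures like those in the table is to multiply the parameter count by the bits stored per weight. The sketch below assumes about 4.5 bits per weight for a Q4 format (4-bit values plus per-block scale factors) and counts weights only; context buffers and runtime overhead come on top, which is why real usage lands somewhat higher. The function name is hypothetical.

```python
def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate size of the model weights alone, in decimal GB."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9

VRAM_GB = 12     # NVIDIA 3080 Ti
Q4_BITS = 4.5    # assumption: 4-bit values plus per-block scale factors

for name, params in [("Llama 3 8B", 8), ("Llama 3 70B", 70)]:
    size = weight_memory_gb(params, Q4_BITS)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{name} (Q4): ~{size:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```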

Trick #3: Leverage GPU Memory Optimization Techniques

Modern GPUs have powerful built-in tools that can help you manage memory efficiently. Let's take a closer look:

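1. Per-Process Memory Limits: The GPU does not reserve VRAM per process on its own, but frameworks can cap their own usage. PyTorch, for example, provides torch.cuda.set_per_process_memory_fraction, which stops one process from claiming all 12GB and leaving nothing for other programs or for the desktop.

2. Unified Memory: CUDA Unified Memory gives the CPU and GPU a single address space and migrates data between system RAM and VRAM on demand. On a discrete card like the 3080 Ti the two memories are still physically separate, so data that spills into system RAM is reached over PCIe and is much slower. It can keep an oversized workload from crashing, but at a cost in speed.

3. Caching: Caching keeps frequently used data in fast memory so it does not have to be recomputed or fetched again. It improves speed rather than saving memory. An LLM's key/value cache, for instance, grows with context length, so shortening the context window is one way to reclaim VRAM.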
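As one possible illustration of a per-process cap, the sketch below assumes PyTorch is your framework. The calls torch.cuda.set_per_process_memory_fraction and torch.cuda.mem_get_info are real PyTorch functions; the wrapper name cap_gpu_memory is hypothetical. The function returns None when PyTorch or a CUDA device is unavailable, so it is safe to run anywhere.

```python
def cap_gpu_memory(fraction=0.9, device=0):
    """Limit this process to a fraction of the GPU's VRAM (PyTorch only).

    Returns (free_gib, total_gib) after applying the cap, or None when
    PyTorch or a CUDA device is not available.
    """
    try:
        import torch
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return None

    # Allocations by this process beyond the cap raise an out-of-memory
    # error instead of consuming the whole card.
    torch.cuda.set_per_process_memory_fraction(fraction, device)

    free_bytes, total_bytes = torch.cuda.mem_get_info(device)
    return free_bytes / 1024**3, total_bytes / 1024**3

result = cap_gpu_memory(0.9)
if result is None:
    print("PyTorch with CUDA not available; nothing to configure.")
else:
    print(f"free: {result[0]:.1f} GiB of {result[1]:.1f} GiB")
```

Other runtimes expose different controls, so treat this as an example of the idea and not a universal setting.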

Trick #4: Reduce Batch Size

Imagine you're trying to fit a large group into a small elevator. You can reduce overcrowding by taking multiple smaller groups, right? The same principle applies to LLMs.

Larger batch sizes require more memory to store the results of intermediate computations. By reducing the batch size, you can effectively lower the memory footprint and prevent out-of-memory errors.

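Example: Consider a batch size of 1024. If you reduce it to 512, you roughly halve the memory used for activations and other per-batch buffers. The model weights stay the same size, so total memory usage falls by less than half.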
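One per-batch buffer that is easy to estimate is the attention key/value cache, which grows linearly with both batch size and context length while the weights stay fixed. The sketch below is an estimate under stated assumptions: the defaults (32 layers, 8 key/value heads, head dimension 128, 16-bit cache entries) are taken to match Llama 3 8B, and "batch size" here means the number of sequences processed at once. The function name is hypothetical.

```python
def kv_cache_gib(batch_size, context_len, n_layers=32, n_kv_heads=8,
                 head_dim=128, bytes_per_value=2):
    """Estimated size of the attention key/value cache in GiB.

    Defaults are assumed to match Llama 3 8B with 16-bit cache entries.
    """
    # Factor of 2: one tensor for keys plus one for values in every layer.
    total_bytes = (2 * n_layers * n_kv_heads * head_dim
                   * context_len * batch_size * bytes_per_value)
    return total_bytes / 1024**3

for batch in (1, 2, 4, 8):
    cache = kv_cache_gib(batch, context_len=8192)
    print(f"batch {batch}: ~{cache:.1f} GiB of KV cache on top of the weights")
```

Under these assumptions each extra sequence at an 8192-token context costs about 1 GiB, so a Q4 model that loads comfortably can still run out of memory once several long sequences are batched together.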

Trick #5: Consider Using a Lower Precision Model (F16 vs. Q4)

Think of a model's precision like a photo's resolution. Higher precision (e.g., F16) is like a high-resolution picture, which requires more storage space. Lower precision (e.g., Q4) is like a slightly compressed picture, which requires less storage space.

While lower precision sometimes results in a small decline in accuracy, it can significantly reduce memory consumption, especially when working with large models like Llama 70B.
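The trade-off can be framed as a simple question: what is the highest precision whose weights still fit, with some room left for context and overhead? The sketch below is one hedged way to ask it. The bits-per-weight values and the 2GB headroom are assumptions for illustration, and the helper name is hypothetical.

```python
# Assumed storage cost per weight, including per-block scale factors.
FORMATS = {"F16": 16.0, "Q8": 8.5, "Q4": 4.5}

def largest_format_that_fits(params_billions, vram_gb, headroom_gb=2.0):
    """Return (format, weight_size_gb) for the highest precision that fits.

    headroom_gb reserves space for context buffers and runtime overhead.
    Returns (None, None) when even the smallest format is too large.
    """
    budget = vram_gb - headroom_gb
    for name, bits in sorted(FORMATS.items(), key=lambda item: -item[1]):
        size_gb = params_billions * bits / 8
        if size_gb <= budget:
            return name, size_gb
    return None, None

for label, params in [("8B", 8), ("70B", 70)]:
    fmt, size = largest_format_that_fits(params, vram_gb=12)
    if fmt is None:
        print(f"{label}: no listed format fits in 12 GB")
    else:
        print(f"{label}: {fmt} fits (~{size:.1f} GB of weights)")
```

With these numbers an 8B model at F16 needs about 16GB and does not fit, while the quantized formats do. A 70B model does not fit at any of the listed precisions.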

Trick #6: Streamline and Optimize Your Code

Just like cleaning your house to create more space, optimizing your code can help free up valuable memory. Avoid unnecessary memory allocations, use appropriate data structures, and optimize your algorithms.

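For example: Consider using NumPy arrays instead of Python lists for numerical computations. A NumPy array stores its values in one contiguous, typed buffer, while a list holds a pointer to a separate Python object for every element, so the array uses less memory and is faster to process. This saves system RAM and not VRAM, but it helps when data preparation shares a machine with the model.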
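The difference is easy to measure. To keep the sketch free of dependencies, it uses the standard library's array module in place of NumPy; both store raw values in one typed buffer, and with NumPy the comparable figure is the array's nbytes attribute. The sizes reported for the list depend on the Python implementation.

```python
import sys
from array import array

n = 100_000
values = [float(i) for i in range(n)]

# A list stores pointers to separate float objects, so count both the
# list itself and every object it points to.
list_bytes = sys.getsizeof(values) + sum(sys.getsizeof(v) for v in values)

# A typed array stores raw 8-byte doubles in one contiguous buffer.
packed = array("d", values)
packed_bytes = packed.itemsize * len(packed)

print(f"list of floats: {list_bytes / 1e6:.1f} MB")
print(f"typed array:    {packed_bytes / 1e6:.1f} MB")
```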

Trick #7: Experiment and Find the Right Balance

The best approach often involves a combination of these tricks, carefully tailored to the specific LLM model and your hardware. Experimentation is key to finding the ideal balance between performance, memory consumption, and accuracy.

Conclusion

Remember, the goal is to find the sweet spot where you can run your LLMs without hitting memory limitations. Try these tricks, experiment, and become a master of memory management in the dynamic world of local LLM models.

FAQ

What are LLMs?

LLMs, or Large Language Models, are powerful AI models trained on massive datasets of text. They can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

Can I run these LLMs on my CPU?

While some LLMs can be run on CPUs, they often perform much slower. GPUs are highly optimized for parallel processing, which greatly speeds up the computations involved in running these models.

How does quantizing an LLM work?

Quantization converts the LLM's weights from higher-precision numbers (like 32-bit or 16-bit floats) to lower-precision ones (like 8-bit or 4-bit integers). Think of it like compressing a photo to reduce its file size without sacrificing too much quality.

What are the trade-offs of using a lower precision model?

Lower precision can potentially lead to a slight decrease in accuracy. However, the reduction in memory consumption can be significant, especially for larger models.

Can I run multiple LLMs simultaneously?

Yes, as long as their combined memory requirements fit within the 12GB of VRAM. Two Llama 3 8B (Q4) models at roughly 5GB each would use about 10GB for weights, leaving little room for context, so plan for the total footprint of everything running concurrently.

Keywords

LLMs, Large Language Models, NVIDIA 3080 Ti 12GB, memory management, out-of-memory errors, GPU memory optimization, quantization, batch size, model precision, code optimization, performance, accuracy, AI.